The Qwen Brain Drain: Why Alibaba’s Loss Is Your Local Inference Gain

Alibaba’s Qwen team just experienced a corporate decapitation so swift it would make Game of Thrones writers jealous. Junyang Lin, the lead researcher who turned Qwen from an also-ran into a genuine OpenAI competitor, resigned via tweet at 1 AM Beijing time, taking core contributors to Qwen-Coder and Qwen-VL with him. The irony? This exodus coincides with the release of Qwen3.5, a model family so efficient that the 2B-parameter version runs vision and reasoning tasks in under 5GB of VRAM.

While Alibaba’s executives hold emergency all-hands meetings to stem the bleeding, infrastructure architects have a narrow window to capitalize on the chaos. Qwen3.5 represents a fundamental shift in how we think about local inference hardware requirements and memory bandwidth, but only if you know how to bypass the standard serving stack that treats every model like it needs an A100 cluster.

The Infrastructure Reality Check

Standard LLM serving assumes you’re either calling OpenAI’s API or hosting a 70B+ parameter behemoth on eight H100s. This binary thinking misses the emerging middle: fine-tuned open-weight models running on single consumer GPUs with specialized optimization libraries like Unsloth.

Key Optimization Benchmarks

- Training Speed: 1.5× faster than Flash Attention 2 (FA2) setups

- Memory Efficiency: 50% less VRAM than the same FA2 setups

- Quantization Impact: QLoRA 4-bit training is explicitly not recommended for Qwen3.5 because its quantization differences are higher than normal; bf16 LoRA is the stable path

VRAM Requirements Map (bf16 LoRA fine-tuning)

- 0.8B: 3GB (fits on a phone)

- 4B: 10GB (RTX 3080 territory)

- 9B: 22GB (RTX 4090)

- 27B: 56GB (single 80GB A100; dual RTX 4090s top out at 48GB combined)

- 35B-A3B (MoE): 74GB (requires multi-GPU or cloud A100)
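The map lines up with simple arithmetic: bf16 stores two bytes per parameter, so raw weights account for most of each figure, and what remains covers LoRA adapters, optimizer state, and activations. A rough sketch, assuming the nominal parameter counts implied by the model names; the "left over" column is just the difference from the listed figures, not a measured quantity:

```python
# Rough bf16 weight-footprint check against the VRAM map.
# Assumption: nominal parameter counts from the model names; the "listed"
# values are copied from the map above, not measured here.
BYTES_PER_PARAM_BF16 = 2

listed_gb = {
    "0.8B": (0.8e9, 3),
    "4B": (4e9, 10),
    "9B": (9e9, 22),
    "27B": (27e9, 56),
    "35B-A3B": (35e9, 74),  # MoE: weight storage scales with total (35B), not active (3B), parameters
}

def weight_gb(n_params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Size of the raw weights in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9

for name, (n_params, listed) in listed_gb.items():
    w = weight_gb(n_params)
    print(f"{name:>8}: weights {w:5.1f} GB, listed {listed:3d} GB, "
          f"left over for adapters/optimizer/activations {listed - w:4.1f} GB")
```

In every row the weights alone take the large majority of the budget, which is why dropping to 4-bit looks tempting and why the bf16 recommendation carries a real hardware cost.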

These aren’t theoretical numbers. The 27B model matches GPT-4’s coding performance on specific benchmarks while fitting on hardware you can actually buy without negotiating with Nvidia’s enterprise sales team.

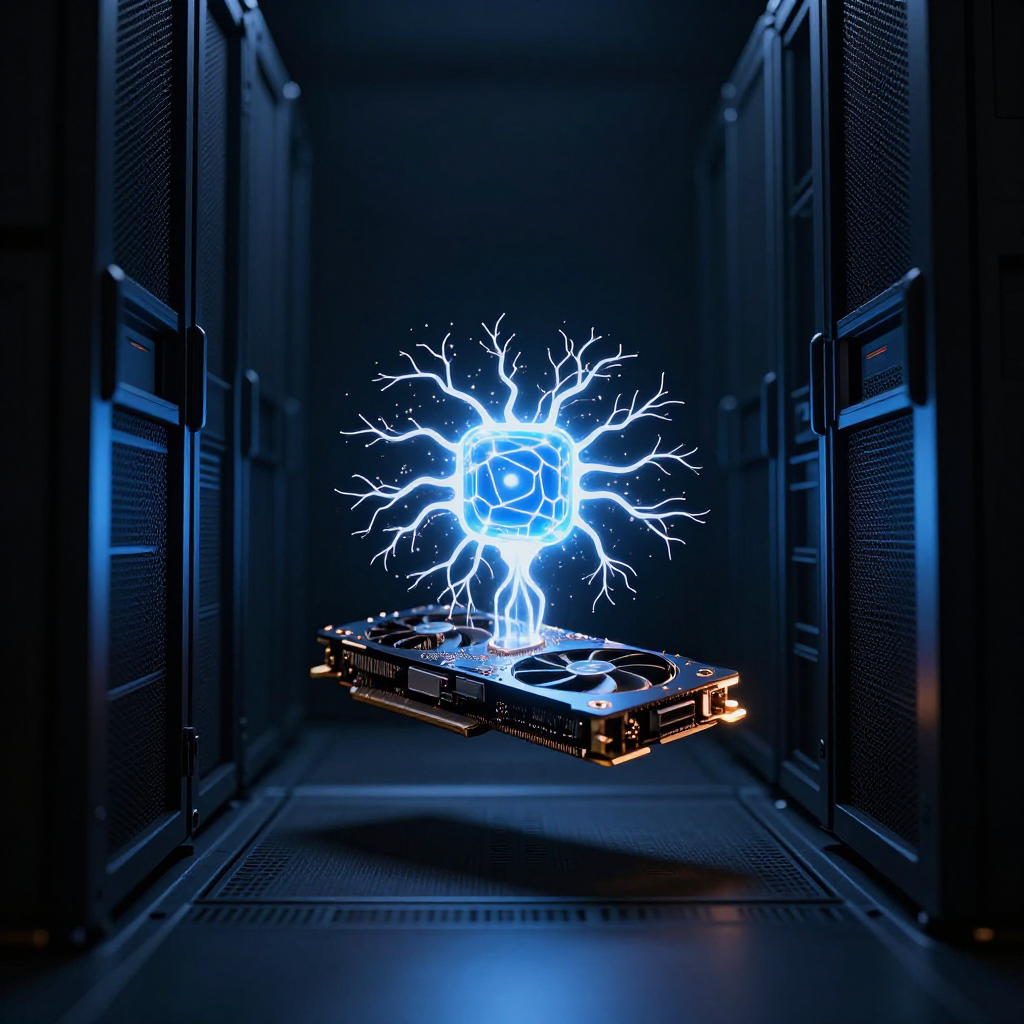

Unsloth: The Anti-Framework

Most fine-tuning libraries treat GPU memory like an unlimited resource and compilation time like a joke. Unsloth takes the opposite approach, using custom Triton kernels that compile once (slowly, on T4s), then execute with brutal efficiency.

Custom Mamba Triton Kernels

Qwen3.5 uses custom Mamba implementations that require kernel compilation. On older GPUs like the T4, this compilation dominates initial startup time. The trade-off? Once compiled, these kernels sidestep most of PyTorch’s per-operation overhead, delivering the 1.5× speedup that makes iterative fine-tuning feasible on limited hardware.

MoE-Aware Memory Management

For the Mixture-of-Experts variants (35B-A3B, 122B-A10B, 397B-A17B), standard training frameworks load all experts simultaneously, exploding VRAM usage. Unsloth’s MoE kernels enable ~12× faster training with >35% less VRAM through selective expert activation. The 35B-A3B model, technically 35 billion parameters but only activating 3 billion per token, trains in bf16 LoRA on 74GB VRAM, a footprint standard DeepSpeed configurations struggle to match on a single GPU.
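To make "35 billion parameters, 3 billion active" concrete: a router scores every expert for each token, and only the top-k experts actually run. A minimal pure-Python sketch of top-k softmax gating, assuming toy sizes (8 experts, k=2) and hand-picked router logits; it illustrates the routing idea only, not Qwen3.5’s actual router or Unsloth’s MoE kernels:

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, k):
    """Return (expert_index, weight) for the top-k experts, weights renormalized to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts are never called."""
    return sum(w * experts[i](x) for i, w in route_token(router_logits, k))

# Toy experts: expert i multiplies its input by (i + 1).
experts = [lambda x, s=i + 1: s * x for i in range(8)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

print(route_token(logits, k=2))  # experts 4 and 1 win
print(moe_forward(1.0, experts, logits, k=2))

# Fraction of parameters doing work per token, from the model name (35B total, 3B active).
print(f"35B-A3B active fraction: {3 / 35:.1%}")
```

Compute per token follows the active fraction, but every expert’s weights still have to live somewhere, which is why the 35B-A3B entry in the VRAM map is sized by its total parameter count.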

Gradient Checkpointing That Actually Works

Unsloth’s gradient checkpointing isn’t stock PyTorch checkpointing. Their implementation offloads saved activations to system RAM, trading compute and transfer time for VRAM in a way that allows 500K-token context training on consumer hardware. For Qwen3.5 specifically, this means you can fine-tune on long documents without the OOM crashes that plague standard TRL implementations.
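The compute-for-memory trade is easiest to see by counting stored activations. This sketch uses the classic segment-checkpointing model: during the forward pass keep only each segment’s input, then during backward recompute one segment at a time. It counts in units of "one layer’s activations", assumes uniform layers and a hypothetical 48-layer model, and does not model Unsloth’s own implementation:

```python
import math

def peak_stored(n_layers: int, segment: int) -> int:
    """Peak layer activations held in memory with segment checkpointing.

    Forward: keep only the input to each segment (ceil(n / segment) tensors).
    Backward: re-run one segment at a time, briefly holding its `segment` activations.
    """
    return math.ceil(n_layers / segment) + segment

def best_segment(n_layers: int) -> int:
    """Segment length that minimizes peak memory (close to sqrt(n_layers))."""
    return min(range(1, n_layers + 1), key=lambda s: peak_stored(n_layers, s))

n = 48  # hypothetical layer count, for illustration only
s = best_segment(n)
print(f"no checkpointing: ~{n} activations kept")
print(f"checkpoint every {s} layers: peak ~{peak_stored(n, s)} activations "
      f"(cost: roughly one extra forward pass of recomputation)")
```

Peak memory drops from linear in depth to roughly 2·sqrt(depth), at the price of recomputing the forward pass once; offloading the kept activations to system RAM, as Unsloth does, pushes the VRAM side down further.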

The Implementation Reality

Let’s cut through the documentation. Here’s the actual code to fine-tune Qwen3.5-9B on a single RTX 4090:

```python
from unsloth import FastLanguageModel  # import Unsloth first so its patches apply to trl/transformers
import torch
from datasets import load_dataset
from trl import SFTTrainer, SFTConfig

# Qwen3.5 requires transformers v5; older versions fail silently
max_seq_length = 2048

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name="Qwen/Qwen3.5-9B",
    max_seq_length=max_seq_length,
    load_in_4bit=False,  # Critical: 4-bit breaks Qwen3.5's reasoning
    load_in_16bit=True,  # bf16 is the sweet spot
    full_finetuning=False,
)

model = FastLanguageModel.get_peft_model(
    model,
    r=16,
    target_modules=[
        "q_proj", "k_proj", "v_proj", "o_proj",
        "gate_proj", "up_proj", "down_proj",
    ],
    lora_alpha=16,
    lora_dropout=0,
    bias="none",
    use_gradient_checkpointing="unsloth",  # the string "unsloth", not True/False, selects Unsloth's variant
    random_state=3407,
    max_seq_length=max_seq_length,
)

# Hypothetical placeholder: a local JSONL file whose records carry a "text" field
dataset = load_dataset("json", data_files="train.jsonl", split="train")

trainer = SFTTrainer(
    model=model,
    train_dataset=dataset,
    tokenizer=tokenizer,
    args=SFTConfig(
        dataset_text_field="text",
        max_seq_length=max_seq_length,
        per_device_train_batch_size=1,
        gradient_accumulation_steps=4,
        warmup_steps=10,
        max_steps=100,
        learning_rate=2e-4,
        optim="adamw_8bit",
        bf16=True,  # match the bf16 weights; fp16=True conflicts with a bf16 model
        output_dir="outputs",
    ),
)

trainer.train()
```

Notice the load_in_4bit=False. Unsloth explicitly warns against QLoRA for Qwen3.5 due to quantization artifacts that destroy the model’s reasoning capabilities, a detail buried in their docs but critical for production systems.
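Why would 4-bit hurt so much more than bf16? Rounding error. This sketch compares round-trip error for bf16 and for a plain symmetric 4-bit absmax quantizer with 64-weight blocks, on random Gaussian stand-in "weights". Both the quantizer (QLoRA actually uses the NF4 data type, not this uniform grid) and the data are simplifying assumptions; it shows the size of the gap, not Qwen3.5’s specific sensitivity:

```python
import random
import struct

def to_bf16(x: float) -> float:
    """Round a float to bfloat16 (round-to-nearest-even on the float32 bit pattern)."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    bits += 0x7FFF + ((bits >> 16) & 1)  # rounding bias, ties go to even
    bits &= 0xFFFF0000                   # keep sign, exponent, and 7 mantissa bits
    return struct.unpack(">f", struct.pack(">I", bits))[0]

def quant_int4_absmax(block):
    """Symmetric 4-bit absmax: map max |w| to 7, round to integers in [-7, 7], dequantize."""
    scale = max(abs(w) for w in block) / 7
    if scale == 0:
        return list(block)
    return [max(-7, min(7, round(w / scale))) * scale for w in block]

def mean_abs_error(orig, approx):
    return sum(abs(a - b) for a, b in zip(orig, approx)) / len(orig)

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]

bf16 = [to_bf16(w) for w in weights]
int4 = []
for start in range(0, len(weights), 64):
    int4.extend(quant_int4_absmax(weights[start:start + 64]))

err_bf16 = mean_abs_error(weights, bf16)
err_int4 = mean_abs_error(weights, int4)
print(f"bf16 mean abs error: {err_bf16:.2e}")
print(f"int4 mean abs error: {err_int4:.2e} ({err_int4 / err_bf16:.0f}x larger)")
```

The 4-bit error comes out well over an order of magnitude larger; a model whose weights are unusually sensitive to that extra noise is exactly the case where bf16 LoRA earns its VRAM cost.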

Deployment Patterns Beyond Docker Containers

Once fine-tuned, you face the serving decision that determines whether your infrastructure costs $50/month or $5,000/month.

GGUF for Edge Deployment

Converting to GGUF format (Q4_K_M quantization) shrinks the 9B model to roughly 5.5GB, since Q4_K_M averages a little under 5 bits per weight. That file runs locally through Ollama or llama.cpp, enabling offline inference on M4 Macs or edge devices.
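A quick way to sanity-check any GGUF file size is parameters × average bits per weight ÷ 8. The Q4_K_M figure below (about 4.85 bits per weight) is an assumed approximate average, not a spec value: K-quants mix block formats and keep some tensors at higher precision, so real files drift by a few percent.

```python
def gguf_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Estimated file size in decimal GB, ignoring the small metadata overhead."""
    return n_params * bits_per_weight / 8 / 1e9

Q4_K_M_BPW = 4.85  # assumed average; varies by architecture

for name, n in [("2B", 2e9), ("9B", 9e9)]:
    print(f"{name} @ Q4_K_M: ~{gguf_size_gb(n, Q4_K_M_BPW):.2f} GB")
```

The same arithmetic puts a 2B model near 1.2GB, and it is a fast way to catch a mislabeled download before pointing Ollama at it.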

vLLM for Production Throughput

For API serving, vLLM’s PagedAttention and continuous batching provide the throughput needed for concurrent requests. The catch: vLLM 0.16.0 doesn’t support Qwen3.5. You need the nightly build or 0.17.0+, a compatibility gap that has burned teams deploying on “stable” releases.
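One cheap guard against that gap is to fail fast at startup when the installed vLLM predates 0.17.0. A standard-library sketch; the 0.17.0 floor comes from the paragraph above, and the simple parsing (first three numeric components) is an assumption. Note that it rejects nightly wheels numbered below 0.17.0, so relax the check if you deliberately run one:

```python
import re
from importlib.metadata import PackageNotFoundError, version

MIN_VLLM = (0, 17, 0)  # floor taken from the compatibility note above

def parse_release(v: str) -> tuple:
    """'0.17.0.dev12+g1a2b3c' -> (0, 17, 0); missing components count as 0."""
    nums = [int(n) for n in re.findall(r"\d+", v.split("+")[0])[:3]]
    return tuple(nums + [0] * (3 - len(nums)))

def vllm_supports_qwen35(installed: str) -> bool:
    return parse_release(installed) >= MIN_VLLM

def check_installed_vllm() -> None:
    """Call before starting the server; exits with a readable message instead of a late failure."""
    try:
        installed = version("vllm")
    except PackageNotFoundError:
        raise SystemExit("vLLM is not installed")
    if not vllm_supports_qwen35(installed):
        raise SystemExit(f"vLLM {installed} is too old for Qwen3.5; need >= 0.17.0")
```

Running this in the container entrypoint turns a confusing model-loading error into a one-line message at deploy time.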

The Multi-Format Strategy

Smart architectures export both:

1. GGUF (Q4_K_M) for development and edge cases

2. bf16 merged for high-performance serving via vLLM

This dual-format approach lets you debug locally with Ollama (ollama run hf.co/username/model:Q4_K_M) then deploy the same weights to production vLLM instances without retraining.
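Both servers expose an OpenAI-compatible chat endpoint, which is what keeps the swap cheap: client code changes only its base URL and model name. A standard-library sketch that builds (but does not send) the request; the ports are each tool’s defaults (11434 for Ollama, 8000 for vllm serve), and username/model and my-qwen35-merged are hypothetical names, the latter assuming vLLM was started with --served-model-name my-qwen35-merged:

```python
import json
import urllib.request

# Hypothetical model names; ports are the tools' defaults.
BACKENDS = {
    "dev": ("http://localhost:11434/v1", "hf.co/username/model:Q4_K_M"),  # Ollama serving the GGUF
    "prod": ("http://localhost:8000/v1", "my-qwen35-merged"),             # vLLM serving bf16 merged weights
}

def build_chat_request(env: str, prompt: str) -> urllib.request.Request:
    """Same payload for both backends; only the URL and model name differ."""
    base_url, model = BACKENDS[env]
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send(req: urllib.request.Request) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]

req = build_chat_request("dev", "Summarize clause 4.2 in one sentence.")
print(req.full_url, json.loads(req.data)["model"])
```

Keeping the backend choice in one table means promoting from laptop to production is a config change, not a code change.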

Advanced Survival Tactics

Preventing Catastrophic Forgetting

Fine-tuning Qwen3.5 on domain-specific data (legal contracts, medical triage, manufacturing specs) risks wiping its general reasoning capabilities. The defense is a replay buffer: mix 10-20% general-purpose Q&A data covering math, coding, and common knowledge with your specialized dataset. Keep learning rates aggressively low (1e-5 to 5e-5) and epochs capped at 3-5.
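The replay mix is simple to implement: size the general-purpose slice so it makes up the target share of the final dataset, then shuffle so the two sources interleave. A sketch over plain Python lists; the 15% default sits inside the 10-20% range above, and the record format is a hypothetical placeholder:

```python
import random

def mix_with_replay(domain, general, replay_ratio=0.15, seed=3407):
    """Return a shuffled list in which general-purpose examples are `replay_ratio` of the total."""
    if not 0 <= replay_ratio < 1:
        raise ValueError("replay_ratio must be in [0, 1)")
    # Solve g / (d + g) = ratio for g.
    n_general = round(len(domain) * replay_ratio / (1 - replay_ratio))
    if n_general > len(general):
        raise ValueError(f"need {n_general} general examples, have {len(general)}")
    rng = random.Random(seed)
    mixed = list(domain) + rng.sample(general, n_general)
    rng.shuffle(mixed)
    return mixed

domain = [{"text": f"contract clause {i}"} for i in range(800)]  # hypothetical records
general = [{"text": f"general Q&A {i}"} for i in range(5000)]
mixed = mix_with_replay(domain, general, replay_ratio=0.2)
share = sum(r["text"].startswith("general") for r in mixed) / len(mixed)
print(len(mixed), f"{share:.0%}")
```

Sampling the general slice with a fixed seed keeps runs reproducible, so a regression on general benchmarks can be traced to the domain data rather than to a different replay draw.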

Model Merging for Multi-Domain Expertise

When you have separate LoRA adapters for different tasks, say, one for legal document analysis and another for formal Japanese tone, merge them using TIES (TrIm, Elect Sign, and Merge) or DARE via mergekit. Qwen3.5’s sparse MoE architecture actually facilitates this, allowing expert routing to handle conflicting adapter weights more gracefully than dense models.
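The three TIES steps are easier to trust once you have seen them on a toy example. A pure-Python sketch operating on flat lists of task-vector deltas (fine-tuned minus base weights); the 75% density and the two four-element "adapters" are arbitrary illustration values, and tools like mergekit apply the same idea per tensor:

```python
def trim(delta, density):
    """TrIm: keep the top `density` fraction of entries by magnitude; zero the rest."""
    k = max(1, int(round(len(delta) * density)))
    keep = set(sorted(range(len(delta)), key=lambda i: abs(delta[i]), reverse=True)[:k])
    return [d if i in keep else 0.0 for i, d in enumerate(delta)]

def ties_merge(deltas, density=0.5):
    trimmed = [trim(d, density) for d in deltas]
    merged = []
    for values in zip(*trimmed):
        total = sum(values)
        # Elect Sign: the direction with more total mass wins this parameter.
        sign = 1.0 if total > 0 else -1.0 if total < 0 else 0.0
        # Merge: average only the nonzero values that agree with the elected sign.
        agreeing = [v for v in values if v != 0.0 and (v > 0) == (sign > 0)]
        merged.append(sum(agreeing) / len(agreeing) if agreeing and sign != 0.0 else 0.0)
    return merged

legal = [0.9, -0.6, 0.4, 0.1]  # hypothetical adapter deltas
tone = [-0.3, 0.2, 0.5, 0.8]
print(ties_merge([legal, tone], density=0.75))
```

In the first position the adapters disagree (0.9 vs -0.3); plain averaging would give 0.3, while TIES keeps the dominant direction and returns 0.9, which is the interference reduction the technique is named for.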

Vision Fine-Tuning Infrastructure

Qwen3.5’s vision variants require careful layer selection. Unsloth allows fine-tuning specific components:

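```python
from unsloth import FastVisionModel

# Assumes `model` was already loaded with FastVisionModel.from_pretrained(...)
model = FastVisionModel.get_peft_model(
    model,
    finetune_vision_layers=True,  # Set False for text-only tasks
    finetune_language_layers=True,
    finetune_attention_modules=True,
    finetune_mlp_modules=True,
    r=16,
    target_modules="all-linear",
)
```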

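Toggling these flags (for example, finetune_vision_layers=False for text-only work) limits training to the components a task needs, which helps prevent overfitting on small vision datasets, a common failure mode when teams treat vision models like language models.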
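Conceptually, those four flags act as a filter on which linear layers receive LoRA adapters. A sketch of that filtering over module names, assuming a Qwen-VL-style naming scheme (vision tower under "visual.", language model under "model.layers."); the names and matching rules are illustrative assumptions, not Unsloth’s internal logic:

```python
# Hypothetical Qwen-VL-style module names; real checkpoints may differ.
names = [
    "visual.blocks.0.attn.qkv",
    "visual.blocks.0.mlp.fc1",
    "model.layers.0.self_attn.q_proj",
    "model.layers.0.mlp.down_proj",
]

def select_lora_targets(module_names, vision=True, language=True, attention=True, mlp=True):
    """Names of linear layers that would receive LoRA adapters under the four flags."""
    selected = []
    for name in module_names:
        in_vision = name.startswith("visual.")
        in_language = name.startswith("model.layers.")
        # Skip towers that are switched off (and anything outside both towers).
        if not ((in_vision and vision) or (in_language and language)):
            continue
        is_attention = "attn" in name  # matches both "attn" and "self_attn"
        is_mlp = ".mlp." in name
        if (is_attention and attention) or (is_mlp and mlp):
            selected.append(name)
    return selected

print(select_lora_targets(names, vision=False))  # text-only: language layers only
```

Every name the filter drops is a block of weights that cannot memorize a small image set, which is the practical argument for turning off what a task does not use.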

The Efficiency Imperative

While Google chases new efficient transformer architectures for better serving, the Qwen3.5 + Unsloth stack delivers practical efficiency today. The 2B model running at 1.27GB quantized outperforms Llama-3-8B on coding benchmarks, proving that parameter count is becoming a vanity metric.

The exodus of Alibaba’s top researchers might signal the end of Qwen’s dominance, or it might liberate these models from corporate strategy shifts. Either way, the infrastructure patterns for efficient fine-tuning are now mature enough that you don’t need a research lab to deploy them, just a consumer GPU and the willingness to ignore standard serving wisdom.

The teams that master these patterns will inherit the capability to run specialized AI on hardware they actually own, insulated from API pricing changes and corporate re-orgs. In an industry obsessed with scale, that’s the real competitive advantage.