Apple has finally stopped pretending it isn’t building AI hardware. With the M5 Pro and M5 Max, the company isn’t just iterating on CPU performance; it’s weaponizing memory bandwidth for on-device inference. The headline claim is bold: up to 4x faster LLM prompt processing than the M4 Max, and up to 6.7x faster than the M1 Max. But the real story lies in the architectural shift that makes these numbers possible, and in what it means for developers who’ve been waiting for local AI to stop being a novelty.

The Fusion Architecture Reality Check

Apple’s new “Fusion Architecture” isn’t marketing fluff: it’s a dual-die packaging strategy that fundamentally changes how the M5 Pro and M5 Max scale. In previous generations, the Pro and Max were each a single monolithic die, and only the Ultra joined two Max dies together. The M5 generation instead uses advanced packaging to bond two third-generation 3nm dies into a single SoC with ultra-high-bandwidth, low-latency interconnects.

This matters because LLM token generation is overwhelmingly memory-bound, not compute-bound: every new token requires streaming the model’s weights from memory. You can have all the tensor cores in the world, but if you can’t feed them data fast enough, you’re leaving performance on the table. The M5 Max delivers up to 614GB/s of unified memory bandwidth, about 12% more than the top M4 Max configuration’s 546GB/s and edging toward discrete-GPU territory. For context, an RTX 3090 pushes 936GB/s, but with a catch: you’re limited to 24GB of VRAM. The M5 Max gives you 128GB of unified memory, or roughly 5x the capacity.
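To make the bandwidth argument concrete, here is a rough Python sketch that bounds decode speed by memory bandwidth alone. It assumes every generated token streams all of the model’s weights from memory exactly once and ignores KV-cache reads, compute time, and kernel overheads, so real throughput will be lower. The 8B, 4-bit model is a hypothetical example chosen because it fits on all three devices.

```python
# Back-of-envelope ceiling on decode (token generation) speed.
# Assumption: each generated token streams every weight from memory once;
# KV-cache reads, compute time, and kernel overheads are ignored.

def max_decode_tokens_per_s(params_billion: float, bits_per_weight: float,
                            bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s when decode is purely bandwidth-bound."""
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / weight_bytes  # decimal GB/s, as usually quoted

if __name__ == "__main__":
    # Bandwidths from the comparison table; the 8B 4-bit model is hypothetical.
    for name, bw in [("M5 Pro", 307), ("M5 Max", 614), ("RTX 3090", 936)]:
        tps = max_decode_tokens_per_s(8, 4, bw)
        print(f"{name}: at most {tps:.0f} tok/s for an 8B model at 4-bit")
```

The same formula explains why the 3090 still wins on raw generation speed whenever a model fits in its 24GB; the M5 Max’s advantage is that much larger models fit at all.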

The gain isn’t just a bigger bandwidth number; it’s architectural. By placing a Neural Accelerator in each of the up-to-40 GPU cores and connecting them to a 16-core Neural Engine with higher memory bandwidth, Apple is essentially building an inference engine that doesn’t need to shuttle data between CPU, GPU, and RAM across slow buses: everything happens in the same memory pool.

By the Numbers: When 4x Actually Means Something

Let’s cut through the marketing math. Apple’s 4x claim for LLM prompt processing compares the M5 Max against the M4 Max, and the M5 Pro posts a similar gain of up to 3.9x over the M4 Pro. The generational leap becomes clearer when you look further back at earlier silicon.

But the comparison to M1 is where jaws drop: up to 6.9x faster prompt processing on the M5 Pro versus the M1 Pro, and 6.7x on the M5 Max versus the M1 Max. If you’ve been holding onto an Intel Mac or an early Apple Silicon machine, we’re talking about close to an order-of-magnitude shift in local inference capability.

The memory configurations tell the rest of the story:

| Chip | Max Memory | Memory Bandwidth | GPU Cores | Neural Accelerators |
|---|---|---|---|---|
| M5 Pro | 64GB | 307GB/s | 20 | 20 |
| M5 Max | 128GB | 614GB/s | 40 | 40 |
| M4 Max | 128GB | 546GB/s | 40 | – |
| RTX 3090 | 24GB (VRAM) | 936GB/s | – | – |

Pre-fill deserves particular attention. Developer forums have been vocal about the pain of running large models locally: pre-fill (the initial processing of a prompt) can stretch into minutes on older hardware. The M3 Ultra Mac Studio, for instance, has been reported to take 10+ minutes to pre-fill large contexts, despite having 819GB/s of memory bandwidth, more than the M5 Max. That points to pre-fill being limited by compute rather than bandwidth, which is exactly what the M5’s per-core Neural Accelerators target: they give the GPU far more matrix-multiply throughput to chew through the prompt.
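A simple FLOP count shows why pre-fill time tracks compute. The sketch below uses the common approximation of about 2 FLOPs per parameter per prompt token for a dense model and ignores the quadratic attention term. The sustained TFLOPS values are hypothetical placeholders, not measured Apple figures; notice that memory bandwidth never appears in the estimate.

```python
# Rough pre-fill time model: pre-fill is dominated by matrix multiplies, so its
# cost scales with FLOPs rather than with how fast weights stream from memory.
# Assumptions: ~2 FLOPs per parameter per prompt token (dense model), attention's
# quadratic term ignored, sustained TFLOPS values are hypothetical placeholders.

def prefill_flops(params_billion: float, prompt_tokens: int) -> float:
    return 2 * params_billion * 1e9 * prompt_tokens

def prefill_seconds(params_billion: float, prompt_tokens: int,
                    sustained_tflops: float) -> float:
    return prefill_flops(params_billion, prompt_tokens) / (sustained_tflops * 1e12)

if __name__ == "__main__":
    before = prefill_seconds(70, 32_000, sustained_tflops=10)  # hypothetical
    after = prefill_seconds(70, 32_000, sustained_tflops=40)   # 4x the compute
    print(f"70B model, 32K-token prompt: {before:.0f} s -> {after:.0f} s")
```

Under these assumptions, quadrupling matrix throughput cuts pre-fill time by 4x, while doubling bandwidth alone would leave it unchanged.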

The Pre-fill Problem and Why It Matters

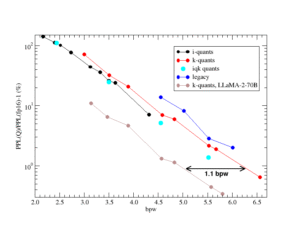

There’s a crucial distinction in LLM inference that Apple glossed over in the keynote: pre-fill (processing the input context) versus token generation (producing new text). Pre-fill is embarrassingly parallel: every prompt token passes through the model at once as large matrix multiplications, so it is bound by compute. Token generation produces one token at a time and has to read the model’s weights from memory for each one, so it is bound by memory bandwidth.
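A roofline-style check captures the distinction. For a weight matrix multiplied by N tokens of input, the FLOPs grow with N while the bytes of weights read stay fixed, so arithmetic intensity is roughly 2N divided by bytes per weight. If that exceeds the machine’s FLOPs-per-byte balance, the layer is compute-bound. This Python sketch ignores activation traffic, and the 40 TFLOPS peak is a hypothetical placeholder paired with the 614GB/s figure.

```python
# Roofline-style check: is a layer's matrix multiply compute- or bandwidth-bound?
# Assumptions: weight reads dominate memory traffic (activations ignored), and
# the peak-TFLOPS value passed in is a hypothetical placeholder.

def arithmetic_intensity(tokens: int, bytes_per_weight: float) -> float:
    """FLOPs per byte of weights for (tokens x d_in) @ (d_in x d_out).

    FLOPs = 2 * tokens * d_in * d_out and bytes = d_in * d_out * bytes_per_weight,
    so the layer dimensions cancel out.
    """
    return 2 * tokens / bytes_per_weight

def bound(tokens: int, bytes_per_weight: float, peak_tflops: float,
          bandwidth_gb_s: float) -> str:
    machine_balance = (peak_tflops * 1e12) / (bandwidth_gb_s * 1e9)  # FLOPs/byte
    ai = arithmetic_intensity(tokens, bytes_per_weight)
    return "compute-bound" if ai > machine_balance else "bandwidth-bound"

if __name__ == "__main__":
    # fp16 weights (2 bytes); 614 GB/s from the table; 40 TFLOPS is hypothetical.
    for phase, n in [("decode, 1 token", 1), ("pre-fill, 4096 tokens", 4096)]:
        print(f"{phase}: {bound(n, 2, peak_tflops=40, bandwidth_gb_s=614)}")
```

Decode sits at about 1 FLOP per byte, far below any modern GPU’s balance point, which is why it lives or dies by bandwidth.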

The M5 Max attacks both sides. The Neural Accelerators speed up pre-fill, meaning you can load a 70B-parameter model and process an 8K context window without making a coffee while you wait, and the 614GB/s of bandwidth sets the ceiling for generation speed. This is where efficient LLMs designed for consumer hardware find their ideal host: when you combine sparse architectures like Liquid AI’s LFM2-24B with 128GB of unified memory and 614GB/s of bandwidth, you can run highly capable models on a laptop without aggressive quantization.
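Long contexts also cost memory beyond the weights, because the KV cache stores keys and values for every layer, KV head, and token. This sketch assumes a Llama-3-70B-style shape (80 layers, 8 KV heads via grouped-query attention, head dimension 128) with an fp16 cache; other models and cache quantization schemes will differ.

```python
# KV-cache size for a given context length.
# Assumption: Llama-3-70B-style shape (80 layers, 8 KV heads, head_dim 128)
# with 2-byte (fp16) cache entries.

def kv_cache_bytes(tokens: int, layers: int = 80, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_elem: int = 2) -> int:
    # Leading factor of 2 covers both the key and the value tensors.
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens

if __name__ == "__main__":
    for ctx in (8_192, 32_768, 131_072):
        print(f"{ctx:>7} tokens: {kv_cache_bytes(ctx) / 2**30:.1f} GiB")
```

At 8K tokens the cache is a modest 2.5 GiB on top of the weights, but at 128K it grows to 40 GiB, which is where 128GB of unified memory starts to matter.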

The Neural Accelerators in each GPU core handle the matrix multiplications that dominate inference, while the 18-core CPU (6 “super cores” and 12 performance cores) handles control flow and orchestration. It’s the kind of heterogeneous design NVIDIA sells at data-center prices, now packed into a laptop chassis rated for up to 24 hours of battery life (under light use, not sustained inference).

The Cost Reality: When Does Local Make Sense?

Let’s talk money, because the economics of local AI just shifted. The M5 Max MacBook Pro starts at $3,899: steep for a laptop, but competitive when you frame it as an inference appliance. Compare that with a DIY build for local inference: a 6x RTX 3090 rig might cost similar money, but it draws around 1500W and sounds like a jet engine. The M5 Max draws a fraction of that power and fits in a backpack.
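Power draw turns into a recurring cost, and the arithmetic is simple enough to sketch. In this Python example the 1500W figure comes from the 3090 rig above, while the laptop wattage, hours of use per day, and electricity price are all hypothetical inputs you should replace with your own.

```python
# Annual electricity cost of running an inference machine.
# Assumptions: the laptop wattage, hours/day, and $/kWh are hypothetical inputs;
# 1500 W is the 6x RTX 3090 figure quoted in the text.

def annual_energy_cost(watts: float, hours_per_day: float,
                       usd_per_kwh: float) -> float:
    kwh_per_year = watts / 1000 * hours_per_day * 365
    return kwh_per_year * usd_per_kwh

if __name__ == "__main__":
    for name, watts in [("6x RTX 3090 rig", 1500), ("M5 Max laptop (assumed)", 100)]:
        cost = annual_energy_cost(watts, hours_per_day=8, usd_per_kwh=0.15)
        print(f"{name}: ${cost:,.0f} per year")
```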

The unified memory architecture is the stealth advantage here. On a PC with discrete GPUs, you’re limited by VRAM. A single 24GB 3090 can’t even hold a 70B model at 4-bit quantization (roughly 35GB of weights) without splitting it across cards or offloading to system RAM, let alone run multiple models or handle long contexts. The M5 Max’s 128GB means you can run a 70B model at 8-bit precision (roughly 70GB of weights), or keep multiple specialized models resident simultaneously: code completion, image generation, and text analysis all hot in memory, with no swapping to disk.
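The fit-or-not question comes down to parameters times bits per weight. This Python sketch computes weight-only footprints and checks them against a usable fraction of memory. The 75% headroom is a hypothetical allowance for the OS, KV cache, and runtime overhead, and the estimate ignores quantization scales, so real usage runs somewhat higher.

```python
# Weight-only memory footprint at different precisions, and whether it fits.
# Assumptions: footprint = params * bits / 8 (decimal GB); KV cache, activations,
# quantization scales, and OS overhead are ignored; headroom is hypothetical.

def weights_gb(params_billion: float, bits: int) -> float:
    return params_billion * bits / 8  # billions of params * bytes/param = GB

def fits(params_billion: float, bits: int, memory_gb: float,
         headroom: float = 0.75) -> bool:
    # headroom: assumed fraction of memory available for weights
    return weights_gb(params_billion, bits) <= memory_gb * headroom

if __name__ == "__main__":
    for params in (70, 405):
        for bits in (16, 8, 4):
            print(f"{params}B @ {bits}-bit: {weights_gb(params, bits):6.1f} GB | "
                  f"3090 (24GB): {fits(params, bits, 24)} | "
                  f"M5 Max (128GB): {fits(params, bits, 128)}")
```

Under these assumptions, a 70B model fits on the M5 Max at 8-bit and 4-bit but not at 16-bit, and a 405B model does not fit even at 4-bit.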

Against cloud model speed benchmarks, the calculus changes. A year ago, local inference meant accepting 10x slower performance than cloud APIs. With the M5 Max, you’re in the same ballpark as mid-tier cloud instances, but with no network round-trips, no per-token fees, and complete data privacy.
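Dropping per-token fees means the hardware eventually pays for itself; how soon depends on your volume. This Python sketch computes the break-even token count against a pay-per-token API. The $5-per-million-token price is a hypothetical placeholder, and the estimate ignores electricity, depreciation, and resale value, so treat it as an optimistic lower bound.

```python
# Break-even point for buying hardware versus paying a per-token API.
# Assumptions: the API price is a hypothetical placeholder; electricity,
# depreciation, and resale value are ignored.

def break_even_tokens(hardware_usd: float, api_usd_per_million: float) -> float:
    return hardware_usd / api_usd_per_million * 1e6

if __name__ == "__main__":
    tokens = break_even_tokens(3899, api_usd_per_million=5.0)  # hypothetical price
    print(f"Break-even after roughly {tokens / 1e6:,.0f}M tokens")
```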

The Fine Print: What Apple Isn’t Saying

Before you max out your credit card, there are caveats. That 614GB/s figure? You need the top M5 Max configuration to hit it; cut-down configurations will likely have lower bandwidth, as the binned M4 Max did (410GB/s versus 546GB/s). And while Apple touts 4x speedups, these apply to specific model architectures and quantization levels. Running a 405B-parameter model locally still isn’t happening on a laptop, M5 or not: even at 4-bit, its weights alone need roughly 200GB.

Thermal constraints also matter. The M5 Max sustains performance better than Intel chips did, but prolonged inference workloads will still trigger thermal throttling in the MacBook Pro chassis. For sustained training or batch inference, you’ll want to wait for the rumored M5 Ultra Mac Studio, which could deliver 1TB/s+ of bandwidth by doubling the M5 Max configuration.

The “super cores” rebranding also deserves scrutiny: these are essentially the performance cores from the base M5, now optimized for multithreaded workloads. The real innovation is the interconnect between the dies, not necessarily the core architecture itself.

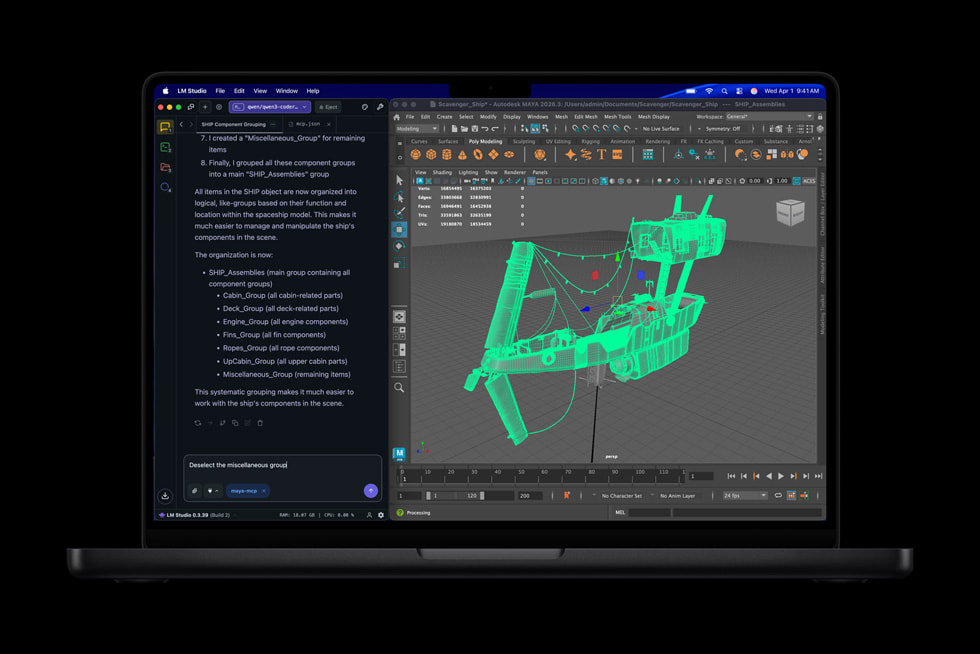

What This Means for Software Architecture

For developers, the M5 Max changes how we think about AI deployment. Previously, local AI meant small models (7B-13B parameters) with compromises. Now, 70B parameter models are viable on a laptop, and 30-40B models run at interactive speeds.

This shifts the architectural decision from “cloud vs. edge” to “how much can we do locally before we need the cloud?” The Thunderbolt 5 ports (each with its own dedicated controller on the SoC) mean you can chain multiple M5 Max machines for distributed inference, or attach high-speed storage for model weights without bottlenecking the system.
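One common way to spread a model across chained machines is pipeline parallelism: each machine holds a contiguous block of layers and passes one token’s hidden state to the next. This Python sketch splits layers in proportion to each machine’s memory and estimates the per-token transfer time over the link. It assumes a Llama-3-70B-style shape (80 layers, hidden size 8192), fp16 activations, and Thunderbolt 5’s 80Gb/s base rate; it ignores link latency and protocol overhead, which in practice dominate such a tiny transfer.

```python
# Sketch: pipeline-parallel layer split across chained machines, plus the time
# to ship one token's hidden state between them.
# Assumptions: Llama-3-70B-style shape (80 layers, hidden 8192), fp16
# activations, 80 Gb/s link; latency and protocol overhead are ignored.

def split_layers(num_layers: int, memory_gb: list[float]) -> list[range]:
    """Assign contiguous layer ranges proportional to each machine's memory."""
    total = sum(memory_gb)
    ranges, start = [], 0
    for i, mem in enumerate(memory_gb):
        if i == len(memory_gb) - 1:
            end = num_layers  # last machine takes whatever layers remain
        else:
            end = start + round(num_layers * mem / total)
        ranges.append(range(start, end))
        start = end
    return ranges

def activation_transfer_us(hidden_size: int, bytes_per_elem: int,
                           link_gbit_s: float) -> float:
    """Microseconds to send one token's hidden state to the next machine."""
    bits = hidden_size * bytes_per_elem * 8
    return bits / (link_gbit_s * 1e9) * 1e6

if __name__ == "__main__":
    print(split_layers(80, [128, 128]))
    print(f"{activation_transfer_us(8192, 2, 80):.2f} us per token per hop")
```

Because each hop carries only a few kilobytes per token, link bandwidth is rarely the limit for this style of split; per-hop latency and keeping every machine busy matter more.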

The inclusion of AV1 decode and ProRes engines also signals Apple’s intent: these chips are designed for multimodal AI workflows where you’re not just generating text but also processing video, audio, and images through local models.

The Verdict: A Tipping Point for Local AI?

The M5 Max isn’t just a faster chip; it’s the first Apple Silicon chip that doesn’t apologize for being consumer hardware. With 614GB/s of memory bandwidth, 128GB of unified memory, and a Neural Accelerator in every GPU core, it goes straight at the bottlenecks that have plagued local LLM deployment: memory capacity, bandwidth, and prompt-processing compute.

For researchers and developers who’ve been frustrated by cloud API costs, data privacy concerns, or latency, this is the hardware generation where “running it locally” stops being a compromise and starts being a competitive advantage. The 4x speed claim is impressive, but the architectural shift toward high-bandwidth unified memory is what actually moves the needle.

Pre-orders open March 4th. If you’ve been waiting for local AI to get serious, the wait is over.