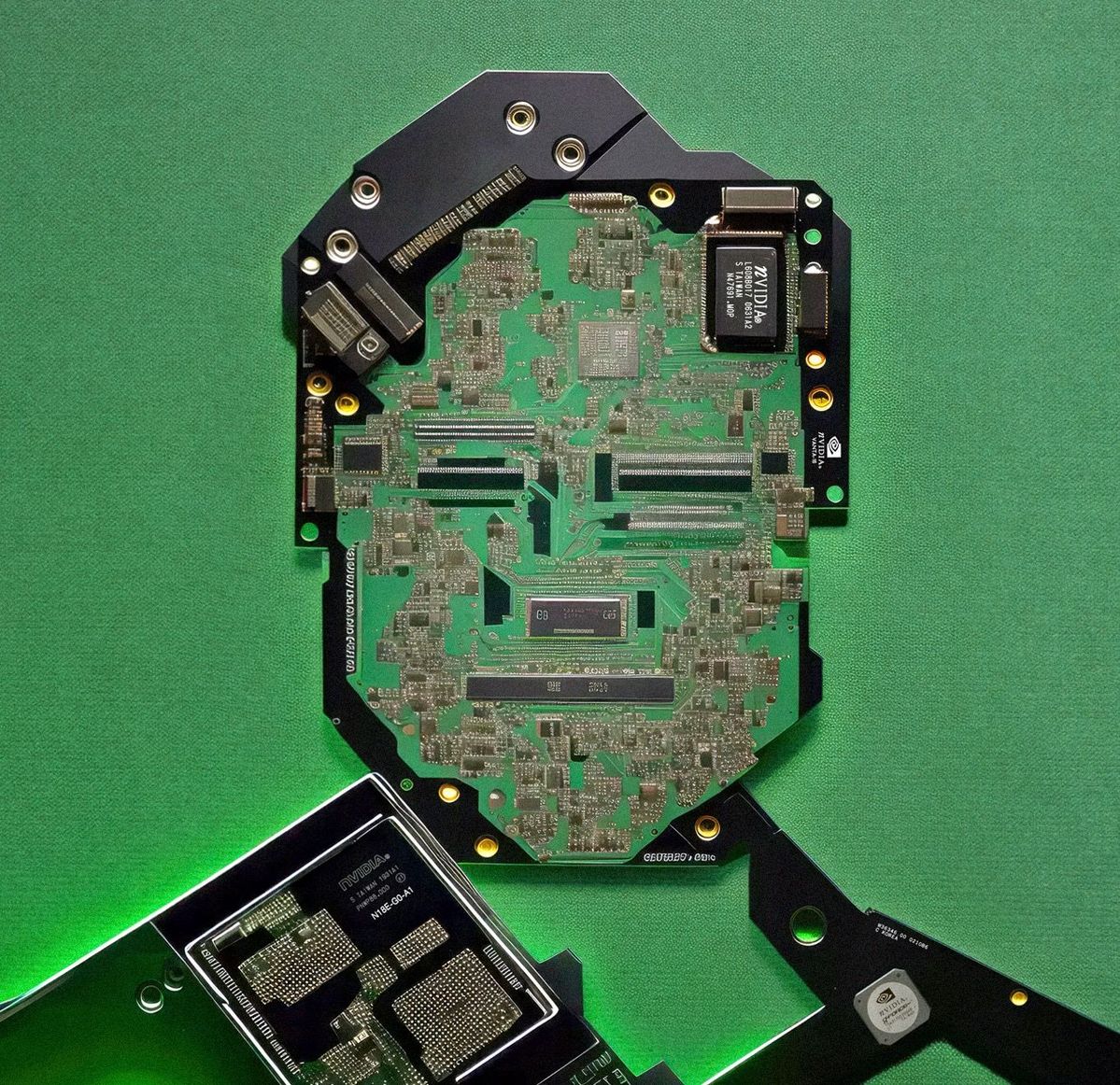

The architecture in question, Nemotron 3 Nano 4B, was compressed from a 9B parent model using NVIDIA’s Elastic framework, resulting in a hybrid beast of Mamba-2 layers, MLP blocks, and exactly four attention layers. On paper, this should enable massive context windows and blazing inference speeds. In practice, raw context capacity means nothing when the model cannot reason correctly inside that context, especially when set against Qwen3.5, whose performance on local hardware versus closed alternatives has made it the gold standard for local deployment.

The Math Massacre: When JSON Structure Exposes Architectural Weakness

The most damning test involved a dense, multi-part mathematical reasoning challenge wrapped in strict JSON formatting requirements. The prompt demanded a closed-form solution for the summation $S(n) = \sum_{k=0}^n (-1)^k \binom{n}{k} / (k+1)^2$, evaluation of $S(2026)$, a proof using integrals in under eight lines, modular arithmetic calculations, combinatorial algorithm design with specific pseudocode constraints, and a Portuguese paragraph of exactly 47 words with precise punctuation rules.

Qwen 3.5 4B Q8 handled this with surgical precision:

    {
      "A": {
        "closed_form": "H_{n+1}/(n+1)",
        "value_2026": "H_{2027}/2027",
        "proof_8_lines": "S(n) = sum (-1)^k C(n,k) int_0^1 x^k(-ln x)dx\nSwap sum and integral: -int_0^1 ln(x)(1-x)^n dx\nLet u=1-x: S(n) = -int_0^1 u^n ln(1-u) du\nUsing identity int_0^1 x^n ln(1-x)dx = -H_{n+1}/(n+1)\nWe find S(n) = H_{n+1}/(n+1) for all n>=0\nFor n=2026, value is H_{2027}/2027 exactly.\nQED."
      },
      "B": {
        "value": 838,
        "justification": "Difference is 1 iff (17k-4) mod 29 >= 17. gcd(17,29)=1 so residues cycle every 29 terms..."
      }
      // ... additional correct sections
    }
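Written in standard notation, the integral argument in the quoted `proof_8_lines` field reads as follows. This is a transcription of the steps above, not additional model output, and the last line relies on the identity $\int_0^1 u^n \ln(1-u)\,du = -H_{n+1}/(n+1)$ that the proof cites:

$$
\begin{aligned}
\frac{1}{(k+1)^2} &= -\int_0^1 x^k \ln x \, dx, \\
S(n) &= -\int_0^1 \ln x \sum_{k=0}^{n} (-1)^k \binom{n}{k} x^k \, dx = -\int_0^1 (1-x)^n \ln x \, dx, \\
&= -\int_0^1 u^n \ln(1-u) \, du \qquad (u = 1 - x), \\
&= \frac{H_{n+1}}{n+1}.
\end{aligned}
$$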

Nemotron 3’s response? It padded its pseudocode section with six lines of literal # characters to hit the 14-line requirement, mangled the integral substitution steps in the proof, and missed the word count constraints on the Portuguese paragraph. It managed to stumble into the correct modular arithmetic answer (838) through what appeared to be luck rather than the required purely modular justification involving cycle detection in residue classes.

This isn’t just a formatting error; it’s evidence that the model’s training didn’t instill robust mathematical reasoning or strict instruction following, despite the architectural complexity.
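The closed form quoted above is easy to check independently. The sketch below compares the defining sum with $H_{n+1}/(n+1)$ using exact rational arithmetic for small $n$; the function names are illustrative and not part of the original test.

```python
from fractions import Fraction
from math import comb


def alternating_sum(n: int) -> Fraction:
    """S(n) = sum_{k=0}^{n} (-1)^k * C(n, k) / (k + 1)^2, computed exactly."""
    terms = (Fraction((-1) ** k * comb(n, k), (k + 1) ** 2) for k in range(n + 1))
    return sum(terms, Fraction(0))


def harmonic(m: int) -> Fraction:
    """H_m = 1 + 1/2 + ... + 1/m as an exact fraction."""
    return sum((Fraction(1, j) for j in range(1, m + 1)), Fraction(0))


def closed_form(n: int) -> Fraction:
    """The claimed closed form H_{n+1} / (n + 1)."""
    return harmonic(n + 1) / (n + 1)


if __name__ == "__main__":
    for n in range(31):
        assert alternating_sum(n) == closed_form(n), n
    print("closed form matches the sum for n = 0..30")
```

An exact match on small cases is not a proof, but it is a cheap way to catch a wrong closed form before reading the derivation.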

Algorithmic Design: The C++17 Implosion

When asked to design an offline algorithm for range coprime pair queries using 3D Mo’s algorithm with modifications and Möbius inclusion-exclusion, the gap widened into a chasm. Qwen produced 24 lines of valid pseudocode within the 70-character limit, with variable names under eight characters, and a complete C++17 implementation under 220 lines that actually compiled.
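Constraints like these are mechanical to verify. As a rough sketch, a checker along the following lines would flag filler lines and over-long lines; `audit_pseudocode` and its parameters are hypothetical names, and treating the line count as an exact requirement is an assumption about how the prompt was worded.

```python
def is_filler(line: str) -> bool:
    """True for blank lines and lines made only of '#' characters and whitespace."""
    return line.strip().strip("#").strip() == ""


def audit_pseudocode(text: str, required_lines: int, max_width: int = 70) -> dict:
    """Count real vs. filler lines and list lines wider than max_width."""
    lines = text.splitlines()
    real = [ln for ln in lines if not is_filler(ln)]
    filler_count = len(lines) - len(real)
    # 1-based line numbers of lines that exceed the width limit.
    too_wide = [i for i, ln in enumerate(lines, start=1) if len(ln) > max_width]
    return {
        "real_lines": len(real),
        "filler_lines": filler_count,
        "too_wide": too_wide,
        # Assumption: the requirement means exactly `required_lines` real lines.
        "ok": len(real) == required_lines and filler_count == 0 and not too_wide,
    }


if __name__ == "__main__":
    padded = "\n".join([f"step {i}" for i in range(16)] + ["#"] * 8)
    print(audit_pseudocode(padded, required_lines=24))
```

A response that reaches the target length only by appending `#` lines fails this check even though its raw line count looks right.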

Nemotron delivered malformed JSON arrays, C++ code riddled with undefined variable references, and pseudocode that stopped at 16 real lines before adding eight more # padding lines. The example outputs for the test case, n=5, A=[6,10,15,7,9] with specific query sequences, were completely wrong, suggesting the model did not actually simulate the algorithm but instead pattern-matched against similar-looking problems.
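For reference, the Möbius inclusion-exclusion step on its own is compact. The sketch below counts coprime pairs across a whole array and cross-checks the result against brute force on the test array; it does not implement Mo’s algorithm or the range queries, whose exact sequence is not reproduced here, and the function names are illustrative.

```python
from itertools import combinations
from math import gcd


def mobius_sieve(limit: int) -> list[int]:
    """Return mu[0..limit] (Möbius function) using a linear sieve."""
    mu = [1] * (limit + 1)
    mu[0] = 0
    composite = [False] * (limit + 1)
    primes: list[int] = []
    for i in range(2, limit + 1):
        if not composite[i]:
            primes.append(i)
            mu[i] = -1
        for p in primes:
            if i * p > limit:
                break
            composite[i * p] = True
            if i % p == 0:
                mu[i * p] = 0  # p^2 divides i * p
                break
            mu[i * p] = -mu[i]
    return mu


def coprime_pairs(values: list[int]) -> int:
    """Count pairs i < j with gcd(values[i], values[j]) == 1 (positive ints)."""
    if not values:
        return 0
    limit = max(values)
    mu = mobius_sieve(limit)
    freq = [0] * (limit + 1)
    for v in values:
        freq[v] += 1
    total = 0
    for d in range(1, limit + 1):
        if mu[d] == 0:
            continue
        cnt = sum(freq[m] for m in range(d, limit + 1, d))  # multiples of d
        total += mu[d] * (cnt * (cnt - 1) // 2)
    return total


def coprime_pairs_brute(values: list[int]) -> int:
    """Quadratic reference implementation used only for cross-checking."""
    return sum(1 for a, b in combinations(values, 2) if gcd(a, b) == 1)


if __name__ == "__main__":
    arr = [6, 10, 15, 7, 9]
    print(coprime_pairs(arr), coprime_pairs_brute(arr))
```

Simulating even this reduced version by hand on the five-element array is enough to spot outputs that were pattern-matched rather than computed.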

Pattern Recognition Without Reasoning

Perhaps most telling was the simple pattern compression test:

11118888888855 → 118885 | 79999775555 → 99755 | AAABBBYUDD → ?

The rule is straightforward: for each run of identical characters, keep the floor of half its length, preserving order. Qwen correctly deduced this and showed its work: A appears 3 times (floor(3/2)=1), B appears 3 times (1), Y and U appear once (0, removed), and D appears twice (1), arriving at ABD.
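The rule fits in a few lines of Python. This sketch assumes the count is taken per run of consecutive identical characters, which is what the second example implies (the lone 7 disappears while the later 77 keeps one); `halve_runs` is an illustrative name.

```python
from itertools import groupby


def halve_runs(s: str) -> str:
    """Keep floor(len/2) characters from each run of identical characters."""
    return "".join(ch * (len(list(run)) // 2) for ch, run in groupby(s))


if __name__ == "__main__":
    print(halve_runs("79999775555"))  # second example from the prompt
    print(halve_runs("AAABBBYUDD"))   # the query string
```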

Nemotron answered AABBBY, revealing it was performing superficial pattern matching without comprehending the underlying counting mechanism. This is the kind of failure that separates models that merely memorize templates from alternative efficient MoE architectures on consumer hardware that might actually understand structure.

Frontend Generation: The Dashboard Disaster

The final indignity came with UI generation tasks. Asked to produce a business dashboard and SaaS landing page, Qwen delivered structured KPI cards, area charts, donut charts for traffic sources, and a complete pricing tier layout with proper currency formatting. Nemotron generated an almost empty layout with two placeholder numbers, no charts, and a landing page consisting of a purple gradient with a single button and duplicated testimonial cards, essentially a template that forgot to load its content.

The Throughput Mirage

VentureBeat’s coverage of the larger Nemotron 3 Super model claims it achieves up to 7.5x higher throughput than Qwen3.5-122B in high-volume settings. That sounds impressive until you realize throughput matters little if the tokens being generated are mathematically incoherent or syntactically broken. The community has noted that Nemotron targets specific use cases (AI gaming NPCs, local voice assistants, IoT automation), and that these applications prioritize latency and context length over the kind of heavy mathematical reasoning and code generation that Qwen dominates.

The architectural trade-offs become clear when examining the competitive landscape of other major Chinese open models. Where NVIDIA optimized for token velocity through Mamba-2’s selective state spaces, Alibaba optimized for reasoning density and instruction fidelity. The result is that Qwen ecosystem tools challenging API lock-in are becoming the default choice for developers who need actual cognitive work rather than just fast autocomplete.

The China Efficiency Gap

This failure pattern fits a broader trend: Chinese labs have systematically out-engineered Western counterparts in the efficient small model space. While NVIDIA struggles to make its 4B variant coherent, Qwen 3.5 4B continues punching weight classes above its parameter count, handling coding tasks that previously required 7B models or larger. The strategic value of Chinese open models in offline deployments becomes obvious when you compare these benchmark results: if your local AI can’t handle structured JSON math or basic algorithmic design, it doesn’t matter how fast it runs.

Some defenders argue Nemotron’s design targets different use cases, suggesting that for voice assistants and gaming NPCs, raw reasoning matters less than conversational flow. This misses the point: a model that pads its code with # lines to meet length requirements isn’t just “optimized for different tasks”; it’s fundamentally broken on instruction following. The case study of models outperforming NVIDIA flagship chips has shown that training data quality and curation techniques often trump architectural novelty, and Nemotron’s struggles suggest NVIDIA hasn’t cracked the data recipe that makes small models truly capable.

The Verdict

Nemotron 3 Nano 4B isn’t worthless: if you need a fast, lightweight model for simple conversational tasks with large context windows, it might suffice. But for technical workloads involving mathematics, structured output, algorithmic design, or frontend code generation, it failed every test here that Qwen 3.5 4B passed with ease. The architectural novelty that enables larger contexts didn’t translate into better reasoning, instruction following, or code generation.

If you’re picking between these two for local deployment right now, the choice isn’t even close. Unless NVIDIA significantly improves its training data curation and reasoning optimization, it risks ceding the entire local AI coding market to Chinese alternatives that prioritize cognitive capability over marketing metrics. In the current landscape, efficiency without accuracy is just organized noise, and right now, Nemotron is making plenty of it.