The 4chan Training Data Paradox: When Raw Chaos Outperforms Curated Purity

The AI community has spent years perfecting data curation pipelines, filtering out toxicity, and sanitizing training corpora to build “safe” and “helpful” models. So when a model trained on one of the internet’s most infamous imageboards starts beating the Nvidia model it was built on, it raises uncomfortable questions about whether we’ve been optimizing for the wrong metrics.

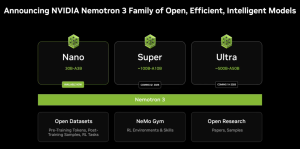

Assistant_Pepe_8B isn’t a lab-polished product from a trillion-dollar company. It’s an experiment that shouldn’t have worked: an 8B-parameter model fine-tuned on an extended 4chan dataset, built on an abliterated version of Nvidia’s Llama-3.1-Nemotron-8B-UltraLong-1M-Instruct. The results? It outperformed its own base model across key benchmarks, contradicting the usual expectation that fine-tuning trades some general capability for specialized behavior.

The Numbers That Don’t Add Up

The benchmark scores tell a story that makes ML engineers uncomfortable. The abliterated base model, stripped of its safety alignment, already scored higher than the original Nemotron. That’s surprising enough. But the 4chan-fine-tuned version scored higher than both. This isn’t supposed to happen. Fine-tuning typically compresses some general capabilities to amplify specific ones. It’s a trade-off we’ve all accepted as inevitable, much like the “alignment tax” on safety-tuned models.

The KL divergence for a related model in this family, Impish_LLAMA_4B, measured less than 0.01, indicating that the 4chan fine-tuning didn’t radically alter that model’s output distribution. If the same holds for Assistant_Pepe_8B, the data nudged the model rather than rewrote it, and the nudge made it better. That is indirect evidence, but it makes a fluke or statistical noise look less likely. There’s something in that chaotic data that the base model needed.
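For readers who want to see what a KL figure like that measures, here is a minimal sketch. It assumes the number is an average per-token KL divergence between the base model’s and the fine-tuned model’s next-token distributions; the source does not say how the measurement was actually taken, and the function names and toy logits below are made up for illustration.

```python
import math


def softmax(logits):
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) in nats for two discrete distributions over the same vocabulary."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    # Terms with p_i == 0 contribute nothing; q_i is floored to avoid log(0).
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)


def mean_token_kl(base_logits, tuned_logits):
    """Average KL(base || tuned) over token positions."""
    pairs = list(zip(base_logits, tuned_logits))
    return sum(kl_divergence(softmax(b), softmax(t)) for b, t in pairs) / len(pairs)


# Hypothetical logits for two token positions over a 4-token vocabulary.
base = [[2.0, 1.0, 0.1, -1.0], [0.5, 0.5, 3.0, 0.0]]
tuned = [[2.1, 0.9, 0.1, -1.0], [0.4, 0.6, 3.1, 0.0]]

print(f"mean per-token KL: {mean_token_kl(base, tuned):.5f}")
```

A value near zero means the two models assign almost the same probabilities to the next token. In practice the logits would come from running both models over the same evaluation text rather than from hand-written lists.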

Why 4chan Data Might Be Secretly Excellent

The knee-jerk reaction is to dismiss 4chan as a toxic swamp. But developers who’ve actually worked with the data describe it differently. Byte for byte, it might be one of the most linguistically valuable corpora on the internet.

The Authenticity Factor

Forum commenters have noted that 4chan’s anonymity creates something rare: genuine human expression without performance. Unlike Reddit, where users unconsciously adapt their writing to game a voting system, or Twitter, where character limits and algorithmic incentives breed bot-contaminated sloganeering, 4chan conversations are raw and direct. People aren’t crafting posts for upvotes or engagement metrics; they’re just talking.

This authenticity translates to training value. When a model learns from 4chan, it’s learning from humans being human, not humans trying to please an algorithm. The first-person nature of responses (direct answers followed by brief, often crude asides) creates a dataset rich in unfiltered reasoning patterns.

The Bot-Free Advantage

One developer pointed out that Twitter data “actively retards language models” while 4chan data has no apparent saturation point. The hypothesis? Twitter is so contaminated by bots and engagement farming that it teaches models to mimic artificial patterns rather than human thought. 4chan, for all its chaos, remains stubbornly organic. On this view, nearly every poster is a real person with real (if sometimes terrible) opinions.

This aligns with broader concerns about the rise of AI-generated low-quality content (slop) polluting training pipelines. If your dataset is filled with AI-generated text, you’re just teaching models to parrot increasingly degraded copies of themselves. 4chan’s human toxicity might be preferable to artificial blandness.

The Alignment Tax Reconsidered

The concept of an “alignment tax” (the performance cost of making models safe and helpful) has been debated for years. But Assistant_Pepe_8B suggests the tax might be worse than we thought. The abliterated base outperformed the aligned version, and the 4chan fine-tune outperformed both. This hints that alignment might be actively obscuring capabilities rather than just slightly reducing them.

One interpretation: post-training alignment creates a “mask” that bottlenecks the model’s expressiveness. The “How can I help you today?” persona is so restrictive that it prevents the model from accessing the full variety of reasoning patterns learned during pre-training. Abliteration removes the mask, and 4chan data provides the raw, unfiltered conversational examples that let those patterns surface naturally.
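To make “removing the mask” concrete, here is a minimal sketch of directional ablation, the idea abliteration is commonly described as using: estimate a “refusal direction” from the difference between mean activations on refused and answered prompts, then project that direction out of the hidden state. This is an assumption about the general technique, not a description of how this particular base model was produced, and the function names and toy vectors are hypothetical.

```python
import math


def mean_vector(rows):
    """Element-wise mean of a list of equal-length vectors."""
    count = len(rows)
    return [sum(column) / count for column in zip(*rows)]


def unit(vec):
    """Scale a vector to length 1."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def refusal_direction(refused_acts, complied_acts):
    """Unit vector pointing from mean 'complied' to mean 'refused' activations."""
    refused_mean = mean_vector(refused_acts)
    complied_mean = mean_vector(complied_acts)
    return unit([r - c for r, c in zip(refused_mean, complied_mean)])


def ablate(hidden, direction):
    """Remove the component of a hidden state that lies along the unit direction."""
    projection = sum(h * d for h, d in zip(hidden, direction))
    return [h - projection * d for h, d in zip(hidden, direction)]


# Toy 3-dimensional activations; real models use thousands of dimensions.
refused = [[1.0, 0.0, 0.0], [3.0, 0.0, 0.0]]
complied = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]

direction = refusal_direction(refused, complied)
cleaned = ablate([5.0, 2.0, -1.0], direction)
print(direction, cleaned)
```

After ablation the hidden state has no component along the estimated direction, while everything orthogonal to it is left alone. That is the sense in which the technique is said to strip one behavior without retraining the rest of the model.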

This isn’t an argument for unleashing toxic models on the world. It’s a challenge to our methods. If alignment fundamentally cripples capability, we need better alignment techniques that preserve the model’s intelligence while still ensuring safety.

The Secret Industry Practice

Here’s where it gets conspiratorial. Multiple developers claim that major AI labs already know about 4chan’s training value. The running joke is that Common Crawl and FineWeb work so well partly because they’re the most PR-friendly way to smuggle 4chan data into models. As the story goes, it’s an open secret: when labs need a performance boost, increasing the “organic forum data” ratio is an explicit emergency step.

The cottage industry of 4chan models on Hugging Face, mostly edgy experiments by anonymous developers, might be accidentally revealing what closed labs already do in secret. The difference is scale and sanitization. Corporate models get the sanitized, diluted version. Assistant_Pepe_8B got the raw feed.

This raises uncomfortable questions about transparency. If labs are already leveraging this data source, why the public silence? The answer is obvious: admitting you train on 4chan is a PR nightmare. But the performance gap between sanitized and raw data might be significant enough that we’re seeing a two-tier system emerge: public models that are safe but slightly neutered, and internal research models that leverage everything.

The Reddit vs. 4chan Paradox

The comparison to Reddit is particularly revealing. Reddit data has long been considered gold-standard for conversational AI. But developers are increasingly questioning whether its voting system creates systematic biases that hurt model training.

The argument goes like this: Reddit’s downvote patterns create a “Manchurian candidate” effect where certain opinions must be formatted in specific ways to avoid automatic suppression. This teaches models not just what to say, but how to self-censor preemptively. The result? Models that are overly cautious and refuse to engage with controversial but legitimate queries, like how to kill a Linux process when it’s the correct technical solution.

4chan has no such filter. The result is a dataset that teaches models to answer the actual question, then maybe add a rude comment. For technical tasks, that’s vastly more useful than refusing to answer at all.

Practical Implications for Model Development

If these results hold, they suggest several non-obvious strategies for improving LLMs:

- Rethink Data Purity: The obsession with clean, sanitized data might be misguided. Strategic inclusion of raw, unfiltered human conversation could improve reasoning capabilities.

- Alignment as Compression: Current alignment techniques might compress too much. We need methods that preserve model variety while still ensuring safety.

- Source Diversity Matters: The best training corpus might be a Frankenstein’s monster of sources: Wikipedia for facts, GitHub for code, and yes, 4chan for raw conversational reasoning.

- The Twitter Avoidance Protocol: If Twitter data truly “retards” models, many current datasets need auditing for bot contamination.
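The “Source Diversity Matters” idea above can be sketched as a weighted mixture sampler. This is a minimal illustration only: the source names, the placeholder documents, and the 40/40/20 weights are all hypothetical, and nothing in the results discussed here says what a good ratio would be.

```python
import random
from collections import Counter


def sample_mixture(corpora, weights, n, seed=0):
    """Draw n documents, choosing each one's source in proportion to its weight."""
    missing = set(weights) - set(corpora)
    if missing:
        raise KeyError(f"no corpus for sources: {sorted(missing)}")
    rng = random.Random(seed)  # seeded so the mixture is reproducible
    names = sorted(weights)
    picks = rng.choices(names, weights=[weights[name] for name in names], k=n)
    return [(name, rng.choice(corpora[name])) for name in picks]


# Placeholder corpora standing in for much larger datasets.
corpora = {
    "encyclopedia": ["fact doc 1", "fact doc 2"],
    "code": ["code doc 1", "code doc 2"],
    "forum": ["forum thread 1", "forum thread 2"],
}
# Hypothetical mixture weights; tuning these is the actual research question.
weights = {"encyclopedia": 0.4, "code": 0.4, "forum": 0.2}

batch = sample_mixture(corpora, weights, n=1000)
print(Counter(name for name, _ in batch))
```

Keeping the weights in one explicit dictionary makes the forum share an auditable knob: it can be raised, lowered, or set to zero for an ablation run without touching the rest of the pipeline.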

This connects to the broader trend of challenging the assumption that larger models are inherently better. If a fine-tune on the right data can lift an 8B model above its own base, we’re entering an era where data quality and training methodology may matter more than raw scale.

The Ethical Tightrope

None of this excuses 4chan’s worst aspects. The model’s creator, Sicarius_The_First, deliberately aimed for “helpful shitposting”: a tightrope walk between useful assistance and unfiltered personality. The result is a model that will roast you for asking dumb questions while still providing correct answers.

This raises questions about what we want from AI assistants. The sterilized corporate chatbots that dominate the market are inoffensive but often useless for complex tasks. They’ll refuse to help with anything that might be controversial, even when it’s legitimate. A model with a personality, even a rude one, might be more useful.

The key insight from the developer community is that 4chan’s value isn’t in its toxicity but in its humanity. The goal isn’t to create racist AI. It’s to preserve the authentic reasoning patterns that happen to exist alongside the toxicity. The challenge is separating the wheat from the chaff.

Why This Matters Now

The timing is significant. As Gen Z’s rejection of low-quality AI-generated content (slop) becomes mainstream, the AI industry is facing an authenticity crisis. Many users say they can spot AI-generated text at a glance, and they are increasingly rejecting it. Training on authentic human conversation, even messy, toxic human conversation, might be the antidote.

Meanwhile, the shaky economics of cloud LLM APIs are pushing more development toward local, open-source models. When you’re running models on your own hardware, the sanitized corporate version isn’t your only option. Experiments like Assistant_Pepe_8B become feasible.

This creates a feedback loop: as local models improve through unconventional training data, they challenge the cloud API model. And as cloud APIs become economically unsustainable for many use cases, the incentive to explore these alternatives grows.

The Verdict: A Data Quality Revolution

Assistant_Pepe_8B isn’t just a novelty. It’s a data point in a larger trend that’s forcing the AI community to confront uncomfortable truths about what makes models “good.” The metrics we’ve optimized for (safety, helpfulness, inoffensiveness) might be in direct conflict with raw capability.

The 4chan paradox suggests that the most valuable training data isn’t the cleanest or most curated. It’s the most real. In a digital ecosystem increasingly polluted by AI-generated content, preserving sources of authentic human expression, even messy, anonymous, controversial expression, might be critical for maintaining model quality.

This doesn’t mean your next enterprise chatbot should be trained on /pol/. But it does mean the industry’s data curation orthodoxy deserves scrutiny. The alignment tax might be higher than we can afford, and some of the data we’ve been throwing away could be exactly what our models need.

For now, Assistant_Pepe_8B stands as a provocative proof-of-concept: sometimes the path to better AI runs through the internet’s most notorious corners. The challenge isn’t whether to use this data, but how to use it responsibly without losing the authenticity that makes it valuable.