For years, the conventional local AI wisdom has been simple: use llama.cpp when you’re memory-constrained or want simplicity, but switch to vLLM when you need raw generation speed and production features. A beta pull request currently merging into llama.cpp is about to make that decision a lot more complicated.

By adding native Multi-Token Prediction (MTP) support, effectively Medusa-style speculative decoding baked into the model architecture, llama.cpp isn’t just catching up with vLLM’s performance, it’s targeting the very feature that gave vLLM its throughput advantage.

The early benchmarks, like those in noonghunna/club-3090, hint at a coming disruption: the llama.cpp single-card, Qwen3.6-27B, 262K context config is now competing on throughput where only vLLM’s dual-card setup used to dominate. This isn’t an incremental tweak to a memory allocator, it’s a fundamental change in how local inference engines compete. If MTP delivers the 1.5x-2.5x speedups being reported, the cost calculus for self-hosting or building local AI tooling is about to shift sharply.

The Gap That Needed Closing

Let’s be honest: for pure token generation speed, vLLM has been the undisputed king. It’s not just the PagedAttention algorithm or the efficient KV cache management, it’s the engine’s first-class support for speculative decoding techniques like MTP and draft models that let it generate multiple tokens per costly forward pass of the main model. This is how vLLM achieves throughput numbers like 105 tok/s on a Qwen 27B that leave pure autoregressive runs in the dust.

Llama.cpp, meanwhile, built its reputation on a different set of strengths: unparalleled memory efficiency, broad hardware support (including Apple Silicon and AMD ROCm with significant recent improvements), and the ability to serve massive context windows on consumer-grade hardware.

Its

single-card-max-ctx.shrecipe for Qwen3.6-27B could already handle a full 262K context on a single 3090, something vLLM couldn’t touch without a dual-card setup. But when it came to raw tokens-per-second for agentic tasks or interactive chat, it lagged.

MTP: The Difference Between a Draft Model and a Draft Head

Speculative decoding isn’t new. The core idea is simple: use a cheap, fast method to propose (“draft”) a sequence of future tokens, then use the expensive target model to verify them in parallel. If they’re correct, you accept them all, if not, you roll back and try again. The trick is in the “cheap, fast method.” Historically, this has meant running a separate, smaller “draft model” alongside your main model.

MTP (Multi-Token Prediction), and its popular implementation Medusa, takes a different approach. Instead of a separate model, the target model itself is trained with extra “heads” that predict multiple future tokens from the same hidden state. Think of it as a single forward pass that whispers, “Here’s what I think the next token is… and the next one… and the next one.”

The technical summary from the Reddit discussion lays out the landscape well:

What makes the PR #22673 so significant is that it brings first-class MTP support to llama.cpp’s speculative decoding framework. Aman (@am17an)‘s implementation is notable for a few key design choices:

1. The MTP “model” loads from the same GGUF file as the main model, avoiding the need to distribute separate artifacts.

2. It has its own dedicated context and KV cache, preventing the hidden-state propagation issues seen in earlier attempts like the EAGLE-3 PR.

3. It leverages a separate speculative decoding class but depends on another PR (#22400) that enables partial sequence rollback for Gated Linear Networks (GLNs), reducing wasted computation when drafts are rejected.

The numbers speak for themselves. On a DGX Spark running Qwen3.6-27B-Q8_0, enabling MTP with --spec-draft-max-n 3 resulted in a steady-state acceptance rate of ~72% and more than doubled the total wall-clock token generation speed.

| Task | Baseline (tok/s) | MTP N=3 (tok/s) | Speedup |

|---|---|---|---|

code_python |

7.0 | 21.6 | ~3.1x |

code_cpp |

7.3 | 18.7 | ~2.6x |

stepwise_math |

7.2 | 19.3 | ~2.7x |

| Aggregate | 7.1 avg | ~17.6 avg | ~2.5x |

The Real-World Speedup: Catching vLLM on Consumer Hardware

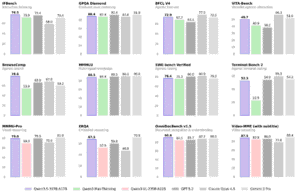

Benchmarks from passionate early adopters are even more telling. In the club-3090 repository community benchmarks, vLLM with dual 3090s using DFlash speculative decoding achieved 127 TPS on code tasks. That’s the high-water mark.

Now, look at the early llama.cpp-with-MTP numbers. A user on an RTX 5090 reported Qwen 27B jumping from 55 to 105 tok/s, nearly matching the vLLM result but on a single, more modern card. Another tested on a single 3090 with Qwen3.6-27B-MTP-Q6_K:

- MTP Disabled: 22.39 tok/s

- MTP Enabled (

--spec-type mtp --spec-draft-n-max 3): 42.45 tok/s - Speedup: ~1.9x

This brings the single-card llama.cpp performance (~42 tok/s) much closer to vLLM’s single-card performance (~55-70 tok/s depending on config), and it does so while still maintaining llama.cpp’s signature massive 262K context capability.

This is the game-changer. It’s not about beating vLLM’s peak dual-card numbers on paper. It’s about collapsing the performance-per-watt and performance-per-dollar gap for the vast majority of users who run on single, consumer-grade GPUs. The trade-off between “vLLM for speed” and “llama.cpp for context” is effectively disappearing.

A glance at the performance landscape from club-3090 shows the competitive tiers. MTP-supported llama.cpp now threatens to occupy the “high context, high speed” quadrant.

The Devil (and the Speed) Is in the Details

This isn’t a free lunch. The PR and related discussion highlight important trade-offs and current limitations that developers need to understand.

1. Memory Overhead

MTP isn’t magic, it requires extra VRAM. While far more efficient than loading a separate draft model, the extra layer and its dedicated cache cost space. One tester noted that while the MTP layer itself was only ~440 MB, total VRAM usage increased by ~2.7 GB at a 16K context length and ~3.1 GB at 128K. This is manageable on a 24GB 3090 but could be the difference between fitting a model or not on more constrained hardware.

2. Model Compatibility

This is the big one. MTP only works with models trained with MTP heads. You can’t simply flip a switch on your existing Llama 3.1 or Mistral 7B GGUF. Currently, Qwen3.5 and Qwen3.6 models are the primary public examples, with DeepSeek V3/R1 also being candidates. This constitutes a relatively small subset of the open-weight model landscape. Community tools are emerging to “graft” MTP layers onto compatible base models, but it’s an extra step.

3. Prefill Penalty

There’s a known, but fixable, performance quirk in the current beta. The same benchmark that showed a ~1.9x decode speedup also showed prefills slowing down from ~1260 tok/s to ~665 tok/s, roughly halving. The PR author acknowledges this issue and is working on a fix. For workloads with very long prompts relative to generations, this could temporarily negate the decode gains.

4. Backend Support

The implementation relies on partial sequence rollback support for GLNs (PR #22400), which currently only exists for the CUDA backend. This means Vulkan and Metal users are left waiting, as one user discovered when their Radeon 9700 produced garbled outputs with MTP enabled. As with many of llama.cpp’s advanced quantization features, cutting-edge support often rolls out platform-by-platform.

Implications for the Local AI Stack

The beta status of this PR means it’s not yet ready for plug-and-play production. But its trajectory points to a near-future where the local inference ecosystem looks different.

What This Means for You

If you’re a developer building applications that rely on running state-of-the-art models locally, this shift matters.

- For New Projects: Strongly consider starting with a Qwen3.6 model and the llama.cpp beta (once it stabilizes). You get the best of both worlds: vLLM-class generation speed and llama.cpp’s legendary context capacity and stability.

- For Existing vLLM Setups: Don’t panic. vLLM still holds advantages in areas like advanced continuous batching for multi-user serving and a more mature ecosystem for production deployment. But your performance edge for single-stream, interactive use cases is narrowing fast. Keep an eye on your throughput benchmarks against the latest llama.cpp builds.

- For the Performance-Obsessed: Start testing. The

am17anGGUF files are available, and the PR is mergable. Run your own benchmarks. Is that 1.9x speedup universal across your workload, or does your specific task see more or less benefit? The only way to know is to measure.

The Verdict: Not a Knockout, But a TKO in the Making

The “beta” tag is important. Prefill slowdowns, backend limitations, and the constrained model ecosystem mean vLLM isn’t obsolete tomorrow. But the writing is on the wall. The ~2x decode speedup is real, and it fundamentally changes the performance profile of llama.cpp.

This isn’t just about closing a gap, it’s about redefining the race. When one engine can match another’s signature feature while maintaining its own unique strengths, the entire landscape of local LLM efficiency tradeoffs gets redrawn. The era of choosing between speed and context is ending. The next battle will be about who can deliver both, most reliably, to the most users. And right now, llama.cpp’s MTP beta is delivering a compelling opening salvo.