Luce DFlash’s 2x Throughput Isn’t Magic, It’s a Hardware Exorcism

ggml and fused with an emerging research technique called DFlash speculative decoding. It forces a question: in an era of ever-larger models, is brute-force scaling the only path, or can we make the hardware we already own radically more efficient?

Let’s dissect what’s actually happening.

The Core Claim: Doubling Speed on a 2020 Flagship GPU

The Luce-Org/lucebox-hub repository introduces Luce DFlash: a standalone C++/CUDA stack porting the DFlash (Diffusion Flash) speculative decoding algorithm to the GGUF ecosystem. The headline result is stark:

Running on an RTX 3090 (24GB VRAM) with a Qwen3.6-27B Q4_K_M target model (~16GB) and a matched BF16 draft model (~3.46GB), the system benchmarks show:

| Benchmark | Autoregressive (tok/s) | DFlash + DDTree (tok/s) | Acceptance Length (AL) | Speedup |
|---|---|---|---|---|
| HumanEval | 34.90 | 78.16 | 5.94 | 2.24x |
| Math500 | 35.13 | 69.77 | 5.15 | 1.99x |
| GSM8K | 34.89 | 59.65 | 4.43 | 1.71x |
| Mean | 34.97 | 69.19 | 5.17 | 1.98x |
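The table's numbers can be cross-checked with a few lines of arithmetic. The sketch below recomputes each speedup and, under the assumption that acceptance length means the average number of tokens committed per draft-and-verify step, estimates how long one such step takes compared with a plain autoregressive step. The function name `analyze` is made up for illustration; only the figures come from the table.

```python
# Rows copied from the benchmark table:
# (benchmark, autoregressive tok/s, DFlash + DDTree tok/s, acceptance length)
ROWS = [
    ("HumanEval", 34.90, 78.16, 5.94),
    ("Math500", 35.13, 69.77, 5.15),
    ("GSM8K", 34.89, 59.65, 4.43),
]


def analyze(ar_tps, spec_tps, accept_len):
    """Return (speedup, ms per speculative step, step cost in units of AR steps)."""
    speedup = spec_tps / ar_tps
    ar_ms_per_token = 1000.0 / ar_tps
    # Assumption: every draft+verify step commits `accept_len` tokens on average.
    step_ms = 1000.0 * accept_len / spec_tps
    step_cost_ratio = step_ms / ar_ms_per_token
    return speedup, step_ms, step_cost_ratio


for name, ar, spec, al in ROWS:
    speedup, step_ms, ratio = analyze(ar, spec, al)
    print(f"{name:10s} speedup={speedup:.2f}x  "
          f"step={step_ms:.1f} ms  ({ratio:.2f}x an AR step)")
```

Read this way, speedup is simply acceptance length divided by the relative cost of a speculative step, which is why longer accepted runs matter only as long as the draft and tree verification stay cheap.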

This isn’t a paper number or a lab result. It’s a reproducible C++ binary (test_dflash) that loads weights from Hugging Face and emits real tokens roughly twice as fast as plain autoregressive decoding. The secret isn’t a better quant (though Q4_K_M from Unsloth helps). It’s an orchestrated attack on the fundamental latency bottleneck of large language models: sequential token generation.

The Engine Room: Speculative Decoding and the DDTree Verify

To understand the speedup, you need to understand speculative decoding. The core idea isn’t new: use a small, fast “draft” model to predict a sequence of future tokens, then use the large, accurate “target” model to verify them in parallel, accepting correct predictions and rejecting/regenerating incorrect ones. The goal is to get more than one correct token per expensive target model forward pass. The fundamental challenge is making this actually faster on real hardware, where memory bandwidth and kernel launch overhead can erase any theoretical gains.
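To make the draft-then-verify loop concrete, here is a minimal sketch of greedy speculative decoding with toy stand-in models. Nothing in it comes from the Luce codebase: `speculative_step`, `generate`, `toy_target`, and `toy_draft` are hypothetical names, and the "models" are simple functions over integer tokens. A real runtime scores all drafted positions in one batched target pass; the toy calls the target once per position for readability.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy next-token function


def speculative_step(target: NextToken, draft: NextToken,
                     context: List[Token], k: int) -> List[Token]:
    """One draft-then-verify step; returns the tokens committed this step."""
    # 1. The cheap draft proposes k tokens autoregressively.
    proposal: List[Token] = []
    for _ in range(k):
        proposal.append(draft(context + proposal))
    # 2. The target checks each proposed position in order.
    committed: List[Token] = []
    for tok in proposal:
        expected = target(context + committed)
        if tok != expected:
            committed.append(expected)  # first mismatch: keep the target's token
            return committed
        committed.append(tok)
    # 3. Everything was accepted, so the target also supplies one bonus token.
    committed.append(target(context + committed))
    return committed


def generate(target: NextToken, draft: NextToken, prompt: List[Token],
             n_tokens: int, k: int = 4) -> Tuple[List[Token], int]:
    """Generate n_tokens; also return how many verify steps were needed."""
    out = list(prompt)
    steps = 0
    while len(out) - len(prompt) < n_tokens:
        out.extend(speculative_step(target, draft, out, k))
        steps += 1
    return out[len(prompt):len(prompt) + n_tokens], steps


def autoregressive(target: NextToken, prompt: List[Token], n: int) -> List[Token]:
    out = list(prompt)
    for _ in range(n):
        out.append(target(out))
    return out[len(prompt):]


# Toy models (hypothetical): the target counts upward mod 10,
# and the draft agrees except right after a 7.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[Token]) -> Token:
    return 0 if ctx[-1] == 7 else (ctx[-1] + 1) % 10


tokens, steps = generate(toy_target, toy_draft, [0], 20)
print(tokens == autoregressive(toy_target, [0], 20), steps)
```

The property worth noticing is that greedy verification never changes the output: the committed tokens are exactly what the target would have produced on its own, only in fewer target steps.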

Luce DFlash implements two key research components:

1. DFlash (Wang et al., 2026): A “block-diffusion” draft model conditioned on the target’s hidden states. The draft isn’t just a smaller version of Qwen; it’s a specially trained network designed to predict blocks of tokens based on the target model’s internal representations. The draft weights (z-lab/Qwen3.6-27B-DFlash) are publicly available.

2. DDTree (Ringel & Romano, 2026): A tree-structured verification algorithm that outperforms simpler “chain” verification. Instead of linearly checking draft tokens, it explores multiple potential acceptance paths in a tree, finding longer sequences of correct tokens per verification step. The Luce implementation tunes this for a budget=22 on the RTX 3090.
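The difference between chain and tree verification can be illustrated with a toy example. This is a simplified sketch, not the DDTree algorithm itself: how DDTree builds and prunes its tree is not described here, so the trees below are hand-written, and `Node`, `verify_tree`, and `count_nodes` are hypothetical names. The only idea it demonstrates is that offering sibling candidates lets verification continue past a position where a single chain would have stopped.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Node:
    token: int
    children: List["Node"] = field(default_factory=list)


def count_nodes(roots: List[Node]) -> int:
    """Total drafted tokens in the tree (what a node budget would cap)."""
    return sum(1 + count_nodes(n.children) for n in roots)


def verify_tree(target: Callable[[List[int]], int],
                context: List[int], roots: List[Node]) -> List[int]:
    """Follow whichever branch matches the target's greedy token at each depth."""
    accepted: List[int] = []
    level = roots
    while True:
        expected = target(context + accepted)
        match = next((n for n in level if n.token == expected), None)
        if match is None:
            # No candidate matched (or we ran off a leaf): commit the
            # target's own token and end this verification step.
            accepted.append(expected)
            return accepted
        accepted.append(match.token)
        level = match.children


def toy_target(ctx: List[int]) -> int:
    """Hypothetical target model: counts upward mod 10."""
    return (ctx[-1] + 1) % 10


# A chain is a tree with one child per node; here the draft errs at depth 3.
chain = [Node(1, [Node(2, [Node(9, [Node(4)])])])]
# The tree keeps the same wrong guess but adds a second candidate at depth 3.
tree = [Node(1, [Node(2, [Node(9, [Node(4)]), Node(3, [Node(4)])])])]

print(verify_tree(toy_target, [0], chain))
print(verify_tree(toy_target, [0], tree))
print(count_nodes(tree))
```

The tree spends two extra drafted nodes and commits two more tokens in the same verification step. A node budget such as the budget=22 mentioned above caps how much of that extra speculation the target has to score.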

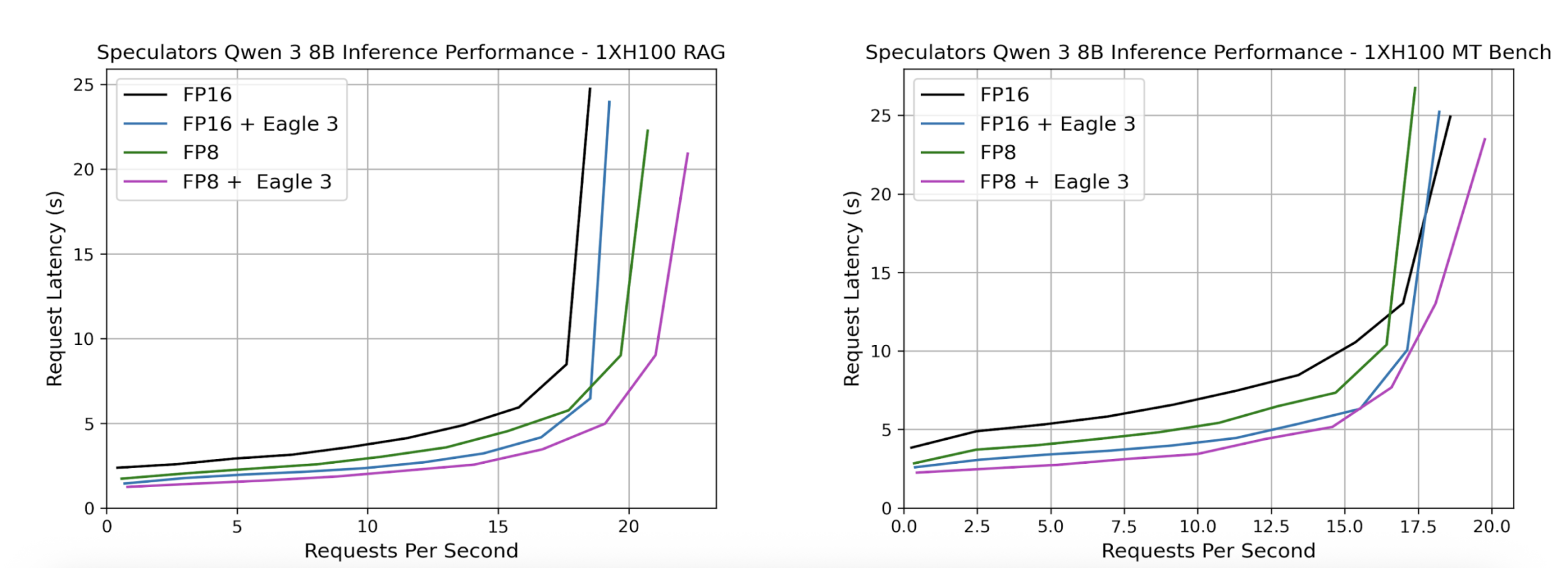

This approach is gaining traction. The vllm-project/speculators library aims to be a “unified library for building, evaluating, and storing speculative decoding algorithms for LLM inference in vLLM.” Luce DFlash’s contribution is bringing this advanced technique to the GGUF format and the ggml backend, the core of the massively popular llama.cpp project.

The Real Innovation: A Hardware-Constrained Redesign

What makes Luce DFlash notable isn’t just the algorithm choice; it’s the hard constraint the authors accepted and the optimizations they built around it. The README names it plainly: “The constraint that shaped the project.” An AWQ INT4 quant of Qwen3.6-27B plus the BF16 draft simply wouldn’t fit in 24GB VRAM alongside the DDTree verification state. Their solution was a multi-faceted engineering sprint:

- GGUF Q4_K_M Target: The largest quantization that fits the target (~16GB), draft (~3.46GB), tree state, and KV cache in 24GB. This forced a new port on top of ggml, as no public DFlash runtime supported GGUF targets.

- TQ3_0 KV Cache Compression: They compress the Key-Value cache to 3.5 bits per value (bpv), a ~9.7x compression versus F16, using a TurboQuant format. This, combined with a sliding target_feat ring buffer, allows 256K context to fit within the same 24GB.

- Memory-Aware Scheduling: The system auto-tunes. It bumps the prefill batch size from 16 to 192 for prompts longer than 2048 tokens (achieving ~913 tok/s prefill). It applies sliding-window flash attention during decoding so a 60K context still decodes at 89.7 tok/s instead of crashing to 25.8 tok/s.

- Custom CUDA Kernels: Three of them (ggml_ssm_conv_tree, ggml_gated_delta_net_tree, ggml_gated_delta_net_tree_persist) handle the tree-aware SSM state rollback needed for DDTree verification.
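A back-of-the-envelope version of the memory budget and the prefill rule from the list above might look like the following. It is a sketch built only from the figures quoted in this article; the function names and the exact threshold comparison are assumptions, not code from the repository.

```python
# Figures quoted in the article (GB); everything else here is illustrative.
VRAM_GB = 24.0
TARGET_Q4_K_M_GB = 16.0
DRAFT_BF16_GB = 3.46


def headroom_gb(vram: float = VRAM_GB,
                target: float = TARGET_Q4_K_M_GB,
                draft: float = DRAFT_BF16_GB) -> float:
    """VRAM left for KV cache, tree state, and activations after loading weights."""
    return vram - target - draft


def prefill_batch(prompt_tokens: int) -> int:
    """Prefill batch size: 16 by default, 192 for prompts longer than 2048 tokens.

    Assumption: the switch is a strict "longer than" comparison.
    """
    return 192 if prompt_tokens > 2048 else 16


def bytes_per_value(bits_per_value: float) -> float:
    """Storage per cached value at a given bit width."""
    return bits_per_value / 8.0


print(f"headroom after weights: {headroom_gb():.2f} GB")
print(f"prefill batch for 1,000-token prompt: {prefill_batch(1000)}")
print(f"prefill batch for 8,000-token prompt: {prefill_batch(8000)}")
print(f"bytes per KV value at 3.5 bpv: {bytes_per_value(3.5)}")
```

With roughly 4.5GB left once both models are resident, it becomes clear why the KV cache format and the tree budget had to be chosen so carefully.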

This is the opposite of abstract framework-building. It’s hand-tuning for one specific chip (RTX 3090) and one model family (Qwen 3.5/3.6 architecture) to extract every last drop of performance. As their manifesto states: “We don’t wait for better silicon. We rewrite the software.” This philosophy of aggressive, hardware-specific optimization is what made projects like llama.cpp successful in the first place.

The Trade-Offs and the Fine Print

No speed boost comes free. The Luce DFlash approach has clear, documented constraints that define its ideal use case:

- CUDA-Only, NVIDIA-Only: No Metal, ROCm, or multi-GPU support. It’s built for Ampere (sm_86), Ada (sm_89), Blackwell consumer (sm_120), DGX Spark (sm_121), and Jetson AGX Thor (sm_110).

- Greedy Verify Only: The OpenAI-compatible HTTP endpoint accepts temperature and top_p parameters, but ignores them during verification. This is for deterministic, high-accuracy generation. If you need creative sampling, this isn’t your tool.

- Quantization Impact: As some discussions on the Unsloth model page highlight, lower quantization (like Q4) can impact accuracy on complex reasoning tasks. The speed-vs-accuracy trade-off is real, and Luce DFlash leans into speed.

- Memory as the Ultimate Limiter: The entire architecture (the choice of Q4_K_M, the TQ3_0 KV cache, the budget=22 for DDTree) was dictated by the 24GB ceiling of the RTX 3090. Cards with more VRAM (like the RTX 5090’s 32GB or a DGX Spark’s 128GB unified) could theoretically support larger trees, less aggressive quantization, or no KV cache compression, potentially unlocking even greater speedups.
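The "greedy verify only" constraint is easier to reason about with a small example. The sketch below is generic, not taken from the Luce server: it shows that greedy selection picks the same token no matter what temperature is applied to the logits, which is why a greedy-only verifier can accept a temperature parameter without it having any effect on the output. The helper names and the logits are invented for illustration.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Standard temperature-scaled softmax (temperature must be > 0)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_token(scores: List[float]) -> int:
    """Index of the highest score, i.e. the greedy choice."""
    return max(range(len(scores)), key=scores.__getitem__)


logits = [1.2, 3.4, 0.5, 3.1]  # made-up logits for a 4-token vocabulary

for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    # Temperature reshapes the distribution but never reorders it.
    print(temperature, greedy_token(probs), [round(p, 3) for p in probs])
```

Sampling-based verification would need a different acceptance rule entirely, which is presumably why it sits outside the current scope.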

This last point connects directly to the broader practice of benchmarking local LLM efficiency. Projects like Luce DFlash are part of a trend that moves beyond just comparing model sizes and looks holistically at the entire inference pipeline (quantization, memory layout, kernel fusion, and decoding strategy) to define what’s possible on a given piece of hardware.

Why This Matters: The Shift from Scaling Up to Wringing Out

For years, the local AI narrative has been about access: can you run the latest model on your hardware? As models like Qwen3.6 push boundaries, that question is getting harder to answer positively without running into significant context-window optimization challenges. Luce DFlash represents a different, complementary path.

It asks: Given a fixed, consumer-grade GPU, how can we make a capable model run not just adequately, but well?

This aligns with a growing recognition in the community. As seen in forums, users are frustrated when newer models like Qwen 3.6 feel slower than their predecessors on the same hardware, a point raised in discussions about performance bottlenecks and optimization tips. The answer isn’t always “buy a 5090.” Sometimes, it’s a fundamental rewrite of the software stack, as Luce has done.

This is the same ethos behind other projects pushing the boundaries of what’s possible locally, from the precision of real-world local model accuracy validation to the integrated approach of open-source inference and training tools. It’s about maximizing the utility of the silicon already on our desks.

The Verdict: A Proof of Concept for a New Local AI Workflow

Luce DFlash is not a drop-in replacement for your existing llama.cpp or vLLM setup. It’s a specialized, high-performance instrument for a specific task: fast, deterministic, memory-constrained generation with Qwen 3.5/3.6 models on NVIDIA GPUs.

Its importance is as a proof of concept. It demonstrates that the 2x speedup promised by speculative decoding research is achievable today on last-gen consumer hardware, but it requires abandoning general-purpose frameworks and writing custom, hardware-tuned code. It shows that the Qwen architectural performance improvements can be fully leveraged, but it takes work.

For developers and researchers willing to operate within its constraints, it offers a tantalizing glimpse of near-future local inference: not just running models, but running them fast. For the broader ecosystem, it’s a call to action. The algorithms (DFlash, DDTree) are published. The weights are available. The ggml foundation is solid. The next step is for these optimizations to migrate into the mainstream tools.

The age of simply throwing bigger models at bigger GPUs is ending. The age of sophisticated, algorithmic inference optimization, making the most of what we have, is just beginning. Luce DFlash is one of its first and most compelling manifestos.