Every decade, the software industry convinces itself it has invented the next universal glue. HTTP cracked open the network. REST untangled the monolith. Now, Starburst’s engineering leadership claims Model Context Protocol (MCP) belongs in that same pantheon, a “fork in the road” as historically significant as its predecessors.

The claim is bold, perhaps too bold. HTTP made information accessible. REST made systems modular. MCP, according to its evangelists, makes systems adaptive. But between the Linux Foundation governance announcements and the breathless LinkedIn posts, there’s a messier reality: a protocol whose specifications change monthly, whose original transport is already deprecated, and whose enterprise security model remains half-baked.

Is MCP the architectural reset we’ve been waiting for, or just another integration standard destined for the graveyard of forgotten acronyms?

What MCP Actually Is (When You Strip Away the Marketing)

MCP is, at its bones, a standardized way for Large Language Models to discover and invoke external capabilities. Born at Anthropic in late 2024 and now shepherded by the Agentic AI Foundation under the Linux Foundation, it defines how an AI agent negotiates access to tools, data sources, and business logic without hard-coded integrations.

The technical reality is less revolutionary than the “USB-C for AI” taglines suggest. MCP servers expose capabilities over HTTP (specifically the Streamable HTTP transport introduced in March 2025), authenticate via OAuth 2.1, and communicate via JSON-RPC. When an agent connects, the server delivers a manifest describing available tools: in effect, machine-readable API documentation that LLMs can parse to decide what to call.
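To make the discovery step concrete, here is a minimal sketch of the JSON-RPC exchange. It assumes the `tools/list` method and the `name`, `description`, and `inputSchema` fields described in the MCP specification. The `query_orders` tool is hypothetical, and the server response is hard-coded rather than fetched over HTTP.

```python
import json

def build_list_tools_request(request_id: int) -> str:
    """Serialize a JSON-RPC 2.0 request asking the server which tools it offers."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})

def parse_tools(response_text: str) -> dict:
    """Index the advertised tools by name so an agent can look them up."""
    response = json.loads(response_text)
    if "error" in response:
        raise RuntimeError(f"server returned an error: {response['error']}")
    return {tool["name"]: tool for tool in response["result"]["tools"]}

# Hypothetical server response, hard-coded here for illustration.
SAMPLE_RESPONSE = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"tools": [{
        "name": "query_orders",
        "description": "Look up orders by customer ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
    }]},
})

request = build_list_tools_request(1)
tools = parse_tools(SAMPLE_RESPONSE)
print(request)
print(sorted(tools))
```

The description and input schema are what the model reads when deciding whether a tool matches its objective, which is why vague or misleading descriptions degrade agent behavior.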

The shift from REST is subtle but real. In REST, you define endpoints, contracts, and flows upfront. Developers pre-wire integrations. MCP flips this: instead of “here’s the API, call it like this”, the model asks “here’s my objective, what capabilities are available?” It’s dynamic capability discovery versus static contract adherence.

As one engineer noted in recent community discussions, the protocol essentially allows an agent to interpret documentation in real-time, a task REST never fully solved. But that interpretation is only as good as the governance behind the data layer, a point we’ll return to.

The Velocity Problem: Moving Fast and Breaking Transports

Here’s where the HTTP comparison starts to crack. HTTP took years to standardize. REST architectural patterns stabilized over decades. MCP, by contrast, is moving at AI-market speed, and the whiplash is showing.

The protocol has already deprecated its original transport mechanism. The November 2025 specification introduced asynchronous operations, stateless server design, and server identity features, core architectural changes less than a year after release. SDKs for Python and TypeScript now see 97 million monthly downloads combined, suggesting explosive adoption, but the GitHub discussions reveal a community grappling with breaking changes.
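One defensive habit this churn encourages is checking the protocol revision explicitly at connection time. The sketch below assumes revisions are identified by date strings, as in published revisions such as `2024-11-05` and `2025-03-26`. The `SUPPORTED` set and the `negotiate` helper are hypothetical and not part of any SDK.

```python
# Revisions this hypothetical client has been tested against.
SUPPORTED = frozenset({"2025-03-26", "2025-06-18"})

class UnsupportedRevision(Exception):
    """Raised when the server's revision is not one the client was tested with."""

def negotiate(server_revision: str, supported=SUPPORTED) -> str:
    """Accept the server's revision only if this client was tested against it."""
    if server_revision in supported:
        return server_revision
    raise UnsupportedRevision(
        f"server speaks {server_revision!r}; client supports {sorted(supported)}"
    )

print(negotiate("2025-06-18"))
try:
    negotiate("2024-11-05")
except UnsupportedRevision as exc:
    print("refusing to connect:", exc)
```

Failing loudly at connection time is a design choice: it trades availability for predictability, which tends to matter more when behavior shifts between revisions.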

Enterprise teams are noticing. The specs change monthly. Features that worked in Claude Desktop last quarter break in Cursor this quarter. One developer reported that Codex increasingly ignores protocol instructions, basing decisions “mostly on tool names” rather than the rich context MCP provides. When models “upgrade”, previous behaviors that worked become “nightmares”, forcing constant recalibration of agent skills.

This isn’t the stability of HTTP. It’s the volatility of a bleeding-edge framework masquerading as infrastructure.

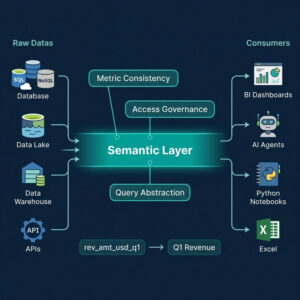

The Three-Layer Reality Check

| Layer | Component | Responsibility |
|---|---|---|
| Interaction | MCP | Discovery & capability exposure |
| Reasoning | Models & Agents | Inference, orchestration, decision-making |
| Data | Governed Context | Federated access, semantics, trust |

The uncomfortable truth? MCP doesn’t simplify your architecture. It moves complexity elsewhere, specifically into the data governance layer that most enterprises haven’t solved. Connect MCP to a fragmented data environment, and you don’t get intelligence. You get “partial answers, inconsistent results, and a system that looks more capable than it actually is.”

This aligns with broader concerns about the economics of enterprise AI adoption. The cost of integration isn’t disappearing; it’s shifting from API development to data engineering. Teams still spend months building connections that should be reusable, only now they’re debugging OAuth 2.1 flows and session management instead of REST endpoints.

Production MCP: Not as Simple as the Tutorials Claim

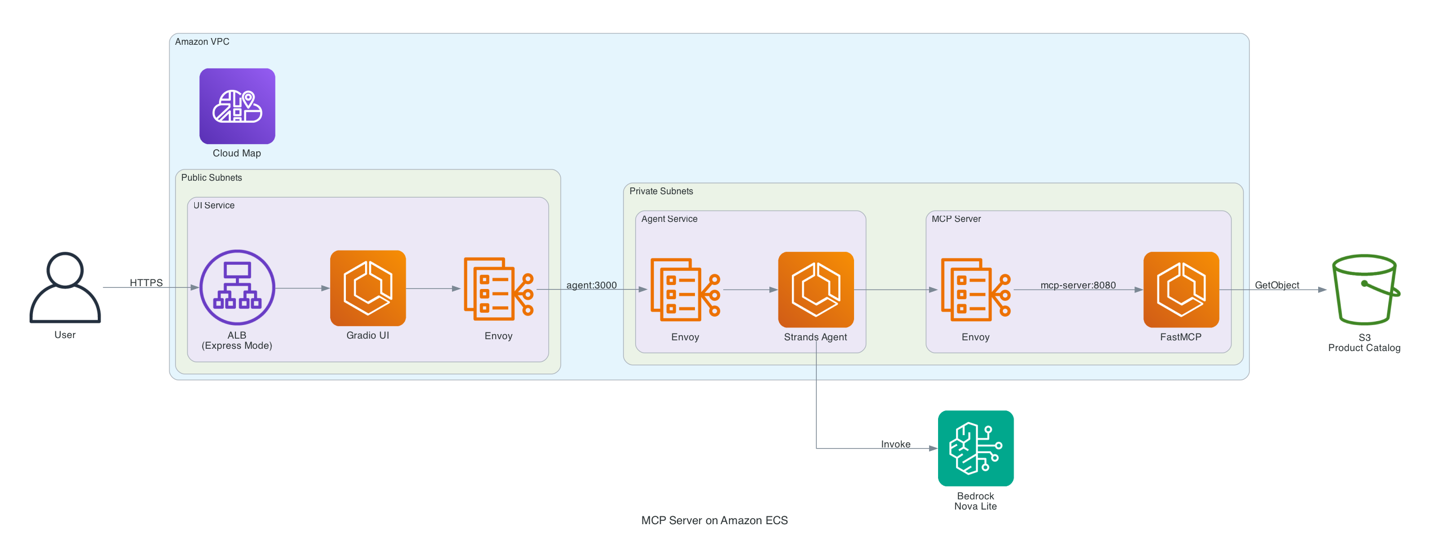

AWS’s recent deployment guide for MCP servers on ECS illustrates the production gap. What starts as a “simple” protocol requires a three-tier architecture (Gradio UI frontends, agent services running the Strands SDK, and FastMCP servers), plus Service Connect for discovery and Express Mode for load balancing.

The Streamable HTTP transport supports stateless operations, essential for horizontal scaling, but introduces complexity around session management via Mcp-Session-Id headers. For stateful workflows, you’re back to managing server-side context, load balancer affinity, and the same distributed systems headaches MCP was supposed to solve.
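A toy model of the affinity problem, assuming each replica keeps session state in local memory keyed by the `Mcp-Session-Id` header value. The `Replica` class and its status strings are hypothetical; a real server would handle this inside its HTTP framework.

```python
import uuid
from typing import Optional

class Replica:
    """One server instance that keeps session state in a dict."""

    def __init__(self, name: str, store: Optional[dict] = None):
        self.name = name
        # A private dict by default; pass a shared dict to model an external store.
        self.sessions = store if store is not None else {}

    def initialize(self) -> str:
        """Create a session and return the ID the client must echo back."""
        session_id = uuid.uuid4().hex
        self.sessions[session_id] = {"calls": 0}
        return session_id

    def handle(self, headers: dict) -> str:
        """Serve a request if this replica knows the session, else reject it."""
        session = self.sessions.get(headers.get("Mcp-Session-Id"))
        if session is None:
            return "404 unknown session"
        session["calls"] += 1
        return f"200 ok from {self.name} (call {session['calls']})"

# Local state: a request routed to a different replica loses its session.
a, b = Replica("a"), Replica("b")
sid = a.initialize()
print(a.handle({"Mcp-Session-Id": sid}))
print(b.handle({"Mcp-Session-Id": sid}))

# Shared state: any replica can serve the session, so no affinity is needed.
shared = {}
c, d = Replica("c", shared), Replica("d", shared)
sid2 = c.initialize()
print(d.handle({"Mcp-Session-Id": sid2}))
```

The two halves correspond to the two usual options: pin clients to a replica at the load balancer, or move session state into a store every replica can reach.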

Security, too, remains a work in progress. The AWS deployment has to bridge MCP’s OAuth 2.1 standard with AWS’s native IAM/SigV4 models, requiring defense-in-depth architectures that many organizations underestimate. The security implications of agentic models become critical when your AI assistant can query production databases through an MCP server. Supabase’s documentation explicitly warns against connecting MCP to production data, citing prompt injection risks where malicious user content could trick an LLM into exposing sensitive tables.
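One layer of a defense-in-depth setup can be sketched as a database tool that never accepts raw SQL from the model. Instead it takes structured arguments and validates them against an allowlist. The `ALLOWED` schema and the `build_query` helper are hypothetical, and a guard like this complements read-only database credentials rather than replacing them.

```python
# Hypothetical allowlist: the only tables and columns the agent may read.
ALLOWED = {
    "orders": {"id", "status", "total"},
    "products": {"id", "name", "price"},
}

def build_query(table: str, columns: list, limit: int = 100) -> str:
    """Build a SELECT from validated parts; the model never supplies raw SQL."""
    if table not in ALLOWED:
        raise ValueError(f"table {table!r} is not exposed to the agent")
    if not columns or any(col not in ALLOWED[table] for col in columns):
        raise ValueError(f"columns must be a non-empty subset of the allowlist: {columns!r}")
    if type(limit) is not int or not 1 <= limit <= 1000:
        raise ValueError("limit must be an integer between 1 and 1000")
    # Safe to interpolate: every identifier was matched against the allowlist.
    return f"SELECT {', '.join(columns)} FROM {table} LIMIT {limit}"

print(build_query("orders", ["id", "total"], limit=10))
```

Because identifiers are matched exactly, injected text such as a column named `id; DROP TABLE orders` is rejected before any SQL is assembled.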

The REST Comparison Falls Apart Under Scrutiny

REST succeeded because it imposed constraints that enabled scale: statelessness, uniform interfaces, cacheability. MCP introduces adaptive capability discovery, but trades away REST’s simplicity for flexibility that may not be necessary.

Consider how unreliable long-context reasoning still is in modern LLMs. If your model can’t reliably process 60,000 tokens of codebase context, does dynamic tool discovery matter? Or would you be better off with small, efficient agent models and explicit, well-tested API contracts?

REST solved a composition problem: how do we make services talk? MCP solves an autonomy problem: how do we let AI decide what to talk to? But autonomy without governance is just chaos with better branding. The “revolution” of MCP assumes that dynamic discovery is universally preferable to explicit contracts, an assumption that breaks down in regulated industries such as financial services and healthcare, where audit trails and deterministic behavior are non-negotiable.
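Where audit trails are mandatory, one partial mitigation is to record every tool invocation together with its outcome. The sketch below assumes tools are plain Python callables invoked with keyword arguments. The names `audited`, `AUDIT_LOG`, and `refund_order` are hypothetical, and a real deployment would write to append-only storage rather than an in-memory list.

```python
import functools
import json
import time

AUDIT_LOG = []  # stand-in for append-only storage

def audited(tool):
    """Wrap a tool so each call is logged with its arguments and outcome."""
    @functools.wraps(tool)
    def wrapper(**kwargs):
        entry = {"tool": tool.__name__, "args": kwargs, "ts": time.time()}
        try:
            result = tool(**kwargs)
            entry["outcome"] = "ok"
            return result
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            raise
        finally:
            # Runs on both success and failure, so no call goes unrecorded.
            AUDIT_LOG.append(json.dumps(entry, sort_keys=True))
    return wrapper

@audited
def refund_order(order_id: str, amount: float) -> str:
    """Hypothetical business action exposed to an agent."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return f"refunded {amount:.2f} for {order_id}"

print(refund_order(order_id="A-17", amount=12.5))
print(AUDIT_LOG[-1])
```

Logging makes agent behavior reviewable after the fact. It does not make the agent's choice of tool deterministic, which is the harder requirement in regulated settings.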

Where MCP Actually Wins (And It’s Not Sexy)

Despite the architectural hand-waving, MCP delivers real value in specific, narrow domains. For data engineering teams drowning in bespoke ETL pipelines, MCP servers offer standardized connectors that reduce integration time by a factor of ten, according to early enterprise reports. Block, Apollo, and major financial services firms are deploying MCP not because it’s philosophically pure, but because it cuts the glue code.

MCP fits best when:

- Tool discovery needs to happen at runtime (e.g., multi-tenant SaaS where capabilities vary by customer)

- Semantic understanding of tools matters more than deterministic execution

- Development velocity outweighs operational stability (prototyping, internal tools)
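The multi-tenant case in the first bullet can be sketched as a filter applied whenever a client lists tools. The plan names, tool names, and `tools_for` helper are all hypothetical.

```python
# Every tool the hypothetical server could expose, with its description.
ALL_TOOLS = {
    "search_docs": "Full-text search over the tenant's documents.",
    "export_csv": "Export query results as CSV.",
    "run_forecast": "Run a demand forecast on sales data.",
}

# Which tools each hypothetical subscription plan unlocks.
PLAN_TOOLS = {
    "free": {"search_docs"},
    "pro": {"search_docs", "export_csv"},
    "enterprise": set(ALL_TOOLS),
}

def tools_for(plan: str) -> list:
    """Return the tool descriptors a tenant on this plan should see at runtime."""
    allowed = PLAN_TOOLS.get(plan, set())  # unknown plans see nothing
    return [
        {"name": name, "description": desc}
        for name, desc in ALL_TOOLS.items()
        if name in allowed
    ]

for plan in ("free", "pro"):
    print(plan, [tool["name"] for tool in tools_for(plan)])
```

With a static contract, each plan would need its own client configuration. Here the agent simply sees a different tool list per tenant and adapts.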

But for teams scaling extreme data infrastructure or absorbing disruptive shifts in frontier model costs, MCP remains a secondary concern. CERN isn’t rebuilding its 40,000-exabyte data pipeline around MCP. They’re solving physics.

The Pragmatic Path Forward

MCP is not the new HTTP. It’s not even the new REST. It’s a useful intermediate step, a standardized way to expose capabilities to AI agents that will likely evolve into something else entirely within 18 months.

For infrastructure teams, the play is clear: treat MCP as an integration layer, not an architecture. Deploy it where the value is obvious (internal tooling, dev environments, low-stakes automation) and the data is at least somewhat reliable. Don’t rebuild your governance strategy around a protocol that deprecates its own transport mechanisms.

The companies winning with AI right now aren’t betting on protocol purity. They’re solving data access, governance, and trust, the hard problems MCP explicitly punts to the “Data Plane” layer.

HTTP changed the world because it was simple enough to implement in a weekend and robust enough to run for decades. MCP, for all its promise, still changes specs faster than most enterprises revise their quarterly plans. That’s not a revolution. That’s just another integration standard in a crowded market, hoping this time the hype will stick.