The 24GB VRAM Hunger Games: Qwen 3.5 vs Gemma 4 in Long-Context Hell

Enter the two heavyweights currently dominating the 24GB VRAM tier: Qwen 3.5 27B and Gemma 4 31B (and its MoE sibling, the 26B). Both claim state-of-the-art status. Both fit on consumer hardware. But when you feed them 50,000+ tokens of complex source material and ask them to cross-reference obscure details, they behave radically differently. One becomes a thorough but occasionally overconfident research assistant. The other transforms into a slower, more contemplative writing partner that sometimes misses the footnotes but nails the vibe.

Community testing on RTX 3090/4090 hardware reveals a split personality in the current local LLM landscape. And if you’re betting your workflow on benchmark scores alone, you’re setting yourself up for disappointment.

The Setup: Why 24GB Is the New Battleground

For years, local LLM enthusiasts have been trapped in an 8GB VRAM ghetto, squinting at 4-bit quantized 7B models and pretending they didn’t see the quality degradation. The release of Qwen 3.5 and Gemma 4 changed the equation. Suddenly, 24GB cards like the RTX 3090 Ti or 4090 aren’t just “entry-level enthusiast” hardware; they’re legitimate workstations for serious long-context analysis.

The specs look deceptively similar on paper. Qwen 3.5 offers a theoretical 262K-token context window across all sizes, extensible to roughly 1M tokens. Gemma 4 tops out at 256K on its larger models (26B/31B), with edge variants limited to 128K. But theoretical context windows mean nothing if the model starts generating confabulated nonsense at 90K tokens or slows to a crawl that makes Windows Vista look responsive.

Real-world deployment tells a more complex story. On a 32GB RTX PRO 4500, testers report squeezing 115K tokens of context out of Qwen 3.5 27B because its dense architecture occupies less VRAM than Gemma’s MoE overhead. That extra headroom isn’t just academic: it determines whether you can process that massive PDF dump in one shot or have to chunk it and lose coherence.
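Context headroom is mostly a KV-cache question: once the weights are loaded, every additional token of context costs a fixed slice of VRAM. The sketch below is a back-of-the-envelope estimate that assumes plain full attention on every layer. The layer count, KV-head count, and head dimension are hypothetical placeholders, not the published configuration of either model, and architectures that mix in sliding-window or linear-attention layers cache less per token than this formula suggests.

```python
def kv_cache_bytes(context_tokens: int, num_layers: int, num_kv_heads: int,
                   head_dim: int, bytes_per_elem: float = 2.0) -> float:
    """KV-cache size in bytes, assuming full attention on every layer.

    The leading 2 accounts for storing both a key and a value vector
    per KV head, per layer, per token. bytes_per_elem=2.0 is an FP16/BF16 cache.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_elem


def max_context_tokens(free_vram_gib: float, num_layers: int, num_kv_heads: int,
                       head_dim: int, bytes_per_elem: float = 2.0) -> int:
    """Largest context whose KV cache fits in the VRAM left after loading weights."""
    per_token = kv_cache_bytes(1, num_layers, num_kv_heads, head_dim, bytes_per_elem)
    return int(free_vram_gib * 1024**3 // per_token)


# Hypothetical config, NOT the real architecture of Qwen 3.5 or Gemma 4.
LAYERS, KV_HEADS, HEAD_DIM = 64, 8, 128

print(max_context_tokens(8, LAYERS, KV_HEADS, HEAD_DIM))       # FP16 cache -> 32768
print(max_context_tokens(8, LAYERS, KV_HEADS, HEAD_DIM, 1.0))  # 8-bit cache -> 65536
```

With 8 GiB left over after the weights, this made-up config fits about 32K tokens with an FP16 cache and twice that with an 8-bit cache, which is why a few gigabytes of spare VRAM can decide between one-shot processing and chunking.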

The Torture Test: 60K Tokens of Lore and Code

Community benchmarks have moved beyond sanitized leaderboards. The new standard is brutal: dump 50,000 to 100,000 tokens of complex material (legal documents, game lore repositories, multi-file codebases) and ask specific questions that require cross-referencing details buried in the middle.
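If you want to run a rough version of this test yourself, the core is simple: bury a known fact at a chosen depth inside a long pile of material, then check whether the model’s answer contains it. This is a minimal sketch, not the testers’ actual harness; the filler text, the needle, and substring-based scoring are all assumptions, and the model call itself is left to you.

```python
import random


def build_haystack(filler: list[str], needle: str, depth: float = 0.5,
                   seed: int = 0) -> str:
    """Shuffle filler paragraphs and bury `needle` at a relative depth.

    depth=0.0 puts it first, depth=1.0 last, and 0.5 in the middle,
    the region these long-context tests deliberately probe.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    paragraphs = list(filler)
    rng.shuffle(paragraphs)
    paragraphs.insert(round(depth * len(paragraphs)), needle)
    return "\n\n".join(paragraphs)


def score_answer(answer: str, expected_facts: list[str]) -> float:
    """Fraction of expected facts found (case-insensitively) in the answer."""
    answer_lower = answer.lower()
    hits = sum(fact.lower() in answer_lower for fact in expected_facts)
    return hits / len(expected_facts)


# Hypothetical usage: swap in real documents and your own local model call.
filler = [f"Filler paragraph {i} about unrelated lore." for i in range(2000)]
haystack = build_haystack(filler, "The Ember Gate was sealed in the year 412.")
prompt = haystack + "\n\nIn what year was the Ember Gate sealed?"
print(score_answer("It was sealed in 412.", ["412"]))
```

Substring scoring is deliberately crude (it would also accept “4120”), so treat it as a smoke test and read the answers yourself for the cross-referencing questions that matter.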

The results expose the architectural differences between these models. Qwen 3.5 27B approaches long-context tasks like a diligent grad student with a highlighter, throwing references at you until the context window screams. It maintains coherence across 20,000-token outputs (yes, you can ask it to write a 20K analysis and it will actually do it), and it cites obscure details that Gemma 4 sometimes filters out as irrelevant.

But that thoroughness comes at a cost. At around 90K tokens, Qwen starts hallucinating minor details, confidently citing page numbers that don’t exist or slightly misattributing quotes. It’s the “book report” problem: lots of references, not always consolidated into insight.
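Two of these failure modes, nonexistent page numbers and fabricated quotes, are cheap to catch after the fact. The heuristic below assumes citations look like “page 12” or “p. 12” and quotes use straight double quotes; both patterns are assumptions about your prompts and documents, not something either model guarantees.

```python
import re

# Matches simple forms such as "page 12", "Page 12", "p. 12", "p.12".
PAGE_CITATION = re.compile(r"\bp(?:age|\.)\s*(\d+)", re.IGNORECASE)
# Straight-double-quoted spans of at least 10 characters.
QUOTE = re.compile(r'"([^"]{10,})"')


def phantom_pages(answer: str, num_pages: int) -> list[int]:
    """Cited page numbers that fall outside the document's 1..num_pages range."""
    cited = {int(n) for n in PAGE_CITATION.findall(answer)}
    return sorted(p for p in cited if not 1 <= p <= num_pages)


def unsupported_quotes(answer: str, source: str) -> list[str]:
    """Quoted spans in the answer that do not appear verbatim in the source."""
    return [q for q in QUOTE.findall(answer) if q not in source]


answer = 'As shown on p. 140, the king said "the dragon sleeps forever".'
print(phantom_pages(answer, num_pages=120))
print(unsupported_quotes(answer, source='The king said "we ride at dawn" loudly.'))
```

Note that this only flags quotes that do not exist; a real quote attributed to the wrong speaker still needs a human read.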

Gemma 4 31B, meanwhile, operates more like a critic writing a review column. It’s slower: at high context (70K-100K tokens) it initially crawled along at 0.6 to 3 tokens per second, until Unsloth’s optimization updates more than doubled the speed, bringing the floor to around 7 tok/sec. But it hallucinates less often and seems to “get” the material at a deeper level, pulling out poignant connections that Qwen misses while drowning in its own thoroughness.
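To see why those numbers matter, convert them to wall-clock time. This sketch applies the decode rates reported above to a 10K-token analysis; it assumes a steady rate and ignores prompt processing, which adds its own delay on a 70K-100K-token prompt.

```python
def generation_minutes(output_tokens: int, tokens_per_second: float) -> float:
    """Wall-clock minutes to decode `output_tokens` at a steady rate.

    Ignores prompt processing (prefill), so real runs take longer.
    """
    return output_tokens / tokens_per_second / 60


# The decode rates reported above, applied to a 10K-token analysis.
for rate in (0.6, 3.0, 7.0):
    print(f"{rate:>4} tok/s -> {generation_minutes(10_000, rate):5.0f} min")
```

At 0.6 tok/sec that analysis takes roughly 4.6 hours; at 3 tok/sec about 56 minutes; at 7 tok/sec about 24 minutes.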

One tester running 18 real business use cases on an RTX 4090 found Gemma 4 26B winning 13 to 5 against Qwen 3.5 27B. The deciding factor? Intent understanding and cleaner structured outputs for agentic workflows, even if Qwen technically referenced more source material.

The Speed vs. Substance Tradeoff

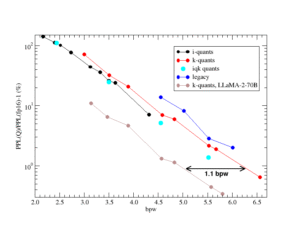

If you’re optimizing for raw throughput, Qwen 3.5 is the clear winner. Even at higher-precision quantizations (Q5/Q6_K_XL), it maintains significantly faster inference than Gemma 4 at equivalent quality settings. For coding workflows where you’re iterating rapidly, that latency matters. One developer found the sweet spot for Qwen at around 8,192 output tokens for multi-file edits on llama.cpp; any higher and the latency becomes unbearable for interactive use.
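On llama.cpp’s bundled HTTP server, that cap is the `n_predict` field of a `/completion` request (the CLI equivalent is `-n` / `--n-predict`). The sketch below only builds the request; the host and port are the server’s usual local defaults and are an assumption about your setup, not the developer’s reported configuration.

```python
import json
import urllib.request

# The output cap reported above as the interactive sweet spot.
MAX_OUTPUT_TOKENS = 8192


def build_completion_request(prompt: str, host: str = "http://127.0.0.1:8080",
                             n_predict: int = MAX_OUTPUT_TOKENS) -> urllib.request.Request:
    """Build a POST to llama-server's /completion endpoint with a capped output length."""
    payload = {"prompt": prompt, "n_predict": n_predict}
    return urllib.request.Request(
        f"{host}/completion",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# To send it against a running server:
# with urllib.request.urlopen(build_completion_request("Summarize the diff.")) as resp:
#     print(json.load(resp)["content"])
```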

Gemma 4 requires more patience. Initial reports of 0.6 tok/sec at high context made it virtually unusable for real-time work, though post-update speeds of 7+ tok/sec have made it viable for background processing. The model also needs more explicit prompting to generate long outputs: where Qwen will ramble for 20K tokens unprompted, Gemma 4 needs explicit instructions like “reason longer and provide a 10K token analysis” to stretch its legs.
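If you ask for a long answer, make sure the server’s output cap does not silently cut it short. This minimal sketch appends the instruction wording quoted above and sets `n_predict` (a llama.cpp server `/completion` field) above the requested length; the 1.25 headroom factor is an arbitrary assumption, not a tuned value.

```python
def long_output_request(task: str, target_tokens: int = 10_000,
                        headroom: float = 1.25) -> dict:
    """Build a llama-server /completion payload that explicitly asks for a long answer.

    n_predict is set above the requested length so the output cap
    does not truncate the analysis the prompt asks for.
    """
    prompt = (
        f"{task}\n\n"
        f"Reason longer and provide a {target_tokens // 1000}K token analysis."
    )
    return {"prompt": prompt, "n_predict": int(target_tokens * headroom)}


payload = long_output_request("Analyze how the three factions' histories intersect.")
print(payload["n_predict"])
```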

But Gemma 4’s MoE architecture (activating only 4B parameters per token on the 26B variant) offers a different efficiency profile. While total VRAM requirements remain substantial (every expert’s weights must stay loaded), the per-token compute can be lighter than Qwen’s dense 27B approach. Comparative efficiency breakdowns suggest we’re entering an era where parameter count matters less than activation patterns, and Gemma 4’s routing mechanism seems to preserve coherence better at extreme context lengths, even if it sacrifices some of Qwen’s raw reference recall.
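The “4B active out of 26B” idea comes from routing: a small router scores every expert for each token, and only the top few actually run. The sketch shows generic top-k softmax gating as used in many MoE transformers; it is not Gemma 4’s actual router, and the expert count and logits are invented for illustration.

```python
import math


def top_k_route(router_logits: list[float], k: int) -> dict[int, float]:
    """Generic top-k MoE routing: pick k experts, softmax over their logits.

    Only the selected experts run for this token; their outputs are mixed
    with the returned weights. The other experts are skipped for this token
    but still occupy memory.
    """
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i],
                 reverse=True)[:k]
    peak = router_logits[top[0]]  # subtract the max for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of the model's weights used in one token's forward pass."""
    return active_params_b / total_params_b


weights = top_k_route([0.1, 2.0, -1.0, 1.0, 0.5, -0.3, 1.5, 0.0], k=2)
print(weights)                          # experts 1 and 6 selected
print(f"{active_fraction(4, 26):.0%}")  # ~4B of 26B parameters active per token
```

This is the split the paragraph describes: compute per token scales with the active share (about 15% here), while memory scales with the full 26B.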

The Personality Split: Reference Machine vs. Cowriter

The divergence becomes philosophical when you analyze output quality. Qwen 3.5 is the technician’s model. It handles complex, multi-step instructions with ease: “analyze this codebase, identify security vulnerabilities, and suggest patches ranked by CVSS score” is the kind of structured task it eats for lunch. Its technical reasoning is sharper, and for code generation it remains the local standard.

Gemma 4 is the writer’s model. Its outputs are more pleasurable to read, with a narrative voice that feels less like a Wikipedia entry and more like a thoughtful analysis. It makes connections between ideas that aren’t explicitly stated in the source material, functioning less like a search engine and more like a brainstorming partner. When processing game lore or narrative content, it doesn’t just cite the lore; it digests it, bringing its own interpretive ideas to the table.

This creates a workflow reality that neither benchmark nor marketing material captures: you probably need both. Qwen 3.5 for the heavy technical lifting, code analysis, and tasks requiring exhaustive reference checking. Gemma 4 for creative analysis, writing assistance, and tasks where understanding intent matters more than citing chapter and verse.

The Multilingual and Licensing Reality Check

For teams building global applications, Qwen 3.5 holds a structural advantage with support for 201 languages and dialects, compared to Gemma 4’s English-centric optimization. Comparisons of alternative models for agentic workflows suggest that multilingual capability is becoming a key differentiator in the local LLM space, and Qwen’s breadth here is unmatched.

Both models now ship under Apache 2.0 licenses, a significant shift for Gemma 4, which finally ditched Google’s restrictive custom terms. This means commercial deployment, fine-tuning, and redistribution are fair game for both, removing what was previously a major friction point in the Gemma ecosystem.

The Verdict: Choose Your Fighter

If forced to pick one model for a 24GB VRAM setup, Qwen 3.5 27B wins on versatility and speed. It’s the safer default for technical tasks, coding, and situations where you need to trust that the model isn’t ignoring relevant details. Its ability to maintain coherence across 20K+ token outputs makes it uniquely powerful for document generation and complex analysis.

But Gemma 4 31B (or the 26B MoE variant) offers something Qwen doesn’t: a genuinely different cognitive style. When you need insight over information, when you want a model that punches above its weight on creative synthesis rather than just recall, Gemma 4 delivers. Just be prepared to wait for it, and to prompt it explicitly to unlock its full output length.

The real power move? Run both. Use Qwen for the initial technical pass and reference extraction, then feed its output to Gemma for synthesis and narrative refinement. In the local LLM wars, the winner isn’t the model with the highest benchmark score; it’s the workflow that knows when to switch between them.
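Here is a minimal sketch of that two-pass workflow. The `generate` callable stands in for whatever local inference call you use, and the model names and prompt wording are hypothetical placeholders rather than a tested recipe.

```python
from typing import Callable

# (model_name, prompt) -> completion; plug in your own local inference call.
Generate = Callable[[str, str], str]


def two_pass(document: str, question: str, generate: Generate,
             extractor: str = "qwen3.5-27b",
             synthesizer: str = "gemma-4-31b") -> str:
    """Pass 1 extracts references with one model; pass 2 synthesizes with another."""
    extraction_prompt = (
        f"{document}\n\nList every passage relevant to: {question}\n"
        "Quote each passage verbatim."
    )
    references = generate(extractor, extraction_prompt)

    # The second model sees only the extracted notes, not the full document.
    synthesis_prompt = (
        f"Question: {question}\n\nReference notes:\n{references}\n\n"
        "Write a coherent analysis grounded only in these notes."
    )
    return generate(synthesizer, synthesis_prompt)
```

One side effect of this design: the synthesis model gets a short prompt made of extracted notes instead of the full 60K-token dump, which should also soften Gemma 4’s slowdown at high context.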