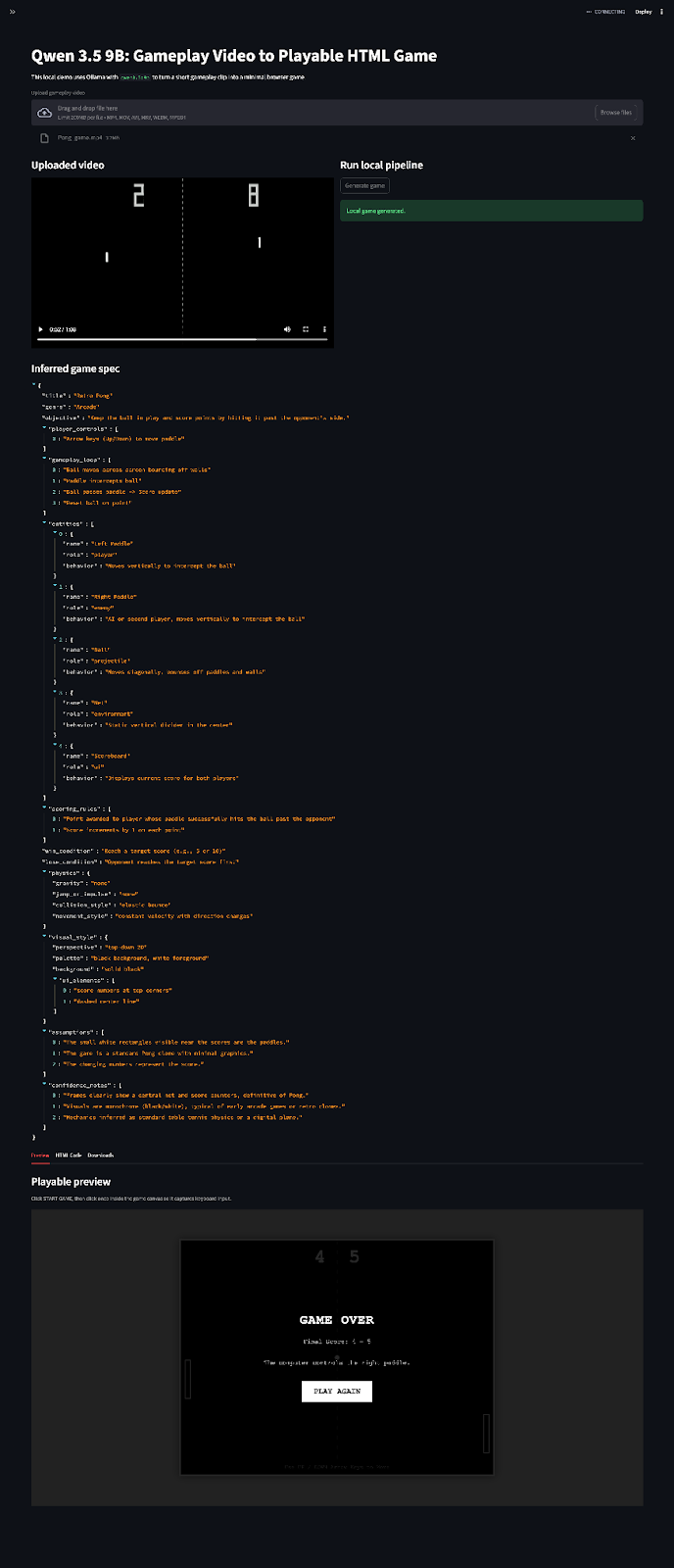

The local LLM community has spent years optimizing for the opposite of what Qwen3.5 demands. We’ve spent countless hours trimming context windows, compressing prompts, and celebrating 14-token system messages that leave more room for user queries. Then Alibaba dropped a model family that treats minimal context like a personal insult.

Qwen3.5 is a working dog. That’s not my metaphor, it’s the consensus from developers who’ve watched this model family stumble around aimlessly when starved of context, only to transform into a focused execution machine once it is fed 3,000+ tokens of preamble. The implications are brutal for anyone running quantized models on consumer hardware: your optimization strategy might be exactly what’s killing your performance.

The 3K Token Floor: Where “Efficient” Dies

Here’s the uncomfortable truth that’s been emerging from the LocalLLaMA community: the Qwen3.5-27B dense model doesn’t even become remotely useful below 3K tokens of context. At 5K tokens, it finally stops “thinking itself raw” and starts producing coherent outputs. In practice this behaves less like a suggestion and more like a hard requirement, one that breaks with the conventional wisdom about local deployment.

The model hates small talk. Your 14-token “You are a helpful assistant” system prompt? That’s furniture-chewing territory. These models were trained agentic-first, meaning they expect to know their environment, available tools, and operational modality (architect, code reviewer, debugger) before they generate a single token. Without that grounding, you’re not getting a helpful assistant, you’re getting a confused retrieval hound chasing its own tail.

Why Your RAG Pipeline Is Suddenly Inadequate

Most local LLM workflows are built on the assumption that smaller context windows are better. We chunk documents aggressively, summarize history ruthlessly, and pride ourselves on “efficient” 2K context windows that leave room for batch processing. Qwen3.5 exposes this as cargo-cult optimization.

Developers report that the 9B variant, often touted as the sweet spot for consumer GPUs with 16GB VRAM, only shines when you give it the full context window treatment. One developer running the 122B MoE variant found success with a strict 600-token system prompt limit, but that’s the exception that proves the rule: the model prefers a high-level, open-world tool environment over MCP-style tool mappings of a narrow business domain.

What Qwen3.5 Actually Expects:

- What tools are available in its environment

- What modality it’s operating in (code generation vs. architectural review vs. debugging)

- The full scope of the task before it starts reasoning

Skip this, and you get the “overthinking” behavior that’s been flooding forums, which is really just the model flailing for context until it finds something to grab onto.
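To make those three expectations concrete, here is a minimal sketch of a system prompt builder that states the modality, the tool manifest, and the task scope up front. The `Tool` and `AgentContext` types, the tool names, and the prompt layout are all illustrative assumptions, not a format Qwen3.5 is documented to require.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str          # hypothetical tool identifier
    description: str   # one-line summary the model can read


@dataclass
class AgentContext:
    modality: str                      # e.g. "code reviewer", "debugger"
    task_scope: str                    # the full task, stated before any reasoning
    tools: list[Tool] = field(default_factory=list)


def build_system_prompt(ctx: AgentContext) -> str:
    """Render modality, available tools, and task scope into one system prompt."""
    tool_lines = [f"- {t.name}: {t.description}" for t in ctx.tools]
    if not tool_lines:
        tool_lines = ["- (no tools available)"]
    return "\n".join([
        f"Role: you are operating as a {ctx.modality}.",
        "",
        "Available tools:",
        *tool_lines,
        "",
        "Task scope:",
        ctx.task_scope,
    ])


ctx = AgentContext(
    modality="code reviewer",
    task_scope="Review the attached diff for concurrency bugs and report findings as a list.",
    tools=[
        Tool("read_file", "Return the contents of a file in the repository."),
        Tool("run_tests", "Run the test suite and return failures."),
    ],
)
prompt = build_system_prompt(ctx)
print(prompt)
```

The point of the structure is ordering: the model learns what it is, what it can call, and what the whole job is before the first user message arrives.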

The MoE Trap: When 35B Parameters Behave Like 3B

If the context requirements weren’t brutal enough, the Mixture-of-Experts variants add another layer of complexity. The Qwen3.5-35B-A3B (35B total params, 3B active) has become notorious for struggling with information overload compared to its dense counterparts.

The technical reason is stark: the attention tensors on the 35B MoE are so small that developers report doing double-takes when inspecting the weights. With 35B parameters of stored world knowledge but only about 3B active per token, the model faces trade-offs that dense architectures avoid. When you combine this with the context hunger, you get a model that either has too little information (small context) or too much to process effectively (large context with MoE routing confusion).

Hardware Reality: Quantization vs. Context Wars

This creates a deployment paradox. Comparisons of Qwen3.5’s efficiency and edge AI capabilities suggest it can outperform closed-source models, but only if you break your quantization habits.

Standard Q4_K_M quantization reduces VRAM usage by roughly 60%, which is necessary when you’re suddenly allocating 8K+ tokens for system prompts and tool definitions. But aggressive quantization, pushing to Q2_K or other ultra-low bit widths to save memory, collapses the model’s ability to utilize that context effectively. The attention mechanisms need precision to handle the long-range dependencies that make the 3K+ context window useful.
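The memory side of this trade-off can be estimated with simple arithmetic. The sketch below computes KV cache size for one sequence, assuming standard grouped-query attention in every layer and a 16-bit cache. The layer count, KV head count, and head dimension are hypothetical placeholders, not values from a Qwen3.5 config, and a hybrid attention design would change the numbers.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int, head_dim: int,
                   bytes_per_value: int = 2) -> int:
    """KV cache for one sequence: keys plus values, per layer, per token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical architecture numbers, NOT read from a real Qwen3.5 config.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

GIB = 1024 ** 3
cache_8k = kv_cache_bytes(8192, LAYERS, KV_HEADS, HEAD_DIM)
cache_32k = kv_cache_bytes(32768, LAYERS, KV_HEADS, HEAD_DIM)

print(f"KV cache @ 8K tokens:  {cache_8k / GIB:.2f} GiB")   # 1.00 GiB
print(f"KV cache @ 32K tokens: {cache_32k / GIB:.2f} GiB")  # 4.00 GiB
```

Under these assumptions the cache grows linearly with context, and quantizing the weights alone does not shrink it, so a long mandatory prompt eats into whatever VRAM the weight quantization freed up.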

Mobile Deployment Benchmarks (Samsung S25 Ultra, 12GB RAM)

| Model | Tokens per Second | Time to First Token | Usability Rating |
| --- | --- | --- | --- |
| Qwen3.5-4B Q4_K_M | 5.58 t/s | 2707 ms | Usable but hungry |

The reasoning loop gets weird when context fills up, like the aforementioned dog chasing its own tail without clear job instructions.

What Actually Works: The New Deployment Playbook

Strict System Prompt Budgets

While the model wants 3K+ tokens of context, that doesn’t mean 3K of fluffy preamble. Developers are seeing success with dense, 600-token system prompts that pack tool definitions, modality instructions, and output schemas into tight spaces. It’s about information density, not length, though length still matters.
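A budget like this is easier to hold if you measure it. The following is a rough sketch that estimates tokens per prompt section and checks the total against a 600-token limit. The four-characters-per-token heuristic is an assumption, not the Qwen tokenizer, and the section names and contents are made up for illustration; swap in a real tokenizer for accurate counts.

```python
def estimate_tokens(text: str) -> int:
    """Crude estimate of roughly 4 characters per token (not the real tokenizer)."""
    return max(1, len(text) // 4)


def budget_report(sections: dict[str, str], budget: int = 600) -> dict:
    """Estimate tokens per section and flag whether the total fits the budget."""
    per_section = {name: estimate_tokens(body) for name, body in sections.items()}
    total = sum(per_section.values())
    return {"per_section": per_section, "total": total, "fits": total <= budget}


# Hypothetical prompt sections: tool definitions, modality, output schema.
sections = {
    "tools": "read_file(path) -> str\nrun_tests() -> list[str]",
    "modality": "You are acting as a debugger. Reproduce before you fix.",
    "output_schema": '{"finding": str, "file": str, "line": int}',
}
report = budget_report(sections)
print(report)
```

The per-section breakdown shows where the budget goes, which makes it obvious whether tool definitions or boilerplate instructions are crowding out the rest.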

Full Context Window Utilization

With Unsloth quants, the 9B model can fit the full 32K context window on 24GB cards. This isn’t just possible, it’s necessary for complex tasks. If you’re splitting your RAG chunks across multiple inference calls, you’re doing it wrong with this architecture.
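One way to act on this is to pack as many retrieved chunks as fit into a single call instead of fanning them out. Here is a minimal sketch of greedy packing under a context budget. The function name, the reserved-token figure, and the default character-based token estimate are assumptions for illustration.

```python
def pack_chunks(chunks: list[str], context_tokens: int, reserved_tokens: int,
                count_tokens=lambda s: max(1, len(s) // 4)) -> list[str]:
    """Keep relevance-ranked chunks, in order, until the context budget is spent.

    reserved_tokens covers the system prompt, the user query, and room for the
    model's answer. Packing stops at the first chunk that does not fit, so the
    ranking order is preserved.
    """
    budget = context_tokens - reserved_tokens
    packed, used = [], 0
    for chunk in chunks:  # assumed already sorted by relevance
        cost = count_tokens(chunk)
        if used + cost > budget:
            break
        packed.append(chunk)
        used += cost
    return packed


# Three dummy chunks of about 1,000 estimated tokens each.
chunks = ["a" * 4000, "b" * 4000, "c" * 4000]
single_call = pack_chunks(chunks, context_tokens=32768, reserved_tokens=4096)
print(len(single_call))  # 3
```

With a 32K window all three chunks land in one request; shrink `context_tokens` to 3000 with 500 reserved and only two survive, which is the splitting pressure the paragraph above warns about.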

vLLM Configuration Adjustments

When deploying via vLLM (the recommended path for multi-GPU setups), you’ll need to set the context length and memory allocation explicitly. The --max-model-len 32768 flag becomes mandatory, not optional, and because --gpu-memory-utilization already defaults to 0.9, you may need to push it higher to accommodate the KV cache bloat from those mandatory long prompts.
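As a sketch of what that launch looks like, the helper below assembles a `vllm serve` command line with the two flags mentioned above. The model id is a placeholder that should be replaced with the repository you actually use, and the helper itself is illustrative rather than part of vLLM.

```python
import shlex


def vllm_serve_command(model: str, max_model_len: int = 32768,
                       gpu_memory_utilization: float = 0.9) -> list[str]:
    """Build the argv for `vllm serve` with explicit context and memory settings."""
    if not 0.0 < gpu_memory_utilization <= 1.0:
        raise ValueError("gpu_memory_utilization must be in (0, 1]")
    return [
        "vllm", "serve", model,
        "--max-model-len", str(max_model_len),
        "--gpu-memory-utilization", str(gpu_memory_utilization),
    ]


# "Qwen/Qwen3.5-9B" is a placeholder model id, not a verified repository name.
cmd = vllm_serve_command("Qwen/Qwen3.5-9B")
print(shlex.join(cmd))
```

Returning a list rather than a string keeps the command safe to hand to `subprocess.run` without shell quoting issues.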

Temperature and Top-K Tuning

The model expects precision. Community findings suggest keeping temperature around 0.6 with top_k at 20 and top_p at 0.95, relatively conservative settings that prevent the model from hallucinating when it finally has enough context to work with.
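Those settings map onto a chat request roughly as follows. This sketch builds the JSON body for an OpenAI-compatible chat endpoint. Note that `top_k` is not part of the OpenAI schema, so the example assumes a server that accepts it as an extra field, which is worth verifying for your backend. The model name and messages are placeholders.

```python
import json


def chat_payload(model: str, system_prompt: str, user_message: str,
                 temperature: float = 0.6, top_p: float = 0.95,
                 top_k: int = 20) -> dict:
    """Build a chat-completions request body with conservative sampling."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
        "temperature": temperature,
        "top_p": top_p,
        "top_k": top_k,  # extension field; assumed to be accepted by the server
    }


payload = chat_payload(
    model="qwen3.5-9b",  # placeholder served-model name
    system_prompt="Role: you are operating as a debugger.",
    user_message="The test suite hangs on shutdown. Find out why.",
)
print(json.dumps(payload, indent=2))
```

Keeping the sampling defaults in one function means every call site gets the same conservative settings unless it overrides them on purpose.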

The Distillation Dilemma

This context hunger has implications for distilling models for deployment on consumer hardware. If you’re trying to compress Qwen3.5’s capabilities into smaller student models, you need to preserve that agentic context requirement or the distilled version becomes a hollow shell. The knowledge isn’t just in the weights, it’s in the model’s ability to maintain state across thousands of tokens of environmental awareness.

Expectations about local LLM quality and capability are shifting. We’re moving from an era where “runs on 8GB VRAM” was the primary metric to one where “utilizes full context without choking” matters more. The 4B and 9B variants aren’t just smaller versions of the 122B, they’re specifically designed to leverage full context windows on consumer hardware, trading raw parameter count for context efficiency.

Conclusion: Feed the Dog or Get Bitten

Qwen3.5 represents a philosophical shift in open-weight model design. Alibaba has bred a working dog in a market full of lap pets, and it’s forcing developers to reconsider whether their “optimizations” are actually constraints. The model doesn’t want to hear “hi”, it wants a job description, a tool manifest, and enough context to understand its environment before it starts generating.

For local deployment, this means the end of the 2K context window era. Whether you’re running the 9B variant on a 3090 or the 122B MoE across dual A100s, the minimum viable product now starts at 3K tokens and scales up from there. Your quantization strategy, your RAG pipeline, and your hardware budget all need to account for a model that treats minimal context as a bug, not a feature.

The furniture-chewing stops when you give it enough rope to work with. Just make sure you have the VRAM to pay for it.