Anthropic spent February 2026 positioning itself as the privacy-respecting alternative to OpenAI, absorbing millions of users who fled after the Pentagon contract announcement. The company’s own marketing emphasized that it had rejected defense contracts over surveillance concerns. Six weeks later, those same privacy-conscious developers are being asked to upload government-issued photo IDs and submit to live facial recognition scans to continue using Claude, a requirement no other major AI provider currently imposes.

The irony is thick, but the technical and economic implications are thicker. Anthropic isn't just asking for passports; it's systematically degrading consumer plan functionality while pushing users toward enterprise tiers that cost significantly more. The data suggests a deliberate strategy of "constructive termination": making consumer subscriptions increasingly unusable until users either upgrade to enterprise contracts or leave entirely.

The Verification Mandate: Financial-Grade KYC for a Chatbot

Anthropic quietly updated its Help Center on April 14, 2026, announcing a partnership with Persona Identities, the same KYC infrastructure used by banks and fintech companies, to verify user identities. The requirements are stringent: users must possess a physical, undamaged government-issued photo ID (passport, driver's license, or national identity card) and submit to a live selfie match. Digital IDs, photocopies, and student cards are explicitly rejected.

The company claims this applies only to "a few use cases" and triggers during "routine platform integrity checks" or when accessing certain capabilities. However, multiple Max tier subscribers report being prompted for verification immediately upon subscription, suggesting the policy is expanding beyond the initially stated scope.

Data handling promises follow the standard Silicon Valley script: Persona, not Anthropic, stores the data; it's encrypted in transit and at rest; and it won't be used for model training. But the precedent set by Discord's October 2025 breach, in which roughly 70,000 government IDs submitted for age verification were exposed, has developers questioning whether any third-party custody of identity documents can be considered secure. The privacy-driven shifts toward local AI and the cloud pivots seen elsewhere in the industry suggest that such "temporary" verification measures tend to become permanent infrastructure.

The Silent Degradation: When Five Minutes Costs You Seventeen Percent

While identity verification grabs headlines, the more insidious change involves cache time-to-live (TTL) settings that quietly inflate costs for subscription users. A detailed analysis of 119,866 API calls across two independent machines indicates that Anthropic changed the default cache TTL from 1 hour to 5 minutes in early March 2026, without any announcement.

The data shows a clear regression window:

| Phase | Dates | TTL behavior | Evidence |
|---|---|---|---|
| Phase 1 | Jan 11–Jan 31 | 5m only | ephemeral_1h absent/zero |
| Phase 2 | Feb 1–Mar 5 | 1h only | ephemeral_5m = 0 across 33+ consecutive days |
| Phase 3 | Mar 6–7 | Transition | First 5m tokens reappear |
| Phase 4 | Mar 8–Apr 11 | 5m dominant | 5m tokens surge to majority |
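Phase boundaries like these can be recovered from per-call usage logs by summing cache-write tokens per day, split by TTL bucket, and grouping adjacent days that show the same pattern. The sketch below assumes the daily totals have already been aggregated from the API's usage block (the `cache_creation.ephemeral_5m_input_tokens` and `ephemeral_1h_input_tokens` fields); the `DailyUsage` type, the labels, and the sample numbers are hypothetical and are not taken from the original analysis.

```python
from dataclasses import dataclass
from datetime import date
from itertools import groupby


@dataclass
class DailyUsage:
    """Hypothetical per-day totals of cache-write tokens, split by TTL bucket."""
    day: date
    eph_5m: int  # summed cache_creation.ephemeral_5m_input_tokens
    eph_1h: int  # summed cache_creation.ephemeral_1h_input_tokens


def classify(u: DailyUsage) -> str:
    """Label a day by which TTL bucket its cache writes landed in."""
    if u.eph_5m == 0 and u.eph_1h == 0:
        return "no writes"
    if u.eph_1h == 0:
        return "5m only"
    if u.eph_5m == 0:
        return "1h only"
    return "5m dominant" if u.eph_5m > u.eph_1h else "1h dominant"


def phases(days: list) -> list:
    """Collapse adjacent days (in date order) with the same label into (label, first, last)."""
    ordered = sorted(days, key=lambda u: u.day)
    runs = []
    for label, group in groupby(ordered, key=classify):
        members = list(group)
        runs.append((label, members[0].day, members[-1].day))
    return runs


if __name__ == "__main__":
    # Synthetic sample, not the article's data.
    sample = [
        DailyUsage(date(2026, 1, 30), 500_000, 0),
        DailyUsage(date(2026, 1, 31), 420_000, 0),
        DailyUsage(date(2026, 2, 1), 0, 900_000),
        DailyUsage(date(2026, 2, 2), 0, 810_000),
        DailyUsage(date(2026, 3, 8), 300_000, 120_000),
    ]
    for label, first, last in phases(sample):
        print(f"{first} .. {last}: {label}")
```

Days with no logged calls simply don't appear, so a run can span calendar gaps; a stricter version would also break runs on missing dates.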

The financial impact is substantial. Applying Anthropic's official pricing (for Sonnet: cache_write_5m = $3.75/MTok, cache_write_1h = $6.00/MTok, cache_read = $0.30/MTok), the analysis attributes 17.1% cost waste to the regression across the analyzed period:

Claude-Sonnet-4-6 Cost Impact:

– January 2026: 52.5% waste ($41.45 overpaid)

– February 2026: 1.1% waste (baseline with 1h TTL)

– March 2026: 25.9% waste ($719.09 overpaid)

– April 2026: 14.8% waste ($176.23 overpaid)

Claude-Opus-4-6 Cost Impact:

– Total overpayment: $1,581.80 across 119,866 calls
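Why would a cheaper write price ($3.75 vs. $6.00 per MTok) end up costing more? A back-of-envelope comparison, assuming a fixed cached prefix of S MTok, the rates quoted above, and a session containing n pauses longer than five minutes but shorter than an hour. All other turns are assumed to hit the cache under either TTL, so their read costs cancel out. Under a 5-minute TTL, each such pause forces a full re-write; under a 1-hour TTL, the initial write is pricier but each pause costs only a read:

```latex
\begin{aligned}
C_{5\mathrm{m}} &= 3.75\,S + 3.75\,nS \\
C_{1\mathrm{h}} &= 6.00\,S + 0.30\,nS \\
C_{5\mathrm{m}} - C_{1\mathrm{h}} &= (3.45\,n - 2.25)\,S
\end{aligned}
```

The difference is positive for every n ≥ 1: a single mid-length pause already makes the 1-hour TTL cheaper, and each additional pause adds 3.45·S dollars of avoidable spend.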

The mechanism is brutal in its simplicity. With a 5-minute TTL, any pause longer than five minutes causes the entire cached context to expire. When the user resumes, Claude Code must re-upload the entire context at the write rate ($3.75/MTok for Sonnet) rather than the read rate ($0.30/MTok), a 12.5× cost multiplier. For long coding sessions, the primary use case for Claude Code, this creates a compounding penalty: the longer the session, the larger the context, and the more expensive each cache expiry becomes.
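The effect can be simulated for an arbitrary session. This is a simplified model, not Claude Code's actual caching logic: it assumes a constant cached prefix, uses the Sonnet rates quoted above, bills every request after an expiry as a full re-write at that TTL's write rate, and assumes each cache hit refreshes the TTL. The function name and the sample gap pattern are hypothetical.

```python
# Sonnet prompt-caching rates quoted above, in USD per million tokens (MTok).
WRITE_RATE_PER_MTOK = {5: 3.75, 60: 6.00}  # keyed by TTL in minutes
READ_RATE_PER_MTOK = 0.30


def session_cache_cost(gaps_min: list, context_tokens: int, ttl_min: int) -> float:
    """Estimate cache spend for re-sending one fixed prefix across a session.

    gaps_min[i] is the idle time (minutes) before request i + 1. The first
    request always writes the cache. Simplifying assumptions: the prefix size
    never changes, and any cache hit resets the TTL clock.
    """
    mtok = context_tokens / 1_000_000
    write_cost = WRITE_RATE_PER_MTOK[ttl_min] * mtok
    read_cost = READ_RATE_PER_MTOK * mtok

    total = write_cost  # initial cache write
    for gap in gaps_min:
        if gap <= ttl_min:
            total += read_cost   # cache still warm: billed at the read rate
        else:
            total += write_cost  # cache expired: whole prefix re-written
    return total


if __name__ == "__main__":
    # A hypothetical coding session: mostly quick turns, plus pauses of
    # 8, 20 and 45 minutes (reading docs, running a test suite, a meeting).
    gaps = [1, 2, 8, 1, 20, 3, 45, 2]
    for ttl in (5, 60):
        cost = session_cache_cost(gaps, context_tokens=100_000, ttl_min=ttl)
        print(f"TTL {ttl:>2} min: ${cost:.2f}")
```

Under these assumptions, a 100k-token prefix costs about $1.65 in cache traffic with a 5-minute TTL versus about $0.84 with a 1-hour TTL, because each of the three mid-length pauses triggers a full re-write. Real sessions also grow their context over time, which makes late expiries more expensive still.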

Subscription users began hitting their 5-hour quota limits for the first time in March 2026, directly coinciding with this regression. Anthropic has not confirmed whether the change was intentional cost management or an infrastructure accident, but the silence itself speaks volumes.

Constructive Termination: The Enterprise Pivot Theory

Developer forums have coined a specific term for what's happening: "constructive termination." The theory, supported by analysis of Anthropic's recent business moves, suggests the company is effectively terminating its Max subscription plans through silent degradation rather than transparent communication.

The economic rationale is straightforward. Frontier models are catastrophically expensive to run at consumer scale. At $20/month for Pro or $100/month for Max, heavy users generate negative margins. Enterprise contracts, however, start at significantly higher price points with volume commitments that actually cover inference costs.

Community analysis suggests Anthropic is willing to let customers churn slowly, offering ambiguous technical explanations for regressions while preserving subscription ARR for as long as possible. The identity verification requirements conveniently filter out users in unsupported regions (particularly China) who access Claude through VPNs and intermediaries: users who likely generate support overhead without adding value to the enterprise pipeline.

This mirrors broader concerns about the economic viability of cloud LLM APIs and whether the current pricing models represent a temporary subsidy period that's now ending.

The Local Exodus: When Cloud Access Becomes Identity-Gated

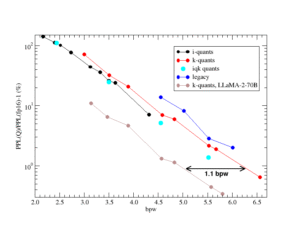

The developer response has been immediate and technical. Rather than comply with identity verification, many are accelerating migration to local inference stacks. Community recommendations for hardware-accelerated local models include specific GGUF quantizations optimized for consumer hardware:

– unsloth/gemma-4-31B-it-GGUF:Q5_K_S
– unsloth/Qwen3.5-27B-GGUF:Q8_0
– unsloth/Qwen3.5-122B-A10B-GGUF:UD-Q6_K_XL
– unsloth/Mistral-Small-4-119B-2603-GGUF:UD-Q5_K_XL
– unsloth/NVIDIA-Nemotron-3-Super-120B-A12B-GGUF:UD-Q4_K_XL

These models, running on hardware such as the AMD Ryzen AI Max+ 395 with 128GB of RAM or Apple Silicon with unified memory, now match cloud API performance for many coding tasks while eliminating vendor surveillance entirely. Privacy-first inference running in the browser through WebGPU is also gaining traction as developers seek alternatives to identity-gated cloud services.
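Whether a given quantization fits in 128GB of unified memory can be estimated from its parameter count and bits per weight. The sketch below parses identifiers in the `repo:quant` form listed above and assumes approximate llama.cpp block sizes (Q8_0 ≈ 8.5, Q6_K ≈ 6.56, Q5_K ≈ 5.5, Q4_K ≈ 4.5 bits per weight). Unsloth's "UD" dynamic quants and the `_S`/`_XL` variants keep some tensors at other precisions, so real files differ somewhat, and the KV cache, OS and runtime need headroom on top; treat the result as a rough lower bound. The helper names and the 16GB headroom figure are hypothetical.

```python
import re
from typing import Optional, Tuple

# Approximate bits per weight for llama.cpp block formats (ignores mixed-precision layers).
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q6_K": 6.5625, "Q5_K": 5.5, "Q4_K": 4.5}


def parse_model_id(model_id: str) -> Tuple[float, Optional[float], str]:
    """Split 'org/Name-122B-A10B-GGUF:UD-Q6_K_XL' into (total B, active B or None, base quant)."""
    name, quant = model_id.split(":", 1)
    # Total params: a number followed by 'B' that is not glued to a preceding letter, digit or dot.
    total = re.search(r"(?<![A-Za-z0-9.])(\d+(?:\.\d+)?)B", name)
    # Mixture-of-experts active params are written as 'A<n>B', e.g. 'A10B'.
    active = re.search(r"-A(\d+(?:\.\d+)?)B", name)
    base = re.search(r"Q\d_(?:K|0)", quant)
    if total is None or base is None:
        raise ValueError(f"cannot parse {model_id!r}")
    return (
        float(total.group(1)),
        float(active.group(1)) if active else None,
        base.group(0),
    )


def estimated_weights_gb(model_id: str) -> float:
    """Rough weight size in GB (1e9 bytes): billions of params * bits per weight / 8."""
    total_b, _, base = parse_model_id(model_id)
    return total_b * BITS_PER_WEIGHT[base] / 8


if __name__ == "__main__":
    MEMORY_GB = 128
    HEADROOM_GB = 16  # hypothetical allowance for KV cache, OS and runtime
    models = [
        "unsloth/gemma-4-31B-it-GGUF:Q5_K_S",
        "unsloth/Qwen3.5-27B-GGUF:Q8_0",
        "unsloth/Qwen3.5-122B-A10B-GGUF:UD-Q6_K_XL",
        "unsloth/Mistral-Small-4-119B-2603-GGUF:UD-Q5_K_XL",
        "unsloth/NVIDIA-Nemotron-3-Super-120B-A12B-GGUF:UD-Q4_K_XL",
    ]
    for m in models:
        total_b, active_b, base = parse_model_id(m)
        gb = estimated_weights_gb(m)
        moe = f", {active_b:g}B active" if active_b else ""
        fits = gb <= MEMORY_GB - HEADROOM_GB
        print(f"{m}: ~{gb:.0f} GB ({total_b:g}B total{moe}, {base}), fits={fits}")
```

The active-parameter count governs speed rather than memory: a mixture-of-experts model such as the 122B-A10B must still hold all 122B weights in RAM, but only about 10B participate in each token, which is why such models stay usable on bandwidth-limited consumer machines.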

The irony is that Anthropic's own safety posturing, which positions AI as too dangerous for unrestricted access, may accelerate the exact outcome it claims to oppose: powerful models running entirely outside monitored infrastructure. As local LLMs gain web-search capabilities and efficient MoE models run comfortably in consumer RAM, the technical justification for cloud dependency evaporates.

What This Means for the AI Stack

For engineering teams, the implications are immediate. If you're building on Claude's API, you now face two risks: sudden cost inflation through silent infrastructure changes, and potential loss of access if your usage patterns trigger identity verification requirements. The latter is particularly problematic for distributed teams in regions not on Anthropic's supported list.
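For teams calling the API directly, one mitigation for the first risk is to request the cache TTL explicitly rather than inherit a default, and to alert when cache writes are billed under a TTL you did not ask for. The sketch below builds a Messages API request body with `cache_control: {"type": "ephemeral", "ttl": "1h"}` and inspects the `usage.cache_creation` breakdown; these field names follow Anthropic's prompt-caching documentation as currently published and should be checked against the latest docs. The model ID, prompts, helper names and usage values are placeholders, and the code only builds and inspects plain dictionaries; sending the request is left to whichever HTTP client or SDK you use.

```python
import json

# Placeholder model ID; substitute whichever model your account uses.
MODEL_ID = "claude-sonnet-4-6"


def build_request(system_prompt: str, user_msg: str, ttl: str = "1h") -> dict:
    """Messages API body that pins the cache TTL instead of inheriting a default."""
    return {
        "model": MODEL_ID,
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Explicit TTL: a silent change to the default no longer applies here.
                "cache_control": {"type": "ephemeral", "ttl": ttl},
            }
        ],
        "messages": [{"role": "user", "content": user_msg}],
    }


def ttl_drift(usage: dict, expected_ttl: str = "1h") -> bool:
    """True if any cache-write tokens were billed under the TTL you did not request."""
    creation = usage.get("cache_creation") or {}
    unexpected_field = (
        "ephemeral_5m_input_tokens" if expected_ttl == "1h" else "ephemeral_1h_input_tokens"
    )
    return creation.get(unexpected_field, 0) > 0


if __name__ == "__main__":
    body = build_request("You are a careful code reviewer.", "Review this diff.")
    print(json.dumps(body, indent=2))

    # Usage block shaped like an API response; the numbers are made up.
    usage = {"cache_creation": {"ephemeral_5m_input_tokens": 48_000, "ephemeral_1h_input_tokens": 0}}
    if ttl_drift(usage):
        print("warning: cache writes billed at 5m TTL despite requesting 1h")
```

Pinning the 1-hour TTL trades a higher per-write price for fewer re-writes, so it pays off mainly when sessions routinely pause for more than five minutes.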

The identity verification policy also creates legal exposure. A recent federal ruling confirmed that private conversations with Claude are discoverable in criminal proceedings. When combined with verified identity data, this creates a surveillance apparatus that makes traditional cloud API risks look trivial.

Teams should evaluate whether consumer hardware and sub-60GB local models can handle their workloads and reduce their reliance on cloud APIs. The technical gap between local and cloud inference is closing faster than most enterprise procurement cycles can adapt.

Anthropic's pivot reveals the fundamental tension in commercial AI: the models are too expensive to give away, too dangerous (by their own admission) to democratize, and too valuable to abandon. The result is a squeeze on individual developers and small teams that will likely define the next phase of AI adoption, one where frontier access becomes a corporate privilege rather than a developer tool.

The "AI for everyone" era was nice while it lasted. Now bring your passport, and your enterprise procurement budget, or get out.