Spend six months on architecture diagrams and detailed specifications, or ship a working prototype in six days? For decades, this has been a false dichotomy forced by the economics of software development: human hours are expensive and compute is cheap, so we plan meticulously to avoid costly rework. But when rebuilding a framework with AI automation costs $1,100 and a single week, the math stops working. The blueprint-first approach isn’t just slowing you down; it’s becoming a competitive liability.

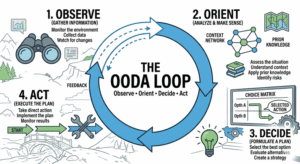

The shift is already visible: engineering teams using AI-enabled workflows evaluate 2 to 4 times as many design variants per program as teams stuck with conventional approaches. When simulation and coding bottlenecks disappear, exploration becomes the default, not the exception. This isn’t about generating boilerplate faster; it’s a fundamental restructuring of what “architecture” means in an agentic development environment.

The Feedback Loop Imperative

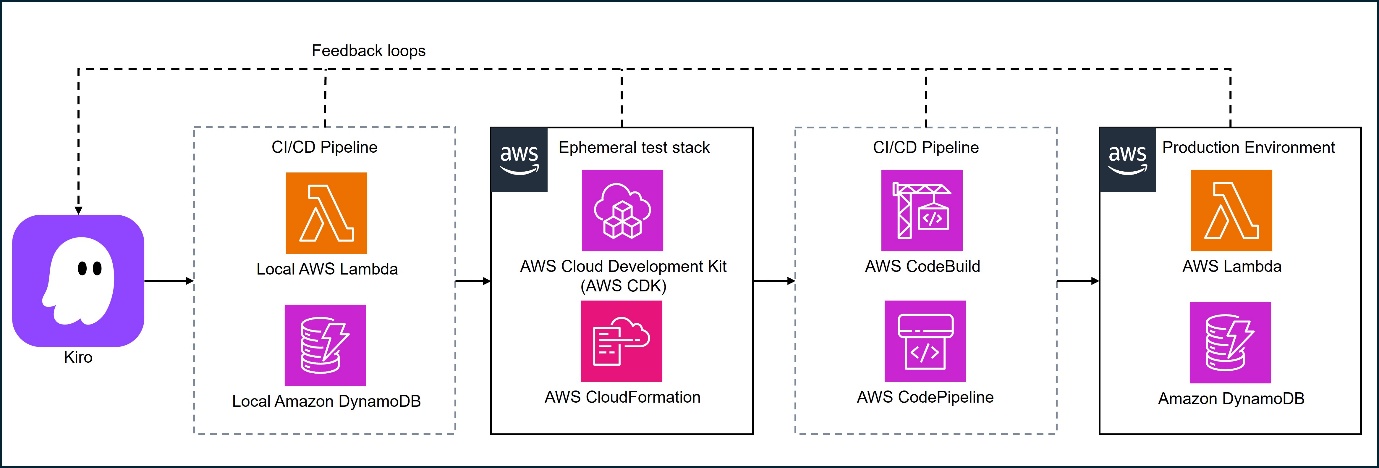

Traditional cloud architectures were built for human-driven development: long-lived environments, manual testing gates, and deployment pipelines that move at human speed. In an agentic workflow, where AI agents write, test, deploy, and refine code autonomously, these assumptions collapse.

AWS architecture teams have identified the critical bottleneck: when an AI agent generates code, minutes or even hours can pass before that code is validated. Slow deployment cycles and tightly coupled services force developers back into manual validation loops, effectively neutering the agent’s autonomy. The solution isn’t better prompts; it’s architectural redesign.

The new architectural mandate prioritizes local emulation as the default feedback path. Serverless applications built with AWS Lambda and API Gateway can be emulated locally using AWS SAM (sam local start-api), allowing agents to iterate in seconds rather than minutes. For data persistence, Amazon DynamoDB Local enables CRUD testing without cloud round-trips. Even complex data processing pipelines using AWS Glue can run locally via Docker images, letting agents validate transformations against sample datasets before touching cloud resources.
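One way to make local emulation the default path, rather than something an agent must remember to opt into, is to have client configuration point at the emulator unless told otherwise. The sketch below assumes Python with boto3 (deliberately not imported, so the function runs anywhere) and two hypothetical environment variables, AGENT_TARGET and DYNAMODB_ENDPOINT. The one real-world fact it relies on is that DynamoDB Local listens on http://localhost:8000 by default.

```python
import os
from typing import Any, Dict, Mapping, Optional

# DynamoDB Local listens on this address by default.
DYNAMODB_LOCAL_URL = "http://localhost:8000"


def dynamodb_client_kwargs(env: Optional[Mapping[str, str]] = None) -> Dict[str, Any]:
    """Build keyword arguments for boto3.client("dynamodb", **kwargs).

    Local emulation is the default; the real regional endpoint is used only
    when the hypothetical AGENT_TARGET variable is set to "cloud".
    """
    env = os.environ if env is None else env
    region = env.get("AWS_REGION", "us-east-1")
    if env.get("AGENT_TARGET", "local") == "cloud":
        # No endpoint_url: boto3 resolves the regional AWS endpoint itself.
        return {"region_name": region}
    return {
        "region_name": region,
        # DYNAMODB_ENDPOINT is a hypothetical override, e.g. for a container network.
        "endpoint_url": env.get("DYNAMODB_ENDPOINT", DYNAMODB_LOCAL_URL),
        # DynamoDB Local accepts placeholder credentials, so real keys are
        # never needed during local iteration.
        "aws_access_key_id": "local",
        "aws_secret_access_key": "local",
    }
```

An agent would start the emulator (for example with docker run -p 8000:8000 amazon/dynamodb-local) and then call boto3.client("dynamodb", **dynamodb_client_kwargs()). Defaulting to local means a forgotten flag costs a failed local call, not a write to a shared cloud table.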

This creates a hybrid testing model where the cloud becomes a sparse dependency, used for final validation, not iterative experimentation. Combined with ephemeral preview environments and contract-first API design using OpenAPI specifications, agents can validate complete integration behaviors without provisioning permanent infrastructure.
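Contract-first design means the OpenAPI document exists before the handler does, so an agent can check its own responses against it on every iteration without deploying anything. The following is a minimal sketch assuming a hypothetical /orders/{orderId} endpoint. To stay dependency-free it hand-rolls only three schema keywords (type, required, properties); a real project would more likely use a full validator such as the jsonschema package.

```python
from __future__ import annotations

from typing import Any

# Fragment of a contract-first OpenAPI 3 document, written before any
# implementation exists. The endpoint and fields are hypothetical.
ORDER_API_SPEC: dict[str, Any] = {
    "openapi": "3.0.3",
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "responses": {
                    "200": {
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "required": ["orderId", "status", "total"],
                                    "properties": {
                                        "orderId": {"type": "string"},
                                        "status": {"type": "string"},
                                        "total": {"type": "number"},
                                    },
                                }
                            }
                        }
                    }
                }
            }
        }
    },
}

# Supported "type" values; bool is excluded from numbers on purpose.
_TYPE_CHECKS = {
    "string": lambda v: isinstance(v, str),
    "number": lambda v: isinstance(v, (int, float)) and not isinstance(v, bool),
    "integer": lambda v: isinstance(v, int) and not isinstance(v, bool),
    "boolean": lambda v: isinstance(v, bool),
    "object": lambda v: isinstance(v, dict),
    "array": lambda v: isinstance(v, list),
}


def contract_violations(schema: dict[str, Any], value: Any, path: str = "$") -> list[str]:
    """Check value against the type/required/properties subset of a schema."""
    expected = schema.get("type")
    if expected and not _TYPE_CHECKS[expected](value):
        return [f"{path}: expected {expected}, got {type(value).__name__}"]
    errors: list[str] = []
    if expected == "object":
        for key in schema.get("required", []):
            if key not in value:
                errors.append(f"{path}: missing required field '{key}'")
        for key, sub in schema.get("properties", {}).items():
            if key in value:
                errors.extend(contract_violations(sub, value[key], f"{path}.{key}"))
    return errors


def check_response(spec: dict[str, Any], route: str, method: str,
                   status: int, body: Any) -> list[str]:
    """Look up the documented JSON schema for a response and validate body."""
    response = spec["paths"][route][method]["responses"][str(status)]
    schema = response["content"]["application/json"]["schema"]
    return contract_violations(schema, body)
```

An empty list means the response honors the contract; anything else is a precise, machine-readable reason the integration would break, available long before a preview environment exists.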

From Diagrams to Steering Files

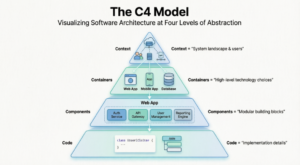

If system architecture accelerates feedback, codebase architecture determines whether an AI agent can actually understand what it’s changing. Diagram-based architecture documentation is giving way to a code-first approach: machine-readable constraints that guide autonomous agents.

Domain-driven structure is no longer an academic luxury; it’s an operational necessity. Organizing code into explicit layers (/domain, /application, /infrastructure) separates business logic from cloud-specific dependencies. Hexagonal architecture patterns reinforce this by treating external systems as adapters rather than entanglements, allowing agents to modify business logic locally without triggering cloud-side effects.
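A minimal ports-and-adapters sketch, assuming Python and a hypothetical order-payment use case. The three commented sections stand in for the /domain, /application, and /infrastructure directories; the names Order, OrderRepository, PayOrder, and InMemoryOrderRepository are illustrative, not taken from any framework.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Protocol


# Domain layer: pure business objects and the port they need. No cloud imports.
@dataclass(frozen=True)
class Order:
    order_id: str
    total: float
    status: str = "PENDING"


class OrderRepository(Protocol):
    """Port: the domain states what it needs, not how it is stored."""

    def get(self, order_id: str) -> Optional[Order]: ...
    def save(self, order: Order) -> None: ...


# Application layer: a use case that talks to storage only through the port.
class PayOrder:
    def __init__(self, repo: OrderRepository) -> None:
        self._repo = repo

    def __call__(self, order_id: str) -> Order:
        order = self._repo.get(order_id)
        if order is None:
            raise KeyError(f"unknown order {order_id}")
        if order.status != "PENDING":
            raise ValueError(f"order {order_id} is already {order.status}")
        paid = Order(order.order_id, order.total, "PAID")
        self._repo.save(paid)
        return paid


# Infrastructure layer: an adapter that satisfies the port.
class InMemoryOrderRepository:
    """Local adapter with no cloud side effects. A DynamoDB-backed adapter
    would implement the same two methods."""

    def __init__(self) -> None:
        self._items: dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        return self._items.get(order_id)

    def save(self, order: Order) -> None:
        self._items[order.order_id] = order
```

Because PayOrder depends only on the OrderRepository protocol, an agent can change the payment rule and rerun it against the in-memory adapter in milliseconds; swapping in a cloud-backed adapter later touches only the infrastructure layer.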

But structure alone isn’t sufficient. Tools like Kiro introduce steering files, Markdown documents stored under .kiro/steering/ that encode architectural constraints and coding conventions. Rather than restating rules in every prompt, agents consult these files automatically. A steering rule might mandate that database access flows exclusively through repository classes in the infrastructure layer, or that specific error handling patterns must wrap external API calls.
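Steering files tell the agent what the rules are, but nothing stops a rule from being broken anyway. One option, sketched here as an assumption rather than a Kiro feature, is to back a steering rule with a mechanical check in CI. This version parses Python sources with the standard ast module (so nothing is imported or executed) and flags cloud SDK imports such as boto3 outside the infrastructure layer; the rule set and directory names are hypothetical.

```python
from __future__ import annotations

import ast

# Hypothetical rule mirroring a steering-file entry: only the infrastructure
# layer may import cloud SDKs, so only it can reach the database directly.
FORBIDDEN_OUTSIDE_INFRA = {"boto3", "botocore"}


def layer_of(path: str) -> str:
    """Return the top-level layer directory of a repository-relative path."""
    return path.strip("/").split("/", 1)[0]


def imported_roots(source: str) -> set[str]:
    """Collect top-level module names imported by a Python source file."""
    roots: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            roots.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x.y import z" imports only; relative ones stay in-repo.
            roots.add(node.module.split(".")[0])
    return roots


def boundary_violations(files: dict[str, str]) -> list[str]:
    """Take a mapping of path -> source and return one message per violation."""
    problems = []
    for path, source in sorted(files.items()):
        if layer_of(path) == "infrastructure":
            continue
        for module in sorted(imported_roots(source) & FORBIDDEN_OUTSIDE_INFRA):
            problems.append(
                f"{path}: imports {module}; route data access through "
                "an infrastructure repository"
            )
    return problems
```

In CI the mapping would be built from the files changed in a pull request; a non-empty result fails the build, and the message itself tells the agent where data access is supposed to live.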

Tests serve as executable specifications in this environment. Unit tests validate domain logic in isolation for rapid iteration, contract tests verify service interfaces, and smoke tests catch runtime issues like missing IAM permissions. When a test fails, the agent infers expected behavior and refines its approach, a closed learning loop that replaces the traditional “write code, wait for review, fix defects” cycle.
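Here is a minimal sketch of a unit test acting as an executable specification, assuming a hypothetical discount rule in the domain layer. The hypothetical run_spec helper runs the suite programmatically with the standard unittest module and returns one line per failure: the kind of compact signal an agent could read back to refine its next attempt. It is not any particular agent’s API.

```python
from __future__ import annotations

import unittest


# Hypothetical domain rule: discounts are capped at 50% and totals are
# rounded to cents. A pure function, so it can be tested with no cloud access.
def discounted_total(total: float, percent: float) -> float:
    if total < 0:
        raise ValueError("total must be non-negative")
    capped = min(max(percent, 0.0), 50.0)
    return round(total * (1 - capped / 100), 2)


class DiscountSpec(unittest.TestCase):
    """Executable specification: each test states one expected behavior."""

    def test_applies_percentage(self):
        self.assertEqual(discounted_total(200.0, 10), 180.0)

    def test_caps_discount_at_fifty_percent(self):
        self.assertEqual(discounted_total(100.0, 80), 50.0)

    def test_rejects_negative_totals(self):
        with self.assertRaises(ValueError):
            discounted_total(-1.0, 10)


def run_spec() -> list[str]:
    """Run the suite in-process and return one summary line per failure or error."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountSpec)
    result = unittest.TestResult()
    suite.run(result)
    return [f"{test.id()}: {trace.splitlines()[-1]}"
            for test, trace in result.failures + result.errors]
```

If an agent raised the cap to 60%, run_spec() would return a single entry naming test_caps_discount_at_fifty_percent, which is exactly the expected-behavior hint the closed loop depends on.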

The Economic Reality of 4x Design Exploration

The business implications extend beyond developer ergonomics. Engineering organizations report that AI-accelerated design exploration reduces risk in request-for-quotation (RFQ) responses and directly affects revenue. As open-source coding tools reduce API dependency and democratize access to high-quality generation models, the constraint shifts from “can we build this?” to “which of these twelve approaches should we pursue?”

Accenture’s industrial AI practice demonstrates this with battery frame design: an agentic system reads requirements, iterates overnight across hundreds of design variants, and surfaces passing designs to engineers in the morning. The result is 40% time savings in the trial-and-error phase. The architect’s role shifts from creating the initial design to defining the constraints and evaluation criteria that govern autonomous exploration.
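The architect’s new deliverable can be made concrete with a toy exploration loop. In this sketch the constraints and the ranking objective are the human-authored part, and the variant grid is what an agent would sweep. The Variant parameters and the mass and stiffness formulas are hypothetical placeholders for the simulation a real system would run; they are not a battery frame model and not Accenture’s method.

```python
from __future__ import annotations

import itertools
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Variant:
    wall_mm: float
    ribs: int


# Toy stand-ins for simulation results; a real pipeline would call a solver.
def mass_kg(v: Variant) -> float:
    return 2.0 * v.wall_mm + 0.5 * v.ribs


def stiffness_index(v: Variant) -> float:
    return 10.0 * v.wall_mm + 4.0 * v.ribs


# What the architect actually writes: hard constraints plus a ranking objective.
CONSTRAINTS: dict[str, Callable[[Variant], bool]] = {
    "mass at most 12 kg": lambda v: mass_kg(v) <= 12.0,
    "stiffness at least 50": lambda v: stiffness_index(v) >= 50.0,
}


def explore(walls: Sequence[float], ribs: Sequence[int], keep: int = 3) -> list[Variant]:
    """Evaluate every combination, drop any that violate a constraint,
    and surface the lightest passing designs for human review."""
    candidates = (Variant(w, r) for w, r in itertools.product(walls, ribs))
    passing = [v for v in candidates
               if all(check(v) for check in CONSTRAINTS.values())]
    return sorted(passing, key=mass_kg)[:keep]
```

Replacing the small grid with hundreds of sampled variants changes nothing in CONSTRAINTS, which is the point: the architect edits the criteria, not the designs.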

This aligns with broader labor market data showing that AI already handles the majority of programming tasks, which rapidly diminishes the value of upfront specification work. If three-quarters of coding tasks are already covered by AI, spending weeks on detailed class diagrams before writing a line of code is economically irrational.

The Controversy: What Architects Actually Do Now

The uncomfortable question circulating in developer forums is whether extensive upfront architecture is becoming obsolete. The tension is palpable: AI makes writing code cheaper and faster, but bad decisions still scale quickly, and changing architecture later remains expensive. Many experienced practitioners argue that a solid MVP definition still prevents costly scalability and maintenance issues down the line.

Yet the consensus is shifting toward iterating back and forth between big-picture architecture and implementation details. When you’re stuck on one, switching to the other reveals new information, a process that agents accelerate by exploring implementation paths in minutes rather than days.

The critical distinction is between “design first” and “constraints first.” Modern architectural work focuses on defining system boundaries, data governance rules, and feedback mechanisms rather than prescribing implementation details. Documentation becomes the source of truth, stored in engineering docs and steering files rather than in individual mental models. For global teams relying on async communication, this shift from implicit knowledge to explicit, machine-readable architecture is non-negotiable.

Actionable Migration Path

Moving from blueprint-heavy processes to constraint-first architecture requires specific infrastructure investments:

Immediate (This Sprint):

- Implement local emulation for your primary stack (SAM Local, DynamoDB Local, or containerized equivalents)

- Create a .kiro/steering/ directory with foundational rules covering your top three architectural constraints

- Establish ephemeral preview environments via Infrastructure as Code (IaC) for safe agent experimentation
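The steering-directory step can be scripted so every repository starts from the same baseline. This sketch assumes a single hypothetical architecture.md file whose three rules echo the examples given earlier in this article. Kiro reads Markdown from .kiro/steering/, but the file name and rule wording here are placeholders to replace with your own.

```python
from __future__ import annotations

from pathlib import Path

# Hypothetical starter rules; replace with your own top three constraints.
STARTER_RULES = {
    "architecture.md": """# Architecture constraints

- Database access goes only through repository classes in /infrastructure.
- Code in /domain must not import cloud SDKs or call external services.
- Every external API call is wrapped in the shared error-handling pattern.
""",
}


def scaffold_steering(repo_root: Path, rules: dict[str, str] = STARTER_RULES) -> list[Path]:
    """Create .kiro/steering/ under repo_root and write any rule files that
    do not exist yet, so hand-edited rules are never overwritten."""
    steering = repo_root / ".kiro" / "steering"
    steering.mkdir(parents=True, exist_ok=True)
    written = []
    for name, body in rules.items():
        target = steering / name
        if not target.exists():
            target.write_text(body, encoding="utf-8")
            written.append(target)
    return written
```

Because existing files are skipped, the script is safe to rerun after a team has customized its rules.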

Short-term (Next Quarter):

- Refactor monolithic codebases toward domain-driven layers with clear infrastructure separation

- Define machine-readable API contracts using OpenAPI before implementation

- Integrate agents into CI/CD pipelines with required test execution and branch protections

Strategic (Next Year):

- Transition documentation from static diagrams to executable specifications and steering files

- Build agent registries for autonomous workflow automation in non-coding domains (simulation, testing, deployment)

- Retrain architects as constraint engineers and system boundary specialists

The transition won’t be painless. Job market disruption from AI adoption across tech roles is already visible, and architectural roles face similar pressure. The architects who survive won’t be the ones producing the most beautiful UML diagrams; they’ll be the ones who can define the constraints that let AI agents generate the right code without supervision.

The blueprint is dead. Long live the guardrails.