A recognizably bad piece of code is easy to call out. An elegantly flawed system design is not. We’re witnessing a quiet but seismic shift: developers aren’t just asking Copilot for a function anymore, they’re asking ChatGPT to “design a real-time analytics pipeline” or “sketch a microservice architecture for an e-commerce platform.” The AI complies, spinning up plausible-looking diagrams, component lists, and configuration snippets. The velocity is intoxicating. The risk is a ticking time bomb of architectural debt.

This isn’t about buggy code; it’s about missing theory. As Christian Ekrem points out, AI-generated code exhibits a specific fingerprint: “primitive obsession all the way down to the domain core.” An email isn’t a domain concept; it’s a string. An Order ID is just another string. A `Map<string, any>` appears whenever the logic gets hairy. The code compiles. The tests pass. It ships. But a developer who had actually thought about the domain would never have written those types that way.

That gap, between statistical plausibility and reasoned design, is where we’re now building entire systems.

The Statistics of Your System Design

The fundamental issue is one of training data. LLMs are pattern-recognition engines, not reasoning engines. They’ve ingested the public corpus of the internet: tutorials, open-source projects, Stack Overflow answers, and documentation. What does that corpus overwhelmingly contain? Surface-level implementations, quick fixes, and “good enough” solutions.

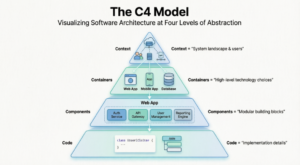

When you ask an LLM to design a system, it isn’t synthesizing first principles about consistency, fault tolerance, or domain modeling. It’s statistically reassembling the most common patterns it has seen in similar contexts. This leads to designs that look correct but lack the conceptual integrity that makes systems durable.

AI-Driven Generation

```typescript
function confirmOrder(orderId: string, customerEmail: string, total: number) {
  if (!customerEmail.includes("@")) throw new Error("bad email");
  if (total <= 0) throw new Error("bad total");
  // ...
}
```

Human Domain Encoded

```typescript
type Email = { readonly _tag: "Email", readonly value: string };
type OrderId = { readonly _tag: "OrderId", readonly value: string };
type PositiveAmount = { readonly _tag: "PositiveAmount", readonly value: number };

function confirmOrder(orderId: OrderId, customerEmail: Email, total: PositiveAmount): Confirmed<Order> {
  // ... No validation here: each value was validated once, when it was constructed.
}
```

The second version encodes theory into the compiler: an email is not a string, an order ID is not interchangeable with a customer email, and a total must be positive. The first version leaves that theory as a comment, a runtime check, or worse, an unstated assumption that will be violated at 4 AM by a tired developer. This is the essence of what gets lost in AI-generated architecture: the negative space of the domain. A model trained on what was written cannot invent representations of what cannot or should not happen.
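Tagged types like these only carry a guarantee if values are built through a validating function, since a plain object type can otherwise be written by hand. A minimal sketch of that idea follows, assuming hypothetical helpers `parseEmail` and `parsePositiveAmount` and a hypothetical `Result` wrapper, none of which appear in the original example. The `"@"` check is deliberately naive and simply mirrors the runtime check in the first version.

```typescript
// Sketch only: parseEmail, parsePositiveAmount, and Result are hypothetical
// names introduced for illustration; they are not from the original example.
type Email = { readonly _tag: "Email"; readonly value: string };
type PositiveAmount = { readonly _tag: "PositiveAmount"; readonly value: number };

// A minimal success-or-failure wrapper, so callers must handle bad input.
type Result<T> =
  | { readonly ok: true; readonly value: T }
  | { readonly ok: false; readonly error: string };

// The intended single entry point for obtaining an Email.
function parseEmail(raw: string): Result<Email> {
  if (!raw.includes("@")) {
    return { ok: false, error: `not an email: ${raw}` };
  }
  return { ok: true, value: { _tag: "Email", value: raw } };
}

// The intended single entry point for obtaining a PositiveAmount.
function parsePositiveAmount(raw: number): Result<PositiveAmount> {
  if (!Number.isFinite(raw) || raw <= 0) {
    return { ok: false, error: `not a positive amount: ${raw}` };
  }
  return { ok: true, value: { _tag: "PositiveAmount", value: raw } };
}

// Validation happens once, at the boundary of the domain.
const email = parseEmail("ada@example.com");
const total = parsePositiveAmount(-5);
console.log(email.ok, total.ok); // true false
```

By convention, the rest of the codebase would obtain these values only through the parse functions. Stricter variants (for example, a class with a private constructor, or a brand based on a `unique symbol`) can make that convention harder to bypass.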

The Illusion of Agentic Validation

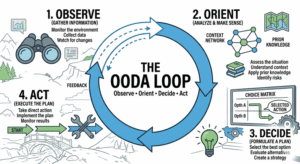

In response to these blind spots, a popular pattern has emerged: the multi-agent “explorer-critic” workflow. Inspired by articles on orchestrating agent teams, the idea is to have multiple AI agents generate candidate architectures, while another “critic” agent evaluates them. This creates a veneer of rigor. It feels like a design review.

But this is validation theater. The critic agent suffers from the same fundamental limitation as the designer agents: it, too, is trained on the same corpus of average, often incomplete, solutions. It can spot egregious contradictions or missing AWS service quotas, but it cannot critique a design for missing the subtle, implicit theory of a domain it was never trained to understand. It cannot ask, “Why are we using an event-sourcing pattern here when a simple CRUD service would suffice?” if event-sourcing is statistically common in similar prompts.

A research paper from April 2026, “Assistants, Not Architects: The Role of LLMs in Networked Systems Design”, puts a fine point on this. The authors build Kepler, a reasoning framework that combines expert-driven specifications with SMT-based optimization. They found that when tasked with generating comprehensive system specifications for microservices management software, LLMs had a 43% failure rate. They produced incorrect properties, missed critical constraints, and hallucinated compatibility rules. In contrast, they achieved near-perfect accuracy on datacenter hardware specs, a domain with abundant, structured, and unambiguous documentation.

The conclusion is damning for architectural reasoning: LLMs are powerful for information retrieval and exploring known design spaces, but they “cannot reliably architect common networked systems.” In complex software domains where constraints are subtle, conditional, and cross-cutting, treating an LLM as an end-to-end design agent is “risky: the resulting specifications require careful human auditing, undermining the benefits of automation.”

When Speed Creates Structural Debt

The business pressure is immense. The promise is shipping weeks of architecture work in an afternoon. The GitClear report on 153M lines of code quantifies the early effects: copy-pasted lines climbed from 8.3% in 2020 to 12.3% in 2024. For the first time, copy/paste exceeded moved (refactored) code within a commit. Code churn (lines rewritten within two weeks of authorship) is projected to roughly double from its pre-AI baseline. This is the fingerprint of a codebase “forgetting its own theory in real time”, where decisions aren’t reasoned but statistically generated and rapidly discarded.

This pressure can manifest as organizational mandates to enforce AI adoption over quality, prioritizing speed and compliance with new tools over architectural soundness. When the metric shifts from “is this a good design?” to “was this design generated by AI?”, the incentive structure actively favors the accumulation of shallow, pattern-matched architecture.

The result isn’t just bad code, it’s corrosive to the team’s architectural capacity. As junior engineers are handed AI-generated blueprints, they miss the formative experience of wrestling with trade-offs, of failing, of understanding why a pattern was chosen. Senior engineers spend their time not guiding and mentoring, but playing whack-a-mole with the accumulated technical debt from unreviewed AI-generated design choices.

The New Review Discipline: Theory Spot-Checking

If we can’t stop the tide of AI-assisted design, we must evolve our review practices. The old code review checklist is insufficient. We need a new discipline focused on theory spot-checking.

- Interrogate the Primitives: Run a simple `grep` for `: string`, `: Map<string, any>`, or `: any` in the core domain logic. Each instance is a candidate for a missing domain concept. Ask: “Is this actually a string, or is it a concept the model flattened?”

- Demand the “Why Not”: For every major architectural choice (e.g., “We will use Kafka for event streaming”), force the designer, human or AI-assisted, to articulate not just why it’s good, but what alternatives were considered and ruled out, and why. LLMs are terrible at this: they propose the statistically likely choice without understanding the trade-off space.

- Trace the Data, Not the Boxes: Don’t just review the component diagram. Pick a core entity (a “User”, an “Order”) and trace its lifecycle through the proposed system. Where is it validated? Where is it transformed? Where is its state persisted and retrieved? AI diagrams often create beautifully symmetric boxes that obscure nonsensical data flows.

- Apply the “5 Whys” to Dependencies: For every external service or library proposed, ask why it’s needed. The AI will often include a dependency because it’s popular in the training data for similar tasks, not because it’s necessary. This is a primary vector for bloat, and for the challenges of orchestrating large-scale AI agent architectures.

This shift turns the reviewer from a syntax checker into a theory auditor. Their job is to find the places where statistical likelihood has been substituted for domain reasoning.
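The primitive-interrogation step can be automated in a rough way. The sketch below is one possible heuristic, not a complete primitive-obsession detector: `findPrimitiveSmells`, `Finding`, and the pattern list are hypothetical names chosen for illustration, and the function works on source text passed in as a string rather than reading files.

```typescript
// Sketch only: findPrimitiveSmells, Finding, and SMELLS are hypothetical names.
// The patterns are a starting heuristic and will produce false positives.
type Finding = { readonly line: number; readonly pattern: string; readonly text: string };

const SMELLS: ReadonlyArray<{ readonly name: string; readonly regex: RegExp }> = [
  { name: "Map<string, any>", regex: /Map<\s*string\s*,\s*any\s*>/ },
  { name: ": any", regex: /:\s*any\b/ },
  { name: ": string", regex: /:\s*string\b/ },
];

// Reports each line of `source` that matches a pattern, at most once per pattern.
function findPrimitiveSmells(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split("\n").forEach((text, index) => {
    for (const { name, regex } of SMELLS) {
      if (regex.test(text)) {
        findings.push({ line: index + 1, pattern: name, text: text.trim() });
      }
    }
  });
  return findings;
}

// Example input, loosely based on the confirmOrder function shown earlier.
const sample = [
  "function confirmOrder(orderId: string, customerEmail: string, total: number) {",
  "  const cache: Map<string, any> = new Map();",
  "  const payload: any = load(orderId);",
  "}",
].join("\n");

for (const f of findPrimitiveSmells(sample)) {
  console.log(`${f.line}: [${f.pattern}] ${f.text}`);
}
```

Each hit is a question for the reviewer, not a verdict: some strings really are just strings.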

A Path Forward: Assisted, Not Autonomous, Design

The research points to a more sustainable path: LLMs as powerful assistants within a structured, human-guided process. The Kepler framework embodies this: use LLMs for what they’re good at (extracting facts from documentation, enumerating options) but ground the final synthesis in a formal, explainable reasoning system that encodes expert knowledge as constraints.

- Use AI for Exploration, Not Decision: Prompt for three different approaches to a problem, not the approach. Use the output as a starting point for a discussion of trade-offs.

- Encode Your Architectural Principles: Create lightweight, machine-readable “rules of thumb” for your organization (e.g., “Events must be idempotent”, “Service dependencies must be acyclic”). Use these as a filter for AI-generated proposals.

- Invest in Architectural Literacy Training: Teach engineers to recognize the smell of “primitive obsession” and the value of domain modeling. The skill of the next decade may be prompting for reasoning, not just for output.
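As an illustration of a machine-readable rule, here is a minimal sketch of the “service dependencies must be acyclic” check, applied to a proposed design expressed as a dependency map. `ServiceGraph`, `findDependencyCycle`, and the service names are assumptions made for this example; a real filter would need to extract the graph from whatever format the proposal arrives in.

```typescript
// Sketch only: ServiceGraph, findDependencyCycle, and the service names are
// hypothetical. Each key is a service; its array lists what it depends on.
type ServiceGraph = Readonly<Record<string, readonly string[]>>;

// Returns one dependency cycle as a path whose first and last entries match,
// or null when the graph is acyclic. Implemented as a depth-first search.
function findDependencyCycle(graph: ServiceGraph): string[] | null {
  const state = new Map<string, "visiting" | "done">();
  const path: string[] = [];

  const visit = (service: string): string[] | null => {
    if (state.get(service) === "done") return null;
    if (state.get(service) === "visiting") {
      // Back edge: the cycle starts where this service first appears in the path.
      return [...path.slice(path.indexOf(service)), service];
    }
    state.set(service, "visiting");
    path.push(service);
    for (const dependency of graph[service] ?? []) {
      const cycle = visit(dependency);
      if (cycle) return cycle;
    }
    path.pop();
    state.set(service, "done");
    return null;
  };

  for (const service of Object.keys(graph)) {
    const cycle = visit(service);
    if (cycle) return cycle;
  }
  return null;
}

// A proposed design in which notifications calls back into checkout.
const proposed: ServiceGraph = {
  checkout: ["payments", "inventory"],
  payments: ["notifications"],
  notifications: ["checkout"],
  inventory: [],
};

console.log(findDependencyCycle(proposed));
// [ 'checkout', 'payments', 'notifications', 'checkout' ]
```

A check like this rejects a proposal mechanically, but deciding which edge to cut is still a design conversation for humans.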

The warning from the arXiv paper is the perfect mantra: Assistants, Not Architects. We must resist the seductive allure of full automation. The goal isn’t to replace the architect with a black box that produces plausible diagrams. The goal is to arm the architect with a tool that can expand the frontier of considered options while keeping the crucial, theory-building human firmly in the loop.

The alternative is a landscape of systems that look perfect in the diagram but are brittle, incoherent, and impossibly expensive to change, the very definition of an architecture that has failed. We must develop strategies to combat this long-term architectural entropy before it consumes our capacity to innovate.

The risk isn’t that AI will design systems with bugs. The risk is that it will design systems that work perfectly, for a while, while quietly eroding our ability to understand or evolve them. That’s a debt no refactoring sprint can repay.