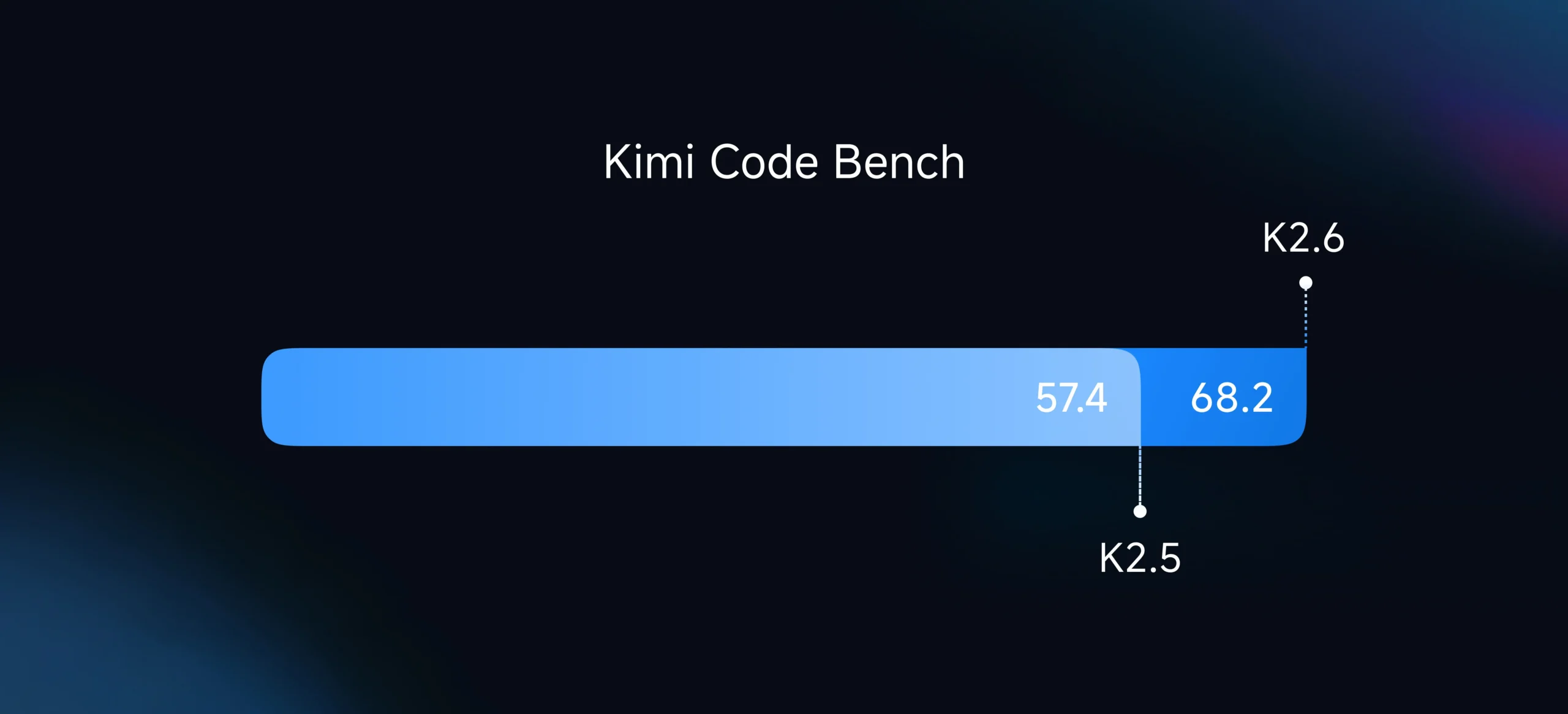

The announcement landed like a grenade in the water cooler Slack channels of every AI infrastructure team: Kimi K2.6 can now orchestrate 300 sub-agents executing across 4,000 coordinated steps simultaneously. Not theoretically. Not in a lab. In production, with benchmarks showing it outperforming GPT-5.4 on Humanity’s Last Exam (54.0 vs 52.1) and crushing previous iterations on long-horizon coding tasks.

If you’re still architecting your AI systems around the assumption that “intelligence” equals “API calls to OpenAI”, you’re not just behind, you’re burning money. The new generation of open-source models isn’t just catching up to cloud APIs, they’re forcing a fundamental rethink of how we structure compute, memory, and trust boundaries in modern stacks.

The Latency Wall Meets the Agent Swarm

Here’s the uncomfortable math that infrastructure engineers are starting to confront: cloud APIs are architecturally incapable of handling the coordination patterns that next-gen models enable. When Kimi K2.6 spawns 300 specialized agents to simultaneously analyze financial documents, generate code, and conduct deep research, each sub-agent might trigger dozens of tool calls. Over 4,000 steps, that’s potentially 120,000 API round-trips.

At 500ms latency per call (generous for complex reasoning tasks), you’re looking at 16 hours of wall-clock time just for network overhead. The model itself might process in minutes, but the architecture collapses under the weight of HTTP requests.

This isn’t a bug, it’s a physical constraint. The solution isn’t “faster internet”, it’s local inference. Running models like Qwen3.5-35B-A3B or GLM-4.7-Flash via llama.cpp on dedicated hardware eliminates the network hop entirely, bringing coordination latency down to microseconds instead of milliseconds.

The performance gains are stark. In internal benchmarks, Kimi K2.6 demonstrated a 185% throughput improvement on the exchange-core financial engine optimization task, completing in 13 hours what would have taken cloud APIs days just in network latency. When your agents need to iterate through 12 optimization strategies and 1,000+ tool calls, local execution isn’t a preference, it’s a requirement.

The Three-Layer Architecture: Beyond RAG

The community has been flailing around with RAG (Retrieval-Augmented Generation) for two years, treating it like a silver bullet for context management. But RAG is a band-aid on a broken paradigm, it retrieves, it doesn’t understand.

Enter the LLM Wiki architecture, a pattern inspired by Andrej Karpathy that treats knowledge as a persistent, evolving structure rather than a search index. The three-layer stack is deceptively simple:

- Raw Sources (immutable ingested documents)

- Wiki (LLM-generated, interlinked knowledge pages)

- Schema (structural rules and configuration)

Instead of retrieving chunks of text for every query, the system maintains a living knowledge graph. When Kimi K2.6 or Qwen3.6 processes a new document, it performs a two-step chain-of-thought ingest: first analyzing entities and contradictions, then generating structured wiki pages with full source traceability via YAML frontmatter.

This approach fundamentally changes your resource constraints for Qwen models. A 35B parameter model running locally with 24GB VRAM can maintain a 131,072-token context window, but more importantly, it can perform complex graph traversals and community detection (via Louvain algorithms) without shipping embeddings back and forth to a vendor’s GPU cluster.

The result? A knowledge system that scales with your hardware, not your API budget.

Heterogeneous Agent Swarms: The Death of the Monolithic Model

Perhaps the most radical shift is the abandonment of the “one model to rule them all” philosophy. The Hermes Agent multi-profile architecture demonstrates what modern stacks actually look like: specialized agents running different models based on task requirements.

Consider a production deployment:

– Dev Agent: Claude Sonnet 4.6 for complex coding tasks ($30-60/month)

– Ops Agent: GPT-4o Mini for monitoring and alerts ($5-15/month)

– Private Data Agent: Local Qwen3.6 or Gemma 4 via Ollama ($0, zero data egress)

Each profile maintains isolated memory, skills directories, and MCP (Model Context Protocol) server connections. When the dev agent needs infrastructure context, it doesn’t query a central “smart” model, it communicates with the ops agent via shared filesystem bridges or MCP server relays.

This heterogeneity requires formalizing LLM interactions in ways that make cloud architects nervous. You’re no longer dealing with a single API contract, you’re managing multiple inference endpoints, each with different token limits, tool capabilities, and failure modes. But the payoff is resilience: when your coding agent hallucinates a function name (and they all do), your fact-checking agent, running a smaller, specialized model, catches it before it hits production.

The Security Reckoning

If performance were the only driver, local models would be a nice-to-have optimization. But the security implications are forcing hands. According to Forbes research from early 2025, 44% of companies cited security and privacy risks as the primary barrier to adopting cloud LLMs. That’s not paranoia, that’s empirical response to security risks with uncensored models and the reality that every API call ships your data to someone else’s jurisdiction.

The motivations for local over cloud AI have shifted from tinfoil-hat privacy to regulatory necessity. When Kimi K2.6 can process sensitive financial data or proprietary codebases entirely on-device, maintaining a 5-day autonomous execution log without ever touching a network cable, suddenly the “cloud premium” looks like a liability, not a feature.

Building the New Stack: Practical Patterns

So what does this architecture actually look like in implementation? The awesome-llm-apps repository catalogs the emerging patterns:

Pattern 1: The Quantized Workhorse

Deploy Qwen3.5-35B-A3B using Unsloth’s Dynamic GGUFs with UD-Q4_K_XL quantization. Fits in 24GB VRAM, runs at ~193 tokens/sec on optimized Zig implementations, and maintains tool-calling accuracy above 96%.

Pattern 2: Context Window Management via KV Cache

Modern local inference requires sophisticated cache management. Using --cache-type-k q8_0 --cache-type-v q8_0 with llama-server reduces VRAM usage while preserving accuracy, critical when you’re mitigating prompt injection attacks by keeping the entire conversation context local and auditable.

Pattern 3: Agent Skill Persistence

Instead of few-shot prompting on every request, architectures now use persistent skill directories (Markdown files with structured examples) that local models load into context. This reduces token costs by 50-90% while improving consistency, your agent actually learns from previous sessions because the knowledge is stored in a local SQLite database, not evaporated after the API call closes.

Pattern 4: Reliability Guardrails

With great autonomy comes great responsibility. Local architectures require reliability guardrails for AI pipelines, automated linting of generated code, cross-validation between multiple local models, and circuit breakers when agent swarms diverge from expected behavior patterns.

The Hardware Reality

Let’s not sugarcoat it: this architecture demands hardware. The “run it on your laptop” dream dies hard when you’re coordinating 300 agents. You’ll need:

– 24GB+ VRAM for the primary reasoning models (RTX 4090 or Apple Silicon with unified memory)

– 64GB+ system RAM for context management and concurrent operations

– NVMe SSDs for model loading, spinning rust won’t cut it when you’re hot-swapping between Qwen3.6 and Kimi K2.6 based on task requirements

But compare that to the operational cost of 4,000 API calls with 128k context windows, and the CapEx starts looking like OpEx savings. Plus, with local model training infrastructure improving rapidly, you can fine-tune these open weights on your own data without shipping it to a third party.

Conclusion: The Post-Cloud AI Stack

The transition is already underway. When Blackbox.ai reports that Kimi K2.6 “sets a new bar for reliable coding” with “extended coding sessions with remarkable stability”, they’re not just reviewing a model, they’re describing a new architectural primitive.

The future stack isn’t “cloud with a local fallback.” It’s local-first, with cloud augmentation for specific, bounded tasks. It’s 300 agents running on a rack of GPUs in your basement, coordinating via MCP protocols, maintaining persistent knowledge graphs in LanceDB, and only reaching out to the internet when they need to verify a fact or pull a web clip.

Your API bill won’t thank you for ignoring this shift. But your latency metrics, your security audit, and your 3 AM pager duty will.