The allure of AI tooling is undeniable: generate a service, scaffold a database, document an API, all with a well-crafted prompt. The promise is a higher level of abstraction, a new layer atop decades of programming languages that lets us build faster by focusing on intent, not implementation. But what if this layer isn’t just an abstraction? What if it’s an obfuscation, a filter that fundamentally alters how we understand and, crucially, shape the systems we build?

We’re witnessing the first wave of architectural atrophy. Engineers who once traced execution flows through stack frames now struggle to write a for loop without a prompt. The cognitive scaffolding required for scalable design (understanding data lifecycles, failure domains, and state management) is quietly rotting away beneath the convenience of AI-generated code.

The Not-Abstraction: Why LLMs Are Unlike Any Programming Paradigm Shift

The common narrative, echoed even by seasoned developers, is that AI-assisted coding is simply the next logical step: from binary to assembly, to C, to Python, to prompting. It’s a seductive but deeply flawed analogy.

As argued in the Hackernoon article “Are LLMs a Higher Level of Abstraction? No, And Here’s Why,” traditional abstractions are deterministic functions:

f(x) -> y

LLMs, however, don’t produce a single value; they produce a probability distribution over possible values:

f(x) -> P(y) ∪ P(z1) ∪ P(z2) ∪ ... P(zN)

f(x) -> P( y | z1 | z2 | ... zN )

You prompt for a “TODO webapp” (y) but get y plus z1 (which exposes your credentials to the internet) and z2 (which configures a publicly writable FTP server). Your tests may pass because they only check for y, but you’ve inherited a host of unrequested, unseen architectural decisions: decisions you didn’t make and may not know exist.

This isn’t abstraction; it’s delegation to a stochastic black box. The architectural risk isn’t just what gets built, but the silent, probabilistic inclusion of the unexpected.
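A minimal sketch of that gap, in Python, may help make it concrete. Everything here is hypothetical: the `generated_config` dictionary stands in for whatever a generator might emit for a “TODO webapp”, and its keys are invented for illustration. The point is only that a test for y passes, while a comparison against the set of decisions a human actually reviewed surfaces z1 and z2.

```python
# Hypothetical output of a code generator asked for a "TODO webapp".
# All keys and values are invented for illustration.
generated_config = {
    "routes": ["/todos", "/todos/<id>"],                # y: what was requested
    "debug": True,                                      # z1: leaks internals in error pages
    "ftp": {"enabled": True, "anonymous_write": True},  # z2: nobody asked for this
}

# The decisions a human actually made and reviewed (an assumption of this sketch).
reviewed_keys = {"routes"}


def requested_behavior_present(config: dict) -> bool:
    """The kind of check a typical test makes: is y there?"""
    return "/todos" in config.get("routes", [])


def unrequested_settings(config: dict, allowed: set[str]) -> set[str]:
    """Every top-level decision the generator made on its own (the z1..zN)."""
    return set(config) - allowed


print(requested_behavior_present(generated_config))                    # True
print(sorted(unrequested_settings(generated_config, reviewed_keys)))   # ['debug', 'ftp']
```

The first check is what most test suites do; the second only works if someone has written down what was supposed to be there in the first place.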

From Crawler to Channel: The Erosion of the Developer’s Mental Model

The anxiety is palpable. One developer confesses: “I’ve been using AI entirely for a year or two. I’ve been entirely prompting and I haven’t written a single line of code. I have mostly forgotten how to code.” They’re now reteaching themselves to code by hand, fighting the atrophy of muscle memory.

This is the crux of the silent risk: the erosion of the mental model. Architecture isn’t just about connecting boxes on a whiteboard; it’s the deep, often subconscious understanding of how data moves, where bottlenecks hide, how failures cascade, and what trade-offs are being made. You can’t architect a system if you don’t understand, at some fundamental level, how to build it from scratch.

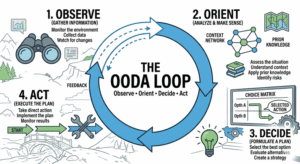

When your daily workflow shifts from writing and understanding code to curating prompts and evaluating AI outputs, you’re not moving up-stack. You’re moving off-stack. You become an orchestrator of a process you no longer directly control. The feedback loop between your architectural hypothesis (“a CQRS pattern might help here”) and its implementation (prompt -> generated C# classes) is now mediated by a model that optimizes for statistical likelihood, not architectural elegance or long-term maintainability.

This leads directly to a long-term erosion of code quality, in which AI-generated patterns and inconsistencies compound unseen.

“From Hexadecimal to Human Intent”: A False Summit

Another perspective champions AI as the “final abstraction layer”, shifting focus from code to human intent. As Steve Brown, an AI futurist, put it: “Instead of building a database… you’ll be building a digital employee… with basically a job spec.”

The vision is compelling: every department becomes a development team. The constraint is no longer technical skill but problem discovery. Yet, this vision assumes the “digital employee” is a competent, reliable agent. It ignores the architectural reality.

Who defines the “job spec” for an AI-generated service? Is it a human architect with a deep understanding of scale, or a marketing manager prompted by “make a viral feature”? When a “digital employee” autoscales a fleet of containers incorrectly because the prompt didn’t specify cost constraints, who bears the architectural fallout? Glib prompts often materialize as the common design anti-patterns that get overlooked in scaling efforts.

The move from assembly to C gave us more control, not less: it abstracted away tedious details so we could reason about more complex logic. The move from Python to prompting often gives us less control: it abstracts away essential details, leaving us unable to reason about the system’s emergent behavior.

The Architectural Blind Spots AI Tooling Creates

AI tooling excels at generating plausible solutions but is pathologically bad at contextually optimal ones. It creates specific architectural blind spots:

- The Homogenization of Architecture: Models trained on vast public codebases converge toward the statistically average solution. You’re less likely to get a novel, bespoke architecture optimized for your unique constraints and more likely to get a pastiche of the most common patterns: good enough for a demo, potentially disastrous at scale.

- The Debugging Void: When a prompt-generated microservice fails in production, can you reason through its logic? Or are you stuck in a loop of re-prompting and hoping? The skill of debugging (tracing a symptom to its root cause through layers of logic) atrophies just as you need it most.

- The Undiscovered Technical Debt: An AI doesn’t know your company’s five-year-old monolith, its quirky data schema, or its legacy authentication service. When it generates code, it doesn’t integrate; it creates a new, clean, isolated service. You now have two systems: the old “legacy” one everyone fears and the new “AI-generated” one nobody fully understands. This directly contributes to the operational visibility problems that tooling often fails to resolve.

Reclaiming the Architect’s Chair: Guardrails, Not Generators

The answer isn’t to abandon AI tooling. It’s to use it strategically, not habitually. The goal should be to augment cognition, not outsource it.

- Treat AI Output as a First Draft, Not a Final Product. Every generated module, class, or config file must be read, understood, and vetted by a human who could have written it themselves. This isn’t a rubber stamp; it’s an active engineering review.

- Invest in Prompt Engineering for Architectural Context. Your most valuable prompt isn’t “code a microservice.” It’s “Given our existing event-driven architecture on AWS, with our specific IAM roles and VPC configuration, generate a Lambda function skeleton that…” Inject your constraints, your patterns, your architectural decisions into the prompt.

- Mandate Generative-Free Zones. Core architectural components, foundational libraries, and critical data flows should be designed and written by humans. This preserves institutional knowledge and the cognitive map of the system’s heart.

- Shift from “Writing Code” to “Writing Specifications.” Use AI to generate detailed, testable specifications, API contracts, and architectural decision records (ADRs). Then have humans, assisted by AI, implement against those specs. This ensures the what is human-designed, even if the how is AI-assisted.

- Double Down on Observability and Testing. If you can’t perfectly predict the code’s internals, you must obsessively monitor its external behavior. Generate robust, AI-proof tests. Instrument everything. The brittleness of AI-generated code demands resilience practices, and those practices in turn require deep system knowledge.
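One way to picture the last two guardrails together is a human-written specification that any implementation, generated or not, has to satisfy on its external behavior. The Python sketch below is an illustration under stated assumptions: the `slugify` helper, the `SPEC_CASES` table, and both implementations are hypothetical, chosen only because they are small enough to read at a glance.

```python
from typing import Callable

# Human-written specification for a hypothetical slugify(title) helper.
# Each entry is (input, expected output). The cases are illustrative
# assumptions, not taken from any real project.
SPEC_CASES = [
    ("Hello World", "hello-world"),
    ("  Trim me  ", "trim-me"),
    ("Already-slugged", "already-slugged"),
]


def violations(impl: Callable[[str], str]) -> list[str]:
    """Run an implementation against the spec and describe every mismatch."""
    problems = []
    for given, expected in SPEC_CASES:
        actual = impl(given)
        if actual != expected:
            problems.append(f"{given!r}: expected {expected!r}, got {actual!r}")
    return problems


def generated_slugify(title: str) -> str:
    """Stand-in for a plausible generated draft that forgets to trim whitespace."""
    return title.lower().replace(" ", "-")


def reviewed_slugify(title: str) -> str:
    """A reviewed version: split() drops leading, trailing, and repeated spaces."""
    return "-".join(title.lower().split())


print(violations(generated_slugify))  # one mismatch, for the "  Trim me  " case
print(violations(reviewed_slugify))   # []
```

The spec table is the part a human owns. The implementation underneath it can be regenerated as often as needed, because the check looks only at what the code does, not at how it was produced.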

Conclusion: The Cognitive Tax of Convenience

AI-assisted development is charging a cognitive tax. The convenience fee is paid in architectural nuance, systemic understanding, and ultimately, resilience. It’s not that AI can’t help produce good architecture; it’s that it makes it dangerously easy to produce passable architecture while obscuring the deep understanding required to evolve and scale it.

The risk isn’t that AI will replace architects. The risk is that it will hollow them out, turning them into prompt jockeys who can describe a house but have forgotten how to lay a foundation. The future of sustainable software isn’t the abolition of low-level understanding through AI; it’s the preservation of that understanding, applied at a higher strategic level.

Our challenge is to build tools and processes that leverage AI’s speed while fiercely protecting the architect’s need to understand the terrain. Because the moment we delegate not just the implementation, but the understanding of the system, we’re no longer architects. We’re just tourists in our own codebase. And maintaining clear architectural conventions becomes nearly impossible when the lines of code themselves become opaque artifacts of a stochastic process.