The Microservices Maturity Trap: When Complexity Outweighs Benefits

Your phone buzzes at 3 AM. Not one alert, but fourteen. The payment service is down, but that’s just the symptom. The inventory service timed out, which triggered a retry storm, which saturated the message queue, which caused the notification service to OOM. You split your monolith into fourteen services to “increase resilience”, and now you have fourteen places for things to quietly die.

Welcome to the microservices maturity trap: the point where your architecture requires more operational sophistication than your organization can deliver, and you end up with all the distributed pain and none of the promised autonomy.

The Netflix Fallacy and Resume-Driven Development

The industry has spent a decade treating microservices as the default “modern” architecture, as if monoliths were some embarrassing relic to be migrated away from immediately. A common view in engineering forums is that much of this adoption comes from looking at billion-dollar companies like Amazon or Netflix and assuming their solutions fit your Series A reality.

But Netflix didn’t start with microservices. They arrived there after years of scale, with hundreds of platform engineers and custom internal tooling to manage the complexity. Adopting their architecture without their operational capacity gets you the cost without the benefit, which is why many practitioners warn early-stage companies against distributing their architecture prematurely.

The trap springs when teams confuse “independent deployment” with “independent operation.” You can deploy a service without deploying the monolith, sure. But can you debug it at 3 AM without waking up three other teams? Can you scale it without checking if the downstream services can handle the load? If not, you’ve built a distributed monolith, a system with all the network latency of microservices and none of the actual independence.

The Four Taxes You Didn’t Budget For

Moving from a monolith to microservices isn’t a refactor. It’s a shift from local function calls to distributed systems programming, and it comes with taxes that compound quietly until they dominate your engineering budget.

1. The Debugging Tax

In a monolith, a failed order produces one stack trace in one log file. In microservices, that same failure becomes an archaeological expedition across distributed traces, correlation IDs, and missing spans. The usual name for this gap is observability debt, and without heavy investment in Jaeger, Tempo, or similar distributed tracing infrastructure, you’re just guessing which hop failed.
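Correlation IDs are the cheapest piece of that infrastructure to start with. The following TypeScript sketch is a minimal, hypothetical illustration, not how Jaeger or Tempo work internally: the `x-correlation-id` header name, the in-process functions standing in for services, and the in-memory log array are all assumptions made to keep it self-contained. It shows the one rule that matters: mint an ID at the edge, forward it on every hop, and tag every log line with it.

```typescript
// Hypothetical sketch: three in-process functions stand in for services.
// Each one forwards the incoming headers so every log line carries the same ID.
type HeaderMap = Record<string, string>;

interface LogLine {
  service: string;
  correlationId: string;
  message: string;
}

const CORRELATION_HEADER = "x-correlation-id"; // assumed header name
const logs: LogLine[] = []; // stands in for a centralized log store

function logLine(service: string, headers: HeaderMap, message: string): void {
  logs.push({ service, correlationId: headers[CORRELATION_HEADER], message });
}

// Reuse the caller's correlation ID, or mint one at the edge of the system.
function withCorrelationId(headers: HeaderMap, newId: () => string): HeaderMap {
  if (headers[CORRELATION_HEADER]) return headers;
  return { ...headers, [CORRELATION_HEADER]: newId() };
}

function inventoryService(headers: HeaderMap): boolean {
  logLine("inventory", headers, "reserve stock: timeout");
  return false;
}

function paymentService(headers: HeaderMap): boolean {
  logLine("payments", headers, "charge card");
  return inventoryService(headers); // forward the same headers downstream
}

function gateway(incoming: HeaderMap, newId: () => string): boolean {
  const headers = withCorrelationId(incoming, newId);
  logLine("gateway", headers, "POST /orders");
  return paymentService(headers);
}

// Rebuild one request's path by filtering the shared log store on its ID.
function traceOf(correlationId: string): string[] {
  return logs
    .filter((line) => line.correlationId === correlationId)
    .map((line) => `${line.service}: ${line.message}`);
}

let counter = 0;
gateway({}, () => `req-${++counter}`);
console.log(traceOf("req-1"));
// three entries, in order: gateway → payments → inventory (the failing hop)
```

Production tracing built on OpenTelemetry propagates richer context (trace and span IDs in the W3C `traceparent` header), but the failure mode is the same: one service that forgets to forward the header breaks the chain.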

2. The Deployment Tax

Microservices promise independent deploys, but many teams end up with version pinning between services, “deploy order” runbooks, and breaking changes caused by drifting contracts. Independent deploys are real, but only if teams maintain contract discipline. Otherwise, cross-service coordination becomes its own form of technical debt, one that slows you down more than the monolith ever did.
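One way to keep that discipline is a consumer-driven contract check: each consumer records the fields it actually depends on, and the provider's build verifies its responses against every consumer's list before deploying. The sketch below is a hand-rolled illustration with hypothetical service and field names; in practice teams often reach for a dedicated tool such as Pact rather than writing this themselves.

```typescript
// Hypothetical consumer-side contract: the fields the Order Service reads
// from the Payment Service's response, with their expected runtime types.
type FieldType = "string" | "number" | "boolean";
type Contract = Record<string, FieldType>;

const paymentResponseContract: Contract = {
  paymentId: "string",
  amountCents: "number",
  captured: "boolean",
};

// Returns every violation; an empty array means the payload still honors the
// contract. Extra fields are ignored (a "tolerant reader"), so the provider
// can add fields without breaking this consumer.
function checkContract(contract: Contract, payload: Record<string, unknown>): string[] {
  const violations: string[] = [];
  for (const field of Object.keys(contract)) {
    const expected = contract[field];
    if (!(field in payload)) {
      violations.push(`missing field "${field}"`);
    } else if (typeof payload[field] !== expected) {
      violations.push(`field "${field}" is ${typeof payload[field]}, expected ${expected}`);
    }
  }
  return violations;
}

// The provider renamed amountCents to amount and added currency:
const drifted = { paymentId: "p_1", amount: 1999, captured: true, currency: "EUR" };
console.log(checkContract(paymentResponseContract, drifted));
// one violation: missing field "amountCents" (the extra "currency" is fine)
```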

3. The Data Consistency Tax

Remember when transactions were atomic? In microservices, “place order” isn’t one SQL transaction anymore. It’s a choreography of events, retries, idempotency keys, compensating actions, and partial failures. One SQL commit turns into five distributed steps, each of which can fail on its own.
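Idempotency keys are what make the retries in that choreography safe: the receiver remembers which requests it has already handled, so a redelivered message is answered instead of re-executed. A minimal TypeScript sketch, assuming a hypothetical `InventoryService` and caller-supplied keys such as the order ID:

```typescript
// Hypothetical inventory handler for a "reserve stock" message that may be
// delivered more than once (client retries, at-least-once queues).
interface ReserveResult {
  reserved: boolean;
  remaining: number;
}

class InventoryService {
  private stock: Map<number, number>;
  // Results already produced, keyed by idempotency key. In production this
  // would be a durable table with a unique constraint, not an in-memory Map.
  private processed = new Map<string, ReserveResult>();

  constructor(initialStock: Array<[number, number]>) {
    this.stock = new Map(initialStock);
  }

  reserve(idempotencyKey: string, productId: number): ReserveResult {
    const previous = this.processed.get(idempotencyKey);
    if (previous) return previous; // duplicate delivery: replay the first answer

    const current = this.stock.get(productId) ?? 0;
    const result: ReserveResult =
      current > 0
        ? { reserved: true, remaining: current - 1 }
        : { reserved: false, remaining: current };
    if (result.reserved) this.stock.set(productId, result.remaining);

    this.processed.set(idempotencyKey, result);
    return result;
  }
}

const inventory = new InventoryService([[42, 5]]);
inventory.reserve("order-101", 42); // { reserved: true, remaining: 4 }
inventory.reserve("order-101", 42); // retried message: still remaining 4
inventory.reserve("order-102", 42); // a different order: remaining 3
```

In a real system the lookup and the record must happen atomically (for example, inserting the key under a unique constraint in the same transaction as the stock update); otherwise two concurrent deliveries can both pass the lookup.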

4. The Performance Tax

In-process function calls happen in nanoseconds. Network calls happen in milliseconds and carry the overhead of serialization, deserialization, network hops, and potential retries. A request passing through five services accumulates latency at every hop, latency that users feel in checkout, search, and login screens.
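The compounding is easy to underestimate, so here is a back-of-envelope model rather than a benchmark. The 4 ms per hop and the 1% slow-tail rate are hypothetical numbers, and the second function assumes hops turn slow independently of one another, which real systems rarely guarantee.

```typescript
// Back-of-envelope latency model; every number here is a hypothetical input.

// Synchronous hops add up: each service waits for the next one to answer.
function chainLatencyMs(perHopMs: number[]): number {
  return perHopMs.reduce((total, ms) => total + ms, 0);
}

// If each hop independently lands in its slow tail with probability pSlow,
// a request crossing `hops` services sees at least one slow hop with
// probability 1 - (1 - pSlow)^hops.
function probAtLeastOneSlowHop(pSlow: number, hops: number): number {
  return 1 - Math.pow(1 - pSlow, hops);
}

// Five services at an assumed 4 ms each (serialization, network, handler):
console.log(chainLatencyMs([4, 4, 4, 4, 4])); // 20

// A 1% slow tail per hop becomes roughly a 4.9% slow tail per request.
console.log(probAtLeastOneSlowHop(0.01, 5).toFixed(3)); // 0.049
```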

Data Consistency: Monolith vs Microservices

```sql
-- Monolith. Atomic. Safe. Boring.
BEGIN;
UPDATE inventory SET stock = stock - 1 WHERE product_id = 42;
INSERT INTO orders (user_id, product_id) VALUES (101, 42);
COMMIT;
```

```
// Microservices. The same operation as a Saga:
// Step 1: Order Service creates order, status=PENDING
// Step 2: Publish OrderCreated
// Step 3: Inventory Service reserves stock
// Step 4: Publish StockReserved
// Step 5: Order Service updates status=CONFIRMED

// Step 3 fails?
// → Publish StockFailed
// → Order Service cancels order
// → Hope the compensating transaction doesn't get lost
// → Hope the queue doesn't drop the message
// → Good luck debugging the Saga at 2 AM
```

You will debug a broken Saga. Not maybe. When.
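To make the failure path concrete, here is a minimal, hypothetical Saga in TypeScript. Note the swap: the example above is choreographed (services react to each other's events), while this sketch uses orchestration, where one coordinator runs the steps and their compensations, because that keeps the control flow visible in a few lines. The steps are synchronous stand-ins for what would be network calls or published events.

```typescript
// Hypothetical orchestration-style Saga, kept synchronous for clarity.
interface SagaStep {
  name: string;
  action: () => void; // in production: a network call or published event
  compensate: () => void; // semantic undo, e.g. cancel the order
}

// Runs steps in order; on the first failure, compensates the steps that
// already succeeded, newest first. Returns true only if every step succeeded.
function runSaga(steps: SagaStep[], sagaLog: string[]): boolean {
  const completed: SagaStep[] = [];
  for (const step of steps) {
    try {
      step.action();
    } catch (err) {
      sagaLog.push(`failed: ${step.name} (${(err as Error).message})`);
      // A real orchestrator must persist this state and retry compensations:
      // a compensation that gets lost leaves the data inconsistent.
      for (const done of [...completed].reverse()) {
        done.compensate();
        sagaLog.push(`compensated: ${done.name}`);
      }
      return false;
    }
    completed.push(step);
    sagaLog.push(`done: ${step.name}`);
  }
  return true;
}

// Step 3 from the example fails, so the PENDING order gets cancelled.
let orderStatus = "NONE";
const sagaLog: string[] = [];
const succeeded = runSaga(
  [
    {
      name: "create order",
      action: () => { orderStatus = "PENDING"; },
      compensate: () => { orderStatus = "CANCELLED"; },
    },
    {
      name: "reserve stock",
      action: () => { throw new Error("out of stock"); },
      compensate: () => {},
    },
    {
      name: "confirm order",
      action: () => { orderStatus = "CONFIRMED"; },
      compensate: () => {},
    },
  ],
  sagaLog,
);
// succeeded === false, orderStatus === "CANCELLED"
```

Everything the sketch keeps in local variables (which steps completed, which compensations ran) is exactly the state a production orchestrator has to persist, or it is lost the moment the process restarts mid-Saga.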

When the Giants Retreat

The most damning evidence against premature microservices adoption comes from the companies that helped popularize them. Amazon’s Prime Video team moved their video monitoring system from distributed microservices to a monolith and cut infrastructure costs by over 90%. Twilio Segment consolidated 140+ microservices into a single application after realizing their engineering team was spending more time managing service boundaries than shipping features.

These aren’t legacy enterprises clinging to outdated tech. These are well-resourced companies with strong engineering cultures concluding that their distributed architecture was costing more than it delivered. Case studies of large platforms moving away from strict microservices tend to show the same pattern: consolidation beats distribution when the operational model can’t support the complexity.

Shopify still runs one of the largest Ruby on Rails monoliths in production, serving millions of merchants. Rather than breaking it into microservices, they invested in a modular monolith with strict internal boundaries, enforced with a tool called Packwerk. Basecamp has operated as a monolith for over two decades; its CTO coined the term “The Majestic Monolith” and later wrote about how to recover from premature microservices adoption.

The Modular Monolith: The Third Option

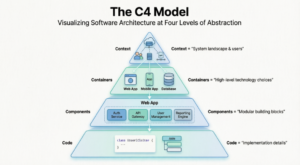

The false choice most teams face is “big ball of mud” or “30 microservices.” There’s a third option that keeps deployment simple while maintaining clean boundaries: the modular monolith.

A modular monolith is one deployable unit with strict internal modules that communicate through explicit interfaces, not HTTP. Each module owns its own data access logic and exposes functionality through internal APIs rather than direct function calls into another module’s internals.
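In TypeScript, one way to get that shape is a module factory that returns only its contract and keeps helpers and data access private. The sketch below is hypothetical: `PaymentService` reuses the contract name from this section, but its methods, the 20% tax rate, and the `Map` standing in for the module's tables are assumptions.

```typescript
// Hypothetical "payments" module inside a monolith. Only the PaymentService
// contract escapes; the tax helper and the charges store stay private.
interface PaymentService {
  charge(orderId: string, amountCents: number): number; // returns total incl. tax
  totalChargedFor(orderId: string): number;
}

function createPaymentsModule(taxRate: number): PaymentService {
  // Private to the module: no other module can reach these.
  const charges = new Map<string, number>(); // stands in for the module's own tables
  const calculateTax = (amountCents: number) => Math.round(amountCents * taxRate);

  return {
    charge(orderId, amountCents) {
      const total = amountCents + calculateTax(amountCents);
      charges.set(orderId, (charges.get(orderId) ?? 0) + total);
      return total;
    },
    totalChargedFor(orderId) {
      return charges.get(orderId) ?? 0;
    },
  };
}

// The orders module depends only on the contract, injected at startup.
function placeOrder(payments: PaymentService, orderId: string, amountCents: number): number {
  return payments.charge(orderId, amountCents);
}

const payments = createPaymentsModule(0.2); // hypothetical 20% tax rate
placeOrder(payments, "order-1", 1000); // 1200
```

Because the orders code only ever sees the interface, extracting payments into its own service later means swapping the factory for an HTTP client that implements the same contract.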

```typescript
// This is fine - explicit contract
import { PaymentService } from '@modules/payments';

// This is how you end up with spaghetti
import { calculateTax } from '@modules/payments/utils/tax-calculator';
```

Hard rules: separate assemblies (or packages) per module, expose only module contracts, prevent forbidden references with architecture tests, and isolate database boundaries by schema even when modules share one database. When you eventually need to extract a module into a standalone service, the boundary is already there, and the extraction becomes boring work instead of archaeology.
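An architecture test for the import rule can be as small as the following sketch. It is a hypothetical, regex-based check (a real one would use a parser or an existing tool such as ESLint's `no-restricted-imports` rule or dependency-cruiser): any import of `@modules/<name>/...` deeper than the module's entry point, from outside that module, counts as a violation.

```typescript
// Hypothetical architecture test for the modular-monolith import rule.
interface Violation {
  file: string;
  specifier: string;
}

// Captures the module specifier of static `import ... from '...'` and
// `export ... from '...'` statements. A simplification: a parser is sturdier.
const IMPORT_PATTERN = /(?:import|export)\s[^'"]*?from\s*['"]([^'"]+)['"]/;

function findForbiddenImports(file: string, source: string, ownModule: string): Violation[] {
  const violations: Violation[] = [];
  const re = new RegExp(IMPORT_PATTERN.source, "g"); // fresh lastIndex per call
  let match: RegExpExecArray | null;
  while ((match = re.exec(source)) !== null) {
    const specifier = match[1];
    // "@modules/payments/utils/x" → ["@modules", "payments", "utils", "x"]
    const parts = specifier.split("/");
    const isModuleAlias = parts[0] === "@modules";
    const reachesPastEntryPoint = parts.length > 2;
    const isOwnModule = parts[1] === ownModule;
    if (isModuleAlias && reachesPastEntryPoint && !isOwnModule) {
      violations.push({ file, specifier });
    }
  }
  return violations;
}

const checkoutSource = `
import { PaymentService } from '@modules/payments';
import { calculateTax } from '@modules/payments/utils/tax-calculator';
`;

const found = findForbiddenImports("modules/orders/checkout.ts", checkoutSource, "orders");
console.log(found);
// one violation, for '@modules/payments/utils/tax-calculator';
// in CI, the test would fail whenever found.length > 0
```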

The modular monolith is the pragmatic step before splitting services: it keeps the option open to extract services later, when the evidence is real, not when the conference schedule demands it.

The Maturity Checklist: When Microservices Actually Make Sense

Microservices solve coordination problems at scale. If your team isn’t experiencing those coordination problems, the architecture adds cost without delivering benefits. Before splitting, ask these four questions honestly:

- Can this deploy without coordinating with other teams? NO → don’t split.
- Does it have a genuinely different scaling profile? NO → reconsider. (If two components have the same resource needs, splitting them is just overhead. A login endpoint and a video transcoder, by contrast, really do scale differently.)
- Do you have distributed tracing and centralized logs running? NO → build that first. Without observability, you’re flying blind.
- Does your team have the bandwidth to own this boundary end-to-end? NO → wait. Platform engineers typically cost $140k-$180k a year, and you may need two to four of them before microservices become manageable.
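The checklist is mechanical enough to write down as code, which can be a useful forcing function in a design review. A hypothetical sketch: the field names and advice strings are illustrative, and the cost helper simply multiplies out the ranges quoted in the last question.

```typescript
// Hypothetical encoding of the four checklist questions.
interface SplitAnswers {
  deploysWithoutCoordination: boolean;
  distinctScalingProfile: boolean;
  tracingAndCentralLogs: boolean;
  teamCanOwnBoundary: boolean;
}

type Verdict = { split: true } | { split: false; advice: string };

// Checked in the checklist's order; the first "no" decides the outcome.
function reviewSplit(a: SplitAnswers): Verdict {
  if (!a.deploysWithoutCoordination) return { split: false, advice: "don't split" };
  if (!a.distinctScalingProfile) return { split: false, advice: "reconsider" };
  if (!a.tracingAndCentralLogs) return { split: false, advice: "build observability first" };
  if (!a.teamCanOwnBoundary) return { split: false, advice: "wait" };
  return { split: true };
}

// Annual platform-team cost implied by the last question:
// 2-4 engineers at $140k-$180k each.
function platformCostRangeUsd(): [number, number] {
  const engineers = { min: 2, max: 4 };
  const salaryUsd = { min: 140_000, max: 180_000 };
  return [engineers.min * salaryUsd.min, engineers.max * salaryUsd.max];
}

console.log(
  reviewSplit({
    deploysWithoutCoordination: true,
    distinctScalingProfile: true,
    tracingAndCentralLogs: false,
    teamCanOwnBoundary: true,
  }),
); // → split: false, advice "build observability first"
console.log(platformCostRangeUsd()); // → 280000 and 720000
```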

The 150-engineer threshold is often cited as the point where Conway’s Law wins, where your architecture must reflect your org chart because synchronized releases become untenable. Below that, you’re likely borrowing solutions to problems you don’t have yet.

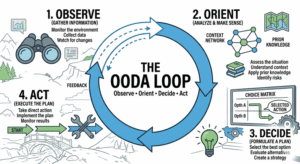

Migration Without the Big Bang

If you’re already trapped in microservices hell, don’t rewrite. Use the Strangler Fig pattern: route specific functionality to a new service (or back to a consolidated monolith) while the old system continues handling everything else. Over time, the new architecture takes over more functionality until the old one is either fully replaced or reduced to a small core.
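At its core the Strangler Fig is a routing decision made per request, usually in a reverse proxy or API gateway. A hypothetical TypeScript sketch of that decision; the prefixes and the two-target model are assumptions:

```typescript
// Hypothetical Strangler Fig routing table: paths under a migrated prefix go
// to the new target; everything else stays on the legacy system.
type RouteTarget = "legacy" | "new";

const migratedPrefixes: string[] = ["/invoices", "/reports/export"];

function routeFor(path: string): RouteTarget {
  // Match whole path segments so "/invoicesummary" is not caught by "/invoices".
  const migrated = migratedPrefixes.some(
    (prefix) => path === prefix || path.startsWith(prefix + "/"),
  );
  return migrated ? "new" : "legacy";
}

routeFor("/invoices/123"); // "new"
routeFor("/invoicesummary"); // "legacy"
routeFor("/orders/9"); // "legacy"
```

Migrating the next piece of functionality means adding a prefix to the list, and rolling back means removing it, which is what keeps the pattern incremental instead of a big bang.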

The same logic applies to architectural decay that standard performance metrics hide: if your CPU is green but your developers are spending 40% of their time on cross-service coordination, your architecture is already dying.

Architecture Is a Business Decision, Not an Engineering Ego Trip

The best architecture is the one your team can build, operate, and evolve at your current stage without borrowing operational maturity from the future. A monolith built with discipline and clear internal boundaries will outperform a microservices architecture that the team cannot effectively operate.

Stop treating microservices as the default. Start with the simplest architecture that meets your current needs. Build it well. Add complexity when specific, measurable business requirements demand it, not when a blog post about Netflix makes it sound appealing.

If you’re spending more time managing deployments than shipping features, or more time tracing requests than fixing issues, you’re probably paying the microservices tax without getting the benefits. In 2026, the quiet trend isn’t anti-microservices; it’s pro-sanity. Choose the shape that matches your reality, and keep the option open to extract services later, when the pain is real and the maturity is present.

And if you’re evaluating your architecture or planning a migration, remember: operational tooling overhead that gets mistaken for necessary maturity is usually a sign you’re solving the wrong problem. Fix your module boundaries before you fix your network topology.