Event-driven architecture sells you a dream: services that blissfully ignore each other, communicating through pure, asynchronous events. Your systems become loosely coupled, infinitely scalable, and magically resilient. It’s the architectural equivalent of a timeshare presentation, until you’re the team that has to manage 1,000 downstream consumers processing trillions of messages daily. Then the maintenance fees come due.

Uber just open-sourced the receipts. Their Uforwarder project isn’t just another Kafka tool, it’s a confession. A recognition that the consumer side of event-driven systems becomes a nightmare of partition management, language inconsistencies, and operational overhead that makes your original monolith look like a weekend project. And if Uber’s massive engineering team struggled with this, your 12-person squad doesn’t stand a chance without understanding the trap.

The Four Horsemen of Consumer Management Hell

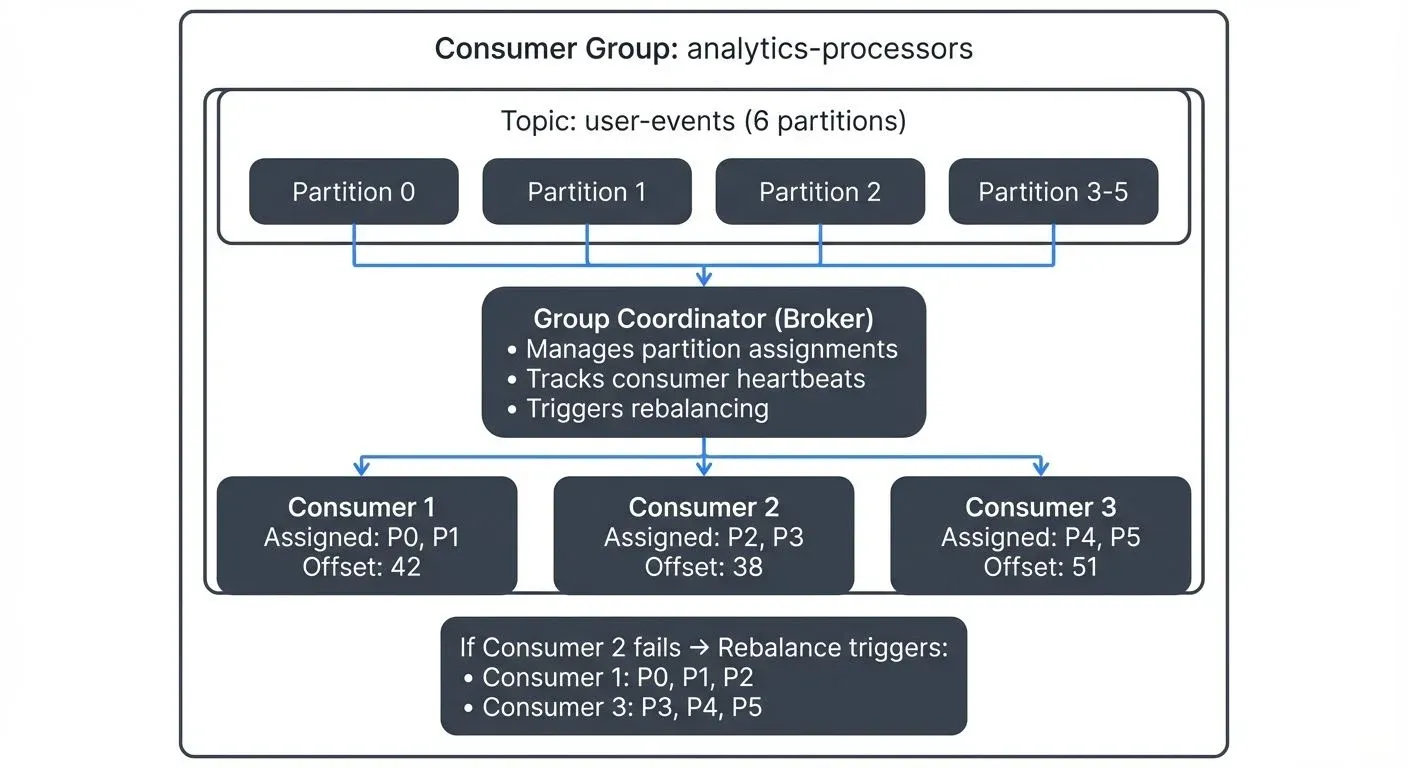

Uber’s previous internal consumer proxy revealed four specific failures that standard Kafka consumer groups couldn’t solve at scale. These aren’t edge cases, they’re inevitable consequences of success.

Head-of-line blocking sounds academic until a single oversized payload or dead service instance stalls an entire partition. Your sequential processing guarantee becomes a weapon against your own throughput. One bad message creates a traffic jam that backs up thousands of legitimate events behind it.

Resource inefficiency hits when you’re running thousands of proxy servers with wildly uneven consumption patterns. Some servers sit idle while others drown. Your infrastructure bill becomes a random number generator, and scaling challenges in distributed job scheduling compound the problem, adding more servers doesn’t help when the work distribution is fundamentally broken.

Bespoke delay semantics mean every team implements their own retry logic, backoff strategies, and poison pill handling. Some use exponential backoff, others linear. Some retry 3 times, others 300. The result? A fragmented operational landscape where debugging requires tribal knowledge of 47 different failure patterns. This is where quantifying architectural trade-offs in distributed systems becomes critical, what looks like “team autonomy” is actually technical debt with a fancy name.

Workload isolation becomes a nightmare. Separating production from staging or routing events by region requires either topic proliferation (hello, 10,000 topics nobody can track) or complex load-balancing gymnastics that make your infrastructure team question their career choices.

Inside Uforwarder: The Proxy That Changes Everything

Uforwarder doesn’t incrementally improve consumer groups, it fundamentally rearchitects the relationship between Kafka and services. Instead of direct consumer clients, it inserts a gRPC-driven push interface that acts as a shock absorber between your streaming platform and your microservices.

The architecture is deceptively simple: a centralized proxy layer handles the messy business of partition assignment, offset management, and failure handling, then pushes messages to consumers via gRPC. But the devil is in the implementation details.

Context-Aware Routing: Infrastructure-Level Smarts

Instead of consumers receiving every message and filtering at the application layer, Uforwarder uses Kafka message headers to propagate routing metadata. Load balancers then deliver events only to matching consumer instances based on region, tenant, or environment. It’s the difference between mailing every resident in a city a letter and having the postal service sort by ZIP code, one creates unnecessary work, the other operates at the right abstraction level.

This approach solves the workload isolation problem without topic proliferation. Your staging environment doesn’t need a separate topic, it just needs a routing header that the proxy respects. This is particularly powerful when defining service boundaries in event-driven systems, you can evolve your domain model without rearchitecting your entire streaming infrastructure.

Out-of-Order Commit Tracker: Defeating Head-of-Line Blocking

The out-of-order commit tracker is Uforwarder’s secret weapon. It monitors commit progress independently and detects stuck offsets based on configured thresholds. When a message fails delivery, it gets redirected to a dead letter queue while the commit pointer advances. The partition keeps moving.

This is a radical departure from Kafka’s traditional sequential processing guarantee. It’s also a necessary one. At Uber’s scale, waiting for every message to succeed means waiting forever. The tracker transforms the failure model from “stop everything” to “isolate and continue”, which is exactly what you need when processing multiple petabytes daily.

Consumer Auto Rebalancer: Dynamic Load Distribution

The auto rebalancer continuously evaluates CPU usage, memory pressure, and throughput across worker instances, redistributing partitions in real-time. It scales up quickly during traffic spikes and scales down gradually to prevent instability. This addresses the resource inefficiency problem by making consumption elastic at the partition level, not just the instance level.

For teams struggling with trade-offs between code reuse and operational independence in pipelines, this centralized approach offers a compelling middle ground: shared infrastructure intelligence with application-level autonomy.

DelayProcessManager: Surgical Backpressure

Instead of halting an entire consumer when dependencies are unavailable, DelayProcessManager enables partition-level pause and resume. Only blocked partitions buffer, others continue processing. This preserves throughput while simplifying delay handling within services, no more every-team-implements-their-own-retry-library chaos.

The Hidden Complexity Manifest: This Isn’t Just Uber’s Problem

Here’s the uncomfortable truth: these problems exist at every scale, but they’re hidden until you hit a certain velocity. Your five-consumer system works fine because the failure modes don’t have enough volume to manifest. But the complexity is already there, lurking in your bespoke retry logic and manual partition assignment scripts.

The event-driven architecture community has been guilty of selective storytelling. We celebrate the loose coupling but downplay the operational coupling that emerges in consumer management. Every consumer service becomes tethered to Kafka’s client library versions, partition assignment strategies, and rebalance protocols. Your “independent” services are secretly more coupled than they were in your monolith, just in ways that don’t show up in architecture diagrams.

This is why managing legacy system boundaries during incremental modernization becomes critical. The strangler fig pattern fails without an anti-corruption layer because the old system and new system develop hidden dependencies through shared event schemas and consumer expectations. Uforwarder is, in many ways, the ultimate anti-corruption layer for Kafka-based systems.

The Controversy: Elegant Solution or Complexity Layer Cake?

The architectural community is split. Critics argue that Uforwarder solves problems Kafka should have solved natively, why add another network hop and operational component when the underlying platform should be more robust? They see it as evidence of Kafka’s limitations rather than a triumph of engineering.

Proponents counter that distributed systems always require operational layers at scale. You don’t run raw TCP for HTTP, you use a reverse proxy. Uforwarder is the reverse proxy for event streaming, providing the observability, routing, and failure isolation that any mature platform needs.

The reality is messier. Uforwarder absolutely adds complexity, another component to monitor, scale, and debug. But it replaces a thousand different complexity implementations with one standardized, observable system. For Uber, that trade-off is obvious. For a startup with three consumers, it’s overkill.

Lessons for Teams Not Operating at Uber Scale

You probably don’t have 1,000 consumer services. But you likely have:

- Inconsistent consumer implementations across teams

- Manual offset management that’s error-prone

- Bespoke retry logic that nobody documents

- Partition rebalancing that causes incidents

- Consumer lag monitoring that’s an afterthought

The lessons from Uforwarder apply even at smaller scales:

-

Centralize offset management logic. Don’t let every team implement their own. Whether you use Uforwarder or a shared library, consistency is more important than perfection.

-

Implement out-of-order commit tracking for poison pills. One bad message shouldn’t stall your entire pipeline. The pattern of detecting stuck offsets and moving them to a DLQ is universally valuable.

-

Use context-aware routing from day one. Add headers for environment, tenant, and region even if you don’t need them yet. Retrofitting this is painful.

-

Monitor consumer lag as a first-class metric. Everything else, throughput, latency, resource usage, means nothing if your consumers can’t keep up. The data migration and system integration complexity posts show how ignoring lag creates cascading failures.

-

Question the “just add more consumers” mantra. As the distributed scheduler’s dilemma demonstrates, scaling consumers without understanding partition distribution and resource constraints creates a death spiral.

When to Use Uforwarder (and When to Run Away)

Use Uforwarder if:

– You have more than 20 consumer services and growing

– Consumer lag is a recurring production issue

– Teams are duplicating retry and offset logic

– You need multi-tenant or multi-region isolation

– Your infrastructure team can support another critical component

Don’t use Uforwarder if:

– You have fewer than 10 consumers (overkill)

– Your message volume is under 1M/day (complexity tax too high)

– You can’t dedicate engineering time to operational tooling

– Your team is still mastering basic Kafka concepts (fix fundamentals first)

The decision isn’t about scale, it’s about operational maturity. Uforwarder solves organizational complexity more than technical complexity. If your teams can’t agree on consumer patterns, the proxy enforces consistency. If they can, you might not need it.

The Bottom Line: Event-Driven Isn’t Wrong, But It’s Not Free

Uber’s Uforwarder isn’t an indictment of event-driven architecture, it’s a necessary evolution. The pattern works, but the implementation details matter more than the architecture diagrams suggest. Loose coupling at the service level requires tight coupling at the operational level, and someone has to pay that tax.

The real controversy isn’t whether Uforwarder is good or bad. It’s that we’ve been selling event-driven architectures without disclosing the full price tag. The next time someone draws three boxes with arrows and declares “loose coupling”, ask them which consumer proxy they’re using. If they look confused, send them Uber’s way.

Event-driven microservices aren’t a lie. But they’re not the whole truth either. Uforwarder is what happens when the bill comes due, and it’s a bill every team running Kafka at scale will eventually have to pay, one way or another.