This is where aggregate design stops being academic and starts feeling like a hostage negotiation between your domain model and your database.

The Transactional Boundary Fallacy

The first mistake most developers make is conflating composition with transactional boundaries. Yes, a branch conceptually belongs to a restaurant. No, that doesn’t automatically mean they should share an aggregate root. Aggregates are about transactional boundaries, not relationships?

The distinction matters. When you need to enforce “a restaurant can’t have more than 50 branches”, your brain screams “same aggregate!” But that domain invariant might be better handled as an eventual consistency rule enforced by a domain service or a database constraint. The real question isn’t about ownership, it’s about what needs to be consistent right now versus what can be consistent soon enough.

One commenter pointed out the write operation reality check: you only need to fully load aggregates on write operations, which constitute less than 10% (often less than 1%) of total operations. The math is sobering. If you’re loading a 1MB aggregate for a simple name change, you’re not protecting domain integrity, you’re committing performance seppuku. Around 1MB of data is the practical ceiling before you need to start questioning your life choices.

The 50-Branch Rule: Concurrency Makes Cowards of Us All

The “max 50 branches” rule exposes another uncomfortable truth: concurrency changes everything. If you have multiple admins creating branches simultaneously, optimistic locking and database constraints become non-negotiable. The aggregate boundary that looks clean in a single-user scenario turns into a contention nightmare under parallel access.

This is where real-world concurrency issues that affect aggregate design and transactional integrity rear their ugly head. Your elegant domain model doesn’t account for two admins racing to create the 50th branch. Suddenly, that beautiful aggregate boundary needs versioning, conflict resolution, and retry logic. The purity you sought becomes a distributed systems problem you didn’t sign up for.

The pragmatic approach? Use a database constraint for the count check and handle the violation as a domain exception. Yes, it’s “leaking” infrastructure concerns into your domain. No, you shouldn’t care when the alternative is a 3-second aggregate load time and lock contention that brings your system to its knees.

Performance-Based Splitting: The Technical Excuse That Works

Here’s the controversial bit: sometimes you split aggregates for purely technical reasons, and that’s okay. The DDD purists will clutch their pearls, but practitioners who’ve stared down production performance metrics know the truth.

If your restaurant aggregate is pulling 2MB of branch data for a simple status change, you don’t have a modeling problem, you have a performance emergency. Splitting Branch into its own aggregate and referencing it via typed IDs isn’t heresy, it’s engineering. The key is acknowledging the trade-off: you’re trading immediate consistency for performance and scalability.

This is where inter-module communication challenges similar to aggregate boundary decisions in DDD become instructive. The same patterns you use to decouple modules, anti-corruption layers, published languages, apply directly to aggregate design. Your aggregate boundary is just a smaller-scale bounded context.

The Bounded Context Escape Hatch

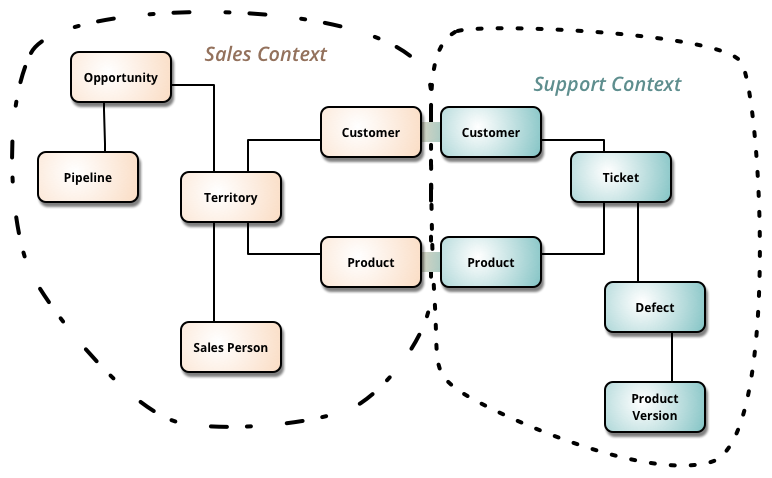

The most sophisticated solution to the restaurant-branch dilemma isn’t about aggregates at all, it’s about bounded contexts. You might have a “Restaurant Management” context where Restaurant is the aggregate root with lightweight branch references (just IDs and locations), and a separate “Branch Operations” context where Branch is its own aggregate root with menus, staff, and orders.

This approach acknowledges a fundamental reality: the concept of “branch” means different things in different contexts. In restaurant management, it’s a strategic asset count. In branch operations, it’s an operational hub. Forcing a single aggregate model to serve both contexts creates the performance nightmare you’re trying to avoid.

The context map becomes your friend here. Define the relationship between your contexts, customer-supplier, anti-corruption layer, or published language, and let each context optimize its own aggregate boundaries. The Restaurant context enforces the 50-branch rule via eventual consistency and domain events. The Branch context manages operational complexity independently.

Eventual Consistency: The Scapegoat and the Savior

Eventual consistency gets a bad rap from developers who’ve been burned by poorly implemented async systems. But for cross-aggregate rules, it’s often the only sane choice. When you create a new branch, publish a BranchCreated event. The Restaurant aggregate subscribes and validates the count. If you’re over 50, it publishes a BranchLimitExceeded event and triggers a compensating action.

This is where how event sourcing supports consistency across aggregates through immutable events becomes relevant. Event sourcing isn’t just an audit trail, it’s a mechanism for enforcing distributed invariants without crushing performance. The event store becomes your source of truth for cross-aggregate rules, and the aggregates themselves stay lean and focused.

The trade-off is complexity. You need message infrastructure, idempotency, and error handling. But that complexity is explicit and manageable. The alternative, hidden complexity in your ORM’s eager loading and lock management, is implicit and murderous.

The 1MB Rule and Other Pragmatic Heuristics

Let’s talk numbers. The 1MB aggregate size heuristic isn’t arbitrary, it’s born from production pain. Loading more than 1MB per write operation means you’re either modeling incorrectly or your domain is genuinely complex enough to warrant distributed systems patterns. In the latter case, pretending a single aggregate will save you is architectural malpractice.

Do the back-of-envelope calculation. If your average branch entity is 20KB (location, hours, minimal data), 50 branches puts you at exactly 1MB. Add menus, employees, and orders, and you’re way over. The math forces the question: are you building a system for a theoretical restaurant chain with 3 branches, or for a real one with 500?

Operational metrics that reveal performance bottlenecks in aggregate-heavy systems tell the story your CPU graphs won’t. Look at aggregate load times, lock wait durations, and transaction retry rates. When those numbers climb, your aggregate boundaries are too large, regardless of what the domain experts say.

The Unlearning Curve

“Learn it, then unlearn it, then learn it again.” This isn’t DDD heresy, it’s maturity. The first pass is about purity and rules. The second pass is about pragmatism and exceptions. The third pass is about judgment.

That judgment means recognizing when domain invariants are actually just business policies that can change. The “50 branch limit” might be a pricing tier constraint, not a fundamental domain rule. If it changes to 100 next quarter, does your aggregate boundary change? If the answer is yes, you’re modeling policy, not domain essence.

Judgment also means admitting that some aggregates are technical necessities. You might have a RestaurantSummary aggregate that exists solely for fast reads, with eventual consistency to the “real” Restaurant aggregate. That’s not impure, that’s CQRS at the aggregate level.

Concrete Strategies for the Real World

Here’s what actually works when you’re wrestling with aggregate boundaries:

- 1. Use Typed IDs for References: Instead of embedding branches, reference them with

BranchIdvalue objects. This makes the boundary explicit and prepares you for extraction.

public class Restaurant {

private RestaurantId id;

private String name;

private Set<BranchId> branchIds, // Not Branch entities

// ...

}- 2. Enforce Invariants Through Domain Services: For cross-branch rules, create a

BranchCreationServicethat checks constraints before publishing events. - 3. Implement Anti-Corruption Layers: When you do split aggregates, use ACLs to translate between models. The Order context doesn’t need the full Branch entity, just a

BranchReferencewith location and operating hours. - 4. Measure Aggregate Load Times: Instrument your repository implementations. When median load time exceeds 50ms for a simple operation, you have a boundary problem.

- 5. Start with the Write Model: Design aggregates for writes, then build read models for queries. Don’t let read concerns bloat your transactional boundaries.

The Takeaway

The restaurant-branch problem doesn’t have a single right answer because it’s not a technical problem, it’s a business priority question. If you’re building a system for franchise management where the 50-branch rule is legally binding and constantly enforced, maybe you accept the performance hit. If you’re building a high-volume ordering platform, you split those aggregates and handle the rule eventually.

The spicy truth is that most DDD advice assumes a level of performance headroom that doesn’t exist in competitive systems. The aggregate patterns from the blue book were written when a 10ms database round-trip was fast. Today, that’s a lifetime. Your aggregate boundaries need to reflect today’s performance realities, not 2003’s.

Stop asking “what’s the correct aggregate boundary?” Start asking “what can we afford to keep consistent, and what can we afford to load?” The answer will make your domain model less pure, but your system more useful. And at the end of the day, working software beats pure domain models every single time.