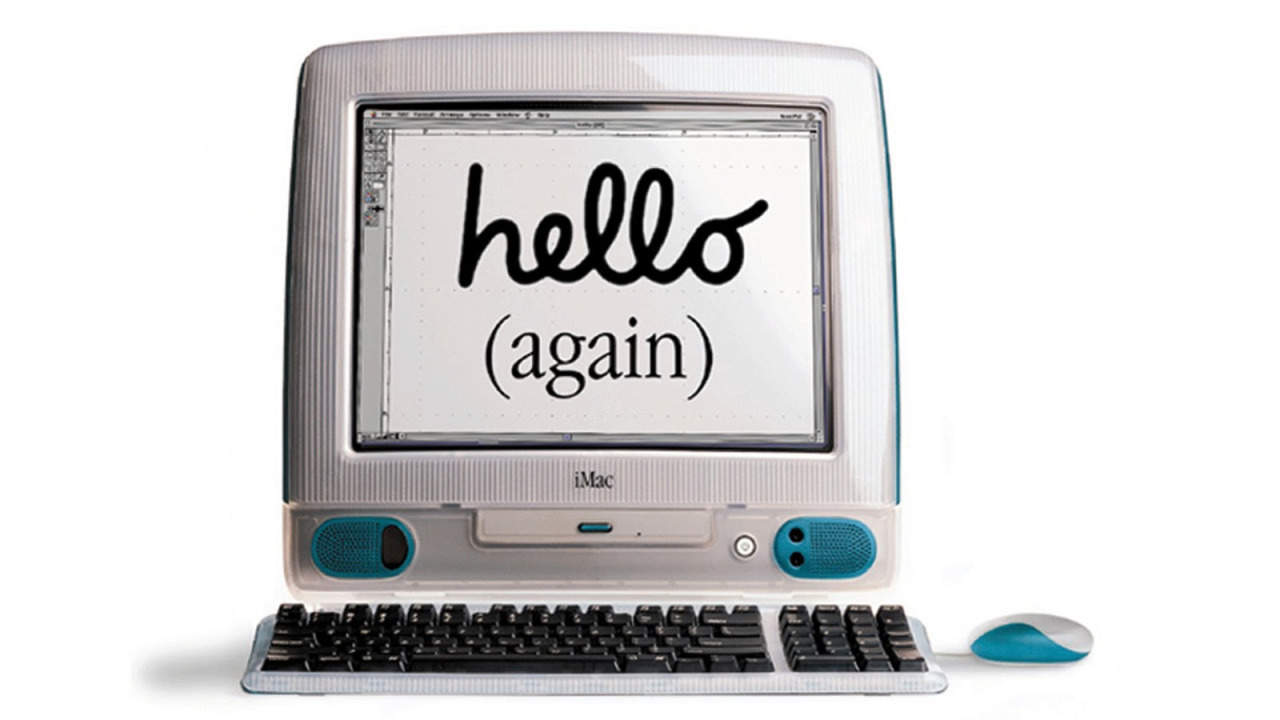

While the AI industry burns billions on rising AI hardware prices and memory supply constraints that would make a crypto miner blush, someone just ran a transformer neural network on a computer released when Bill Clinton was still in office. Not in a museum. Not emulated. Actually running inference on a stock 1998 iMac G3 with 32MB of RAM and a 233MHz PowerPC 750 processor under Mac OS 8.5.

This isn’t a stunt. It’s a surgical strike against the assumption that AI requires cloud-scale infrastructure.

The Hardware That Shouldn’t Work

Let’s be clear about what we’re dealing with. The iMac G3 Rev B, Bondi Blue, translucent, the computer that saved Apple, ships with specs that wouldn’t even boot a modern Electron app:

| Component | Spec | Modern Equivalent |
|---|---|---|
| CPU | 233 MHz PowerPC 750 | ~1/40th of a Raspberry Pi 4 |
| RAM | 32 MB | 500x less than a base MacBook Pro |
| Storage | 4 GB HDD | Smaller than a Windows update |
| OS | Mac OS 8.5 | No SSH, no terminal, no memory protection |

And yet, it generates coherent text. Not quickly, but correctly. Using Andrej Karpathy’s 260K TinyStories model, a Llama 2 architecture compressed to roughly 1MB, it reads a prompt from a text file, tokenizes it with BPE encoding, runs the full transformer forward pass (matrix multiplies, RoPE, attention, SwiGLU), and writes the continuation back to disk.

Thirty-two tokens generate in under a second. On hardware that predates USB 2.0.

The Cross-Compilation Pipeline From Hell

Getting modern C code to run on classic Mac OS requires Retro68, a GCC-based cross-compiler that targets PowerPC classic Mac OS and outputs PEF binaries. The build process alone takes 30-60 minutes on a modern machine and involves resurrecting toolchain components that haven’t seen active development since the Y2K panic.

But the real nightmare is endianness. The PowerPC 750 is big-endian. Every modern LLM checkpoint and tokenizer file is little-endian. That means every single 32-bit integer and float in the ~1MB model needs byte-swapping before the iMac can read it.

```python
# From endian_swap.py - converting little-endian to big-endian
# for every weight and parameter in the checkpoint
```

The model and tokenizer get endian-swapped via a Python script, transferred over FTP (because Mac OS 8.5 doesn’t have SSH or a terminal), and loaded into an operating system that thinks memory management is a suggestion rather than a guarantee.
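The article only shows the header comment of the conversion script, so the following is a minimal sketch of the idea rather than the project's actual code. It assumes the input consists entirely of 4-byte fields (int32 or float32); the function name `swap32` and the sample values are made up for illustration.

```python
import struct


def swap32(data: bytes) -> bytes:
    """Reverse the byte order of every 4-byte word in data."""
    if len(data) % 4 != 0:
        raise ValueError("expected a whole number of 32-bit words")
    out = bytearray(len(data))
    # Byte k of each word moves to position 3 - k.
    out[0::4] = data[3::4]
    out[1::4] = data[2::4]
    out[2::4] = data[1::4]
    out[3::4] = data[0::4]
    return bytes(out)


# Hypothetical sample: one int32 and one float32 as a little-endian machine writes them.
little = struct.pack("<if", 260, 1.0)
big = swap32(little)

# A big-endian reader now recovers the original values.
value, weight = struct.unpack(">if", big)
```

A blanket swap like this only suits files made up purely of 32-bit fields. Any file that mixes in raw byte strings would need field-aware handling so the string bytes are left alone.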

Memory Management War Crimes

Mac OS 8.5 allocates applications a fixed memory partition, defaulting to well under 1MB. The model weights alone consume ~1MB. This is where the project descends from “clever hack” to “systems engineering black magic.”

The developer had to bypass the standard C memory allocator entirely and interface directly with the Mac Memory Manager, using APIs that date back to System 7 and earlier:

- `MaxApplZone()`: Expands the application’s heap to its absolute maximum allowed size
- `NewPtr()`: Allocates directly from the Mac Memory Manager instead of using `malloc`, which would fail catastrophically on 32MB of physical RAM
- Static buffers: All inference state (the KV cache, attention matrices, activation buffers) uses compile-time static arrays instead of dynamic allocation
- Sequence length caps: The `max_seq_len` is hard-capped from 512 to 32, shrinking the KV cache by 16x
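The 16x figure follows directly from the KV cache scaling linearly with sequence length. The sketch below works through that arithmetic. The values `n_layers=5` and `dim=64`, and 4-byte floats, are assumptions chosen for illustration and are not stated in the article; only the head counts and the 512 to 32 cap come from the text.

```python
def kv_cache_bytes(n_layers, seq_len, dim, n_heads, n_kv_heads, bytes_per_value=4):
    """Size of a key cache plus a value cache for every layer."""
    head_size = dim // n_heads
    kv_dim = n_kv_heads * head_size
    # One key buffer and one value buffer per layer, each seq_len x kv_dim.
    return 2 * n_layers * seq_len * kv_dim * bytes_per_value


# n_layers and dim are assumed values, not taken from the article.
ASSUMED = dict(n_layers=5, dim=64, n_heads=8, n_kv_heads=4)

full = kv_cache_bytes(seq_len=512, **ASSUMED)
capped = kv_cache_bytes(seq_len=32, **ASSUMED)

print(full, capped, full // capped)
```

Under these assumptions the cache drops from 640KB to 40KB. The ratio stays at 16 whatever the layer count or width, because only `seq_len` changes.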

Users must manually open the Get Info panel and set the Preferred Memory Size to 3000KB just to give the app breathing room. This is legacy-system operation reduced to its most absurd and elegant extreme.

The Grouped-Query Attention Bug That Broke Everything

The hardest technical challenge wasn’t the cross-compilation or the memory constraints. It was a silent pointer arithmetic bug in the weight layout.

The 260K model uses Grouped-Query Attention (GQA) with `n_kv_heads=4` and `n_heads=8`. The original llama2.c `checkpoint_init_weights` function calculates offsets for the key and value weight matrices (`wk` and `wv`) assuming `n_kv_heads == n_heads`, a standard assumption that collapses when they differ.
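For readers unfamiliar with GQA, a short Python sketch of the head sharing it implies may help. The helper name `kv_head_for` is hypothetical; the head counts are the ones quoted above.

```python
def kv_head_for(query_head, n_heads, n_kv_heads):
    """Return the index of the key/value head that a query head reads from."""
    if n_heads % n_kv_heads != 0:
        raise ValueError("n_heads must be a multiple of n_kv_heads")
    group_size = n_heads // n_kv_heads  # query heads per shared KV head
    return query_head // group_size


# With 8 query heads and 4 KV heads, each KV head serves two query heads.
mapping = [kv_head_for(h, n_heads=8, n_kv_heads=4) for h in range(8)]
print(mapping)
```

Because only four key/value heads exist, the `wk` and `wv` projections produce half as many output columns as `wq`, which is exactly what the offset calculation has to account for.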

The original code:

```c
// Broken for GQA models
w->wk = ptr, ptr += p->n_layers * p->dim * p->dim;
w->wv = ptr, ptr += p->n_layers * p->dim * p->dim;
```

When `n_kv_heads` (4) doesn’t equal `n_heads` (8), those matrices are half the expected size. Every subsequent weight pointer (`wo`, `rms_ffn_weight`, `w1`, `w2`, `w3`, `rms_final_weight`, `freq_cis`) ends up pointing to garbage memory. The result? `freq_cis_real` values of -1.87 trillion instead of ~1.0, propagating NaN through the entire forward pass.

The fix requires calculating the actual matrix size using n_kv_heads * head_size:

```c
// Correct for GQA
// head_size is the per-head width; declared here (assumed as dim / n_heads)
// so the snippet stands alone.
int head_size = p->dim / p->n_heads;
w->wk = ptr, ptr += p->n_layers * p->dim * (p->n_kv_heads * head_size);
w->wv = ptr, ptr += p->n_layers * p->dim * (p->n_kv_heads * head_size);
```

This is the kind of bug that doesn’t exist in cloud environments with 80GB A100s and containerized debugging tools. It only surfaces when you’re optimizing model weight formats for hardware that considers 32MB a luxury.
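To make the pointer drift concrete, here is a small Python sketch that computes where each attention matrix starts under both size calculations. It is an illustration, not the project's code: `n_layers=5` and `dim=64` are assumed values, the function name `offsets` is made up, and offsets are counted in floats from the start of `wq`.

```python
def offsets(n_layers, dim, n_heads, n_kv_heads, gqa_aware):
    """Start offset (in floats, relative to wq) of each attention matrix."""
    head_size = dim // n_heads
    # The broken variant sizes wk and wv as if there were n_heads KV heads.
    kv_dim = n_kv_heads * head_size if gqa_aware else dim
    sizes = [
        ("wq", n_layers * dim * dim),
        ("wk", n_layers * dim * kv_dim),
        ("wv", n_layers * dim * kv_dim),
        ("wo", n_layers * dim * dim),
    ]
    result, cursor = {}, 0
    for name, size in sizes:
        result[name] = cursor
        cursor += size
    return result


# n_layers and dim are assumptions for illustration only.
cfg = dict(n_layers=5, dim=64, n_heads=8, n_kv_heads=4)
broken = offsets(gqa_aware=False, **cfg)
correct = offsets(gqa_aware=True, **cfg)

for name in correct:
    print(name, broken[name], correct[name], broken[name] - correct[name])
```

Under these assumptions `wk` starts in the same place either way, `wv` is already 10,240 floats off, and `wo` is 20,480 floats off. Everything after `wo` inherits that error, which matches the article's description of later pointers reading garbage.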

Debugging Without a Terminal

Mac OS 8.5 has no terminal emulator. No SSH. No printf to stdout. The Retro68 RetroConsole library crashes on the iMac G3 Rev B, so the developer resorted to 1980s-style debugging: writing diagnostic info to output.txt and checking it in SimpleText after each run.
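The pattern itself is simple enough to sketch. The Python below is only an illustration of file-based logging in general, not the project's C code; the helper name `log_line`, the temporary directory, and the sample messages are all assumptions.

```python
import os
import tempfile


def log_line(path, message):
    """Append one diagnostic line, closing the file on every call."""
    # Closing after each write means the text is on disk even if the
    # program crashes on the very next statement.
    with open(path, "a", encoding="ascii", errors="replace") as f:
        f.write(message + "\n")


# A fresh temporary directory stands in for the application's folder.
log_path = os.path.join(tempfile.mkdtemp(), "output.txt")

log_line(log_path, "loaded checkpoint")
log_line(log_path, "freq_cis_real[0] = %g" % 1.0)

with open(log_path) as f:
    lines = f.read().splitlines()
```

Reopening the file for each message is slow, but when the only way to read the log is to open it in a text editor after the run, losing the last few lines to a crash costs far more than the extra file operations.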

Imagine debugging transformer inference by opening a text file in a GUI text editor. Every. Single. Time. It’s a workflow that makes LLM fine-tuning frameworks look like they’re running on futuristic alien technology, which, relatively speaking, they are.

Why This “Useless” Project Matters

The immediate reaction from the developer community was predictably split between “this is sick” and “but what’s the use case?” The output is short. The setup is fragile. You could get better results, at far better speeds, running local inference on literally any modern desktop machine.

But that’s missing the point entirely.

This project demonstrates that the current AI hardware arms race is partially a software bloat problem. If a 260K parameter model can generate coherent stories on a 233MHz processor with 32MB RAM, what could we do with modern hardware if we optimized with this level of desperation?

It also represents a critical counterpoint to the narrative that AI requires massive centralized infrastructure. While companies hoard GPUs and open-source AI on modest hardware becomes increasingly vital for accessibility, this iMac G3 stands as proof that intelligence, artificial or otherwise, doesn’t inherently require a data center.

The feat joins a lineage of “impossible” ports: Doom on pregnancy tests, Linux on toasters, and now LLMs on Bondi Blue. It’s a reminder that constraints breed creativity, and that sometimes the most instructive engineering happens when you have 32MB of RAM and no choice but to make it work.

The green goblin had a big mop. She had a cow in the field too. I

Thirty-two tokens. Under a second. On a computer old enough to vote.