For years, the AI community has operated under a simple, brutal assumption: fine-tuning modern language models requires enterprise-grade hardware. If you wanted to fine-tune Google’s latest models, you needed NVIDIA A100s or at least a beefy RTX 4090 with 24GB of VRAM. The idea of fine-tuning a multibillion-parameter multimodal model on an 8GB laptop GPU wasn’t just optimistic; it seemed technically impossible.

Until this week.

Unsloth just dropped a set of optimizations that cut Gemma 4’s VRAM requirements by roughly 60%, enabling the E2B variant to train on 8GB of VRAM. But the real story isn’t just the memory savings; it’s the four critical bugs they had to squash to make it work, including a particularly nasty issue where standard QLoRA tutorials were actively corrupting model weights.

The Architecture of a Disaster

Gemma 4 arrived with a sophisticated multimodal architecture supporting vision, text, and audio across four variants: the lightweight E2B (2B active parameters), the balanced E4B (4B active), the MoE-based 26B-A4B, and the dense 31B flagship. On paper, the smaller variants looked perfect for consumer hardware. In practice, they were broken out of the box.

The most insidious issue involved use_cache=False, a setting found in virtually every QLoRA fine-tuning tutorial on the internet. When training most models, disabling the KV cache during the forward pass saves memory during gradient computation. Standard practice. Harmless, even.

For Gemma 4, it was a silent killer.

Gemma 4’s E2B and E4B variants share KV state across layers: 20 layers for E2B, 18 for E4B. The cache isn’t just an optimization; it’s the only mechanism that lets earlier layers pass their KV tensors to later layers. When use_cache=False is set (or forced by gradient_checkpointing=True in standard transformers), the model skips cache construction entirely. The shared layers then fall back to recomputing K and V from their current hidden states, producing garbage logits with a maximum absolute difference of 48.9 compared to cached inference.

Training loss would explode to 300-400 (far worse than random guessing over a 262K-token vocabulary) instead of the expected 10-15. Worse, model.generate() worked perfectly during evaluation because it internally forces use_cache=True, creating a maddening debugging experience where inference looked fine while training was completely broken.
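For scale, a model that spreads its probability uniformly over every token scores a cross-entropy of ln(V) nats per token. A quick check, assuming the 262K figure means Gemma’s 262,144-token (2^18) vocabulary:

```python
import math

def uniform_cross_entropy(vocab_size: int) -> float:
    """Cross-entropy (nats) of a uniform prediction over vocab_size tokens."""
    # Each token gets probability 1/V, so the loss is -log(1/V) = log(V).
    return math.log(vocab_size)

VOCAB_SIZE = 2**18  # assumption: "262K" read as 262,144 tokens

baseline = uniform_cross_entropy(VOCAB_SIZE)
print(f"uniform-guess loss: {baseline:.2f} nats")  # prints: uniform-guess loss: 12.48 nats
```

A loss of 300-400 is therefore roughly 24 to 32 times worse than uniform guessing, which points to broken logits rather than a model that simply hasn’t learned yet.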

Four Bugs and a Funeral (for Your Cloud Bill)

Unsloth’s latest release doesn’t just optimize memory; it performs surgery on Gemma 4’s training pipeline. Here are the landmines they defused:

1. Gradient Accumulation Arithmetic

Standard gradient accumulation implementations caused losses to explode to 300-400 on Gemma 4. The issue stemmed from improper accumulation accounting during backpropagation. Unsloth fixed the gradient accumulation logic to maintain proper loss scaling, bringing training losses back down to the expected 10-15 range for multimodal models.
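The exact arithmetic mistake isn’t spelled out, so the sketch below only illustrates the invariant any correct implementation has to preserve: the loss accumulated over several micro-batches should equal the loss of one big batch. The failure shown here (averaging per-micro-batch means instead of dividing summed token losses by the total token count) is a well-known way to break that invariant, used as an illustrative assumption and not necessarily Gemma 4’s specific bug. The per-token losses are made up.

```python
from typing import List

def full_batch_loss(micro_batches: List[List[float]]) -> float:
    """Reference: mean per-token loss over every token in the window."""
    tokens = [loss for batch in micro_batches for loss in batch]
    return sum(tokens) / len(tokens)

def naive_accumulated_loss(micro_batches: List[List[float]]) -> float:
    """Averages per-micro-batch means; wrong whenever token counts differ."""
    means = [sum(batch) / len(batch) for batch in micro_batches]
    return sum(means) / len(means)

def token_weighted_accumulated_loss(micro_batches: List[List[float]]) -> float:
    """Accumulates step by step, dividing by the window's total token count."""
    total_tokens = sum(len(batch) for batch in micro_batches)
    accumulated = 0.0
    for batch in micro_batches:
        # Each step adds sum(batch) / total_tokens, so the steps add up to the
        # full-batch mean no matter how tokens are split across micro-batches.
        accumulated += sum(batch) / total_tokens
    return accumulated

# Two micro-batches of very different lengths (hypothetical per-token losses).
batches = [[2.0, 2.0, 2.0, 2.0, 2.0, 2.0], [8.0, 8.0]]
print(full_batch_loss(batches))                  # 3.5
print(naive_accumulated_loss(batches))           # 5.0
print(token_weighted_accumulated_loss(batches))  # 3.5
```

Dividing by the total token count once per window, rather than per micro-batch, is what keeps the accumulated loss equal to the full-batch loss.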

2. The KV Sharing Catastrophe

The use_cache=False bug required restructuring how Gemma 4 handles KV tensors when caching is disabled. Unsloth’s fix ensures that even with use_cache=False, the shared KV state across layers maintains bit-exact parity with cached inference (diff of 0.000000). This means you can use gradient checkpointing without corrupting your model’s attention mechanism.
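A toy sketch of the failure mode and the shape of the fix. Scalar “layers” stand in for real attention, and every name here is hypothetical (nothing comes from transformers or Unsloth): the last two layers are meant to reuse the key/value pair of the last non-shared layer, but the unpatched path only keeps that pair around when caching is on.

```python
from typing import Optional, Tuple

# Hypothetical toy model: scalar "layers" stand in for real attention layers.
WEIGHTS = [0.5, 1.5, 2.0, -3.0, 7.0]  # per-layer K/V projection weights
NUM_SHARED = 2                        # the last two layers should reuse K/V

def project_kv(layer: int, hidden: float) -> Tuple[float, float]:
    """Toy K/V projection using the layer's own weight."""
    w = WEIGHTS[layer]
    return w * hidden, w * hidden + 1.0

def forward(hidden: float, use_cache: bool, patched: bool) -> float:
    """Run the toy stack and return the final hidden state as a stand-in logit."""
    donor = len(WEIGHTS) - NUM_SHARED - 1  # last layer that computes its own K/V
    # Unpatched behaviour: K/V is only kept around when caching is enabled.
    keep_shared_kv = use_cache or patched
    shared_kv: Optional[Tuple[float, float]] = None
    for layer in range(len(WEIGHTS)):
        if layer > donor and shared_kv is not None:
            k, v = shared_kv  # intended path: reuse the donor layer's K/V
        else:
            # Shared layers fall through to here when nothing was kept,
            # recomputing K/V from their own hidden state and weights.
            k, v = project_kv(layer, hidden)
        if layer == donor and keep_shared_kv:
            shared_kv = (k, v)
        hidden = hidden + 0.1 * k * v  # toy residual update
    return hidden

cached = forward(1.0, use_cache=True, patched=False)
broken = forward(1.0, use_cache=False, patched=False)
fixed = forward(1.0, use_cache=False, patched=True)
print(abs(broken - cached))  # large: shared layers used the wrong K/V
print(abs(fixed - cached))   # 0.0: shared layers see the same K/V either way
```

The design point is that the shared K/V must be threaded through the forward pass unconditionally; whether it is also exposed as a reusable cache is a separate decision.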

3. The Zero-Layer IndexError

Gemma 4’s 26B and 31B variants ship with num_kv_shared_layers = 0. In Python, -0 == 0, which means layer_types[:-0] collapses to layer_types[:0], returning an empty list. The cache gets built with zero layer slots, causing an immediate IndexError: list index out of range on the first attention forward pass. Unsloth patched the cache initialization to handle the zero-case correctly.
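The slicing pitfall is easy to reproduce in plain Python. A minimal sketch of one safe alternative, slicing with an explicit end index; the helper name and the layer-type strings are illustrative, not Unsloth’s actual patch or a real Gemma 4 config:

```python
from typing import List

def non_shared_layer_types(layer_types: List[str], num_kv_shared_layers: int) -> List[str]:
    """Drop the last num_kv_shared_layers entries; 0 means drop nothing."""
    if not 0 <= num_kv_shared_layers <= len(layer_types):
        raise ValueError("num_kv_shared_layers out of range")
    # layer_types[:-n] fails for n == 0 because -0 == 0 and [:0] is empty,
    # so slice with an explicit end index instead.
    return layer_types[: len(layer_types) - num_kv_shared_layers]

# Illustrative layer types, not a real Gemma 4 config.
layer_types = ["sliding_attention", "sliding_attention", "full_attention", "sliding_attention"]

print(layer_types[:-0])                        # [] (the buggy pattern)
print(non_shared_layer_types(layer_types, 0))  # all four entries
print(non_shared_layer_types(layer_types, 1))  # the first three entries
```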

4. Float16 Audio Overflow

Gemma4AudioAttention uses config.attention_invalid_logits_value = -1e9 for masked fill operations. On FP16 hardware (like Tesla T4s), -1e9 falls far outside the FP16 range, whose largest finite magnitude is 65504, so the fill throws RuntimeError: value cannot be converted to type c10::Half without overflow. Unsloth clamped these values to prevent overflow while preserving the masking semantics.
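A minimal sketch of the clamping idea, using Python’s struct module (format code "e" is IEEE half precision) to test whether a value fits in FP16. The helper names are hypothetical, and this is not Unsloth’s actual code:

```python
import struct

FP16_MAX = 65504.0  # largest finite float16 magnitude

def fits_in_fp16(value: float) -> bool:
    """True if value can be packed as an IEEE half-precision float."""
    try:
        struct.pack("<e", value)  # "e" is the half-precision format code
        return True
    except OverflowError:
        return False

def safe_mask_value(value: float, fp16_max: float = FP16_MAX) -> float:
    """Clamp a large negative masking constant into the float16 range."""
    # -65504 is still low enough that masked positions get ~0 softmax weight.
    return max(value, -fp16_max)

invalid_logits_value = -1e9  # the config value quoted above
print(fits_in_fp16(invalid_logits_value))                   # False
print(fits_in_fp16(safe_mask_value(invalid_logits_value)))  # True
```

In PyTorch, the same bound is available as torch.finfo(torch.float16).min, which avoids hard-coding 65504.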

The VRAM Reality Check

With bugs fixed, here’s what actually fits on consumer hardware:

| Model | Configuration | Minimum VRAM | Notes |
|---|---|---|---|
| Gemma-4-E2B | LoRA | 8GB | Full multimodal fine-tuning (vision/text/audio) |
| Gemma-4-E4B | LoRA | 17GB | Recommended over E2B for quality |
| Gemma-4-31B | QLoRA | 22GB | Dense model, fits on RTX 3090/4090 |
| Gemma-4-26B-A4B | LoRA | >40GB | MoE variant, QLoRA support coming |
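A back-of-the-envelope check on the 31B row: weight memory is roughly parameters × bits per parameter ÷ 8 bytes. The sketch assumes the nominal 31B parameter count and ignores everything except the weights themselves:

```python
def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Memory needed just to hold the weights, in GiB."""
    return num_params * bits_per_param / 8 / 2**30

# Rough figures only: real quantized checkpoints add scales and keep some
# layers in higher precision, and training adds activations, LoRA adapters,
# and optimizer state on top.
for name, params, bits in [
    ("31B dense, 4-bit (QLoRA)", 31e9, 4),
    ("31B dense, 16-bit", 31e9, 16),
]:
    print(f"{name}: {weight_memory_gib(params, bits):.1f} GiB")
# 31B dense, 4-bit (QLoRA): 14.4 GiB
# 31B dense, 16-bit: 57.7 GiB
```

About 14.4 GiB of 4-bit weights leaves headroom within the table’s 22GB for activations and adapters, while the same weights in 16-bit would be far beyond a 24GB RTX 3090 or 4090.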

The E2B result is particularly significant: a multimodal model that handles vision, text, and audio can now be fine-tuned on hardware like an RTX 3070 or even a laptop RTX 4070. It builds on Unsloth’s earlier Triton kernels for lowering VRAM use, continuing their campaign to end the VRAM arms race.

For the 26B-A4B MoE variant, you’ll still need serious hardware (>40GB), though Unsloth’s earlier VRAM reductions for MoE models suggest this threshold may drop soon. The dense 31B variant is surprisingly accessible at 22GB, fitting on 24GB consumer cards like the RTX 3090.

Why Standard Tutorials Were Breaking Your Models

The use_cache=False issue reflects a fundamental mismatch between Gemma 4’s hybrid attention architecture and standard training practices. Gemma 4 combines sliding-window attention (SWA) layers with full-attention layers that share a global cache. When the cache is disabled, the coordination between SWA and global layers breaks down: SWA layers that should see only a 1024-token window can’t correctly bound their attention without the cache metadata that tracks token positions.
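For reference, bounding attention to a window means query position i may only see key positions j with i - window < j ≤ i. A minimal sketch of that mask, using toy sizes (6 tokens, window of 3) rather than Gemma 4’s 1024; whether a given implementation derives these bounds from the mask alone or from cache metadata is a detail this sketch does not model:

```python
from typing import List

def sliding_window_causal_mask(seq_len: int, window: int) -> List[List[bool]]:
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        # Causal (j <= i) and within the window (i - j < window).
        [j <= i and i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]

mask = sliding_window_causal_mask(seq_len=6, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# x.....
# xx....
# xxx...
# .xxx..
# ..xxx.
# ...xxx
```

A full-attention layer is the same mask with the window set to the sequence length, which is why both layer types can share one causal structure while differing only in how far back they look.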

This explains why earlier attempts to fine-tune on 8GB laptops were so frustrating: even when you could squeeze a model into memory, architectural bugs would silently destroy training quality.

The workaround before Unsloth’s fix was to avoid use_cache=False entirely, which in standard transformers also meant giving up gradient checkpointing and its memory savings; it worked, but it limited training context lengths. Unsloth’s patch removes this constraint entirely.

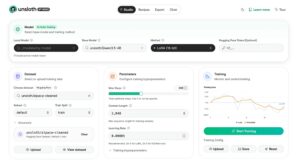

Running It Yourself

Unsloth provides free Colab notebooks for immediate testing.

For local training with the Unsloth Studio UI:

```bash
# Install
curl -fsSL https://unsloth.ai/install.sh | sh

# Launch
unsloth studio -H 0.0.0.0 -p 8888
```

Then navigate to http://localhost:8888 and select your Gemma 4 variant.

When configuring training, note that multimodal models (E2B/E4B) naturally run a higher loss (13-15) than text-only variants (1-3). If you see losses of 100-300, you’re likely hitting the gradient accumulation bug present in standard transformers; switch to Unsloth’s implementation.

For vision fine-tuning, Unsloth supports selective layer training:

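```python
from unsloth import FastVisionModel

# Load the base model first. The repo id below is an assumption; check
# Unsloth's Gemma 4 notebooks for the exact checkpoint name.
model, tokenizer = FastVisionModel.from_pretrained(
    model_name="unsloth/gemma-4-E2B-it",
    load_in_4bit=False,  # plain LoRA, matching the E2B row in the table above
)

model = FastVisionModel.get_peft_model(
    model,
    finetune_vision_layers=False,     # start with text-only adapters
    finetune_language_layers=True,    # LoRA on the language model
    finetune_attention_modules=True,  # attention projections
    finetune_mlp_modules=True,        # MLP projections
    r=32,                             # LoRA rank
    lora_alpha=32,                    # LoRA scaling factor
)
```

The Democratization Angle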

This isn’t just about saving money on cloud compute. It’s about removing the hardware barrier to AI research. When techniques for running high-performance models efficiently on edge devices reach hobbyists with gaming laptops, the innovation surface area explodes.

Developers can now fine-tune vision-language models on local hardware without shipping data to third-party APIs. For privacy-sensitive applications, the economic case for local deployment over cloud APIs just got much stronger: why pay per token when you can own the weights?

For those on Apple Silicon or other constrained environments, this joins parallel success stories of running AI on limited hardware, all pointing to a future of AI that isn’t just cloud-scale clusters but distributed, local, and private.

The Bottom Line

Unsloth didn’t just optimize Gemma 4; they fixed fundamental architectural bugs that were rendering the model unusable for standard training workflows. The 8GB VRAM threshold for fine-tuning a multimodal 2B model isn’t a hack or a degraded experience; it’s full LoRA training with vision and audio support.

If you’ve got an 8GB GPU sitting in your desktop right now, you can fine-tune Google’s latest multimodal architecture this afternoon. The only prerequisite is abandoning broken standard implementations for Unsloth’s patched stack.

The VRAM arms race isn’t over yet, but for once, the hobbyists are winning.