I’ve been a freelance content writer for six years. For the past two, I’ve spent roughly four hours a day with an AI writing assistant open in another tab, generating marketing copy, strategy decks, and social media ads. Objectively, my output has never been higher. My clients are happier. My bank account is healthier.

And I can no longer think clearly.

This isn’t dramatic flair. It’s the empirical reality I share with a growing cohort of knowledge workers who’ve discovered that cognitive offloading comes with compound interest, and the bill is due in the form of degraded reasoning, creative atrophy, and what researchers are now calling “cognitive debt.”

The Productivity Trap

When I started using AI writing tools in 2024, the benefits were immediate and intoxicating. First drafts that used to take three hours now took thirty minutes. Research that required diving into five sources now required asking a chatbot for a summary. A/B testing became trivial because I could generate twenty variants instead of three.

But somewhere between the faster turnaround times and the higher volume, I lost the friction that made writing valuable in the first place.

Here’s the loop that broke my brain:

- I describe the idea in broad strokes.

- The model generates the structure and argumentation.

- I accept, refine, and polish.

The output is objectively “better” than my rough early drafts used to be. It’s more coherent, grammatically pristine, and structurally sound. But I’m no longer constructing thoughts from scratch; I’m curating them. I’m reacting to a predetermined narrative instead of discovering what I actually believe through the struggle of articulation.

As one researcher noted in a recent study on AI-assisted essay writing, this workflow creates an “accumulation of cognitive debt.” The brain, like a muscle, operates on a use-it-or-lose-it basis. When we outsource the conceptual heavy lifting to LLMs, we don’t just save time; we skip the neural workout that maintains our critical thinking capacity.

The Science of Cognitive Surrender

The empirical evidence for this degradation is stacking up faster than most AI enthusiasts want to admit. A 2025 study in Societies found direct correlations between heavy AI tool usage and declines in deep thinking and critical analysis. fMRI research posted as a preprint on bioRxiv showed lower engagement of cognitive control, attention, and modulation networks in children using ChatGPT compared to those thinking independently, along with measurable drops in creativity.

But the most damning research comes from Shaw and Nave’s work on what they term “cognitive surrender.” Their experiments revealed that AI systems don’t just save effort; they actively lower our threshold for scrutiny. The fluent, confident outputs of LLMs are treated as epistemically authoritative, attenuating the metacognitive signals that would ordinarily route a response to deliberation.

In other words, AI doesn’t just write for you; it decides for you, gradually wearing down your capacity to discriminate between solid reasoning and confident-sounding bullshit.

This manifests in subtle ways. I’ve noticed my own syntax becoming sloppier in human conversations, trusting that context will carry meaning the way it does with AI. I no longer fix small punctuation errors when texting because I’ve grown accustomed to the machine handling it. The cognitive shortcuts that make AI interaction efficient are bleeding into the rest of my communication. Automated tools can mask underlying complexity in ways we don’t immediately perceive.

The Friction Was the Feature

John Gallagher, writing about the “managerial model” of AI writing, distinguishes between two modes of creation: the manager who evaluates and approves text, and the maker who crafts it. Managers love AI writing because it produces fluent, institutional prose that checks boxes. For them, writing is documentation: a performative artifact that needs to exist more than it needs to say something.

But for makers (writers, researchers, engineers), the process of writing is the process of thinking. The friction of wrestling with language forces conceptual clarity. You don’t know what you believe until you try to write it down and realize your argument has holes big enough to drive a truck through.

AI removes that friction. It turns writing into a vending machine transaction: drop in a prompt, collect a paragraph. One product manager summed up the result: “The friction IS the feature. Remove it and you ship faster but think shallower.”

This dynamic isn’t limited to writing. Software engineers are experiencing the same identity crisis. As Ivan Turkovic documented in a viral essay, AI has made writing code easier but being an engineer harder. When agents scaffold entire features, engineers shift from builders to reviewers: judges on an assembly line that never stops.

A Harvard Business Review study from February 2026 found that 83% of developers reported increased workloads despite AI tooling, with 62% experiencing burnout. The cognitive load shifted from creation to supervision, and supervision without shared context is exhausting.

The Groupthink Acceleration

The cognitive degradation isn’t just individual; it’s collective. In research communities, AI writing is creating what one academic calls “groupthink towards LLM views.” When everyone starts with the same ten ideas that ChatGPT generated, intellectual diversity collapses.

I witnessed this firsthand at a grant proposal writing day for an AI Safety project. The lead author opened with: “These are the ten ideas ChatGPT came up with, so let’s start from there.” Human brainstorming was cancelled. The ideas were six years out of date, American-centric for an EU project, and already explored by other teams. But because the AI had spoken, we treated them as authoritative starting points.

This is the “cognitive crutch” effect in action. Research from 2025 found that AI-assisted learners spent significantly less time on learning tasks (3.2 hours vs 5.8 hours for traditional learners) but showed only marginal gains, suggesting that when we offload the struggle, we offload the learning.

The danger compounds when we consider that the output of current models still requires manual verification for accuracy. AI systems confidently hallucinate facts, which then get published, which then become training data for the next generation of models. We’re already seeing degradation in fact-finding behaviors: researchers asking AI for sources that earlier AIs hallucinated, creating a feedback loop of confident error.

Reclaiming the Cognitive Load

So what’s the alternative? Abstinence? Luddism?

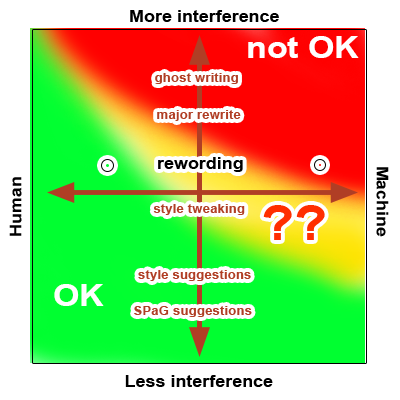

Not necessarily. The writers and engineers who are navigating this successfully aren’t abandoning AI; they’re walling off the parts of their workflow that require genuine cognition. They’re using AI for spell-checking, formatting, and data crunching, but protecting the “frictionful” work of ideation, structuring arguments, and first-draft thinking.

Some are adopting explicit “100% human” protocols for certain phases of work.

The key is recognizing that not all productivity gains are equal. Speeding up the transcription of thought is fine. Speeding up the formation of thought is catastrophic.

As one researcher on the EA Forum noted, the solution isn’t to ban AI from research writing; it’s to change how we govern its use.

Force yourself to state premises and definitions first. Ask adversarial questions. Use AI as a constraint engine and critic rather than a ghostwriter. Same tool, different governance.

The Economic Imperative

There’s a darker undercurrent here, one that becomes unavoidable once you connect individual cognitive impacts to broader workforce changes. As AI makes certain tasks faster, organizational expectations simply rise to consume the surplus. The baseline moves, and nobody sends a memo. Entry-level hiring has fallen 25% at major tech firms because junior work, the very training ground that builds expertise, is now handled by AI.

We’re optimizing for output volume at the exact moment when we need conceptual depth. The writers who can still think independently, who haven’t outsourced their reasoning to statistical models, are becoming more valuable precisely because they’re becoming rarer.

The uncomfortable truth is that AI writing tools aren’t just changing how we work; they’re changing who we are. And if we don’t start treating the preservation of human critical thinking as a technical requirement rather than a nostalgic preference, we’ll wake up in a world where the machines don’t need to replace us because we’ve already replaced ourselves.

The friction was never a bug. It was the feature that kept us sharp.