When Block announced it was cutting 4,000 employees, nearly half its workforce, to “embrace AI”, the tech world barely flinched. We’ve become numb to these announcements, haven’t we? Another day, another tech giant discovering that algorithms are cheaper than humans. But here’s what should actually keep you up at night: this isn’t just a hiring freeze or a cost-cutting exercise. It’s a fundamental architectural bet that will reshape how systems are built, maintained, and fail at scale.

The real story isn’t the layoffs themselves. It’s the silent architectural revolution happening behind the scenes: the shift from human-in-the-loop systems designed for oversight and intervention to fully autonomous architectures that treat human judgment as a bug, not a feature.

The Architectural Paradigm Shift No One’s Talking About

Traditional enterprise architecture has always assumed humans are part of the system. Customer support escalations route to supervisors. Fraud detection flags suspicious transactions for analyst review. Content moderation queues await human eyes. These architectures bake in human decision points as circuit breakers, quality gates, and exception handlers.

But when you eliminate 50% of your workforce, you’re not just reducing headcount; you’re removing entire layers of your system’s resilience architecture. Those “redundant” humans were actually serving critical functions: they were the fallback when models hallucinated, the sanity check when automation went rogue, and the institutional memory that prevented repeated mistakes.

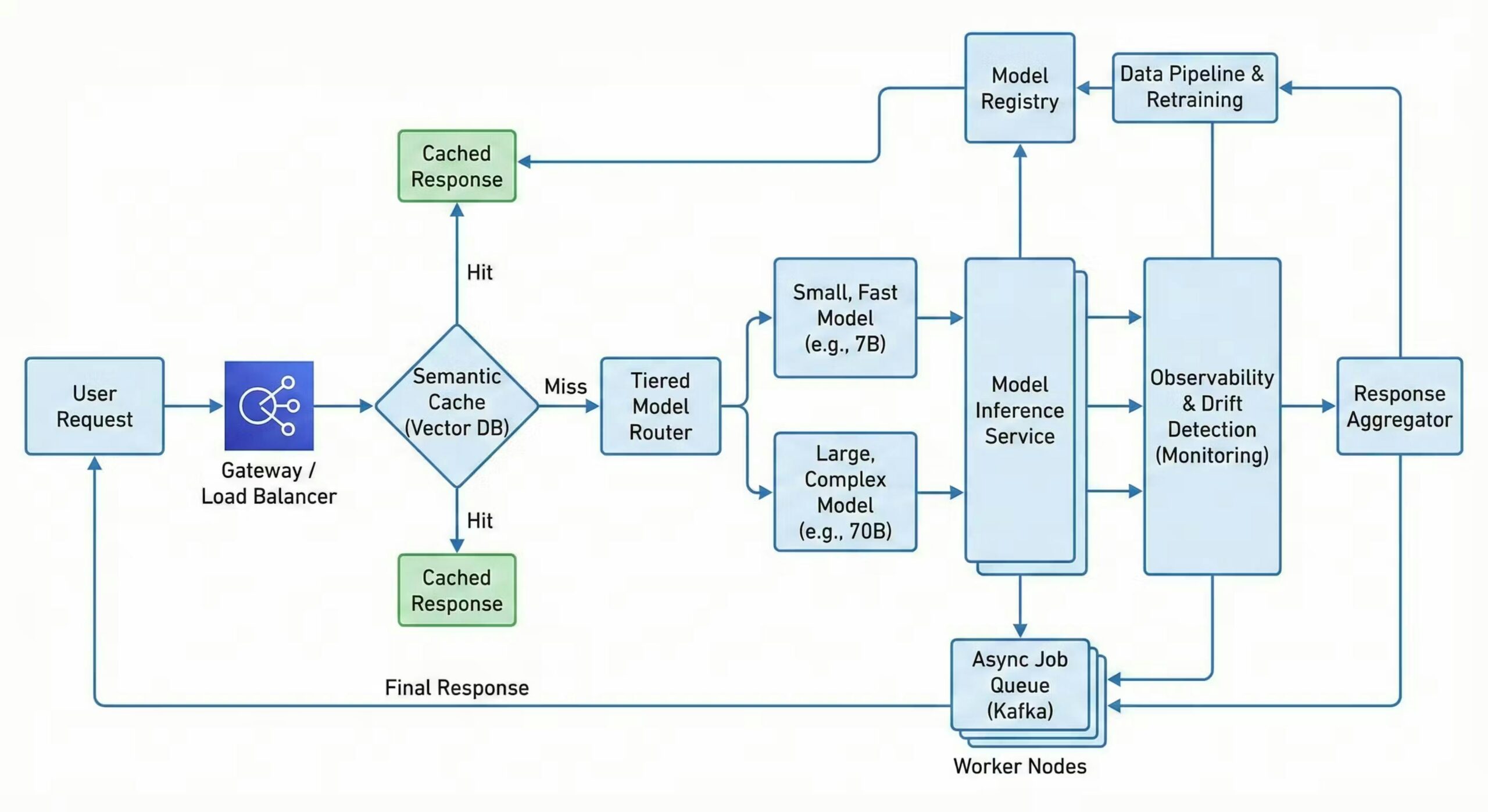

This is where the research gets uncomfortable. According to Fulcrum Digital’s analysis of AI system architecture, modern deployments require “distributed AI systems, MLOps architecture, and event-driven models” to function at scale. What they don’t mention is that these architectures implicitly assume infinite human oversight capacity, which Block just torched.

The math doesn’t add up. You can’t simultaneously remove your human safety net and deploy more complex autonomous systems without introducing catastrophic failure modes. Yet that’s exactly what companies are doing.

The Real Production Systems Reality Check

A recent deep dive into scaling AI in production reveals the ugly truth: AI systems fail differently and silently. Unlike traditional services that throw 500 errors when overloaded, AI models degrade through silent hallucinations, concept drift, and confidence collapse.

The research from HackerNoon shows a stark comparison:

| Metric | Traditional Web Service | AI/ML Inference System |
|---|---|---|
| Compute Bottleneck | CPU / Network I/O | GPU VRAM / Memory Bandwidth |
| Latency | Predictable (<50 ms) | Highly variable (>1000 ms) |
| Scaling Mechanism | Auto-scale stateless pods | Dynamic batching, model quantization |
| Failure Mode | 500 Server Error | Silent degradation (hallucinations) |

When Block (or any company) cuts 4,000 employees, they’re eliminating the human sensors that detect these silent failures. Your model starts drifting? The P99 latency spikes? The semantic cache returns nonsense? Nobody’s watching anymore.

The “Leaner Organization” Fallacy

Bending Spoons, the company acquiring AOL, justified their layoffs by claiming “reducing organizational complexity leads to faster and more efficient product development.” This is the same corporate-speak we’ve heard since the 1990s, just with an AI gloss.

But here’s what real-world examples of AI-driven workforce reductions in major corporations actually show: Amazon’s CEO predicts corporate workforce shrinkage from AI. Salesforce cut 4,000 customer support roles because they “need less heads.” The pattern is clear: companies aren’t augmenting workers; they’re replacing entire organizational layers.

The architectural problem? Those layers served purposes beyond just “doing work.” They were:

- Error correction networks: Junior employees catch senior mistakes, senior employees mentor juniors, cross-functional teams catch siloed thinking

- Institutional memory: Employees remember why certain architectural decisions were made, preventing costly relearning

- Resilience buffers: When automation fails, humans step in. When humans are gone, failure cascades

The Scalability Equation That Breaks Everything

Modern AI engineering involves balancing a brutal equation:

Accuracy ↑ = Cost ↑ = Latency ↑

To maintain service quality while cutting staff, companies must choose: accept lower accuracy, pay more for inference, or tolerate slower responses. Most choose aggressive quantization and caching, which introduces new failure modes.

The HackerNoon research describes tiered routing architectures where 60-80% of requests get handled by cheap, fast models. But what happens when your “Tier 1” model confidently returns wrong answers? With half your workforce gone, who’s validating the semantic cache? Who’s monitoring for prediction drift?
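To make the tiered-routing idea concrete, here is a minimal sketch rather than the architecture the research describes. The `Tier` type, the stand-in models, and the confidence floors are all hypothetical, and it assumes each model reports a confidence score alongside its answer.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Tier:
    name: str
    model: Callable[[str], Tuple[str, float]]  # returns (answer, confidence in [0, 1])
    min_confidence: float  # hypothetical floor below which we escalate


def route(request: str, tiers: List[Tier]) -> Tuple[str, str, float]:
    """Try tiers cheapest-first; escalate when confidence is below the tier's floor."""
    if not tiers:
        raise ValueError("at least one tier is required")
    for tier in tiers[:-1]:
        answer, confidence = tier.model(request)
        if confidence >= tier.min_confidence:
            return tier.name, answer, confidence
    # The last tier always answers; there is nowhere left to escalate.
    last = tiers[-1]
    answer, confidence = last.model(request)
    return last.name, answer, confidence


# Hypothetical stand-ins for a cheap model and an expensive one.
def small_model(request: str) -> Tuple[str, float]:
    confidence = 0.95 if len(request.split()) <= 5 else 0.40
    return f"small:{request}", confidence


def large_model(request: str) -> Tuple[str, float]:
    return f"large:{request}", 0.90


TIERS = [Tier("tier1", small_model, 0.8), Tier("tier2", large_model, 0.0)]
```

Note what this gate cannot do: a Tier 1 model that is confidently wrong sails straight through. Self-reported confidence only catches uncertainty the model knows about, which is why someone still has to sample and validate the answers.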

This is where the risks of premature ‘AI-first’ strategies leading to technical and organizational failure become catastrophic. The “AI-first” mandate often translates to “ship it and forget it” because there aren’t enough humans left for proper MLOps.

The Observability Crisis No One Prepared For

Traditional monitoring (CPU, memory, network I/O) is useless for AI systems. You need to track:

- Data quality & feature skew: Is live input diverging from training data?

- Prediction drift: Has model output distribution shifted significantly?

- Confidence thresholds: Is average model confidence dropping?

The research shows companies should calculate the Kullback-Leibler divergence between the training (Q) and production (P) distributions:

$$D_{KL}(P \parallel Q) = \sum_{x} P(x) \log \frac{P(x)}{Q(x)}$$
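As a rough illustration of that drift check, the sketch below estimates the KL divergence of production outputs (P) from training outputs (Q) over discrete prediction labels. The label data, the smoothing constant, and `DRIFT_THRESHOLD` are made-up assumptions; a real deployment would tune them against its own history.

```python
import math
from collections import Counter
from typing import Dict, Sequence


def distribution(samples: Sequence[str], categories: Sequence[str],
                 smoothing: float = 1e-6) -> Dict[str, float]:
    """Empirical distribution over categories, smoothed so no bin is exactly zero."""
    counts = Counter(samples)
    total = len(samples) + smoothing * len(categories)
    return {c: (counts[c] + smoothing) / total for c in categories}


def kl_divergence(p: Dict[str, float], q: Dict[str, float]) -> float:
    """D_KL(P || Q) = sum over x of P(x) * log(P(x) / Q(x)), in nats."""
    return sum(p[c] * math.log(p[c] / q[c]) for c in p)


# Made-up example: the model approved 80% of cases in training, 50% in production.
training_labels = ["approve"] * 80 + ["deny"] * 20
production_labels = ["approve"] * 50 + ["deny"] * 50
categories = sorted(set(training_labels) | set(production_labels))

q = distribution(training_labels, categories)    # Q: training
p = distribution(production_labels, categories)  # P: production

DRIFT_THRESHOLD = 0.1  # hypothetical alerting threshold
drift = kl_divergence(p, q)
drifted = drift > DRIFT_THRESHOLD
```

The smoothing term matters: without it, a label that never appeared in training makes the divergence infinite, and the alert fires on a division by zero instead of a number someone can reason about.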

But here’s the dirty secret: these monitoring systems require data scientists and ML engineers to maintain. When you cut 4,000 employees, you probably just eliminated the team that maintained your drift detection. The system now silently fails while dashboards show green.

The Legal Liability Firewall

Before we declare the human worker extinct, there’s a massive barrier: legal liability. As explored in legal and liability barriers limiting full automation in knowledge work, current laws create a fascinating architectural constraint.

If your AI denies a loan incorrectly, who’s liable? If your autonomous content moderation system takes down protected speech, who’s responsible? The answer, for now, is still “the company”, which means companies need humans in the liability chain.

This creates a bizarre architectural pattern: companies deploy AI to replace workers, but must keep a skeleton crew of “liability shields”, humans who officially review decisions but lack the time or context to actually do so. It’s security theater, but for legal compliance.

The Erosion of Learning and Institutional Memory

Perhaps the most insidious architectural impact is the decline of on-the-job learning amid rising AI-driven skill demands. When you eliminate junior roles and middle management, you break the mentorship pipeline that transfers knowledge.

The Intellizence data tells a brutal story: since January 2025, 5,296+ companies have announced mass layoffs. Companies like Accenture cut 11,000 employees as part of an “AI-focused restructuring.” IBM eliminated 24,000 jobs while pivoting to AI. The pattern is consistent: companies are shedding the exact roles that would train the next generation of AI-savvy workers.

This creates a death spiral: as the evolving roles of data scientists working alongside autonomous AI agents become more complex, the pipeline of talent capable of managing these systems dries up. You’re left with a small cadre of senior engineers who understand the old architecture and a massive layer of automated systems no one fully comprehends.

The New Architectural Patterns (That Actually Work)

If companies insist on this path, they need fundamentally different architectures. The research reveals several patterns:

1. Asynchronous Everything

Never block on AI inference. Use message brokers (Kafka, Pub/Sub) and return 202 Accepted immediately. This prevents cascade failures but requires completely rethinking your API contracts.
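A minimal sketch of that contract, assuming an in-process `queue.Queue` as a stand-in for a real broker such as Kafka or Pub/Sub. The `submit` and `get_status` functions, the `/jobs/{id}` URL shape, and `slow_inference` are hypothetical names for illustration.

```python
import queue
import threading
import uuid
from typing import Dict, Optional, Tuple

jobs: "queue.Queue[Tuple[str, str]]" = queue.Queue()  # stand-in for a message broker
status: Dict[str, Tuple[str, Optional[str]]] = {}     # job_id -> (state, result)
lock = threading.Lock()


def submit(prompt: str) -> Tuple[int, Dict[str, str]]:
    """Enqueue the request and return 202 Accepted without waiting for inference."""
    job_id = str(uuid.uuid4())
    with lock:
        status[job_id] = ("pending", None)
    jobs.put((job_id, prompt))
    return 202, {"job_id": job_id, "status_url": f"/jobs/{job_id}"}


def get_status(job_id: str) -> Tuple[int, Dict[str, Optional[str]]]:
    """Polling endpoint: the client asks later instead of holding a connection open."""
    with lock:
        entry = status.get(job_id)
    if entry is None:
        return 404, {"error": "unknown job"}
    state, result = entry
    return 200, {"status": state, "result": result}


def slow_inference(prompt: str) -> str:
    return prompt.upper()  # placeholder for a real model call


def worker() -> None:
    while True:
        job_id, prompt = jobs.get()
        try:
            outcome = ("done", slow_inference(prompt))
        except Exception as exc:  # record the failure instead of killing the worker
            outcome = ("failed", str(exc))
        with lock:
            status[job_id] = outcome
        jobs.task_done()


threading.Thread(target=worker, daemon=True).start()
```

The API contract change is visible in the return types: callers get a job ID and a status URL, not an answer, so every client has to learn to poll or subscribe.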

2. Semantic Caching with Vector DBs

Cache based on meaning, not exact matches. But someone needs to maintain the embedding models and validate cache quality, tasks that require specialized skills.
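The sketch below shows the lookup logic only. The bag-of-words `embed` function is a toy stand-in for a real embedding model, the linear scan stands in for a vector database, and the 0.8 similarity threshold is an arbitrary assumption.

```python
import math
from collections import Counter
from typing import Dict, List, Optional, Tuple

Vector = Dict[str, float]


def embed(text: str) -> Vector:
    """Toy bag-of-words embedding; a stand-in for a real embedding model."""
    return dict(Counter(text.lower().split()))


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold  # hypothetical similarity cutoff
        self.entries: List[Tuple[Vector, str]] = []

    def put(self, query: str, answer: str) -> None:
        self.entries.append((embed(query), answer))

    def get(self, query: str) -> Optional[str]:
        """Return the cached answer for the most similar query, or None on a miss."""
        q = embed(query)
        best_score, best_answer = 0.0, None
        for vector, answer in self.entries:
            score = cosine(q, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None
```

The threshold is where the maintenance burden hides. Set it too low and the cache serves answers to questions nobody asked; set it too high and it never hits. Picking it, and re-picking it when the embedding model changes, is a judgment call that needs a person.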

3. Graceful Degradation & Load Shedding

When GPU clusters saturate, fall back to cheaper models or static rules. This requires architectural foresight most “AI-first” initiatives lack.
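One way to sketch this is a fallback chain in which the expensive handler sheds load by raising when it is at capacity. The `Saturated` exception, `GpuModel` class, and `static_rules` handler are hypothetical, and the in-flight counter is simplified (it is not thread-safe).

```python
from typing import Callable, List, Tuple


class Saturated(Exception):
    """Raised by a handler that refuses work because it is at capacity."""


class GpuModel:
    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.in_flight = 0  # simplified; a real server would guard this with a lock

    def __call__(self, request: str) -> str:
        if self.in_flight >= self.capacity:
            raise Saturated("gpu pool full")  # shed load instead of queueing forever
        self.in_flight += 1
        try:
            return f"large-model answer to: {request}"
        finally:
            self.in_flight -= 1


def static_rules(request: str) -> str:
    """Last-resort handler: no model involved, always available."""
    return "We received your request and will follow up shortly."


def with_fallback(request: str,
                  handlers: List[Tuple[str, Callable[[str], str]]]) -> Tuple[str, str]:
    """Try handlers in order of preference; skip any that report saturation."""
    for name, handler in handlers:
        try:
            return name, handler(request)
        except Saturated:
            continue
    raise Saturated("all handlers unavailable")
```

Returning the handler name alongside the answer is deliberate: if nobody can tell how often the system is running on static rules, degradation is just another silent failure.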

4. Observability as Code

Your monitoring must be as sophisticated as your models. This means treating drift detection, feature skew monitoring, and confidence tracking as first-class architectural concerns, not afterthoughts.
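As a small example of what "as code" could mean here, the sketch below declares a confidence check as data and evaluates it over a rolling window. The `ConfidenceCheck` fields and the sample thresholds are assumptions for illustration, not a standard schema.

```python
from collections import deque
from dataclasses import dataclass
from statistics import mean
from typing import Deque


@dataclass(frozen=True)
class ConfidenceCheck:
    """A declarative check that can be versioned and reviewed like any other code."""
    name: str
    window: int                 # number of recent predictions to average over
    min_mean_confidence: float  # alert when the rolling mean falls below this


class ConfidenceMonitor:
    def __init__(self, check: ConfidenceCheck) -> None:
        self.check = check
        self.values: Deque[float] = deque(maxlen=check.window)

    def record(self, confidence: float) -> bool:
        """Record one prediction's confidence; return True if the check is breached."""
        self.values.append(confidence)
        window_full = len(self.values) == self.check.window
        return window_full and mean(self.values) < self.check.min_mean_confidence
```

Waiting for a full window before alerting avoids paging on the first low-confidence request after a deploy, at the cost of reacting a little later.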

The Contradiction at the Heart of the AI Revolution

Here’s what makes this entire trend simultaneously fascinating and terrifying: the companies most aggressively cutting human workers are the ones least architecturally prepared for fully autonomous operation.

Block, AOL, Accenture, and thousands of others are making a massive architectural bet: that AI can replace humans before the accumulated technical debt and institutional knowledge loss cause catastrophic failure.

The conflicting narratives from tech leaders downplaying AI’s role in job displacement don’t help. Google Cloud’s CEO calls AI job fears “overhyped” while his company builds the tools that enable mass layoffs. Salesforce’s Benioff cuts 4,000 jobs while claiming AI “augments” humans. The cognitive dissonance is staggering.

What This Means for Architects and Engineers

If you’re building systems today, you’re not just writing code; you’re designing the scaffolding for a post-human architecture. This means:

- Design for absent humans: Assume the human-in-the-loop will be eliminated in the next round of layoffs. Build fallback mechanisms that work without human intervention.

- Instrument everything: If you can’t measure drift, you can’t manage it. Treat observability as a critical feature, not ops overhead.

- Document ruthlessly: When the team that built the system is gone, documentation becomes your institutional memory. Generate it automatically, keep it current, make it executable.

- Plan for catastrophic failure: With humans gone, your failure modes become binary. Either the system works or it doesn’t. There’s no one left to perform heroic manual interventions.

- Understand the legal landscape: The enterprise architectural challenges of deploying AI on-premises vs. cloud-native models, and how regulatory and sovereignty concerns are reshaping cloud and AI infrastructure design, will determine what you can actually automate versus what requires human liability shields.
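To illustrate the first point in the list above, here is a minimal sketch of an escalation that does not assume anyone is on the other end. The `Escalation` type, the timeout, and the `"deny_and_log"` default are hypothetical; the right safe default depends entirely on the domain.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Escalation:
    created_at: float                        # seconds, e.g. from time.time()
    acknowledged_at: Optional[float] = None  # set when a human picks it up


def resolve(escalation: Escalation, now: float,
            ack_timeout_s: float, safe_default: str) -> str:
    """Decide what happens to an escalated case, assuming the reviewer may be gone."""
    if escalation.acknowledged_at is not None:
        return "human_review"
    if now - escalation.created_at >= ack_timeout_s:
        # Nobody acknowledged in time: fall back to the conservative action
        # rather than leaving the case in a queue no one reads.
        return safe_default
    return "waiting"
```

A case that times out this way is also a signal worth counting: a rising rate of unacknowledged escalations means the approval queue is already routing to an empty room.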

The Uncomfortable Truth

Block’s 4,000 layoffs aren’t a story about AI efficiency; they’re a story about architectural gambling. The company is betting that the cost savings from eliminated salaries will outweigh the eventual cost of catastrophic failure, regulatory penalties, and technical debt.

The research is clear: AI systems at scale require more sophisticated monitoring, more complex fallback mechanisms, and more architectural sophistication than traditional systems. Removing humans doesn’t simplify your architecture; it makes it more brittle, more prone to silent failure, and harder to debug.

Yet companies proceed because the short-term financial math is irresistible. A 4,000-person layoff saves hundreds of millions in salaries. The cost of a catastrophic AI failure is hypothetical, until it isn’t.

Final Thoughts: The Architecture of Absence

We’re witnessing the birth of a new architectural pattern: systems designed for the humans who are no longer there. These architectures include ghostly remnants of human processes: approval queues that route to empty Slack channels, escalation paths that terminate in abandoned email addresses, and monitoring dashboards that no one monitors.

The question isn’t whether AI can replace humans. The question is whether our architectures can survive the absence of the people who understood how they fail.

The next time you read about a company “embracing AI” through mass layoffs, don’t think about the cost savings. Think about the architectural debt they’re incurring. Think about the silent failures no one will catch. Think about the institutional knowledge evaporating with every departing employee.

And most importantly, think about this: the systems we’re building today will be maintained by the skeleton crews of tomorrow. Design accordingly, or watch them crumble.

The data doesn’t lie: since January 2025, more than 5,296 companies have announced layoffs, many explicitly tied to AI adoption. The architectural implications are no longer theoretical; they’re being battle-tested in production systems right now, often without the humans who know how to fix them when they break.

The revolution isn’t coming. It’s already here, and it’s deleting your colleagues’ Slack accounts one by one.