The Great Ambiguity: Why Nobody Can Agree What an AI Engineer Actually Does

You’ve fine-tuned LLMs, built RAG pipelines with LangGraph, and deployed agents via FastAPI on Hugging Face Spaces. You know your embeddings from your encodings. And yet, staring at a job posting for “AI Engineer, New Grad”, you’re paralyzed by a question that has nothing to do with transformer architecture: Do I need to know the Gang of Four design patterns? Should I understand Linux process scheduling? Is this just software engineering with a fresh coat of AI paint, or data science with extra Docker?

Welcome to the identity crisis of 2026. The AI Engineer title has exploded across LinkedIn and startup job boards, but shifting demand in the data science job market has left a vacuum: nobody (not hiring managers, not senior engineers, and certainly not new graduates) can agree on what the role actually requires.

The Stats Major’s Dilemma

A recent discussion in the data science community highlighted this confusion perfectly. A statistics student with solid deep learning experience (RAG systems, agent tooling, modular Python code) asked what felt like a forbidden question: What am I missing from the CS degree I never took?

The anxiety is specific and technical. Do AI engineers need to understand thread scheduling and memory management? Is traditional system design (load balancers, database sharding, caching layers) relevant when your “system” is mostly vector stores and API calls to foundation models? The student had already mastered deployment via Docker and FastAPI, but worried that skipping Operating Systems 101 was a career-ending mistake.

The brutal truth? Even people who have spent years hiring for these roles can’t agree. One engineering manager with extensive hiring experience noted that we’ve reached a point where we can’t even define what a data scientist should do, let alone an AI engineer. Some organizations view the role as an LLM-specific data scientist: someone who builds agents, evaluates retrieval quality, and optimizes prompts. Others want a software engineer who happens to be familiar with temperature settings and embedding dimensions.

The Two Tribes of AI Engineering

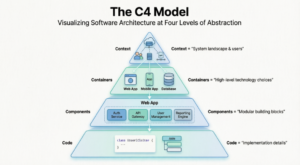

This ambiguity splits the industry into two distinct hiring philosophies, and knowing which one you’re interviewing for determines whether you should be studying red-black trees or retrieval evaluation metrics.

Tribe One: The Applied Researcher

These teams treat AI engineers as the evolution of the data scientist. They care deeply about your ability to evaluate model outputs, understand fine-tuning tradeoffs, and build with agents. If you can set up a FastAPI endpoint and wire it up to LLM calls, you’re considered engineering-sufficient. They’ll ask you about RAG pipeline architecture and implementing semantic consistency for AI systems, but they won’t care if you can explain the Linux kernel’s process scheduler.

Tribe Two: The Infrastructure Builders

These teams are building production systems at scale, and they expect traditional software engineering rigor. They want you to understand why temperature=0.0 collapses the sampling diversity that self-consistency voting depends on, but they also want you to discuss load balancing strategies and lessons from failed serverless database architectures. They’ll interview you on system design, expecting you to clarify read-to-write ratios and discuss collision handling in distributed hash generation, not just prompt engineering.

The disconnect is expensive. Candidates spend weeks cramming OS fundamentals that some interviewers consider irrelevant, while neglecting the architectural reasoning that others view as table stakes.

What Actually Matters (And What Doesn’t)

For new graduates specifically, the gap between “data science with deployment” and “software engineering with LLMs” narrows to a few concrete skills. You don’t need to be a Linux kernel hacker, but you do need to understand the mechanics of what happens when your Python code hits production.

The Non-Negotiables:

- Async vs. Threading: Understanding why blocking I/O destroys your LLM API throughput is crucial. If you’re calling OpenAI or Anthropic endpoints synchronously in a high-throughput service, you’ve already failed the latency/cost tradeoff analysis.

- Basic System Design for AI: Knowing how to structure a RAG pipeline is necessary but not sufficient. You need to reason about caching strategies, about managing compatibility in distributed event systems, and about your API bill, starting from the rule of thumb that 1,000 tokens is roughly 750 words of English text.

- Cost Awareness: Interviewers are increasingly asking about the financial implications of architectural choices. Can you explain why cost and latency tradeoffs in edge AI deployment might favor a smaller, quantized model over a cloud API for certain use cases?
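
The blocking-versus-async point in the first bullet can be sketched in a few lines. This is a minimal illustration, not a production client: `fake_llm_call` is a hypothetical stand-in that simulates network latency with `asyncio.sleep`, and the 50 ms latency and the limit of five in-flight requests are made-up numbers.

```python
import asyncio
import time


async def fake_llm_call(prompt: str, latency_s: float = 0.05) -> str:
    """Hypothetical stand-in for a network-bound LLM request."""
    await asyncio.sleep(latency_s)  # simulates waiting on the network
    return f"response to: {prompt}"


async def run_sequential(prompts: list[str]) -> list[str]:
    # Each request waits for the previous one to finish.
    return [await fake_llm_call(p) for p in prompts]


async def run_concurrent(prompts: list[str], max_in_flight: int = 5) -> list[str]:
    # A semaphore caps in-flight requests, which is one simple way
    # to stay under a provider's rate limit.
    sem = asyncio.Semaphore(max_in_flight)

    async def bounded(prompt: str) -> str:
        async with sem:
            return await fake_llm_call(prompt)

    # gather() preserves the order of the inputs in its result list.
    return await asyncio.gather(*(bounded(p) for p in prompts))


def timed(coro_fn, prompts):
    """Run a coroutine function and return (results, elapsed seconds)."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn(prompts))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    prompts = [f"question {i}" for i in range(10)]
    _, seq_s = timed(run_sequential, prompts)
    _, conc_s = timed(run_concurrent, prompts)
    print(f"sequential: {seq_s:.2f}s, concurrent: {conc_s:.2f}s")
```

With ten prompts, the sequential version pays the simulated latency ten times, while the concurrent version pays it roughly twice (two batches of five). The same shape applies to real async HTTP clients, though actual gains depend on the provider's rate limits.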

The Nice-to-Haves:

- Design Patterns: Clean code and solid OOP principles matter more than memorizing the Gang of Four. Nobody will ask you to implement an abstract factory pattern on a whiteboard, but they will ask you to structure a modular agent system that doesn’t become spaghetti code.

- Deep OS Knowledge: Understanding CPU vs. GPU memory management helps, but you don’t need to write a scheduler. Know that your model weights live in VRAM and that context switching has overhead, then move on.
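
For the "model weights live in VRAM" point, a back-of-the-envelope estimate is usually enough. The sketch below counts weights only; it ignores the KV cache, activations, and framework overhead, and the 80% headroom factor is an assumption chosen for illustration, not a standard.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, dtype: str) -> float:
    """Memory for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


def fits_in_vram(num_params: float, dtype: str, vram_gib: float,
                 headroom: float = 0.8) -> bool:
    """Rough check: do the weights fit in a fraction of the card's memory?

    `headroom` leaves room for everything this estimate ignores
    (assumed value, tune for your workload).
    """
    return weight_memory_gib(num_params, dtype) <= vram_gib * headroom


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        print(f"7B params @ {dtype}: {weight_memory_gib(7e9, dtype):.1f} GiB")
```

Under these assumptions a 7-billion-parameter model needs about 13 GiB at fp16 and a little over 3 GiB at 4-bit, which is the arithmetic behind preferring a quantized model on a small GPU.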

The Interview Reality Check

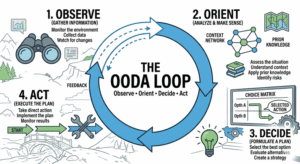

Modern AI engineering interviews have evolved to test whether you can think architecturally, not just whether you can import LangChain. One engineering leader detailed how they distinguish between surface-level knowledge and genuine expertise: they ask candidates to go deep on a project they claim to understand, probing specific design decisions, trade-offs, and failure modes.

This “going deep” tactic reveals whether you actually built the RAG system on your resume or just prompted Claude to generate it for you. If you can’t explain why you chose a particular chunking strategy, or how you’d handle a vector database outage, or why you set temperature=0.7 instead of 0.0 to ensure reasoning path diversity in your self-consistency voting, the interview ends quickly.
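
The temperature question is easy to demonstrate with a toy. In the sketch below, `toy_sample` is a hypothetical stand-in for "sample one reasoning path and read off its final answer"; the answer distribution is invented, and temperature is modeled by raising each probability to the power 1/T, which mirrors how temperature rescales a softmax.

```python
import random
from collections import Counter

ANSWERS = ["42", "41", "40"]
BASE_PROBS = [0.6, 0.25, 0.15]  # made-up distribution over final answers


def toy_sample(temperature: float, rng: random.Random) -> str:
    """Hypothetical stand-in for one sampled reasoning path's final answer."""
    if temperature == 0.0:
        # Greedy decoding: always the single most likely answer.
        return ANSWERS[BASE_PROBS.index(max(BASE_PROBS))]
    # Temperature scaling: p ** (1/T), renormalized by rng.choices.
    weights = [p ** (1.0 / temperature) for p in BASE_PROBS]
    return rng.choices(ANSWERS, weights=weights)[0]


def self_consistency(n_samples: int, temperature: float, seed: int = 0):
    """Sample n answers and return (majority answer, vote counts)."""
    rng = random.Random(seed)
    votes = Counter(toy_sample(temperature, rng) for _ in range(n_samples))
    answer, _ = votes.most_common(1)[0]
    return answer, votes


if __name__ == "__main__":
    for temp in (0.0, 0.7):
        answer, votes = self_consistency(n_samples=200, temperature=temp)
        print(f"T={temp}: answer={answer}, votes={dict(votes)}")
```

At temperature 0.0 every sample is identical, so the vote carries no information and all but one call is wasted spend. At 0.7 the samples disagree, and the size of the majority becomes a usable confidence signal.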

The grading rubric for these system design interviews typically weights trade-offs and bottlenecks at 25%, the same weight as detailed design. Junior candidates often ace the high-level architecture but fail because they can’t identify what breaks. They’ll propose a SQL database for a URL shortener without considering the read-to-write ratio, or suggest MD5 hashing without addressing collision handling. In AI engineering contexts, they’ll design a retrieval system without considering what happens when the embedding model is updated and vectors from the old and new models are no longer comparable, a problem that calls for database infrastructure supporting versioning and compatibility.
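
One way to make the embedding-update failure concrete is to tag every stored vector with the model version that produced it. The sketch below is a toy in-memory index under that assumption; `VersionedIndex`, `StoredVector`, and the version strings are hypothetical names for illustration, not the API of any particular vector database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredVector:
    doc_id: str
    values: tuple[float, ...]
    model_version: str  # which embedding model produced this vector


class VersionMismatchError(ValueError):
    """Raised when a query was embedded with a different model than the index."""


def dot(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    return sum(x * y for x, y in zip(a, b))


class VersionedIndex:
    """Toy index that refuses to compare vectors across model versions."""

    def __init__(self, active_version: str):
        self.active_version = active_version
        self._vectors: list[StoredVector] = []

    def add(self, vec: StoredVector) -> None:
        self._vectors.append(vec)

    def search(self, query: tuple[float, ...], query_version: str,
               k: int = 3) -> list[str]:
        if query_version != self.active_version:
            raise VersionMismatchError(
                f"query uses {query_version}, index serves {self.active_version}"
            )
        # Only vectors from the active model are comparable to the query.
        candidates = [v for v in self._vectors
                      if v.model_version == self.active_version]
        ranked = sorted(candidates, key=lambda v: dot(query, v.values),
                        reverse=True)
        return [v.doc_id for v in ranked[:k]]

    def stale_doc_ids(self) -> list[str]:
        """Documents that still need re-embedding with the active model."""
        return [v.doc_id for v in self._vectors
                if v.model_version != self.active_version]
```

The design choice is to fail loudly on a version mismatch instead of returning plausible-looking but meaningless similarity scores, and to expose the re-embedding backlog so a migration can be tracked.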

Bridging the Gap Without a CS Degree

If you’re coming from statistics or data science without the traditional CS background, the path forward isn’t to cram operating systems textbooks. It’s to demonstrate architectural reasoning with the tools you already know.

Start by instrumenting your projects for production realities. If you’ve built a chatbot with foundation model APIs, can you discuss the cost implications? Can you explain your caching strategy for repeated queries? If you’ve trained local models, can you discuss throughput optimization and how to build efficient local coding environments that respect memory constraints?
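
The cost and caching questions above can be answered with very little code. The sketch below uses the 1,000 tokens to 750 words rule of thumb for a rough token count and an exact-match cache keyed on a normalized prompt. The per-1k-token prices are parameters you supply (no real price list is assumed), and `call_model` is a hypothetical callable standing in for your API client.

```python
import hashlib

WORDS_PER_TOKEN = 0.75  # rule of thumb: 1,000 tokens is roughly 750 words


def estimate_tokens(text: str) -> int:
    """Rough token count from a word count; a real tokenizer will differ."""
    words = len(text.split())
    return max(1, round(words / WORDS_PER_TOKEN))


def estimate_cost_usd(tokens_in: int, tokens_out: int,
                      price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Cost of one request given per-1k-token prices for input and output."""
    return (tokens_in / 1000 * price_in_per_1k
            + tokens_out / 1000 * price_out_per_1k)


class CachedClient:
    """Exact-match cache in front of a model call (hypothetical wrapper)."""

    def __init__(self, call_model):
        self._call_model = call_model  # assumed signature: (prompt) -> str
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivial variants share one entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        answer = self._call_model(prompt)
        self._cache[key] = answer
        return answer
```

Multiplying `estimate_cost_usd` by expected daily traffic and by one minus the cache hit rate gives a first-order bill. Exact-match caching only helps when the same question really does recur and a previously generated answer is acceptable to reuse.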

The most telling signal in interviews isn’t whether you can recite the four pillars of OOP; it’s whether you can explain the failure modes of your own system. What happens when the LLM hallucinates? How do you handle rate limiting? What’s your fallback when the vector database is down?
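
Two of those failure modes, rate limiting and a retrieval outage, have well-known first answers: retry with exponential backoff, and degrade gracefully. The sketch below shows one possible shape. The exception classes and the `retrieve` and `generate` callables are hypothetical placeholders for whatever your client libraries actually raise and expose.

```python
import random
import time


class RateLimitError(Exception):
    """Placeholder for a provider's rate-limit (HTTP 429) error."""


class RetrievalUnavailable(Exception):
    """Placeholder for a vector database outage."""


def call_with_backoff(fn, max_attempts: int = 4, base_delay_s: float = 0.01,
                      sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the error to the caller
            delay = base_delay_s * (2 ** attempt)
            # Jitter spreads retries out so clients do not retry in lockstep.
            sleep(delay + random.uniform(0, delay))


def answer(question: str, retrieve, generate) -> dict:
    """Answer with retrieved context if possible, otherwise without it."""
    try:
        context = retrieve(question)
        grounded = True
    except RetrievalUnavailable:
        context = []       # fall back to answering without retrieval
        grounded = False   # the caller can label or suppress such answers
    text = call_with_backoff(lambda: generate(question, context))
    return {"text": text, "grounded": grounded}
```

Returning the `grounded` flag instead of hiding the fallback lets the caller decide what to do with an ungrounded answer, for example showing a warning, since answers generated without retrieved context are more likely to be hallucinated.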

The Undefined Role Is the Opportunity

The ambiguity surrounding AI engineering isn’t a bug, it’s a feature of a field that’s still being invented. Unlike software engineering, where decades of practice have ossified interview standards (invert a binary tree, anyone?), AI engineering is still fluid enough that a statistics major with Docker experience and a CS grad with PyTorch knowledge can both claim the title.

This flexibility is double-edged. It means you can shape the role around your strengths, whether that’s rigorous evaluation methodology or scalable system architecture, but it also means you must be prepared for interviews that test wildly different competencies. Some teams will drill you on managing compatibility in distributed event systems; others will ask you to optimize embedding retrieval latency.

The winning strategy isn’t to learn everything. It’s to know which tribe you’re interviewing with, and to be ready to go deep on the specific trade-offs that keep production AI systems running. Because in 2026, being an AI engineer means being comfortable with ambiguity, not just in your models’ outputs, but in your job description itself.