The Architect in the Loop: Adapting System Design for the AI-Centric Development Era

From Drafting to Governance: The New Architecture Pipeline

Traditional system design followed a linear path: business stakeholders describe goals, architects translate those into technical specifications, and developers implement. The process was slow, manual, and prone to the telephone game of misaligned priorities.

Now, tools that generate architecture diagrams directly from business intent are collapsing that workflow. Modern tools use large language models to extract functional requirements, non-functional constraints (scalability, latency, compliance), and industry regulations from natural language descriptions. The AI then maps these to architectural patterns (monolith vs. microservices, event-driven vs. synchronous) and produces deployment diagrams, security architectures, and data flow visualizations in minutes rather than weeks.

The Intelligent Pipeline

- Intent Understanding: Extract requirements and constraints from business language

- Architectural Reasoning: Select patterns based on recognized best practices

- Component Mapping: Connect databases, APIs, message queues, and identity layers

- Diagram Generation: Produce logical, cloud, and security architecture views

- Iteration: Refine instantly as business needs change

But here’s the catch: the AI doesn’t know about the 2019 incident that banned specific AWS regions from your infrastructure. It doesn’t know that your “microservices” are actually tightly-coupled distributed monoliths because of legacy database constraints. And it certainly doesn’t know that the CTO has an irrational hatred of Kubernetes. Your AI coding assistant is architecturally blind to these nuances, and that blindness extends to system design.

Why Architecture Matters More Than Prompts

The industry’s obsession with prompt engineering is missing the point. As one engineer noted in recent coverage of reliable enterprise AI: “Many teams focus on prompts when they should be focusing on architecture.”

Reliable AI systems aren’t built through clever prompts. They’re engineered through retrieval pipelines, context design, and verification layers.

When an AI generates architecture without persistent memory of your organization’s standards, it’s essentially a surgeon closing their eyes and guessing the anesthetic dosage based on a textbook from years ago. That behavior has a name in AI circles: hallucination.

For mission-critical systems in finance, healthcare, or aerospace, an AI that “sounds confident but guesses” is unacceptable. The solution isn’t better prompts; it’s a Retrieval-Augmented Generation (RAG) architecture.

Technical Implementation:

- Embeddings: Convert documentation and compliance material into high-dimensional vectors (text-embedding-3-large from OpenAI, or open-source alternatives)

- Vector Storage: Specialized DBs like Pinecone, Weaviate, or Qdrant for semantic search beyond simple keyword matching

- Semantic Chunking: Break documents into coherent pieces with overlap strategies (chunk_size = 500 tokens, overlap = 50 tokens)

- Context Injection: Retrieve relevant patterns before LLM design generation
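To make the chunk_size and overlap numbers concrete, here is a minimal sketch of overlapping chunking. It is a fixed-window simplification: real semantic chunking would also respect sentence or section boundaries, and the whitespace split stands in for a real tokenizer. The function name `chunk_tokens` is hypothetical.

```python
def chunk_tokens(
    tokens: list[str], chunk_size: int = 500, overlap: int = 50
) -> list[list[str]]:
    """Split tokens into windows of chunk_size that share `overlap` tokens."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    step = chunk_size - overlap  # how far each window advances
    chunks: list[list[str]] = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks


if __name__ == "__main__":
    # Assumption: whitespace splitting as a stand-in for a model tokenizer.
    document = "Encrypt all data at rest. " * 300
    for i, chunk in enumerate(chunk_tokens(document.split())):
        print(i, len(chunk))
```

The overlap means a rule that straddles a chunk boundary still appears whole in at least one chunk, at the cost of storing some text twice.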

Without this infrastructure, you’re not getting architecture, you’re getting statistically probable arrangements of cloud services that might work, or might accelerate your system’s entropy until it collapses.

The Verification Imperative: Learning from Code Review

The parallels between AI-generated code and AI-generated architecture are striking. In both domains, false positives are the enemy of trust. Research shows that AI code review tools produce false positive rates between 5% and 15%. That sounds low until you realize that at 10% false positives with 20 comments per PR, two comments are wrong.
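The arithmetic is worth spelling out. The sketch below reproduces the two-wrong-comments figure and adds one derived number: the chance that a PR contains at least one wrong comment. That second figure assumes each comment is wrong independently of the others, which is a simplification.

```python
def expected_wrong_comments(fp_rate: float, comments: int) -> float:
    """Average number of incorrect comments per PR."""
    return fp_rate * comments


def prob_at_least_one_wrong(fp_rate: float, comments: int) -> float:
    """Chance a PR has one or more incorrect comments (assumes independence)."""
    return 1 - (1 - fp_rate) ** comments


if __name__ == "__main__":
    print(expected_wrong_comments(0.10, 20))             # 2.0
    print(round(prob_at_least_one_wrong(0.10, 20), 2))   # 0.88
```

Under that assumption, roughly seven out of eight PRs carry at least one bad comment, which is how a rate that sounds low erodes reviewer trust quickly.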

Architecture has higher stakes. A false positive in code review wastes time; a false positive in system design can take down production.

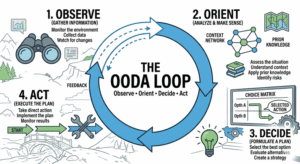

This is why the emerging pattern of K-LLM Orchestration is critical for architectural decisions. Instead of trusting a single model’s output, advanced systems run multiple LLMs in parallel against the same requirements, then synthesize the results through majority voting.

Shuffled Variants

Generate 6 different orderings of requirements using deterministic seeds, so each model processes constraints differently.

Parallel Review

Send to diverse models (Claude Opus, GPT-4o, Gemini Pro) with varying temperatures (0.3 to 0.55).

Consensus Clustering

Group findings by component. Four or more of six models agreeing counts as “Strong Consensus”; a single-model finding is “Weak” and requires human verification.

Validation Pass

Trace execution paths and data flows against canonical requirements to filter false positives.
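The shuffling and clustering steps above can be sketched in a few lines. This is an illustration, not k-review’s actual code: the function names are hypothetical, findings are reduced to (run id, component) pairs, and the “partial” label for two or three agreeing runs is an assumption, since only the strong and weak cases are defined above.

```python
import random
from collections import defaultdict


def shuffled_variants(
    requirements: list[str], k: int = 6, base_seed: int = 0
) -> list[list[str]]:
    """Return k reproducible orderings of the same requirements."""
    variants = []
    for i in range(k):
        rng = random.Random(base_seed + i)  # fixed seed -> same order every run
        variant = list(requirements)
        rng.shuffle(variant)
        variants.append(variant)
    return variants


def classify_consensus(
    findings: list[tuple[str, str]], strong_threshold: int = 4
) -> dict[str, str]:
    """Map each component to a consensus label.

    findings holds (run_id, component) pairs, one per issue a run reported.
    """
    runs_by_component: dict[str, set[str]] = defaultdict(set)
    for run_id, component in findings:
        runs_by_component[component].add(run_id)  # count each run once

    labels = {}
    for component, runs in runs_by_component.items():
        if len(runs) >= strong_threshold:
            labels[component] = "strong"
        elif len(runs) == 1:
            labels[component] = "weak"     # route to human verification
        else:
            labels[component] = "partial"  # assumption: not defined in the text
    return labels
```

Seeding each variant separately keeps a run reproducible, so a disputed finding can be replayed with the exact ordering that produced it.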

This isn’t theoretical. Tools like k-review apply this pattern to code review, but the same approach applies to architecture generation. When designing a payment gateway or authentication system, you don’t want one model’s opinion, you want a consensus of specialized agents checking for security vulnerabilities, compliance gaps, and scalability bottlenecks.
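The parallel fan-out itself is mostly plumbing. A minimal sketch with asyncio follows; `call_model` is a hypothetical stand-in for whatever provider SDK you use, and the model strings are placeholder labels rather than verified API identifiers.

```python
import asyncio


async def call_model(model: str, temperature: float, prompt: str) -> dict:
    """Hypothetical stand-in for a real provider SDK call."""
    await asyncio.sleep(0)  # yield control, as a network request would
    return {"model": model, "temperature": temperature, "findings": []}


async def review_in_parallel(
    prompt: str, reviewers: list[tuple[str, float]]
) -> list[dict]:
    """Send the same prompt to every reviewer concurrently."""
    tasks = [call_model(model, temp, prompt) for model, temp in reviewers]
    # return_exceptions=True keeps one failing provider from sinking the batch.
    results = await asyncio.gather(*tasks, return_exceptions=True)
    return [r for r in results if not isinstance(r, BaseException)]


# Placeholder labels and temperatures taken from the range quoted above.
REVIEWERS = [("claude-opus", 0.3), ("gpt-4o", 0.4), ("gemini-pro", 0.55)]

if __name__ == "__main__":
    reviews = asyncio.run(
        review_in_parallel("Review this payment gateway design", REVIEWERS)
    )
    print([r["model"] for r in reviews])
```

Dropping failed calls silently is a design choice for the sketch; in practice you would likely log them, because a missing reviewer changes what “4 of 6” means.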

The Architect as Context Curator

If the AI is now the junior architect generating drafts, the human architect becomes the senior reviewer with veto power. The job description shifts from “designer of systems” to “governor of AI-generated systems.” This means mastering context engineering, the discipline of designing how information flows into the model.

Key Engineering Elements

- Retrieval Strategy: Pulling past decisions, compliance docs, and incident reports

- Chunking Structure: Preserving logical boundaries in documentation

- Guardrails and Constraints: Hard rules AI cannot violate (e.g., encryption at rest)
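Hard rules work best as plain code that runs regardless of what the model says. Here is a minimal sketch of a guardrail check; the `Component` shape, the region name, and the list of storage kinds are all hypothetical and would come from your own standards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    kind: str                     # e.g. "database", "queue", "api"
    region: str
    encrypted_at_rest: bool = False


# Hypothetical organization rules; replace with your own.
BANNED_REGIONS = {"example-region-1"}
STORAGE_KINDS = {"database", "object_store", "queue"}


def guardrail_violations(components: list[Component]) -> list[str]:
    """Return one message per hard rule that a proposed design breaks."""
    violations = []
    for c in components:
        if c.region in BANNED_REGIONS:
            violations.append(f"{c.name}: region {c.region} is banned")
        if c.kind in STORAGE_KINDS and not c.encrypted_at_rest:
            violations.append(f"{c.name}: storage must be encrypted at rest")
    return violations
```

Because the check is deterministic, a design that fails it can be rejected before any human looks at it, and the rule that fired is always explainable.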

Tool Integrations

- Connecting the AI to cost calculators

- Security scanners and dependency checkers

- Automated validation scripts

Your AI agent doesn’t give a damn about your architecture patterns unless you explicitly encode those patterns into the retrieval system. It will suggest chalk when you standardized on styleText(), or propose microservices for a team that can barely keep one monolith running.

Building the Verification Pipeline

For teams ready to implement this, the technical architecture resembles a sophisticated CI/CD pipeline for design decisions. Here’s how to build it:

1. The Webhook Listener

Set up a Flask or FastAPI endpoint to receive architecture generation requests. Verify signatures using HMAC-SHA256 to prevent unauthorized generation:

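    import hashlib
    import hmac

    # WEBHOOK_SECRET is assumed to be defined elsewhere (for example, loaded
    # from an environment variable) as the shared secret string.

    def verify_signature(payload_body: bytes, signature_header: str | None) -> bool:
        """Return True if the header matches the HMAC-SHA256 of the raw body."""
        if not signature_header:
            return False
        hash_object = hmac.new(
            WEBHOOK_SECRET.encode("utf-8"),
            msg=payload_body,
            digestmod=hashlib.sha256,
        )
        expected_signature = "sha256=" + hash_object.hexdigest()
        # Constant-time comparison avoids leaking information through timing.
        return hmac.compare_digest(expected_signature, signature_header)

2. Context Assembly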

Fetch relevant documentation from your vector store. If using a local model for privacy (critical for regulated industries), run Ollama with Qwen2.5-Coder:

    ollama pull qwen2.5-coder:7b
    ollama run qwen2.5-coder:7b "Review this architecture for compliance with SOC2 requirements"

3. Multi-Model Consensus

Send the architectural requirements to multiple models in parallel. Use temperature settings between 0.1 and 0.3 for more consistent, focused output; a low temperature reduces randomness but does not guarantee identical results across runs. Parse structured JSON responses with confidence scores:

    SYSTEM_PROMPT = """You are an expert security architect. Analyze the proposed architecture and identify:

    - Security vulnerabilities (injection, auth issues, data exposure)
    - Compliance gaps (GDPR, HIPAA, SOC2)
    - Scalability bottlenecks

    Return JSON with: file, component, severity, comment, confidence (0.0-1.0)
    Only include comments where confidence >= 0.7."""

4. Post-Generation Filtering

Validate that the AI-generated architecture actually addresses the requirements. Check for hallucinated services, impossible data flows, and components that violate your architectural guardrails.
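A minimal sketch of that filter, assuming the model returns a JSON array of findings with the fields requested in the system prompt. The component inventory is hypothetical, and the optional `file` field is ignored here because it rarely applies to architecture-level findings.

```python
import json

# Hypothetical inventory of components that actually exist in the design.
KNOWN_COMPONENTS = {"api-gateway", "auth-service", "orders-db"}
REQUIRED_FIELDS = {"component", "severity", "comment", "confidence"}


def filter_findings(raw_response: str, min_confidence: float = 0.7) -> list[dict]:
    """Keep only well-formed, confident findings about real components."""
    try:
        findings = json.loads(raw_response)
    except json.JSONDecodeError:
        return []  # model returned something that is not JSON
    if not isinstance(findings, list):
        return []

    kept = []
    for finding in findings:
        if not isinstance(finding, dict):
            continue
        if not REQUIRED_FIELDS.issubset(finding.keys()):
            continue  # missing a field the prompt asked for
        confidence = finding["confidence"]
        if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
            continue
        if confidence < min_confidence:
            continue  # the prompt asks for >= 0.7, but verify anyway
        component = finding["component"]
        if not isinstance(component, str) or component not in KNOWN_COMPONENTS:
            continue  # likely a hallucinated component
        kept.append(finding)
    return kept
```

Re-checking the confidence threshold in code may look redundant, but models do not reliably follow “only include” instructions, so the prompt is treated as a request and the filter as the rule.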

5. Human-in-the-Loop Review

Present the consensus-based architecture to human architects for final approval. Track dismissal rates; if developers are ignoring the AI’s suggestions 40% of the time, your context retrieval needs work.
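Tracking the dismissal rate needs very little machinery. A minimal sketch, assuming each reviewed suggestion is recorded as a boolean (True when the reviewer dismissed it); the function names are hypothetical and the 40% threshold comes from the figure above.

```python
def dismissal_rate(outcomes: list[bool]) -> float:
    """Fraction of suggestions that reviewers dismissed."""
    if not outcomes:
        return 0.0
    return sum(outcomes) / len(outcomes)


def needs_retrieval_review(outcomes: list[bool], threshold: float = 0.40) -> bool:
    """Flag the pipeline when dismissals reach the threshold."""
    return dismissal_rate(outcomes) >= threshold


if __name__ == "__main__":
    last_ten = [True, False, True, False, False, True, False, True, False, False]
    print(dismissal_rate(last_ten), needs_retrieval_review(last_ten))
```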

The Workforce Implications

As Block and other tech giants cut thousands of jobs to “embrace AI”, the architectural implications become stark. When workforce reduction shifts maintenance responsibilities to smaller teams, the verification layer becomes even more critical. You can’t afford architectural drift when you have half the engineers to fix it.

The architects who survive this transition aren’t the ones who can draw the prettiest diagrams. They’re the ones who can build the pipelines that verify AI-generated diagrams won’t cost the company millions in downtime.

Practical Takeaways

If you’re an architect navigating this shift:

Don’t try to automate entire system designs on day one. Pick one high-signal area, security review or compliance checking, and nail the accuracy before expanding scope.

Treat system prompts like code. Track which versions produce which architectural decisions. When you change the retrieval strategy, compare results against previous versions.
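One lightweight way to do this is to fingerprint the prompt together with the retrieval settings and store that fingerprint next to every generated decision. The sketch below is one possible approach; the function name and the config keys are hypothetical.

```python
import hashlib
import json


def prompt_version(system_prompt: str, retrieval_config: dict) -> str:
    """Short, stable fingerprint of a prompt plus its retrieval settings."""
    payload = json.dumps(
        {"prompt": system_prompt, "retrieval": retrieval_config},
        sort_keys=True,  # key order must not change the fingerprint
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


if __name__ == "__main__":
    # Hypothetical settings, echoing the chunking values used earlier.
    version = prompt_version(
        "You are an expert security architect.",
        {"chunk_size": 500, "overlap": 50, "top_k": 8},
    )
    print(version)
```

Any change to the prompt text or to a retrieval parameter yields a new fingerprint, so two architectural decisions can be compared knowing exactly which configuration produced each.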

AI-generated architecture should be advisory, not authoritative. Use COMMENT events, not REQUEST_CHANGES. Developers (and architects) will revolt if a bot blocks their deployment based on a hallucinated dependency.

Target under 5% false positive rates. Track every dismissed architectural suggestion. If the AI suggests using a service you’ve explicitly banned, that feedback needs to go back into your retrieval pipeline as a negative example.

The Future is Verification

The next generation of software architects won’t spend their days debating whether to use Kafka or RabbitMQ. They’ll spend their days curating the vector databases that inform those decisions, tuning the retrieval pipelines that fetch relevant context, and verifying that the AI-generated blueprints won’t collapse under load.

Architecture is shifting from static documentation to living, intelligent system blueprints that are continuously updated as business requirements evolve. But the architect remains essential, not as a draftsman, but as the verification layer standing between business intent and production disaster.

The tools are here. The models are capable. The question is whether you’re ready to stop designing systems and start governing the machines that design them.