SQLGlot’s 5x Speed Boost: You’re Not Paying for Python Anymore

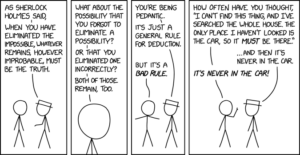

For years, the Python performance trade-off was a simple, if painful, equation: ease of development versus raw execution speed. If you needed speed, you reached for C extensions or Cython, or rewrote critical paths in Rust. The SQL parsing library SQLGlot, a pure-Python powerhouse supporting 34 SQL dialects, lived on the “ease” side of that line. It powered tools like SQLMesh and Apache Superset, but at the scale of millions of parsed queries, milliseconds per query added up.

Then the team at Fivetran, the library’s custodian, pulled off a stunt: they boosted its parsing speed by a factor of five. The kicker? They kept the exact same Python codebase. No Rust rewrite, no Cython annotations, no forking the project into a “fast” branch. They used mypyc, a compiler that turns type-annotated Python into C extensions, and in doing so, validated a path for performance-critical Python libraries that doesn’t involve abandoning the language’s primary virtues.

The resulting speedups aren’t uniform but are substantial where it counts most.

The Last Refuge of Pure Python

SQLGlot operates in a crowded space of SQL transpilers, where raw performance is a direct differentiator. Alternatives like the Rust/WASM-based Polyglot project benchmark themselves precisely against Python SQLGlot. The Fivetran team had already experimented with a Rust-based tokenizer (sqlglotrs), but it introduced cross-language build complexity, separate release cycles, and an added maintenance burden. Cython was a non-starter because it requires modifying the source with its own syntax. PyPy, while fast, is an entirely different runtime and therefore not an option for most production deployments.

The goal was clear: accelerate the core library without sacrificing its “zero-dependency, pip-installable, hackable pure Python” identity. This ruled out the conventional solutions. The team needed something that could look at their existing, well-typed Python code and simply make it faster. Enter mypyc.

How mypyc Cuts the Interpreter’s Umbilical Cord

mypyc is not a new language or runtime. It is a compiler built on top of the mypy type checker: it takes type-annotated Python modules and compiles them into CPython C extension modules. The gains come when those annotations are precise enough for mypyc to bypass Python’s dynamic dispatch, the lookups the interpreter performs at runtime to resolve what each operation actually does.

Consider a method call obj.parse(). In interpreted Python, the runtime must figure out what obj is, look up the parse method, resolve inheritance, and then call it. With compiled C extensions via mypyc, if the type of obj is known at compile-time (thanks to annotations), this can become a direct C function call. The overhead evaporates.
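A minimal sketch of that contrast, assuming a toy Tokenizer class (hypothetical, not SQLGlot’s API). Both functions behave identically when interpreted; the annotations only change the generated code once mypyc compiles the module. For a native class whose methods may be overridden, mypyc dispatches through a vtable rather than a fully static call, which is still far cheaper than a generic attribute lookup.

```python
from typing import Any


class Tokenizer:
    """Toy stand-in for a parser object; not SQLGlot's real class."""

    def parse(self, sql: str) -> list[str]:
        # Split on whitespace only; a real tokenizer does far more.
        return sql.split()


def run_dynamic(obj: Any, sql: str) -> list[str]:
    # obj is Any: even when compiled, "parse" must be found through a
    # generic attribute lookup and called via the Python calling convention.
    return obj.parse(sql)


def run_typed(obj: Tokenizer, sql: str) -> list[str]:
    # obj is a known native class: mypyc can resolve parse at compile time
    # and emit a native (vtable-based) call instead of a generic lookup.
    return obj.parse(sql)


if __name__ == "__main__":
    print(run_typed(Tokenizer(), "SELECT a FROM t"))  # ['SELECT', 'a', 'FROM', 't']
```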

For SQLGlot’s hot loops (scanning strings character by character in the tokenizer, traversing AST nodes, dispatching generation methods), this was a game-changer. The team compiled over 100 modules into C extensions, covering the tokenizer, parser, generator, all ~950 AST expression classes, dialect definitions, and core optimizer passes. At the same time, they deliberately left less frequently used modules, such as the executor and some optimizer passes, as pure Python to avoid unnecessary compilation overhead.
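Selective compilation can be sketched with mypyc’s setuptools helper, mypyc.build.mypycify, which takes a list of source paths. The package name mypkg, its module names, and the hot/cold split below are hypothetical, not SQLGlot’s build configuration; mypycify is imported lazily so the selection logic can be loaded and tested without mypyc installed.

```python
from pathlib import PurePosixPath

# Hypothetical split for a package named "mypkg": hot paths get compiled,
# rarely used modules (e.g. an executor) stay interpreted.
HOT_MODULES = frozenset({"tokenizer", "parser", "generator", "ast_nodes"})


def modules_to_compile(package: str, modules: list[str]) -> list[str]:
    """Return source paths for the modules worth compiling, in input order."""
    return [
        str(PurePosixPath(package) / f"{name}.py")
        for name in modules
        if name in HOT_MODULES
    ]


def build_ext_modules(package: str, modules: list[str]) -> list:
    # Imported here so this file can be loaded without mypyc installed.
    from mypyc.build import mypycify

    # mypycify returns setuptools Extension objects for the given paths.
    return mypycify(modules_to_compile(package, modules))
```

In a setup.py, the result would be passed as ext_modules to setuptools.setup(); any module left off the list simply ships as an ordinary .py file.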

The result is a dual-distribution package. pip install sqlglot gives you the pure Python library, while pip install "sqlglot[c]" also installs the sqlglotc package with pre-compiled wheels, which SQLGlot auto-detects and uses. If you can’t or don’t want to install C extensions, nothing changes: a crucial design decision for locked-down environments and for users who monkey-patch internals.
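The article doesn’t describe how SQLGlot detects sqlglotc, so the following is only a generic sketch of the optional-accelerator pattern, assuming a hypothetical companion package named mylib_compiled. If the import fails, the library quietly keeps using its pure Python code.

```python
import importlib
from types import ModuleType
from typing import Optional

# Hypothetical name of an optional compiled companion package;
# not SQLGlot's actual detection mechanism.
COMPILED_BACKEND = "mylib_compiled"


def find_compiled_backend(name: str = COMPILED_BACKEND) -> Optional[ModuleType]:
    """Return the compiled package if it is importable, else None."""
    try:
        return importlib.import_module(name)
    except ImportError:
        # Not installed, or no wheel for this platform: fall back silently.
        return None


def backend_description() -> str:
    return "compiled" if find_compiled_backend() is not None else "pure Python"
```

Keeping the fallback silent is what makes the extra truly optional: the same import path works whether or not the wheel is present.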

The Devil (and the 5x Speedup) is in the Details

Merely compiling the code got them part of the way, but the largest gains came from targeted adaptations and upstream contributions. They didn’t just use mypyc; they helped improve it.

The tokenizer, SQLGlot’s hottest code path, performs millions of character-level operations like str.isdigit() and str[i] indexing. The team discovered mypyc was calling the slow, generic Python C API for these operations. They contributed five new string primitives to mypyc’s core, unlocking dramatic speedups.

| Primitive | Speedup | PR |
|---|---|---|
| str.isspace() | ~1.3x | #20842 |
| str.isalnum() | up to 3.2x | #20852 |
| str.isdigit() | up to 3.5x | #20893 |
| str.lower()/str.upper() | up to 2.6x | #20948 |
| str[i] indexing (ASCII cache) | 3.9x | #21035 |
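A toy scanner (hypothetical and far simpler than SQLGlot’s real tokenizer) shows the shape of code these primitives target: every iteration indexes one character and calls a str predicate. It behaves the same interpreted or compiled; the speedups in the table would only show up in a mypyc-compiled build that includes those primitives.

```python
def tokenize(sql: str) -> list[str]:
    """Split SQL into words, numbers, and single-character symbols."""
    tokens: list[str] = []
    i = 0
    n = len(sql)
    while i < n:
        ch = sql[i]  # single-character indexing, the hottest operation
        if ch.isspace():
            i += 1
        elif ch.isdigit():
            start = i
            while i < n and sql[i].isdigit():
                i += 1
            tokens.append(sql[start:i])
        elif ch.isalnum() or ch == "_":
            start = i
            while i < n and (sql[i].isalnum() or sql[i] == "_"):
                i += 1
            # Normalize words to upper case (a toy choice for this sketch).
            tokens.append(sql[start:i].upper())
        else:
            # Anything else is emitted as a one-character symbol token.
            tokens.append(ch)
            i += 1
    return tokens


if __name__ == "__main__":
    print(tokenize("select a1, 42 from t"))  # ['SELECT', 'A1', ',', '42', 'FROM', 'T']
```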

The last one is particularly clever. In CPython, single-character ASCII strings are cached: "a"[0] returns a shared singleton string object rather than a fresh one. The original mypyc implementation allocated a new string object on every index, a massive overhead in a character-by-character scanner. By teaching mypyc to reuse CPython’s cached characters, the team scored a near-4x win on the most frequent operation in the library.
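The cache is visible from plain Python through object identity. This relies on a CPython implementation detail rather than a language guarantee, so other interpreters may behave differently; the helper name is hypothetical.

```python
def indexing_reuses_cached_chars(text: str) -> bool:
    """Return True if indexing each ASCII position twice yields the same object.

    Under CPython, one-character ASCII strings come from a shared cache,
    so both indexing operations hand back the identical object.
    """
    return all(text[i] is text[i] for i in range(len(text)) if text[i].isascii())
```

A compiled extension that builds a new one-character string on each index gives up exactly this reuse, paying an allocation per character.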

They also fixed fundamental mypyc bugs that blocked them, like a memory explosion when processing SQLGlot’s massive AST module (~950 classes in one file). The fix (python/mypy#20897) eliminated redundant work in an internal compiler pass, allowing compilation to finish on a 64GB machine.

The Real Cost of “Write Once, Run Anywhere”

While the performance story is compelling, the SQLGlot effort underscores that mypyc is not a magic toggle. Certain Python patterns break under compilation.

Compiled C extension types behave differently than pure Python classes. You can’t monkey-patch them at runtime. They don’t have a __dict__ (similar to __slots__), so you can’t arbitrarily add attributes. Subclassing from regular Python code requires explicit decorators. Functions lose their __code__ object. SQLGlot’s own tests, which create ad-hoc subclasses or patch methods, had to be adjusted, and the team maintains that pure Python must remain a first-class option for users who rely on these dynamic features.
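The missing __dict__ can be approximated in plain Python with __slots__, and the subclassing opt-in uses the mypyc_attr decorator from mypy_extensions with allow_interpreted_subclasses=True. The class names below are hypothetical; the fallback definition only keeps the sketch runnable without mypy_extensions, mirroring the real decorator, which does nothing at runtime.

```python
try:
    # Real helper from mypy_extensions; it is a no-op when interpreted.
    from mypy_extensions import mypyc_attr
except ImportError:  # fallback so the sketch runs without mypy_extensions
    def mypyc_attr(*attrs, **kwattrs):
        return lambda cls: cls


class SlottedNode:
    # __slots__ approximates the fixed layout of a compiled native class:
    # no per-instance __dict__, so unknown attributes cannot be attached.
    __slots__ = ("name",)

    def __init__(self, name: str) -> None:
        self.name = name


@mypyc_attr(allow_interpreted_subclasses=True)
class ExtensibleNode:
    # When compiled, this flag permits subclasses defined in interpreted code.
    def describe(self) -> str:
        return "base"


def try_add_attribute(node: SlottedNode) -> bool:
    """Return True if an ad-hoc attribute could be attached to node."""
    try:
        node.alias = "x"  # type: ignore[attr-defined]
    except AttributeError:
        return False
    return True
```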

This trade-off is the new frontier for performance-sensitive Python libraries. You can keep your codebase pure and hackable, but to unlock C-like speeds, you must accept constraints that make the compiled code less dynamic. Many teams are willing to make that trade, especially for libraries that form the backbone of data pipelines, where performance directly affects cost and latency, much like the trade-offs weighed when benchmarking cloud data platform performance and cost for production workloads.

What This Means for the Typed Python Ecosystem

The SQLGlot project demonstrates that mypyc is ready for prime time on large, complex codebases, but it demands investment. The team spent significant time debugging mypyc internals and submitting patches. They also had to tighten their own type annotations: mypyc’s strict runtime type enforcement exposed real bugs where annotations didn’t match reality.
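A minimal sketch of the kind of mismatch this catches, assuming a hypothetical keyword table filled in by untyped code. Because the dictionary values are Any, mypy accepts the return statement. Given mypyc’s runtime enforcement of annotations, a compiled build checks the value when converting it to int, whereas the interpreter passes None straight through. The second function spells that check out by hand so the difference is visible without compiling.

```python
from typing import Any

# Hypothetical table populated by untyped code, so its values are Any to mypy.
RAW_KEYWORD_IDS: dict[str, Any] = {"SELECT": 1, "FROM": 2, "LIMIT": None}


def keyword_id(name: str) -> int:
    # Type-checks because the value is Any. Interpreted, this silently
    # returns None for "LIMIT"; a mypyc-compiled build would raise TypeError.
    return RAW_KEYWORD_IDS[name]


def keyword_id_checked(name: str) -> int:
    # Hand-written equivalent of the runtime check, for illustration.
    value = RAW_KEYWORD_IDS[name]
    if not isinstance(value, int):
        raise TypeError(f"keyword {name!r} has non-int id {value!r}")
    return value
```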

This work dovetails with the broader evolution of Python’s type checking landscape. As noted in a recent comparison of Python type checkers, mypy itself has become dramatically faster, with 1.18+ optimizations delivering a ~40% improvement on its own codebase. The performance bar for pure-Python tooling is rising, and compilation is a key lever.

For data engineers, this has immediate implications. Faster SQL parsing means faster data pipeline execution, lower cloud costs, and quicker iteration on transformation logic. It enables more aggressive use of SQL transpilation for vendor portability without a performance penalty. It also validates a development pattern where you can prototype rapidly in Python and later compile for production without a language switch, a pattern that could be applied to other CPU-bound tasks, from custom parsers to financial calculations, or even when executing custom Python logic within Snowflake UDFs.

The Bottom Line: Your Next Performance Play

The SQLGlot team’s journey offers a clear blueprint:

- Start with type annotations. You need them for mypyc to work effectively. This is increasingly non-negotiable for serious Python code anyway.

- Profile, then compile. They targeted the hottest modules first: tokenizer, parser, core AST. Leave infrequently used code as pure Python.

- Plan for a hybrid world. Some users can’t or won’t install C extensions. Maintain pure Python as a fully functional fallback.

- Be prepared to contribute. For a large project, you will likely hit compiler bugs or missing optimizations. Fixing them upstream benefits the entire ecosystem.

- Optimize for the compiler. Small changes yield big wins: using sentinel values instead of Optional, native i64 integers for loop counters, inlining generator-based traversals, and pre-building dispatch dictionaries.
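The four compiler-friendly patterns from the last bullet can be sketched together on a hypothetical Node class (not SQLGlot’s Expression API). i64 is the native integer type from mypy_extensions; it falls back to plain int so the sketch also runs interpreted. The sentinel, explicit stack, and module-level dispatch table are illustrative choices, not code taken from SQLGlot.

```python
from typing import Callable, Final

try:
    from mypy_extensions import i64  # native 64-bit integer type for mypyc
except ImportError:  # plain int keeps the sketch runnable when interpreted
    i64 = int  # type: ignore[misc, assignment]

# Sentinel of the same type as real values, instead of Optional[str].
NO_ALIAS: Final = ""


class Node:
    """Hypothetical AST node used only for this sketch."""

    def __init__(self, kind: str, children: list["Node"], alias: str = NO_ALIAS) -> None:
        self.kind = kind
        self.children = children
        self.alias = alias


def count_nodes(root: Node) -> i64:
    # Explicit stack instead of a recursive generator: no generator objects
    # to allocate, and the counter can stay a native integer when compiled.
    count: i64 = 0
    stack = [root]
    while stack:
        node = stack.pop()
        count += 1
        stack.extend(node.children)
    return count


def _gen_column(node: Node) -> str:
    return "col" if node.alias == NO_ALIAS else f"col AS {node.alias}"


def _gen_select(node: Node) -> str:
    return "SELECT " + ", ".join([generate(child) for child in node.children])


# Dispatch table built once at import time, so each call is a single dict
# lookup rather than, say, a per-call getattr on a formatted method name.
DISPATCH: Final[dict[str, Callable[[Node], str]]] = {
    "column": _gen_column,
    "select": _gen_select,
}


def generate(node: Node) -> str:
    return DISPATCH[node.kind](node)


if __name__ == "__main__":
    tree = Node("select", [Node("column", [], "a"), Node("column", [])])
    print(generate(tree), count_nodes(tree))  # SELECT col AS a, col 3
```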

The 5x parsing speedup isn’t just a number; it’s evidence that the “Python is slow” narrative is increasingly nuanced. For typed, CPU-bound, non-numeric code, mypyc offers a legitimate path to near-native speeds while staying within the Python ecosystem. You no longer have to choose between developer velocity and runtime performance: you can have both, and in the process you’ll likely end up with cleaner, more correct code.

The next time you’re optimizing a data pipeline bottleneck, remember: the answer might not be a full rewrite. It might just be pip install "your-library[c]". For teams that have wrestled with the complexities of integrating Python drivers with SQL Server connections, this approach offers a refreshing alternative, improving what you have rather than replacing it.