The $4,000 Question: Can Anyone Still Afford to Run LLMs Locally?

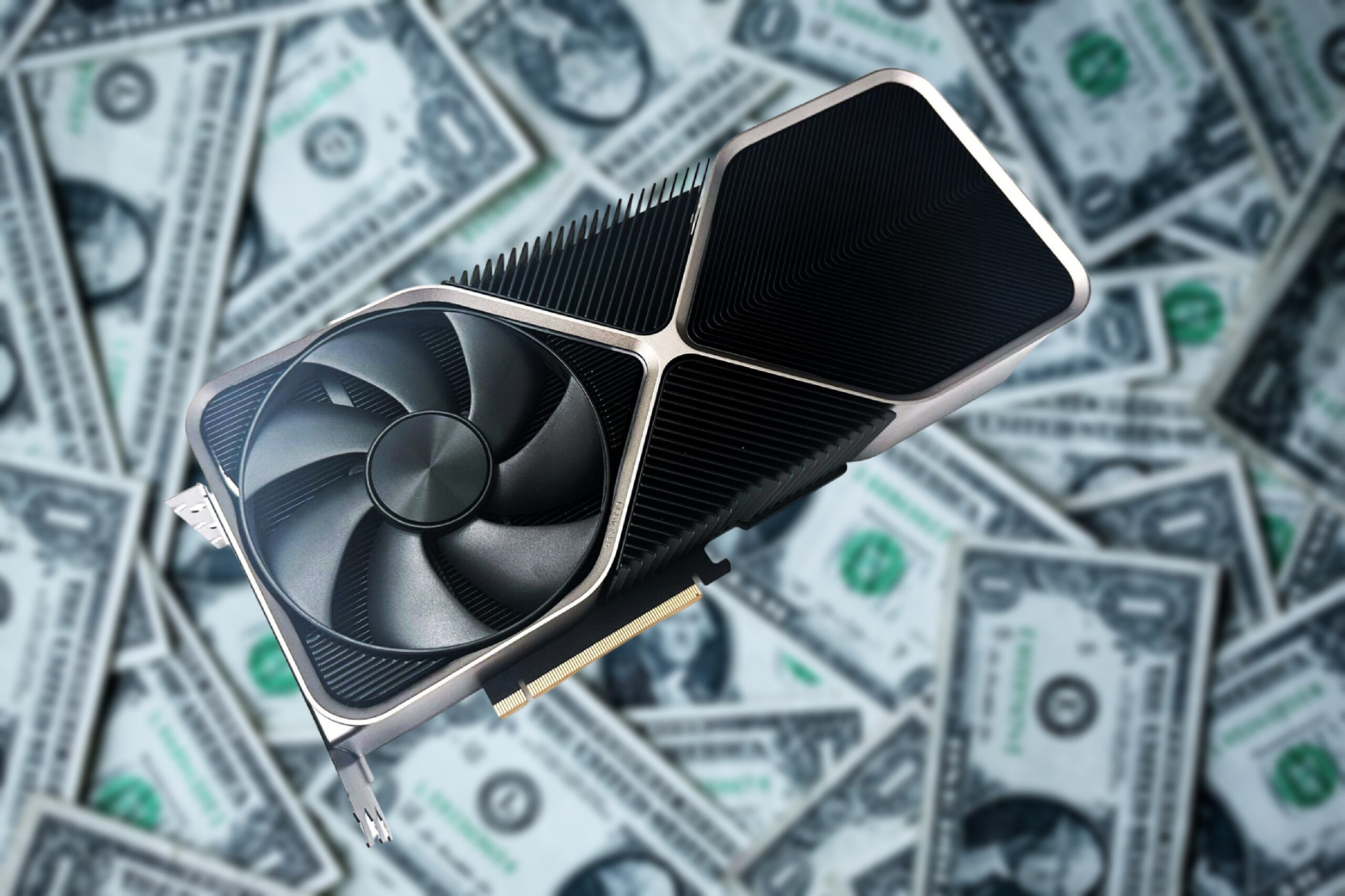

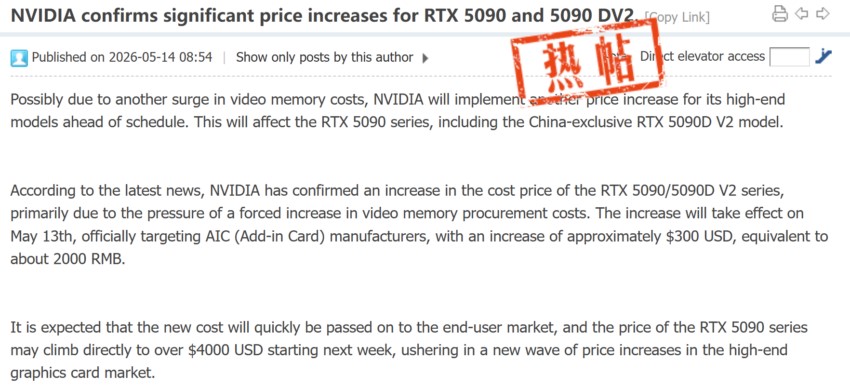

Jensen Huang’s “the more you buy, the more you save” quip feels less like a charming slogan and more like a brutal economic reality for anyone trying to deploy AI locally. The floor is collapsing beneath the idea of affordable, on-premise AI development. While headlines scream about another $300 price hike for RTX 5090 board partners due to GDDR7 shortages, a quieter, more profound shift is happening: the professional workstation GPU is becoming a viable, albeit expensive, lifeline for developers who can’t stomach the cloud bill or tolerate API latency.

This isn’t just about gamers getting priced out. This is the systematic pricing-out of an entire generation of developers and startups who hoped to run their own models. The choice is no longer between a $1,600 enthusiast card and a $3,000 Titan, it’s between a $4,000+ paperweight and a monthly subscription to a cloud provider’s API that you don’t control.

The GDDR7 Squeeze: From Shortage to Shakedown

Let’s be clear: the “GDDR7 shortage” isn’t an act of God. It’s a direct, predictable consequence of hyperscalers vacuuming up memory production for their AI data centers. As one industry report details, NVIDIA is reportedly preparing to increase the price of its GeForce RTX 5090 and RTX 5090D V2 GPUs by $300 for its add-in card (AIC) partners, a cost they will inevitably pass on. These chips were already selling for over double their $1,999 MSRP. On Western retailers like Newegg, prices regularly exceed $4,000.

The math is simple: If the street price of an RTX 5090 is already $3,799 (as per Tom’s Hardware’s latest tracking), another $300 hike from manufacturers could push final retail tags toward the $4,500-$5,000 mark. This isn’t a premium, it’s a barrier to entry.

For AI developers, VRAM is the currency. You need enough to load your model and its context. The RTX 5090’s 32GB of GDDR7 is a sweet spot for many quantized 70B-parameter models or full-precision 13B models. But at $5,000, the return on investment gets murky fast. A Claude API habit for a working developer easily runs $50-100/month. At that rate, it would take over four years of heavy API usage to justify the GPU’s upfront cost, ignoring electricity and the fact that hardware depreciates while cloud APIs get faster and cheaper.

Enter the Prosumer Pivot: The RTX 5000 Pro (48GB) as an Unlikely Hero

Just as the RTX 5090 flies out of reach, its professional sibling, the RTX 5000 Pro with 48GB of VRAM, is having a moment. On paper, it shouldn’t win. It’s based on a cut-down professional Ada Lovelace architecture, not the bleeding-edge Blackwell of the 5090. It’s not sold as a gaming or even a “consumer AI” card.

But in the r/LocalLLaMA trenches, the calculus is different. One user, with “ZERO experience with PCs”, shared their journey. Their $5,600 total system build (including the $4,300 GPU) was chosen over a Mac Studio because the latter’s prompt processing speeds for large models were a dealbreaker. The result? They’re getting “up to 80 tokens/sec in Text Generation (more like 50/60 for very big prompts) which is phenomenal. But most importantly I’m getting 4400 tokens per second in Prompt Processing!”

This is the trade-off laid bare. The RTX 5000 Pro has more VRAM (48GB vs 32GB), draws half the power (285W vs 575W), and in a local inference setup optimized for throughput, it delivers a developer experience that arguably beats renting time on distant servers. It can fit a 27B-parameter model at FP8 precision with a 200k token full-precision cache. For developers working with code or documents, that context window is gold.

The sentiment on forums reflects this pivot: “Two 5090s would definitely beat this. But it would cost significantly more, it would be crazy noisy and tear a hole in my pocket in electricity bills.”

The Hard Economics of Local vs. Cloud Versus Pro

Let’s run the numbers beyond the sticker shock.

- Cloud Rental: Per GetDeploying’s data, on-demand RTX 5090 instances now average $1.10/hr, a 23% increase since May 2025. Running one 24/7 for a month costs roughly $792. A reserved instance might cut that to ~$446/month.

- RTX 5090 Purchase: At a speculated $4,500 street price, the break-even point versus 24/7 cloud rental is about 5-10 months, not including electricity (~$40/month at 575W). But you own an asset, and your data never leaves your desk.

- RTX 5000 Pro Purchase: At ~$4,300 for the GPU alone, plus another ~$1,300 for the rest of a capable system, your total capital outlay is higher. But you get 50% more VRAM, lower power draw, and drivers/software stacks optimized for stability in professional workloads, not gaming frame times. For a small studio or serious researcher, this starts to look like a rational, if painful, capital expenditure.

This crisis discusses computational costs passed to startups in a very tangible way, a theme explored in our analysis of how an 8B model humiliated Llama 4’s 402B beast. The race for bigger models creates a hardware demand that prices out the very innovators who could make them useful.

Beyond the Flagship: The Wider GPU Market Carnage

The pricing contagion isn’t isolated to the halo product. Tom’s Hardware’s GPU price index shows the ripple effect. A GeForce RTX 5070 Ti, with an MSRP of $749, now has a “best US price” of $979. The Radeon RX 9070 XT ($599 MSRP) is at $709. Even the ostensibly budget-friendly $249 RTX 5050 is selling for $289.

The message from the market is unambiguous: any GPU with sufficient VRAM for meaningful AI work is being re-priced for an enterprise budget. The “AI-driven pricing crisis” isn’t a future threat, it’s the present reality. This describes cloud bill shocks driving alternative infrastructure choices, a pattern seen in the broader cloud exodus where ‘lift and shift’ becomes ‘lift and bail’.

The Inevitable Stratification of AI Development

- The Cloud-Captive Majority: Individual developers, startups, and academics will be forced onto cloud APIs and rented instances. They’ll trade capital expense for operational expense, control for convenience, and data privacy for accessibility. They’ll be subject to the whims of API pricing and model availability.

- The On-Premise Elite: Well-funded labs, corporations with strict data governance, and developers for whom latency or cost predictability is critical will swallow the pill and invest in professional-grade hardware like the RTX 5000 Pro or used server cards. Their development will be private, predictable, and insulated from external service changes.

- The Efficiency Innovators: A small but crucial group will focus on model optimization, highlights efficient models reducing hardware dependency, and novel architectures that do more with less, as seen in the rise of compact but capable models like Maincoder-1B. Their work is the only long-term counterbalance to the hardware oligopoly.

So, What’s a Developer to Do?

If you’re staring down a project that needs local LLM capability, your decision tree is now brutally economic.

- Re-evaluate Your Actual Needs: Do you really need 70B parameters? Can a well-tuned 7B or 13B model, perhaps one of the new wave of “coding specialist” models, get the job done on a cheaper, last-gen 24GB card?

- Seriously Consider Cloud: For intermittent or experimental workloads, cloud is almost certainly cheaper. But analyze cloud infrastructure cost burdens carefully, as unpredictable scaling can evaporate savings, a lesson from our deep dive into Databricks Serverless Jobs.

- Look Beyond the Geforce Brand: The RTX 5000 Pro, the A6000 Ada (48GB), and even older used server GPUs (like the A100 40GB) can offer better VRAM-per-dollar for inference than a scalped 5090.

- Embrace the Hybrid Model: Use a local, smaller model for rapid iteration, prototyping, and privacy-sensitive tasks. Offload the heavy, complex, or massive-context jobs to a cloud API when needed. This hybrid approach, as many are discovering, offers the best balance of cost, control, and capability.

The dream of powerful, personal AI is receding into a paywalled horizon. The RTX 5000 Pro’s sudden relevance isn’t a victory, it’s a symptom. It’s the market telling us that the “consumer” segment for high-VRAM GPUs is over. AI development hardware is now firmly in the professional workstation category, with prices to match. The question is no longer which GPU to buy, but whether you can afford to play the game at all.