Enterprise data teams are facing an architectural ultimatum: consolidate everything under Microsoft Fabric’s SaaS umbrella or bet on Databricks’ lakehouse flexibility. As vendors consolidate workflows into “unified” platforms, practitioners are caught between seamless Power BI integration and mature Spark-based engineering. This analysis cuts through the marketing to examine the technical friction, semantic layer incompatibilities, and hidden costs driving the latest cloud data wars.

When Management Chooses Fabric (And You Can’t Stop Them)

Picture this: You’re a data engineer at a large enterprise with terabytes of data and heavy transformations. Your team has been limping along on legacy Azure Synapse, and you’ve been quietly building the case for Databricks: mature, multi-cloud, and built for enterprise scale. Then the email arrives: “Strategic alignment with Microsoft Fabric, Q3 rollout.” Your stomach drops.

This scenario is playing out across enterprises right now, driven by Microsoft sales cycles and the siren song of “one platform for everything.” The frustration is palpable among practitioners who see the technical debt accumulating before the migration even starts. Fabric is a massive leap forward from Synapse. Even so, the consensus among early adopters is that it “mostly works with some very jagged edges”, which is a polite way of saying you’ll be filing support tickets at 2 AM when your CI/CD pipeline inexplicably fails.

The maturity gap is real. Industry sentiment suggests Databricks remains significantly more suited for enterprise-scale engineering, with established patterns for software development lifecycle (SDLC) management through Databricks Asset Bundles (DABs). Meanwhile, Fabric’s developer experience, particularly around notebooks, capacity dashboards, and CI/CD tooling, still feels like a beta release wrapped in enterprise pricing.

The Semantic Layer Standoff

Here’s where the technical rivalry gets spicy. Microsoft has spent nearly three decades refining the Analysis Services engine behind Power BI’s semantic layer, and they’re not about to let Databricks muscle in. Chris Webb of the Fabric CAT team has published a detailed analysis of the problem. He concludes that Power BI’s semantic models fundamentally cannot work properly with third-party semantic layers, including Databricks’ metric views.

The architectural reality is brutal: you cannot stack semantic models. Power BI assumes it owns the business logic, calculated via DAX, and generates SQL queries against underlying data. When you try to layer Databricks’ semantic layer underneath, you get “jagged” aggregation errors, broken subtotals, and performance nightmares. DirectQuery mode, the workaround everyone tries, runs slower, costs more, and still can’t properly translate complex Databricks metrics into Power BI visuals.
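Broken subtotals are easiest to see with a ratio metric such as average order value. The sketch below is minimal and rests on a few assumptions. A hypothetical upstream metric layer returns one finished value per region. A downstream BI layer can see only those values and re-aggregates them for the grand total. The data and function names are invented for illustration. Neither product’s API is involved.

```python
"""Why re-aggregating a non-additive metric breaks totals.

Hypothetical data: aov_by_region() stands in for an upstream semantic layer
that has already computed average order value (AOV) per region.
"""

# Raw order facts as (region, order_amount); invented numbers.
ORDERS = [
    ("EMEA", 100.0),
    ("EMEA", 300.0),
    ("AMER", 50.0),
    ("AMER", 50.0),
    ("AMER", 50.0),
    ("AMER", 50.0),
]


def aov_by_region(orders):
    """Upstream layer: AOV = sum(amount) / count(orders), per region."""
    totals, counts = {}, {}
    for region, amount in orders:
        totals[region] = totals.get(region, 0.0) + amount
        counts[region] = counts.get(region, 0) + 1
    return {region: totals[region] / counts[region] for region in totals}


def correct_total_aov(orders):
    """Correct grand total: recompute the ratio from the base facts."""
    return sum(amount for _, amount in orders) / len(orders)


def naive_total_aov(per_region):
    """Downstream layer that only sees finished per-region values and
    averages them again (an 'average of averages')."""
    return sum(per_region.values()) / len(per_region)


if __name__ == "__main__":
    per_region = aov_by_region(ORDERS)
    print(per_region)                   # {'EMEA': 200.0, 'AMER': 50.0}
    print(naive_total_aov(per_region))  # 125.0 (wrong)
    print(correct_total_aov(ORDERS))    # 100.0
```

The downstream tool needs the additive ingredients (sum and count) so it can compute the ratio itself. That is the business logic Power BI expects to own in DAX.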

This forces an uncomfortable binary choice. If your end users live in Excel and Power BI (and let’s face it, 35 million of them do), Fabric’s semantic models and Direct Lake storage offer a seamless experience that Databricks simply cannot match. But if your data engineers need reliable Spark clusters, Delta Lake optimization, and cross-cloud portability, Fabric’s limitations become blockers. For teams weighing the architectural trade-offs with Databricks, this isn’t just about technology; it’s about who controls the metadata.

The “Third Platform” Trap

Adding insult to injury, enterprises already running AWS and SAP are now being sold Fabric as the “missing piece” of their data strategy. Strategic analysis argues this creates a nightmare scenario of platform duplication:

- Storage fragmentation: Data duplicated between S3 and OneLake

- Governance sprawl: Separate security logic in Fabric/Purview vs. existing catalog tools

- Compute abstraction hell: Different scaling models, different capacity metrics, different billing surprises

- Semantic silos: Datasphere vs. Fabric semantic models, requiring manual reconciliation
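The “manual reconciliation” in the last bullet can be partly automated. The sketch below is minimal and assumes each platform can export the value of each shared metric for the same filter context. The metric names, values and tolerance are hypothetical. No Datasphere or Fabric API is used.

```python
"""Flag metrics whose values disagree between two semantic layers.

Hypothetical inputs: dicts of metric name -> value, as each platform
reports it for the same filter context (e.g. one fiscal year, all regions).
"""

import math


def reconcile(layer_a, layer_b, rel_tol=1e-6):
    """Return (missing_in_a, missing_in_b, mismatched) for two metric dicts.

    mismatched maps metric name -> (value_a, value_b) for metrics present
    in both layers whose values differ beyond the relative tolerance.
    """
    missing_in_a = sorted(set(layer_b) - set(layer_a))
    missing_in_b = sorted(set(layer_a) - set(layer_b))
    mismatched = {
        name: (layer_a[name], layer_b[name])
        for name in sorted(set(layer_a) & set(layer_b))
        if not math.isclose(layer_a[name], layer_b[name], rel_tol=rel_tol)
    }
    return missing_in_a, missing_in_b, mismatched


if __name__ == "__main__":
    # Hypothetical exports from each platform's semantic layer.
    datasphere = {"net_revenue": 1_250_000.0, "order_count": 8_400, "aov": 148.81}
    fabric = {"net_revenue": 1_250_000.0, "order_count": 8_392, "margin_pct": 0.31}
    print(reconcile(datasphere, fabric))
    # (['margin_pct'], ['aov'], {'order_count': (8400, 8392)})
```

A script like this only detects drift. Someone still has to decide which definition is the source of truth, and that decision is the real cost of running semantic silos.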

Databricks’ ace in the hole here is genuine multi-cloud portability. It runs natively on AWS, Azure, and GCP with the same APIs, same Delta Lake format, and same Unity Catalog. Fabric, by contrast, is Azure-only SaaS that replicates functionality you likely already have in AWS, effectively vendor-lock-in-as-a-service disguised as convenience.

The hidden costs pile up fast. When you migrate legacy Hadoop systems to Databricks, at least you’re moving toward an open lakehouse standard. Migrating to Fabric often means accepting proprietary storage (OneLake), proprietary catalogs, and a hard dependency on Power BI for consumption. These decisions look cheap today but turn into expensive extraction costs tomorrow.

Maturity vs. Velocity: By The Numbers

The head-to-head comparison data from SoftwareReviews paints a nuanced picture. Databricks edges ahead with an 8.7 composite score versus Fabric’s 8.4, but the emotional footprint tells a more interesting story:

| Metric | Microsoft Fabric | Databricks |
|---|---|---|
| Likeliness to Recommend | 90% | 86% |
| Plan to Renew | 100% | 96% |
| Cost Satisfaction | 89% | 77% |
| Overall Capability | 82% | 79% |

Fabric’s perfect renewal rate suggests sticky lock-in: customers can’t easily leave once they’re embedded in the Power BI ecosystem. Databricks’ lower cost satisfaction (77% vs. 89%) reflects premium pricing. Engineers accept that premium because the platform actually works for complex transformations, without the “workaround fatigue” plaguing Fabric adopters.

The developer experience gap is particularly acute around CI/CD. While Databricks offers mature, officially supported asset bundles and Git integration, Fabric’s deployment pipelines and version control remain frustratingly immature, despite recent improvements to the Fabric CLI. If you’re managing infrastructure as code, this matters more than any shiny Excel integration feature.
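On the Databricks side, a CI job usually comes down to a couple of CLI calls against a bundle defined in `databricks.yml`. The sketch below is minimal and makes several assumptions:

- The Databricks CLI, including its `bundle` command group, is installed and authenticated on the runner.
- The bundle defines a target named `dev`.
- The script name, the `--dry-run` wrapper and the default target are hypothetical illustrations, not CLI features.

```python
"""Sketch of a CI step that validates, then deploys, a Databricks Asset Bundle.

Assumes the Databricks CLI with the `bundle` command group is on PATH and
already authenticated (for example via DATABRICKS_HOST / DATABRICKS_TOKEN),
and that databricks.yml defines the requested target.
"""

import argparse
import subprocess
import sys


def bundle_steps(target):
    """Commands to run in order; validation fails fast before any deploy."""
    return [
        ["databricks", "bundle", "validate", "--target", target],
        ["databricks", "bundle", "deploy", "--target", target],
    ]


def run_steps(steps, dry_run=False):
    """Run each command, stopping at the first non-zero exit code."""
    for cmd in steps:
        print("+", " ".join(cmd))
        if dry_run:
            continue  # hypothetical wrapper feature: print only
        result = subprocess.run(cmd, check=False)
        if result.returncode != 0:
            return result.returncode
    return 0


if __name__ == "__main__":
    parser = argparse.ArgumentParser(description="Validate and deploy a bundle.")
    parser.add_argument("--target", default="dev", help="bundle target (hypothetical default)")
    parser.add_argument("--dry-run", action="store_true", help="print commands only")
    args = parser.parse_args()
    sys.exit(run_steps(bundle_steps(args.target), dry_run=args.dry_run))
```

Running it as `python deploy_bundle.py --target dev --dry-run` prints the two commands without touching a workspace. Because the whole deployment is declared in a versioned file, the pipeline stays this small.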

The Hybrid Fantasy (And Why It Fails)

“But why not use both?” It’s the most common response from architects trying to dodge the political bullet. Use Databricks for heavy ETL and Spark jobs, Fabric for semantic modeling and Power BI consumption. Best of both worlds, right?

Wrong. The tooling tax is real. Engineers now must master two different notebook environments, two different security models, two different capacity monitoring dashboards, and two different billing alarms. As one data strategist noted, this creates “cognitive and operational drain” that fragments team expertise and slows incident response.

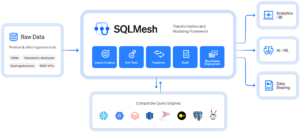

The interoperability story isn’t there yet. Azure Databricks may be a first-party Azure service, but it doesn’t magically integrate with Fabric’s semantic layer. You’re still pushing Parquet files around or paying double for storage. For teams modernizing data pipeline orchestration, adding a second platform doesn’t solve complexity; it multiplies it.

The Lock-in Calculus

Let’s talk about the elephant in the room: neither platform is truly “open”, but their cages have different shapes.

Fabric’s lock-in is vertical and deep. Once you adopt OneLake and Power BI semantic models, you’re in the Microsoft stack for the long haul. The “openness” claims about XMLA support are technical half-truths. Yes, the protocol is open, but try connecting a third-party semantic model to Power BI and watch the aggregation logic break. As industry observers have noted, this creates a “walled garden”: you can look at the open standards, but you can’t actually leave with your business logic intact.

Databricks’ lock-in is horizontal and runtime-based. They champion Delta Lake, an open-source storage format. The actual compute optimizations, Unity Catalog features, and serverless SQL endpoints, however, are proprietary Databricks magic. You’re free to move your data to Amazon Athena or BigQuery, but you’ll lose the query performance and governance features that justified the Databricks premium in the first place.
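The open half of that bargain shows up in the format itself. A Delta table is a set of Parquet data files plus a `_delta_log/` directory of newline-delimited JSON commit files. Any engine can replay those commits to work out which files make up the current table, and so can a few lines of standard-library code.

The sketch below is deliberately simplified. It replays only numbered JSON commits and ignores checkpoints, log compaction, deletion vectors and metadata actions, all of which a real reader must handle.

```python
"""Reconstruct the live file set of a Delta table from its JSON commit log.

Simplified on purpose: replays only numbered *.json commits and ignores
checkpoints, log compaction files, deletion vectors, and metadata actions.
"""

import json
import tempfile
from pathlib import Path


def active_files(table_path):
    """Replay add/remove actions in _delta_log and return live data files."""
    log_dir = Path(table_path) / "_delta_log"
    live = set()
    # Commits are named by zero-padded version, so sorting by name sorts by version.
    for commit in sorted(log_dir.glob("*.json")):
        if not commit.stem.isdigit():
            continue  # skip anything that is not a plain numbered commit
        for line in commit.read_text().splitlines():
            if not line.strip():
                continue
            action = json.loads(line)  # one action object per line
            if "add" in action:
                live.add(action["add"]["path"])
            elif "remove" in action:
                live.discard(action["remove"]["path"])
    return sorted(live)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log = Path(tmp) / "_delta_log"
        log.mkdir()
        # Toy log; real add/remove actions carry more fields than "path".
        # Version 0 writes two files; version 1 compacts them into one.
        (log / f"{0:020d}.json").write_text(
            json.dumps({"add": {"path": "part-000.parquet"}}) + "\n"
            + json.dumps({"add": {"path": "part-001.parquet"}}) + "\n"
        )
        (log / f"{1:020d}.json").write_text(
            json.dumps({"remove": {"path": "part-000.parquet"}}) + "\n"
            + json.dumps({"remove": {"path": "part-001.parquet"}}) + "\n"
            + json.dumps({"add": {"path": "part-002.parquet"}}) + "\n"
        )
        print(active_files(tmp))  # ['part-002.parquet']
```

The bytes leave with you. What doesn’t leave is everything layered on top: governance in Unity Catalog, engine optimizations, and serverless SQL behavior.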

The cynical take? Fabric is for companies where the BI team has more political power than the data engineering team. Databricks is for companies where the engineers still have a seat at the table. Both are responding to industry shifts in serverless data engineering by trying to own the entire stack, just with different starting points.

The Verdict: Pick Your Poison Strategically

If your users primarily consume data through Excel pivot tables and Power BI dashboards, and your transformations are moderate, Fabric is probably the right call despite its jagged engineering edges. The semantic model integration and OneLake convenience will deliver faster time-to-insight for business users.

If you’re running heavy Spark workloads, need cross-cloud portability, or have complex machine learning pipelines, Databricks remains the only mature choice. Fabric’s Spark implementation (via Synapse Runtime) isn’t in the same league for enterprise-scale transformations.
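The two rules of thumb above fit in a simple checklist. This is a deliberately crude sketch: the `Workload` fields and the precedence order are a hypothetical encoding of this article’s verdict. It is not a scoring model from either vendor or from SoftwareReviews.

```python
"""Encode the article's rule-of-thumb platform verdict as a checklist."""

from dataclasses import dataclass


@dataclass
class Workload:
    consumers_mostly_excel_power_bi: bool  # Excel pivots / Power BI dashboards
    heavy_spark_transformations: bool
    needs_cross_cloud: bool
    complex_ml_pipelines: bool


def recommend(w: Workload) -> str:
    """Return a default platform per the verdict, or no clear default."""
    engineering_heavy = (
        w.heavy_spark_transformations or w.needs_cross_cloud or w.complex_ml_pipelines
    )
    if engineering_heavy:
        # Treated as blockers for Fabric in the verdict, so they win.
        return "Databricks"
    if w.consumers_mostly_excel_power_bi:
        # BI-centric consumers with moderate transformations.
        return "Fabric"
    return "No clear default: evaluate on who consumes the data"


if __name__ == "__main__":
    print(recommend(Workload(True, False, False, False)))  # Fabric
    print(recommend(Workload(True, True, False, False)))   # Databricks
```

Engineering requirements take precedence because the verdict treats them as hard blockers for Fabric. BI convenience, by contrast, is a preference.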

The real mistake is letting vendor sales teams make this decision for you during a “strategic alignment” meeting. Evaluate based on who consumes your data, not who stores it. And if someone suggests “just using both”, make them diagram the data lineage and governance overhead on a whiteboard first, then watch them sweat.

The cloud data wars aren’t about technology superiority. They’re about platform consolidation, ecosystem gravity, and who gets to invoice your infrastructure budget. Choose wisely, because switching costs are measured in years, not quarters.