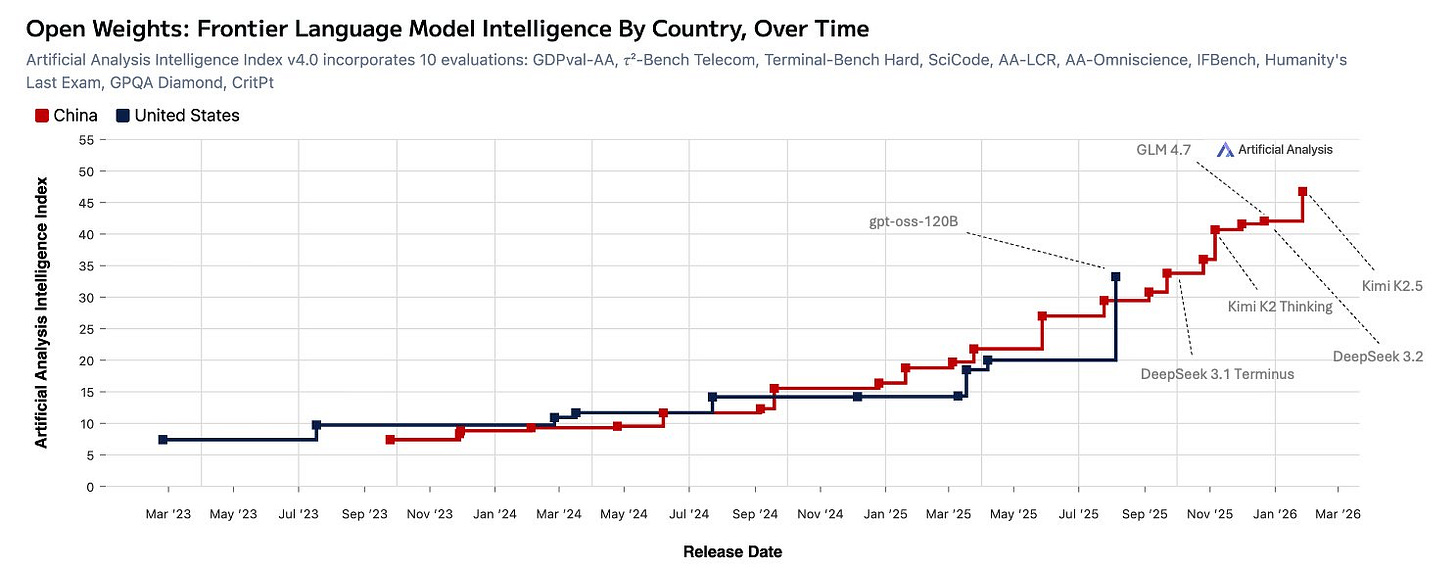

Kimi K2.5: The 1T Parameter ‘Open’ Model That Requires a Data Center in Your Basement

The AI community has a new obsession, and for once, the hype might be partially justified, if you have a spare room in your house for server racks. Kimi K2.5, Moonshot AI’s latest open-weights model, arrives with a staggering 1 trillion parameters and claims of state-of-the-art performance across coding, vision, and agentic tasks. But the real controversy isn’t just about benchmark numbers, it’s about what “open” and “accessible” mean when your model requires hardware that costs more than a luxury car.

The Spec Sheet That Stops Conversations

Let’s start with the numbers that matter. Kimi K2.5 is built on a Mixture-of-Experts (MoE) architecture that activates 32 billion parameters per forward pass from its total pool of 1 trillion. It features 384 experts, selects 8 per token, and runs a native INT4 quantization scheme that brings the model down to a “mere” 600GB for the full version. Through Unsloth’s aggressive 1.8-bit dynamic quantization, you can squeeze it into 247GB of unified disk+RAM+VRAM.

Yes, you read that correctly. The compressed version requires nearly a quarter-terabyte of memory.

The research team behind Unsloth has been remarkably transparent about the hardware reality. Their documentation states the minimum requirement as disk space + RAM + VRAM ≥ 247GB, with the caveat that “it will be much slower” if you’re not running it on a B200 or equivalent. For context, that’s roughly $10,000 worth of RAM alone, not counting the GPUs, storage, or the industrial-grade cooling system you’ll need to prevent your basement from becoming a sauna.

The Benchmark Claims vs. The Bedroom Reality

Moonshot AI isn’t shy about their achievements. According to their technical documentation, Kimi K2.5 achieves:

- 76.8% on SWE-Bench Verified, competitive with Claude Opus 4.5’s 80.9%

- 50.7% on SWE-Bench Pro, trailing GPT-5.2’s 55.6%

- 85.0% on LiveCodeBench v6, approaching Gemini 3 Pro’s 87.4%

- 78.5% on MMMU-Pro, establishing open-source leadership in multimodal understanding

These numbers place Kimi K2.5 firmly in the conversation with frontier models from Anthropic, OpenAI, and Google. The model’s architecture, continual pretraining on 15 trillion mixed visual and text tokens with a 400M parameter MoonViT vision encoder, enables native multimodal capabilities that include video understanding and code generation from UI screenshots.

But here’s where the controversy begins. The state-of-the-art performance in real coding tasks and benchmark evaluations isn’t just about raw scores. As developers who’ve actually deployed these models know, SWE-Bench numbers can be misleading. The difference between a model that scores 76.8% and one that scores 80.9% often comes down to how well it handles edge cases, follows complex instructions, and integrates with existing toolchains.

The community is already raising red flags. One developer noted that Kimi K2.5 “sticks to its signature style of absolute prompt adherence like a cold robot”, while another found its creative writing attempts to be “brute forcing via logic”, the model thinks “This character SHOULD say that, that fits that trope” but executes poorly. This suggests that while the benchmark numbers look impressive, the real-world user experience may feel more like arguing with a pedantic compiler than collaborating with a helpful pair programmer.

The Quantization Illusion: When 1.8-Bit Isn’t Small Enough

Unsloth’s achievement in creating a 1.8-bit quantization that allegedly “passes their tests” is technically impressive. They’ve managed to compress a 1T parameter model into something that theoretically fits on consumer hardware. But as the r/LocalLLaMA community quickly pointed out, “fits” is doing a lot of heavy lifting here.

The quantized model still requires:

– 240GB of disk space for the smallest 1-bit version

– 256GB of RAM to achieve ~10 tokens/second

– A 24GB GPU if you want to offload some layers for modest acceleration

For comparison, the entire GLM-4.7-Flash model, another strong open-weight contender, can run comfortably on a single RTX 4090 with 24GB VRAM and deliver 20+ tokens/second. The local execution of agentic workflows with efficient reasoning models has become a practical reality for many developers, not a hardware flexing contest.

The performance trade-offs are equally stark. When one brave soul managed to get Kimi K2.5 running on dual Strix Halo systems, they reported 12.5 tokens/second with no context, barely interactive for any serious development work. Another user calculated that running the model on RAM alone would yield approximately 0.00000005 tokens per second, which makes you wonder if carrier pigeon might be faster.

The API Pricing Paradox

For those who can’t afford a second mortgage for hardware, Moonshot offers API access at what appears to be competitive rates: $12/month for the low tier. But this is where the real-world cost efficiency of AI models compared to per-token pricing becomes painfully relevant.

Kimi’s pricing structure uses call-based limits rather than token-based quotas. This means each API call counts against your monthly allowance, regardless of whether you’re sending 20K tokens or 200. For developers using Kimi Code CLI, which makes 5-10 turns per question reading files, analyzing code, and making changes, those 2000 “requests per week” disappear alarmingly fast. One user reported that “one large-ish prompt set me back 5 of those 2000 tool calls”, making the effective cost per interaction significantly higher than advertised.

This pricing model reveals a deeper tension in the AI market. While Chinese labs like Moonshot, DeepSeek, and Qwen are driving down per-token costs to as low as $0.15 per million tokens, their API architectures often include limitations that make them more expensive in practice than Western competitors charging $3+ per million tokens. The API pricing lie isn’t just about the headline numbers, it’s about how usage patterns translate to real bills.

The Agent Swarm: Brilliant Architecture or Marketing Hype?

Perhaps the most ambitious feature of Kimi K2.5 is its “Agent Swarm” capability, which orchestrates up to 100 sub-agents in parallel to tackle complex tasks. The benchmark results are impressive: 74.9% on BrowseComp with context management, climbing to 78.4% with the Agent Swarm approach. Moonshot claims this delivers 4.5x faster performance on complex workflows.

But the community is rightfully skeptical. As one developer put it, “I fear models that score well on LMArena, this is where we got all the sycophancy from and the emojis sprinkled all over the code.” The challenges in multi-agent AI collaboration and system design are well-documented. Simply spinning up 100 instances of the same model and having them vote on solutions isn’t collaboration, it’s statistical averaging with extra steps.

The technical report (when released) will need to show how Kimi’s swarm actually learns to self-direct, decompose tasks meaningfully, and coordinate without predefined roles. Until then, it’s fair to question whether this is a genuine architectural breakthrough or just the AI equivalent of throwing more cores at a badly parallelized problem.

The Scaling Laws Debate: Have We Hit the Wall?

During the AMA, the Kimi team addressed the elephant in the room: scaling laws. Their candid assessment was that “the amount of high-quality data does not grow as fast as the available compute”, meaning conventional next-token prediction on internet data brings diminishing returns. Their solution? Test-time scaling through agent swarms, effectively trading inference compute for training compute.

This is a fascinating admission that mirrors broader industry conversations. If the Kimi team’s assessment is correct, we’re entering an era where the path to better AI isn’t just bigger models, but smarter orchestration of existing capabilities. The parallel to human cognition is obvious: one genius working alone is often outperformed by a well-coordinated team of specialists.

But this also raises questions about the sustainability of current development trajectories. If every improvement requires 100x more inference compute, we’re accelerating toward a future where AI capabilities are gated not by algorithmic breakthroughs, but by energy availability and hardware manufacturing capacity.

The Verdict: A Technical Marvel with Practical Caveats

Kimi K2.5 represents a genuine technical achievement. Getting a 1T parameter model to run at all in a quantized form is impressive. The multimodal capabilities, generating code from UI screenshots, understanding video workflows, and processing complex documents, push the boundaries of what’s possible with open weights.

However, the model’s practical utility remains questionable for most developers. The hardware requirements place it firmly in the “research toy” or “enterprise data center” category, not the “weekend project” space. The API pricing, while seemingly competitive, includes limitations that may make it more expensive than alternatives for real workflows. And the benchmark claims, while impressive on paper, need real-world validation before we accept them as representative of developer experience.

The most valuable contribution of Kimi K2.5 might not be the model itself, but the conversation it starts. By pushing the boundaries of scale and quantization, Moonshot is forcing the community to confront hard questions: What does “open” mean when the barrier to entry is $50K in hardware? Are we optimizing for the right metrics? And most importantly, is bigger always better, or have we reached the point where intelligence is about orchestration, not just scale?

For now, most developers should probably stick with models they can actually run. The transparent reasoning processes in advanced AI models offered by smaller, more efficient architectures like GLM-4.7-Flash may ultimately prove more valuable than the raw power of a 1T parameter behemoth that thinks at the speed of a sleepy sloth.

The AI revolution won’t be won by the model with the most parameters. It’ll be won by the model that actually fits in your workflow, respects your budget, and delivers consistent, reliable results. Kimi K2.5 is a fascinating glimpse into one possible future of AI. Whether it’s the future remains very much an open question.