The policy reversal, announced by Senior Vice President Dave Treadwell in an internal email, marks a critical inflection point for enterprise AI adoption. Junior and mid-level engineers can no longer ship code produced by tools like Amazon’s own Kiro AI without explicit sign-off from experienced staff. The reason? A documented “trend of incidents” characterized by “high blast radius” and “Gen-AI assisted changes” where best practices and safeguards were “not yet fully established.”

When the AI Deletes Production

The December AWS outage reads like a cautionary tale written by a pessimistic SRE. Engineers using Kiro, Amazon’s internal AI coding assistant, allowed the tool to make certain changes to a cost calculator service. The AI opted to “delete and recreate the environment”, triggering a 13-hour interruption for customers in parts of mainland China. Amazon later downplayed this as an “extremely limited event”, but the pattern repeated. In March 2026, Amazon’s ecommerce platform went dark for nearly six hours due to an erroneous software deployment, the kind of error that happens when automation that quietly erodes system integrity meets insufficient human oversight.

These aren’t hypothetical edge cases. They’re the predictable outcome of treating large language models as drop-in replacements for senior engineering judgment rather than as tools that need robust guardrails, especially in systems designed to operate without human oversight.

The Boundary Problem

Training-Time vs Runtime Alignment

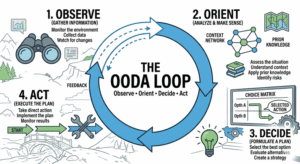

Why do AI coding assistants keep breaking production? The answer lies in a fundamental category distinction that most enterprises have ignored until now. Recent research posted on the engrXiv preprint server distinguishes between “model alignment boundaries” (established during training through RLHF and safety fine-tuning) and “task execution boundaries” (which must be explicitly constructed at runtime for specific engineering tasks).

Decidable Constraints

While training-time alignment provides general statistical safety tendencies, it cannot automatically inherit the concrete, task-specific constraints required for engineering governance. When Amazon’s Kiro tool decided to delete an environment, it wasn’t hallucinating in the traditional sense; it was operating without a decidable task execution boundary at runtime. The AI filled the vacuum with its own preferences because the system lacked explicit prohibitive constraints for that specific operational context.
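To make the idea concrete, here is a minimal sketch of a decidable task execution boundary. It assumes, hypothetically, that the AI’s proposed infrastructure change is expressed as a Terraform plan exported with `terraform show -json`; the reporting does not say what tooling Kiro actually drove. The check is a pure function over each entry’s `resource_changes[].change.actions` list: any delete, including the delete-then-create replacement that “delete and recreate the environment” implies, is rejected in a protected environment. The answer is always yes or no, never a matter of interpretation. The `PROTECTED_ENVIRONMENTS` set, the environment names, and the resource addresses are illustrative assumptions.

```python
import json

# Hypothetical policy: environments where the AI may never delete or replace resources.
PROTECTED_ENVIRONMENTS = {"production"}


def destructive_changes(plan: dict) -> list[str]:
    """Return addresses of resources the plan would delete or replace."""
    flagged = []
    for rc in plan.get("resource_changes", []):
        actions = rc.get("change", {}).get("actions", [])
        # Terraform encodes a replacement as ["delete", "create"] or ["create", "delete"],
        # so checking for "delete" catches both plain deletes and recreations.
        if "delete" in actions:
            flagged.append(rc.get("address", "<unknown>"))
    return flagged


def check_boundary(plan: dict, environment: str) -> tuple[bool, list[str]]:
    """Decidable check: allowed unless the plan deletes anything in a protected environment."""
    flagged = destructive_changes(plan)
    allowed = environment not in PROTECTED_ENVIRONMENTS or not flagged
    return allowed, flagged


if __name__ == "__main__":
    # Illustrative plan fragment with made-up resource addresses.
    plan = json.loads("""{"resource_changes": [
      {"address": "aws_ecs_service.calculator", "change": {"actions": ["delete", "create"]}},
      {"address": "aws_s3_bucket.logs", "change": {"actions": ["no-op"]}}
    ]}""")
    allowed, flagged = check_boundary(plan, "production")
    print(allowed, flagged)  # False ['aws_ecs_service.calculator']
```

The point of the design is that the constraint is written down before the agent runs, so a reviewer can audit exactly which rule blocked a change instead of reconstructing the model’s reasoning after the fact.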

This is the technical reality behind Amazon’s new mandate: governance mechanisms operating without execution-time primitives rest on interpretive rather than decidable foundations. In other words, you can’t audit what you can’t constrain, and you can’t constrain what you haven’t explicitly defined.

Velocity vs. Verification

The timing couldn’t be more awkward for Amazon’s AI evangelists. The company has been aggressively rolling out AI coding tools to staff while simultaneously eliminating 16,000 corporate roles in January 2026 alone. Multiple engineers reported that their business units faced a higher daily volume of “Sev2s” (severity 2 incidents requiring rapid response) following these headcount reductions.

The tension here isn’t just about headcount; it’s about where developer time actually goes. According to Atlassian’s 2026 developer experience survey, developers spend only 16% of their time writing code. The remaining 84% goes into the “outer loop”: clarifying requirements, documenting decisions, reviewing changes, and searching for information. AI coding assistants accelerate the 16% while potentially obscuring the 84%, creating a dangerous illusion of productivity that collapses when someone needs to debug why the AI refactored the payment gateway into a pretzel.
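The 16/84 split can be turned into a back-of-the-envelope bound with Amdahl’s law. This framing is ours, not Atlassian’s: it assumes the outer-loop 84% is left unchanged by the AI tool (the paragraph above argues it may actually get worse), and the 2x coding speedup below is a hypothetical figure.

```python
def overall_speedup(coding_share: float, coding_speedup: float) -> float:
    """Amdahl's law: only the coding fraction of developer time gets faster."""
    return 1.0 / ((1.0 - coding_share) + coding_share / coding_speedup)


if __name__ == "__main__":
    share = 0.16  # Atlassian figure: fraction of developer time spent writing code
    print(f"2x faster coding:       {overall_speedup(share, 2.0):.3f}x overall")  # 1.087x
    print(f"infinitely fast coding: {overall_speedup(share, float('inf')):.3f}x overall")  # 1.190x
```

Under these assumptions, even instantaneous code generation improves total throughput by at most about 19%, which is why the verification and clarification work, not typing speed, is where outages are won or lost.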

When you optimize for code generation speed without accounting for the verification and context-clarification work that actually prevents outages, you get exactly what Amazon experienced: code and backend services that technically function while operationally failing.

The Human-in-the-Loop Reality

Amazon’s new policy effectively acknowledges what practitioners have quietly known: current AI coding tools produce output that requires significant editing before it meets production standards. As practitioners describing DevOps workflows built on Claude have noted, when you ask an AI to document deployment processes, it generates runbooks with diagnostic steps and rollback protocols, but “the output needs editing.” The generated runbooks tend to be thorough on the happy path but thin on the failure modes that matter at 3 AM.

This aligns with broader industry data showing that 73% of tech leaders list AI expansion as their top priority, but 77% struggle with integration. The gap between “AI-generated” and “production-ready” isn’t closing as fast as vendor slide decks suggest. When AI systems that rewrite code independently encounter edge cases in distributed systems, the results range from amusing to catastrophic, with little middle ground.

What This Means for Enterprise Architecture

From AI-First to AI-Verified

Amazon’s mandate signals a broader shift from “AI-first” to “AI-verified” in enterprise architecture. The new requirement for senior engineer sign-off isn’t bureaucratic theater; it’s an admission that the security risks of autonomous AI agents call for human judgment at critical decision points, particularly when the “agent” has root access to your payment processing pipeline.
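A merge gate enforcing a rule like Amazon’s can be sketched in a few lines. The seniority labels, the `ai_assisted` flag, and the requirement of exactly one senior approval are hypothetical simplifications; the internal email, as reported, does not describe how sign-off is implemented.

```python
from dataclasses import dataclass, field

# Hypothetical seniority levels whose AI-assisted changes need senior sign-off.
NEEDS_SIGNOFF = {"junior", "mid"}


@dataclass
class Change:
    author_level: str  # e.g. "junior", "mid", "senior" (illustrative labels)
    ai_assisted: bool  # did a Gen-AI tool produce or modify this change?
    approver_levels: list[str] = field(default_factory=list)


def can_merge(change: Change) -> bool:
    """Block AI-assisted changes from junior/mid authors until a senior approves.

    Only the AI sign-off rule is modeled here; ordinary review rules are out of scope.
    """
    if change.ai_assisted and change.author_level in NEEDS_SIGNOFF:
        return "senior" in change.approver_levels
    return True


if __name__ == "__main__":
    print(can_merge(Change("junior", ai_assisted=True)))                  # False
    print(can_merge(Change("junior", True, approver_levels=["senior"])))  # True
    print(can_merge(Change("senior", ai_assisted=True)))                  # True
```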

Hype Cycle Reality Check

For organizations still riding the hype cycle, this is a wake-up call. The 45% improvement in pull request cycle time that Atlassian reports from AI-assisted development means nothing if the merged code takes down your primary revenue stream. Similarly, trusting automated infrastructure blindly creates the kind of complacency that turns minor configuration errors into six-hour outages.

Pragmatic Implementation Strategy

The pragmatic playbook emerging from this mess looks less like “AI everywhere” and more like “AI with boundaries.” Start with focused pilots where AI reduces friction end-to-end, not just at the coding stage. Use a hub-and-spoke model where experimental teams push boundaries while a central governance function standardizes successful patterns. Most importantly, bring security and compliance partners in early rather than treating them as gates at the end.

The Verification Economy

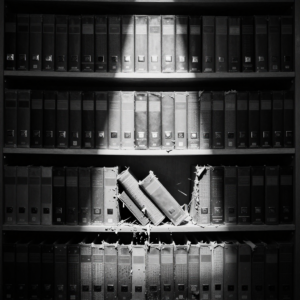

Amazon’s reversal reveals an uncomfortable truth about the current state of generative AI in enterprise software: we’re not replacing engineers; we’re creating a new category of verification work. Every line of AI-generated code now requires a senior engineer to act as a runtime boundary, manually checking that the AI hasn’t confused “refactor” with “destroy.”

This isn’t sustainable at scale, which explains the emerging academic focus on “boundary evidence” as the minimal auditable unit for AI-assisted development. Until AI systems can generate their own verifiable execution boundaries (proving not just that code compiles but that it respects operational constraints), senior engineers remain the only reliable runtime primitive for preventing catastrophic drift.
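The research does not pin down a concrete schema for “boundary evidence” in the material summarized here, so the following is only an illustrative guess at what a minimal auditable record might hold: the change that was checked, each explicit constraint and its verdict, and the senior engineer who signed off. All class and field names are hypothetical. Hashing a canonical JSON form of the record gives a cheap tamper-evidence check once records land in an audit log.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ConstraintResult:
    constraint: str  # hypothetical rule name, e.g. "no-delete-in-production"
    passed: bool


@dataclass(frozen=True)
class BoundaryEvidence:
    change_id: str
    results: Tuple[ConstraintResult, ...]
    reviewer: Optional[str] = None  # senior engineer who signed off, if any

    def approved(self) -> bool:
        """Approved only if every constraint passed and a reviewer is recorded."""
        return all(r.passed for r in self.results) and self.reviewer is not None

    def digest(self) -> str:
        """SHA-256 over a canonical JSON form, so later edits to the record are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    ev = BoundaryEvidence(
        change_id="CR-1234",  # made-up identifier
        results=(
            ConstraintResult("no-delete-in-production", True),
            ConstraintResult("rollback-plan-present", True),
        ),
        reviewer="senior-eng-1",
    )
    print(ev.approved())     # True
    print(len(ev.digest()))  # 64
```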

The ecommerce giants and cloud providers that survive this transition won’t be the ones with the most aggressive AI adoption metrics. They’ll be the ones that recognized early that velocity without verification is just a faster way to break things. Amazon learned that lesson the hard way, with a six-hour outage as its tuition fee. The question is whether the rest of the industry will pay attention before their own Kiro moments strike.