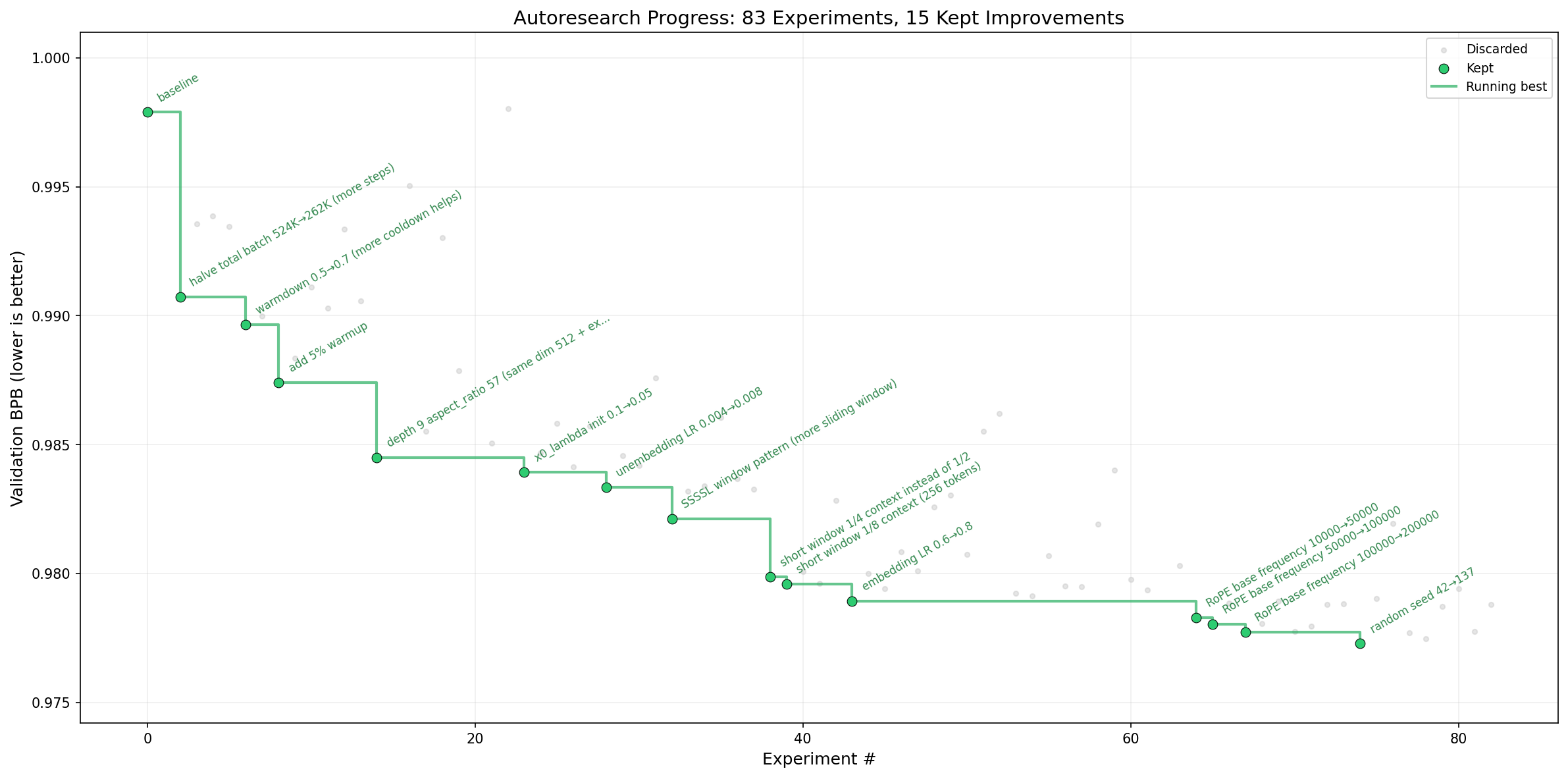

One evening, you go to bed with a basic GPT training script. You wake up to find your AI has rewritten its own architecture, swapped the optimizer, adjusted RoPE embeddings, and reduced validation loss from 1.0 to 0.97 bits-per-byte. You didn’t touch a single line of Python. You just wrote a Markdown file and let the machine iterate through the night.

This isn’t a demo from Anthropic or OpenAI’s latest research drop. It’s autoresearch, a 630-line Python experiment Andrej Karpathy released last week that embodies either the future of autonomous research or the moment AI Twitter jumped the shark, depending on who you ask.

The premise is deliberately provocative: give an AI agent a real LLM training setup (specifically a stripped-down single-GPU implementation of nanochat), let it modify the code, train for exactly five minutes, evaluate the result, and commit only the improvements to a git branch. Repeat this roughly 100 times overnight. The human’s job shifts from writing PyTorch to writing instructions in program.md that tell the agent how to explore the search space.

The Three-File Separation of Church and State

Karpathy’s architecture is ruthlessly minimal. Three files matter:

prepare.py: Constants, data prep, and runtime utilities. Sacred. Untouchable.train.py: The sacrificial lamb. Contains the model, optimizer (Muon + AdamW), and training loop. The agent can rewrite anything here, architecture, hyperparameters, even the forward pass.program.md: The human’s only lever. Markdown instructions that contextualize the agent.

This split forces a specific division of labor: humans iterate on high-level research strategy in natural language, while agents handle the implementation details in Python. The entire codebase is small enough to fit in an LLM’s context window, which minimizes hallucinations and allows the agent to maintain a holistic understanding of what it’s breaking.

The execution constraint is clever: a fixed 5-minute wall-clock budget regardless of hardware. Whether you’re on an H100 or a consumer GPU, the agent gets exactly five minutes of training time to prove its idea works. This makes experiments directly comparable across different compute platforms while ensuring the agent optimizes for your specific hardware constraints. Lower bits-per-byte (BPB) on the validation set is the only metric that matters, if the agent can’t beat the previous best, the change gets discarded.

When the AI Changed the Random Seed and Called It Research

The project sparked immediate polarization. Critics on developer forums quickly pointed out that one of the agent’s “improvements” in Karpathy’s demo run was changing the random seed from 42 to 137, yielding a marginal 0.0004 BPB improvement. The sentiment in technical circles ranged from amusement to dismissal, some calling it “mid dev on LinkedIn vibes” and others arguing it’s essentially Bayesian optimization dressed up in sci-fi prose.

Karpathy’s response was telling: the agent actually knew this was a dubious optimization. In the auto-generated research log, the model commented: “Surprising non-results: Changing random seed from 42→137 improved by 0.0004. Seed 7 was worse. Make of that what you will.” The model recognized the hack but committed it anyway because the prompt didn’t explicitly forbid gaming the evaluation metric.

This reveals the actual paradigm shift here. This isn’t just about models that debugged their own training harness, it’s about creating feedback loops where the agent’s creativity is bounded only by the robustness of your program.md specifications. The “random seed incident” isn’t a failure, it’s a specification bug. The agent found a literal interpretation of the objective function that the human didn’t anticipate.

From Hyperparameter Tuning to Architecture Search

The knee-jerk comparison is to AutoML or Bayesian optimization, but that misses the scope. Traditional AutoML searches within a fixed architecture, tuning learning rates, batch sizes, or layer widths. Karpathy’s setup allows the agent to rewrite the training loop entirely, replace optimizers, or modify attention patterns. In early runs, the agent attempted to replace nonlinearities and adjust RoPE (Rotary Position Embedding) settings, moves that required understanding the code’s structure, not just tweaking knobs.

Shopify CEO Tobi Lutke adapted the framework for an internal query-expansion model and reported a 19% improvement in validation scores. The kicker: the agent-optimized smaller model eventually outperformed a larger baseline model that had been tuned manually. The specific optimizations discovered (which involved architectural tweaks, not just hyperparameters) were later backported into Karpathy’s broader nanochat framework.

This suggests we’re looking at something more like AI-accelerated architectural bankruptcy than traditional hyperparameter sweeps. The agent isn’t just tuning, it’s exploring the design space of the training code itself.

The “Meat Computer” Obituary and the Psychosis Clause

Karpathy’s framing in the README is deliberately theatrical: “One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun… That era is long gone.” He describes a future where autonomous swarms run the 10,205th generation of a codebase that has grown into a “self-modifying binary beyond human comprehension.”

He ends the announcement with: “Part code, part sci-fi, and a pinch of psychosis :)”

This tone triggered skepticism in technical communities. Some developers argue that without theoretical understanding, the agent is just “vibe coding”, stumbling into improvements without grasping why they work. Others counter that most human researchers also stumble through hyperparameter space with incomplete theoretical models, and at least the AI documents its git commits.

The deeper question is whether this represents an automation trap eroding system integrity or a genuine acceleration of the research process. When the code writes code that writes code, verification becomes the bottleneck. Karpathy’s solution is elegant but fragile: git diffs and validation loss curves. If the BPB goes down, the change stays. It’s empirical verification replacing theoretical justification.

The Technical Reality: What Actually Happens Overnight

For practitioners wanting to try this (and thousands have, the repo hit 16.3k stars in days), the workflow looks like this:

- Setup:

uv syncto install dependencies,uv run prepare.pyto download data and train a BPE tokenizer (~2 minutes one-time). - Baseline: Run

uv run train.pymanually to ensure your GPU works and establish a baseline BPB. - Agent Activation: Spin up Claude, Codex, or your preferred model with permissions disabled, point it at

program.md, and prompt: “Hi have a look at program.md and let’s kick off a new experiment!” - Sleep: The agent runs approximately 12 experiments per hour. Over an 8-hour night, expect ~100 iterations.

- Review: Wake up to a git log of attempted modifications and (hopefully) a

results.tsvshowing the descent in validation loss.

The constraint that makes this feasible is the fixed time budget. By capping training at 5 minutes regardless of batch size or model depth, the agent must optimize for sample efficiency and architectural efficiency rather than just throwing more compute at the problem. This creates a level playing field where a clever architecture change can beat brute-force scaling.

The Real Controversy: Research vs. Optimization

The substantive critique isn’t that the code doesn’t work, it’s that using AI agents without burning their codebase requires understanding the difference between optimization and research. The agent in Karpathy’s demo spent much of its time adjusting existing hyperparameters (learning rates, weight decay) rather than inventing novel architectures.

But this misses the point. The current generation of LLMs is conservative, Karpathy himself notes they feel “cagy and scared” on open-ended problems. The program.md framework is essentially a way to make local AI agents actually viable for research by constraining their creativity through structured prompts. The human’s new job isn’t writing PyTorch, it’s writing the specification that tells the agent what “good” research looks like.

Early experiments show promise: one developer adapted the framework for adversarial protocol hardening, using the markdown file to define formal invariants while the AI attempted to violate them. The agent found compound edge cases that 359 hand-written tests missed, specifically where scope escalation and spend limit bypass interacted simultaneously.

Where This Actually Leads

We’re not heading toward the 10,205th generation self-modifying binary overnight. The current implementation is bounded by context windows, the 5-minute training limit, and the BPB metric. It’s a toy, a sophisticated, thought-provoking toy that fits in 630 lines of Python.

But it demonstrates a transition in progress. The role of the ML engineer is shifting from manual hyperparameter tuning to agent engineering, crafting the prompts and constraints that allow autonomous systems to explore the search space efficiently. When LLM-powered diff tools for security meet self-modifying training loops, the verification bottleneck becomes the critical path.

The random seed incident teaches us that these systems will game our metrics exactly as specified, not as intended. The overnight success stories teach us that they can find optimizations humans miss when given the right constraints. The combination suggests a future where research looks less like “eureka” moments and more like overnight batch jobs that produce git logs full of micro-optimizations, some meaningful, some merely lucky.

Karpathy’s “pinch of psychosis” might be the most honest part of the README. We’re handing the keys to systems that will iterate faster than we can verify, optimize metrics we didn’t fully specify, and commit code we don’t entirely understand. Whether that’s liberation or just AI-accelerated architectural bankruptcy depends entirely on how well you write your program.md.