The appeal is seductive. A sprawling Airflow Dag repository, managed via Git and YAML, replaced by a clean, visual canvas where a business analyst can drag nodes from Salesforce to MySQL with a mouse. The promise: reduced code, faster iteration, and liberation from the data engineering team’s release schedule. The reality, as one engineer discovered when their company mandated a move from Airflow to n8n, is often a sudden, grinding halt in performance and a gnawing sense of architectural regret.

The question posted to a data engineering forum was telling: “Company wants to go from Airflow to N8N to reduce code. How do I preserve speed?” The existing flow was a classic business ETL: Salesforce and ads data funneling through MySQL, transformed, and pushed back out to Salesforce and Power BI. The developer’s primary concern wasn’t features, but core mechanics: “What I know from n8n is that it parses data in JSON, but I would prefer not to do that, and keep it all in CSV.” This isn’t a nitpick about syntax, it’s the canary in the coal mine for a fundamental architectural mismatch.

The Orchestration Spectrum: Code-First vs. Canvas-First

At its heart, this debate exposes a continuum in data tooling. On one end, you have code-first orchestrators like Apache Airflow. Workflows are defined as Directed Acyclic Graphs (DAGs) in Python. This is a developer-native environment: version control, unit testing, code reviews, and the full power of a programming language for dynamic task generation, complex branching logic, and integration with any library under the sun. Airflow, as described in a recent analysis of its ecosystem complexity, is built for engineers. Its strength is managing “dependencies temporelles et les volumes”, temporal dependencies and volume, as one French analysis aptly summarized.

On the other end, you have canvas-first automation platforms like n8n. Here, the workflow is the UI. Logic is constructed by connecting visual nodes representing triggers, API calls, data manipulations, and destinations. Its superpower is the “construction visuelle de scénarios mixtes SaaS/API”, the visual construction of mixed SaaS/API scenarios. It excels at gluing together webhooks, form submissions, and third-party services where the data payloads are relatively small and the logic is primarily about sequencing API calls.

The trap springs shut when you mistake one for the other. n8n is built for workflow automation, Airflow is built for data pipelines. As one experienced commenter bluntly put it, “n8n looks good when you are doing a POC or pilot. If you need to process large data – you will find performance degrading and requiring frequent maintenance.”.

The Performance Tax: JSON Everything and the “Black Box” Penalty

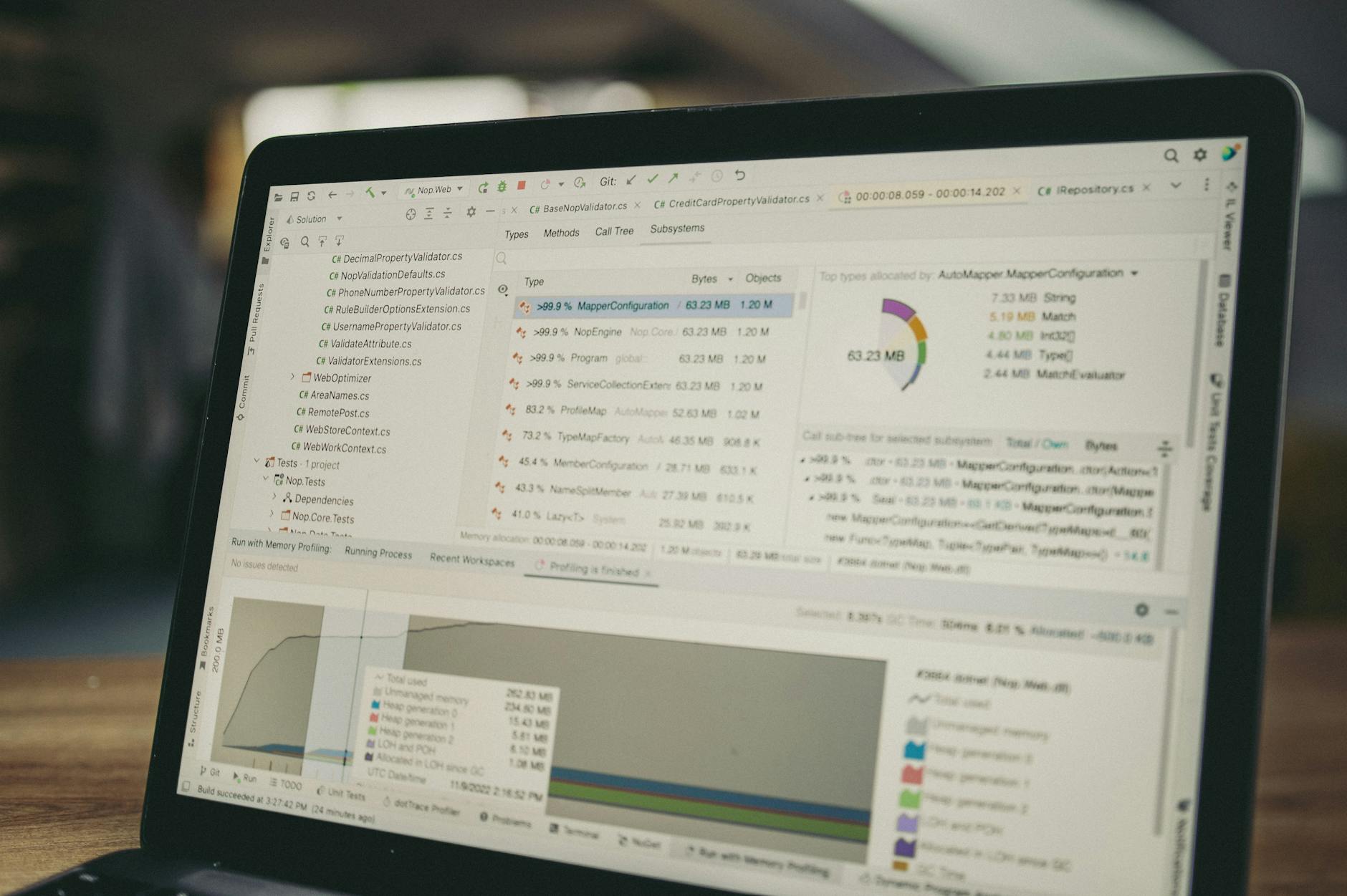

The engineer’s fear about JSON parsing is well-founded. n8n’s internal data model is JSON-centric. Every piece of data passed between nodes is wrapped in a JSON structure. For an API call returning a few hundred records, this is irrelevant overhead. For a data pipeline moving millions of rows from a database, this serialization/deserialization tax becomes a dominant performance cost. CSV or binary formats that are efficient for bulk data transfer must first be ingested into this JSON model, processed, and then re-serialized for output. The overhead isn’t linear, it can be catastrophic at scale.

This leads to the workaround the engineer already intuited: “I’m mostly considering if just calling every python code to run outside n8n would be faster than building the ETL inside n8n.” At that point, you’ve reduced n8n to a glorified, and expensive, cron scheduler with a UI. You’re paying the operational cost of running its server and the cognitive cost of managing a hybrid system, having lost the primary benefit (a unified visual flow) while inheriting all the limitations.

Furthermore, n8n’s strength in simplicity becomes a weakness in observability and governance. Airflow provides deep lineage: which task ran when, what its inputs and outputs were, detailed logs, and a clear history of failures and retries. In a complex n8n workflow, especially one passing large data between nodes, debugging a failure can mean tracing through a sprawling visual graph with limited introspection into the data at each step. The governance and multi-tenancy capabilities inherent in tools like Airflow are often afterthoughts in low-code platforms designed for speed of creation, not rigor of operation.

Picking the Right Tool for the Actual Job (Not the Marketing)

The landscape isn’t binary. It’s a spectrum of specialized tools, and the smart move is using the right one for each layer of your problem. The advice from industry guides is clear: “Most production data platforms use both: a pipeline tool like Hevo or Fivetran to move data, and an orchestration tool like Airflow or Dagster to coordinate when things run.”.

Consider this pragmatic tool positioning:

| Tool | Primary Use Case | Technical Threshold | Core Strength |

|---|---|---|---|

| n8n / Activepieces | SaaS/API workflow automation | Low to Intermediate | Visual builder, rapid prototyping |

| Apache Airflow | Data pipeline orchestration | High (Python) | Dependency management, scheduling, observability |

| Apache NiFi | High-volume dataflow routing | High | Visual data routing, provenance, protocol support |

| Node-RED | IoT/M2M integration & logic | Intermediate | Edge-friendly, protocol support (MQTT, Modbus) |

For the company wanting to move from Airflow to n8n, the crucial question isn’t about reducing code lines. It’s about workload characterization. Is the pipeline primarily moving and transforming large datasets between systems (a data engineering problem)? Or is it orchestrating a sequence of business events and notifications across SaaS tools (a business automation problem)?

The former is Airflow’s domain. The latter might be a fit for n8n, but even then, alternatives like Activepieces might be more accessible for non-technical teams, while tools like Node-RED are unbeatable for IoT scenarios. Trying to force a high-volume data pipeline into a tool designed for API choreography is like using a sports car to haul lumber. It might work for a single 2×4, but you’ll destroy the car trying to build a house.

The Real Cost: When “Low-Code” Creates High-Debt Systems

The ultimate trap isn’t just poor performance. It’s the creation of a high-debt, low-observability system. These visual workflows, while easy to start, often become “black boxes.” They lack the inherent documentation of code, can become sprawling “spaghetti graphs” that are impossible to reason about, and create a vendor/platform lock-in that’s harder to escape than a codebase.

When n8n becomes the core of your data movement, you’re also betting on its scalability, a bet that many find loses when data volume grows, as detailed in discussions about n8n memory usage and hidden costs. The company that starts with n8n for a simple sync may find itself years later with a business-critical, unmaintainable, and inefficient workflow that no engineer wants to touch and that can’t be easily ported away.

So, should you ever switch from Airflow to n8n? Only if your use case fundamentally changed from data pipeline to business process automation. For the engineer staring down that migration mandate, the path forward isn’t to fight the JSON model, but to fight the premise.

The solution is a clear separation of concerns: use Airflow (or a modern alternative like Dagster) as the robust, scalable orchestrator. It can call out to specialized tools, whether that’s a dbt model for transformation, a Spark job for heavy lifting, or even a container running a custom Python script. If there’s a place for n8n, it’s within that orchestration, perhaps as a single task node that handles a specific, lightweight API integration that truly benefits from its visual builder. The orchestration tool remains the source of truth, the scheduler, and the observability layer.

The allure of low-code is the elimination of friction. But in data engineering, some friction exists for a reason. It’s the friction of version control, of testing, of environment management, and of scalable design. Removing it doesn’t make you faster, it just ensures you’ll crash harder, later. Don’t trade your orchestration engine for a workflow canvas. Your future self, debugging a production outage at 3 AM, will thank you.