Enterprises are dumping expensive proprietary data tools like Alteryx for open-source alternatives, but the migration reveals a deeper truth: the problem was never the price tag; it was giving powerful data engineering tools to people who think merging datasets is a unique feature. When an organization drops $4,950 per seat on Alteryx Designer Cloud only to discover that its “data pipeline” is a fragile house of cards held together by manual Excel uploads and undocumented SQL queries, the tool isn’t the bug; the culture is.

The Bill Shock Reality

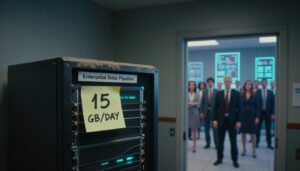

It starts with the invoice. Your organization has “invested heavily” in Alteryx, which is corporate speak for “we’ve painted ourselves into a corner, and the paint is made of money.” The platform promises analytics process automation with a visual interface that lets “citizen data scientists” build workflows without coding. The result? A $50,000 annual subscription for the privilege of watching business analysts build workflows that only run on one machine, break when the source schema changes, and require a human to kick them off manually every morning.

The search for alternatives typically follows a predictable pattern: first, try KNIME (which is “buggy for some workflows” according to teams who’ve attempted the migration), then realize that “low cost”, “open source”, and “easier” form an impossible triangle. As one senior manager noted, you can pick two, but being greedy for all three just leads to different expensive problems.

The Spaghetti Factory Problem

The uncomfortable reality that platform vendors don’t advertise: the tool is rarely the bottleneck. When you give powerful data engineering capabilities to people who don’t understand data modeling practices, you don’t get democratized analytics; you get expensive spaghetti.

Organizations discover this when their “Alteryx guy” (there’s always one person holding the entire operation together) goes on vacation, and suddenly nobody knows how to replicate the process. The workflows become “giant labyrinths” relying on manual file inputs from analysts who got data from someone else who got it from someone else’s undocumented query. By the time the technical debt comes due, the executives who approved the “cost-saving” low-code solution have moved on, leaving the engineering team to untangle the mess.

This mirrors a broader industry pattern of excessive spending on proprietary AI infrastructure: buy expensive tools to avoid hiring competent engineers, then pay twice as much to fix the resulting chaos.

The Open-Source Escape Hatch (With Caveats)

Modern open-source ETL tools offer a genuine escape route from licensing hell, but they require a clear-eyed assessment of your team’s actual capabilities. The 2026 landscape offers several distinct flavors:

Apache NiFi: The Enterprise Workhorse

Apache NiFi remains the open-source standard for cybersecurity and government-grade data integration, rated 4.0/5 for its ability to handle complex data routing, filtering, and aggregation. It’s free, scalable, and distributed, but it comes with a “steep learning curve” and deployment costs that can spike because of high hardware requirements. This isn’t a tool you hand to your Excel power user; it’s for teams who understand back pressure, queuing, and flow-specific quality of service (QoS).
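Back pressure is the concept that trips up newcomers most often, so here is a minimal Python sketch of the idea (not NiFi code, and not NiFi’s API): a bounded queue plays the role of a NiFi connection with an object-count threshold, and a fast producer is forced to wait whenever a slow consumer falls behind. The threshold value and all function names are hypothetical.

```python
import queue
import threading
import time

# Hypothetical threshold; in NiFi this kind of limit is configured per connection.
BACK_PRESSURE_THRESHOLD = 3


def producer(conn: queue.Queue, n_items: int, depths: list[int]) -> None:
    """Push items downstream; put() blocks while the queue is full."""
    for i in range(n_items):
        conn.put(i)  # waits here once the threshold is reached (back pressure)
        depths.append(conn.qsize())  # record queue depth right after each put
    conn.put(None)  # sentinel telling the consumer to stop


def slow_consumer(conn: queue.Queue, results: list[int]) -> None:
    """Drain items more slowly than the producer can create them."""
    while True:
        item = conn.get()
        if item is None:
            break
        time.sleep(0.01)  # simulate an expensive downstream processor
        results.append(item)


def run(n_items: int = 10) -> tuple[list[int], list[int]]:
    conn: queue.Queue = queue.Queue(maxsize=BACK_PRESSURE_THRESHOLD)
    depths: list[int] = []
    results: list[int] = []
    threads = [
        threading.Thread(target=producer, args=(conn, n_items, depths)),
        threading.Thread(target=slow_consumer, args=(conn, results)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return depths, results


if __name__ == "__main__":
    depths, results = run()
    print("max queue depth:", max(depths), "items delivered:", len(results))
```

The queue depth never exceeds the threshold, however fast the producer runs. In NiFi, a full connection stops its upstream processor from being scheduled, and that pressure can ripple further upstream, which is why threshold tuning and hardware sizing go hand in hand.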

Airbyte: The Connector King

With 600+ connectors, Airbyte has become the darling of teams needing breadth over depth. The open-source version is free, but the hidden cost is maintenance. Unlike managed solutions, self-hosting Airbyte means you’re responsible for updates, debugging, and schema evolution. It also can’t run serverless, so it needs dedicated infrastructure, and it processes data in batches with a minimum latency of 5 minutes. For teams without dedicated DevOps resources, the “free” price tag quickly turns into a bill paid in engineering hours.
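“Schema evolution” sounds abstract until a source quietly changes under you. The sketch below is plain Python, not Airbyte’s API: it infers column types from a batch of records and diffs them against the last known schema, the kind of check a self-hosting team ends up owning. The helper names and sample columns are hypothetical.

```python
from typing import Any


def infer_types(records: list[dict[str, Any]]) -> dict[str, str]:
    """Map each column to the Python type name of its first non-null value."""
    types: dict[str, str] = {}
    for rec in records:
        for col, val in rec.items():
            if val is not None:  # nulls carry no type information
                types.setdefault(col, type(val).__name__)
    return types


def diff_schema(known: dict[str, str], incoming: dict[str, str]) -> dict[str, list]:
    """Report columns added, removed, or retyped between two schemas."""
    shared = known.keys() & incoming.keys()
    return {
        "added": sorted(incoming.keys() - known.keys()),
        "removed": sorted(known.keys() - incoming.keys()),
        "changed": sorted(
            (col, known[col], incoming[col])
            for col in shared
            if known[col] != incoming[col]
        ),
    }


if __name__ == "__main__":
    known = {"id": "int", "email": "str", "signup_date": "str"}
    # The source started sending dates as integers and added a "plan" column.
    batch = [{"id": 1, "email": "a@example.com", "signup_date": 20260101, "plan": "pro"}]
    print(diff_schema(known, infer_types(batch)))
```

Each non-empty list is a decision someone has to make: widen the destination column, backfill, or reject the batch.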

Meltano: The Singer Specification Gamble

Meltano offers a Python-first, open-source approach built on the Singer specification. It’s code-first and can run on serverless infrastructure, making it attractive to teams looking for lightweight alternatives. However, the specification is complex and requires significant upfront work to set up each new data source. Many teams see it as a risk: potential “throw-away work” if the Singer ecosystem fades, leaving you with custom connectors that nobody maintains.
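To make the “upfront work” concrete: a Singer tap is a program that writes newline-delimited JSON messages of type SCHEMA, RECORD, and STATE to stdout, and a target reads them. The minimal tap below is a sketch under stated assumptions: the `users` stream, its columns, and the bookmark layout inside STATE are hypothetical (the spec leaves the contents of the state value up to each tap).

```python
import json
import sys
from typing import Any

# Hypothetical source rows; a real tap would page through an API or database.
ROWS = [
    {"id": 1, "email": "a@example.com", "updated_at": "2026-01-01T00:00:00Z"},
    {"id": 2, "email": "b@example.com", "updated_at": "2026-01-02T00:00:00Z"},
]

# JSON Schema describing the stream, sent before any records.
USERS_SCHEMA = {
    "type": "object",
    "properties": {
        "id": {"type": "integer"},
        "email": {"type": "string"},
        "updated_at": {"type": "string", "format": "date-time"},
    },
}


def run_tap(rows: list[dict[str, Any]], state: dict[str, Any]) -> tuple[list[str], dict[str, Any]]:
    """Return the Singer messages (as JSON lines) for one sync, plus the new state."""
    # Assumed bookmark layout; Singer lets each tap define its own state shape.
    bookmark = state.get("bookmarks", {}).get("users", {}).get("updated_at", "")
    messages: list[dict[str, Any]] = [
        {"type": "SCHEMA", "stream": "users", "schema": USERS_SCHEMA, "key_properties": ["id"]}
    ]
    new_bookmark = bookmark
    for row in rows:
        # Same-format ISO-8601 strings compare correctly as plain strings.
        if row["updated_at"] > bookmark:
            messages.append({"type": "RECORD", "stream": "users", "record": row})
            new_bookmark = max(new_bookmark, row["updated_at"])
    new_state = {"bookmarks": {"users": {"updated_at": new_bookmark}}}
    messages.append({"type": "STATE", "value": new_state})
    return [json.dumps(m) for m in messages], new_state


if __name__ == "__main__":
    lines, _ = run_tap(ROWS, {})
    sys.stdout.write("\n".join(lines) + "\n")
```

Running it again with the returned state emits only SCHEMA and STATE. Every new source means writing and testing this plumbing, plus pagination, authentication, and type mapping, which is where both the upfront cost and the “throw-away work” worry come from.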

dlt: The Pythonic Minimalist

The new contender is dlt, a Python library that handles incremental loading and schema inference without the bloat of a full orchestration platform. It’s lightweight, runs anywhere Python runs, and requires minimal new syntax. For teams that actually know Python (as opposed to those hoping to avoid learning it), dlt represents the sweet spot: code-first flexibility without framework lock-in, delivering value without the overhead of expensive managed platforms.
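What dlt automates is easier to appreciate by doing it by hand. The sketch below is not dlt’s API. It is a hand-rolled illustration, using Python’s built-in `sqlite3` as a stand-in destination, of the two chores named above: inferring columns from incoming records (adding new ones as they appear) and loading only rows newer than a stored cursor. It assumes every record has an `id` primary key and an `updated_at` cursor column; the function names, the `_state` table, and the type mapping are hypothetical.

```python
import sqlite3
from typing import Any

# Tiny Python-to-SQLite type map; anything unrecognised (including None) becomes TEXT.
SQL_TYPES = {bool: "INTEGER", int: "INTEGER", float: "REAL", str: "TEXT"}


def ensure_columns(conn: sqlite3.Connection, table: str, records: list[dict[str, Any]]) -> None:
    """Create the table if needed and add any column seen for the first time."""
    # Table and column names are assumed trusted (interpolated, not parameterised).
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" (id INTEGER PRIMARY KEY)')
    existing = {row[1] for row in conn.execute(f'PRAGMA table_info("{table}")')}
    for rec in records:
        for col, val in rec.items():
            if col not in existing:
                # The first value seen decides the declared column type.
                sql_type = SQL_TYPES.get(type(val), "TEXT")
                conn.execute(f'ALTER TABLE "{table}" ADD COLUMN "{col}" {sql_type}')
                existing.add(col)


def load_incremental(conn: sqlite3.Connection, table: str,
                     records: list[dict[str, Any]], cursor_col: str = "updated_at") -> int:
    """Upsert only records newer than the stored cursor; return how many were loaded."""
    ensure_columns(conn, table, records)
    conn.execute("CREATE TABLE IF NOT EXISTS _state (tbl TEXT PRIMARY KEY, watermark TEXT)")
    row = conn.execute("SELECT watermark FROM _state WHERE tbl = ?", (table,)).fetchone()
    watermark = row[0] if row else ""
    fresh = [r for r in records if str(r[cursor_col]) > watermark]
    for rec in fresh:
        cols = ", ".join(f'"{c}"' for c in rec)
        marks = ", ".join("?" for _ in rec)
        # INSERT OR REPLACE upserts on the primary key (it rewrites the whole row).
        conn.execute(f'INSERT OR REPLACE INTO "{table}" ({cols}) VALUES ({marks})',
                     list(rec.values()))
    if fresh:
        newest = max(str(r[cursor_col]) for r in fresh)
        conn.execute("INSERT OR REPLACE INTO _state (tbl, watermark) VALUES (?, ?)",
                     (table, newest))
    conn.commit()
    return len(fresh)
```

A second call with an extended batch skips the rows already loaded and widens the table for any new column. Handling these cases for every source (plus nested data, type conflicts, and state storage) is the boilerplate a library like dlt aims to remove.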

The Cost Comparison Reality

When evaluating the financial impact, the difference between proprietary and open-source isn’t just licensing, it’s total cost of ownership. Consider the monthly landscape:

| Tool | Rating | Monthly Cost | Key Limitation |
|---|---|---|---|
| Alteryx | 4.8/5 | Custom (starts at $4,950/seat) | Proprietary lock-in |
| Matillion | 4.5/5 | $1,000 | Limited connectors (70+) |
| Hevo | 4.8/5 | $239 | Event-based pricing gets tricky |
| Fivetran | 4.5/5 | Custom (unpredictable MAR billing) | Costs scale aggressively |
| Apache NiFi | 4.0/5 | Free | High hardware/deployment costs |
| Airbyte | N/A | Free (self-hosted) | Heavy maintenance burden |

The comparison reveals a clear pattern: proprietary tools charge for convenience, while open-source tools charge for infrastructure and expertise. Fivetran’s “luxury car” pricing model, based on Monthly Active Rows (MAR), becomes unpredictable as data grows; some startups find it affordable initially but “expensive 6, 12 months later.” Meanwhile, Apache NiFi is free but requires significant hardware investment and expertise to operate securely.
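Why does Monthly Active Rows (MAR) billing drift? A simplified reading of the metric is that every distinct row (primary key) inserted, updated, or deleted in a calendar month counts once, however many times it changes. The sketch below counts that way over hypothetical change events. It is an illustration, not Fivetran’s billing logic, which has rules this ignores.

```python
from collections import defaultdict
from collections.abc import Hashable, Iterable
from datetime import date


def monthly_active_rows(events: Iterable[tuple[str, Hashable, date]]) -> dict[tuple[int, int], int]:
    """Count distinct (table, primary key) pairs touched in each calendar month."""
    active: defaultdict[tuple[int, int], set] = defaultdict(set)
    for table, pk, day in events:
        active[(day.year, day.month)].add((table, pk))
    return {month: len(keys) for month, keys in sorted(active.items())}


if __name__ == "__main__":
    # January: the same 3 users are updated on 10 different days.
    jan = [("users", pk, date(2026, 1, d)) for pk in range(1, 4) for d in range(1, 11)]
    # February: those users change once more, and a new 5-row orders table is synced.
    feb = [("users", pk, date(2026, 2, 1)) for pk in range(1, 4)]
    feb += [("orders", pk, date(2026, 2, 15)) for pk in range(1, 6)]
    print(monthly_active_rows(jan + feb))  # {(2026, 1): 3, (2026, 2): 8}
```

Under this counting, thirty change events in January bill as three rows, while connecting one more table in February more than doubles the count. Growth in coverage, not just growth in data volume, is what moves the bill.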

When “Easy” Becomes a Liability

The fundamental error in the low-code promise is conflating ease of initial use with ease of maintenance. KNIME and Alteryx are “easier” only in the sense that they don’t require SQL knowledge to get started. But they create “horrendous problems downstream” because the people using them aren’t versed in data engineering best practices.

The workflows eventually rely on manual file inputs with source queries that are “seldom documented”, creating a chain of dependencies where nobody knows how to replicate the process when key personnel leave. The cost to fix this problem invariably exceeds whatever was saved by avoiding a proper data engineering hire.

As one data engineer noted, if you want things done well and cheap, “a DE with Python and Airflow on an inexpensive server is going to work much better.” The cultural mindset is the problem: organizations want to avoid investing in data engineering expertise while still getting data engineering results.

The Migration Strategy That Actually Works

If you’re looking to escape the Alteryx tax, don’t just swap tools; audit your workflows. The organizations succeeding in 2026 are using lightweight tools like DuckDB for analytics and dlt for extraction, but crucially, they’re staffed by people who understand data modeling.
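One concrete audit step is to take a merge that currently lives in someone’s spreadsheet and turn it into a query that is written down, reviewed, and re-runnable. The sketch below uses Python’s built-in `sqlite3` as a stand-in for DuckDB so it runs without extra packages; the `orders` and `customers` tables and their contents are hypothetical. It also shows why the join type matters: a LEFT JOIN keeps orders whose customer is missing from the lookup table, while an inner join silently drops them.

```python
import sqlite3

# Written-down, reviewable version of a merge that used to live in a spreadsheet.
# {join} is filled with "LEFT JOIN" or "JOIN" to compare the two behaviours.
QUERY_TEMPLATE = """
    SELECT o.order_id, o.amount, c.region
    FROM orders AS o
    {join} customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
"""


def build_demo_db() -> sqlite3.Connection:
    """In-memory sample data: order 12 references a customer that doesn't exist."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, region TEXT);
        CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
        INSERT INTO customers VALUES (1, 'EMEA'), (2, 'APAC');
        INSERT INTO orders VALUES (10, 1, 99.0), (11, 2, 45.5), (12, 3, 12.0);
    """)
    return conn


def orders_with_region(conn: sqlite3.Connection) -> list[tuple]:
    """LEFT JOIN: every order survives; unmatched ones get region = None."""
    return conn.execute(QUERY_TEMPLATE.format(join="LEFT JOIN")).fetchall()


def orders_inner_join(conn: sqlite3.Connection) -> list[tuple]:
    """Inner JOIN: orders without a matching customer are dropped."""
    return conn.execute(QUERY_TEMPLATE.format(join="JOIN")).fetchall()


if __name__ == "__main__":
    db = build_demo_db()
    print(orders_with_region(db))
    print(orders_inner_join(db))
```

The unmatched order 12 comes back with `None` for its region instead of vanishing, which is the kind of silent row loss that an inner join, or an undocumented manual merge, can hide.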

The Decision Matrix:

- Choose Airbyte if you have DevOps capacity, need 600+ connectors, and can tolerate a 5-minute minimum latency

- Choose Apache NiFi if you need government-grade security and have the hardware budget, but beware the learning curve

- Choose dlt if you have Python skills and want serverless, lightweight extraction without framework bloat

- Choose Meltano if you’re willing to bet on the Singer specification and need orchestration out of the box

The uncomfortable truth? If your team is asking for “something easier than Python” because they don’t understand data engineering, giving them a visual drag-and-drop interface won’t fix the underlying competency gap. It will just create expensive technical debt that someone else has to pay down later, usually after the executives who approved it have cashed their bonuses and moved on.

The open-source revolution isn’t about making data engineering easier. It’s about making it possible without mortgaging your infrastructure budget. But the tools are only as good as the people wielding them. Sometimes the cheapest solution is admitting that you need someone who knows what a LEFT JOIN actually does.