If you’ve ever spent twenty minutes hunting through PyPI, GitHub repos, and scattered documentation just to find the correct import path for an S3 operator, you know the pain. Apache Airflow’s ecosystem has grown into a sprawling metropolis of 98 official providers and 1,602 modules, but until now, finding your way around required a local guide and a tolerance for archaeological digs through Stack Overflow.

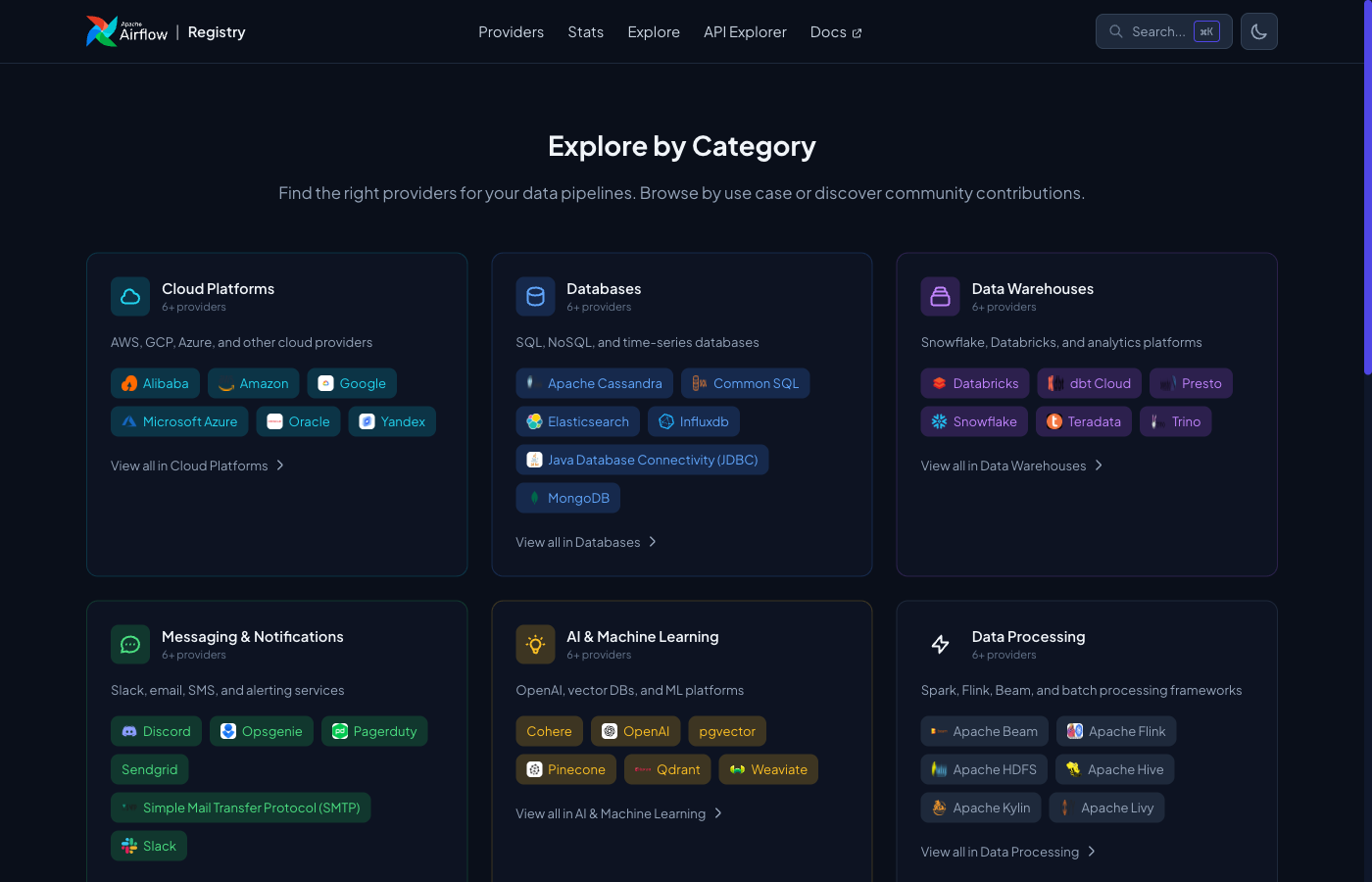

The Apache Airflow Registry changes that. Launched this week at airflow.apache.org/registry, it’s a searchable catalog that exposes the full scope of Airflow’s integration ecosystem: 125+ integrations, 329 million monthly PyPI downloads, and enough operators, hooks, sensors, and triggers to make your head spin. More importantly, it finally answers the question: “What can Airflow actually do?”

The Discovery Problem Nobody Talked About

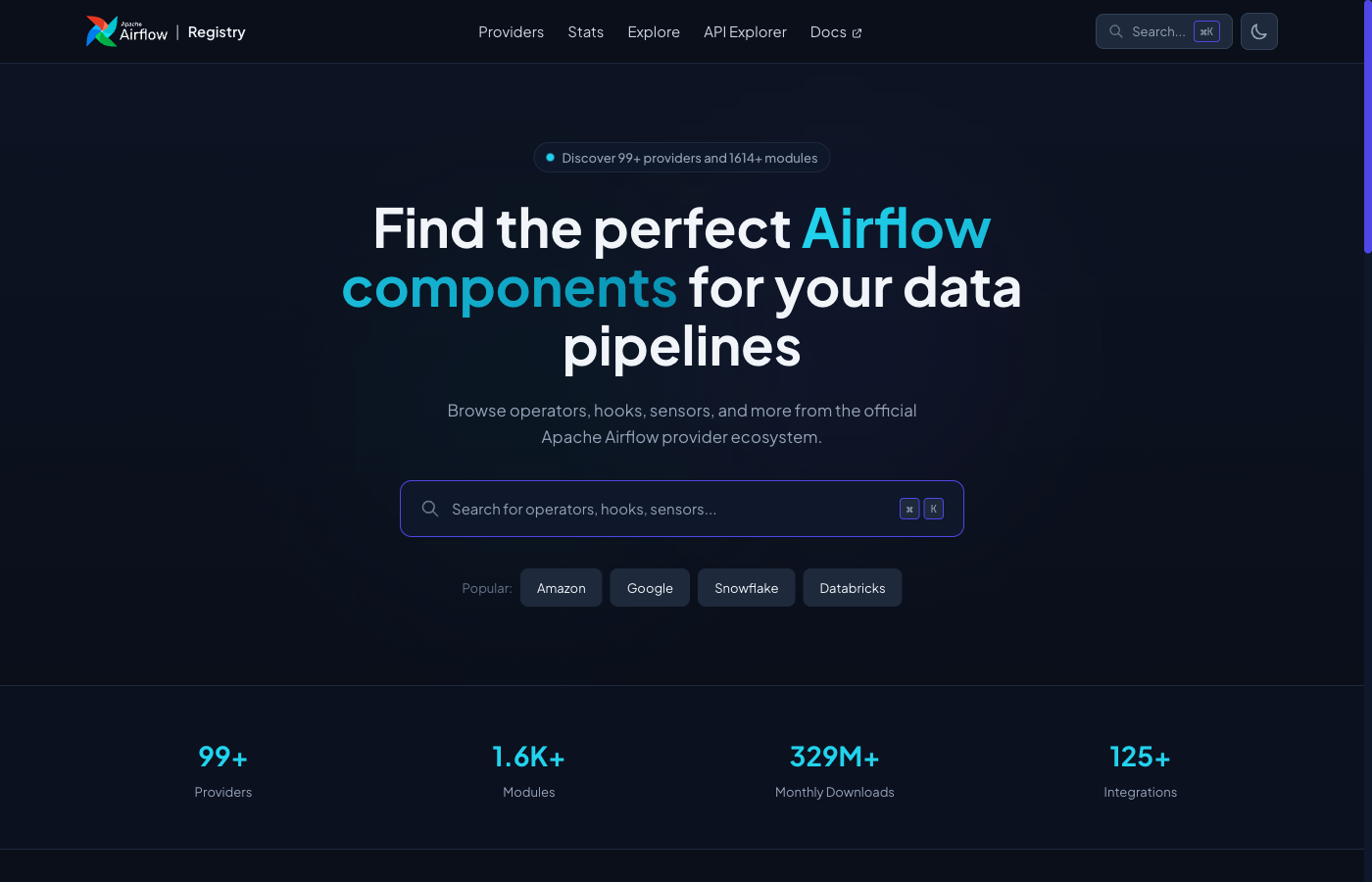

Airflow’s strength has always been its extensibility. The weakness? Discoverability. When you needed to connect to Snowflake, you had to know that apache-airflow-providers-snowflake existed, then figure out which version worked with your Airflow core, then decipher whether you needed a hook, an operator, or a sensor. This friction isn’t just annoying; it actively shapes how teams build pipelines. When discovery is hard, engineers reinvent the wheel or stick to familiar tools, even when better options exist.
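
Part of what made this hard to discover is that the convention is mechanical but rarely spelled out. Official provider distributions on PyPI are named `apache-airflow-providers-<name>`, and their code lives under `airflow.providers.<name>` with the hyphens turned into dots (so `apache-airflow-providers-microsoft-azure` becomes `airflow.providers.microsoft.azure`). The sketch below encodes that convention using only the standard library. The function name is hypothetical, it is not a Registry feature, and it assumes every package follows the pattern.

```python
PREFIX = "apache-airflow-providers-"


def provider_namespace(distribution: str) -> str:
    """Map a provider's PyPI distribution name to its Python import namespace.

    Assumes the official-provider naming convention: drop the
    'apache-airflow-providers-' prefix and turn remaining hyphens into dots.
    """
    name = distribution.strip().lower().replace("_", "-")
    if not name.startswith(PREFIX):
        raise ValueError(f"not an Airflow provider distribution: {distribution!r}")
    suffix = name[len(PREFIX):]
    if not suffix:
        raise ValueError("missing provider name after the prefix")
    return "airflow.providers." + suffix.replace("-", ".")


if __name__ == "__main__":
    for dist in ("apache-airflow-providers-snowflake",
                 "apache-airflow-providers-microsoft-azure",
                 "apache-airflow-providers-cncf-kubernetes"):
        print(dist, "->", provider_namespace(dist))
```

Knowing the namespace still doesn’t tell you which module inside it holds the hook, operator, or sensor you need, which is the gap the Registry fills.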

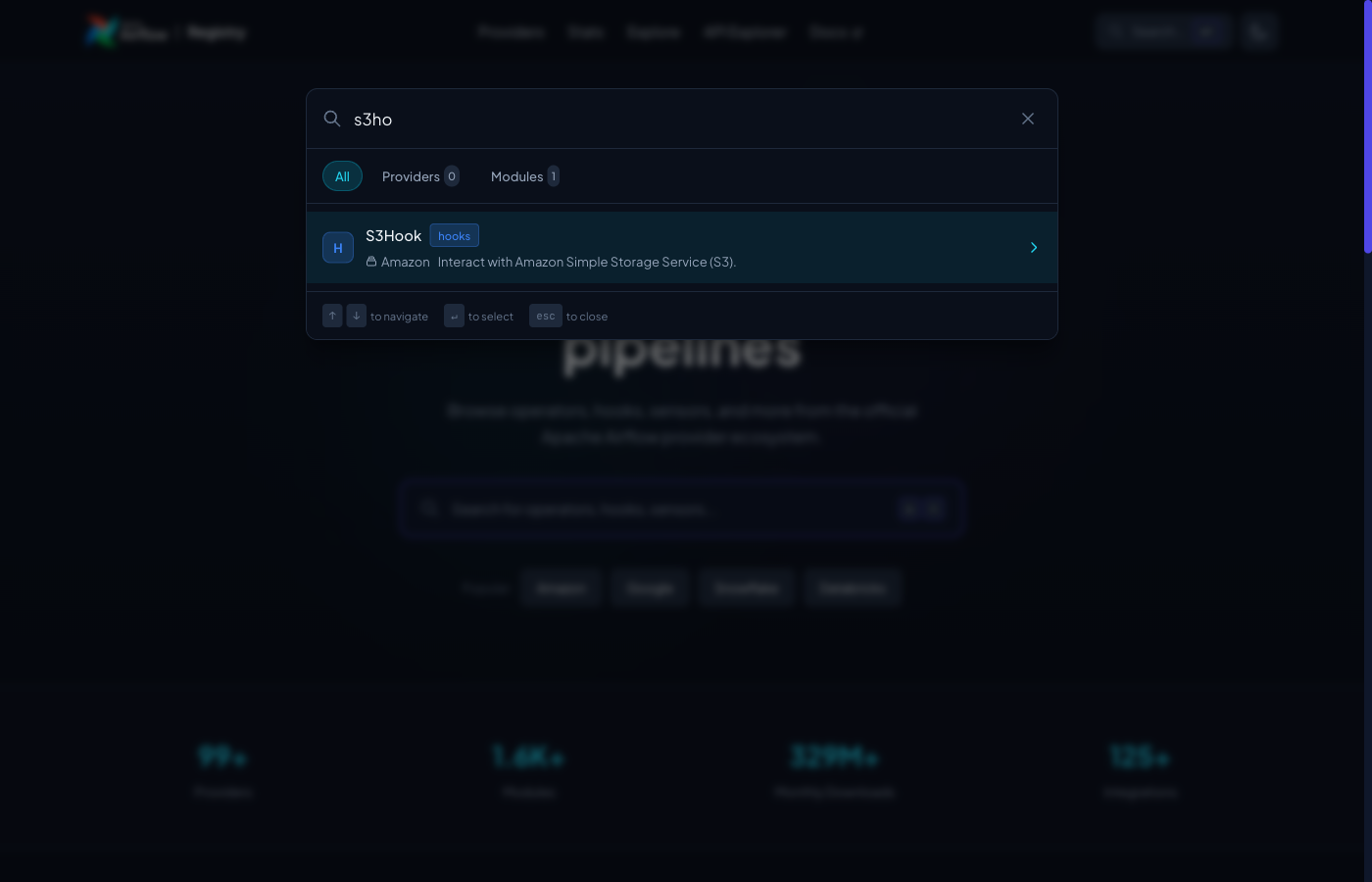

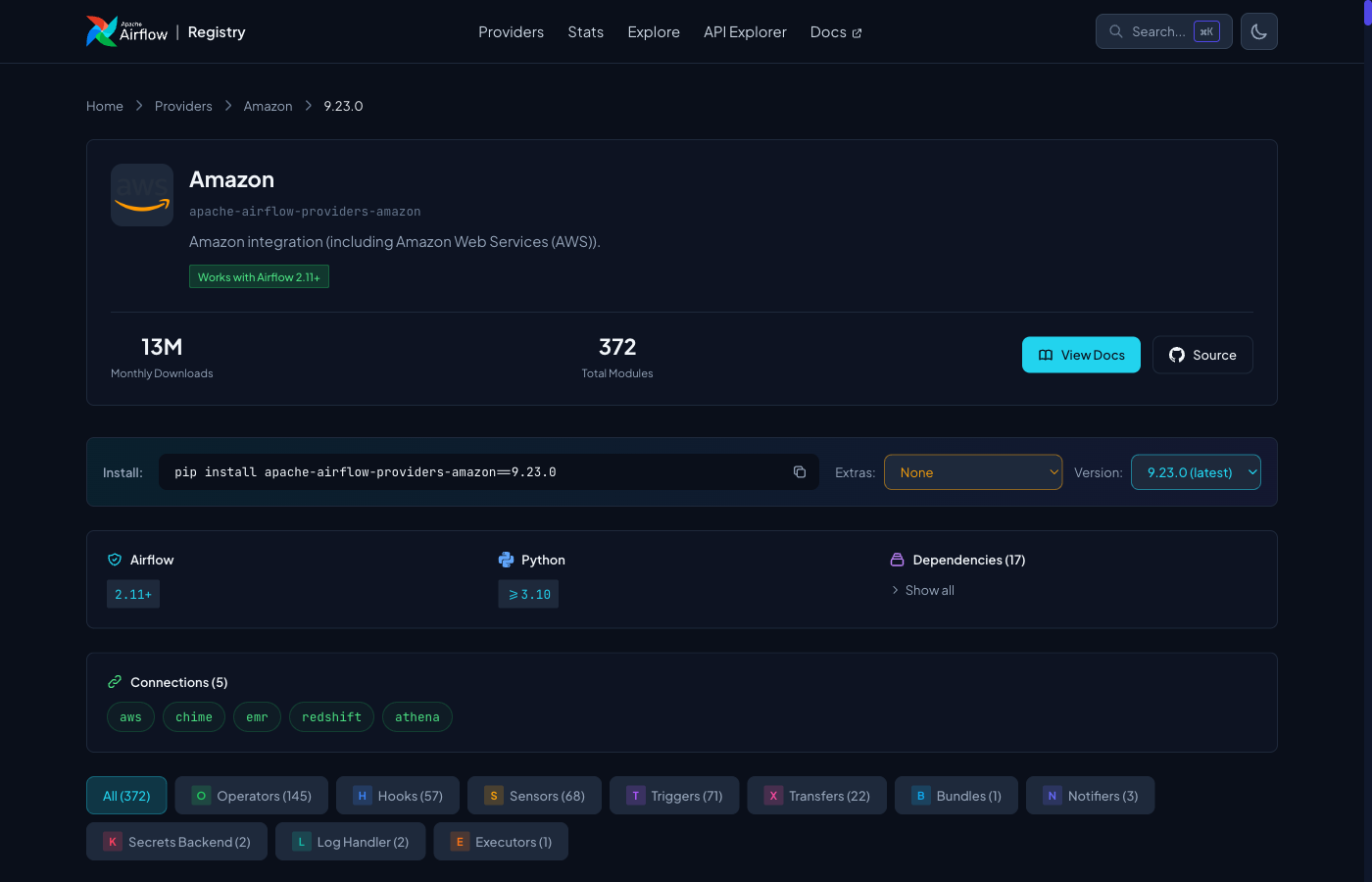

The new Registry doesn’t just list packages; it exposes the internal anatomy of each provider. Take the Amazon provider: it contains 372 modules across 10 distinct types, from operators to triggers to transfers. Previously, finding the specific Lambda invoke operator meant digging through source code or hoping your IDE’s autocomplete was generous. Now it’s a Cmd+K search away.

The Connection Builder: Where the Magic Actually Happens

The most practically useful feature isn’t the search; it’s the Connection Builder. If you’ve ever fought with Airflow’s connection URI encoding (and who hasn’t?), this tool feels like a peace offering. Click any connection type, fill in the fields, and it generates the connection in three formats: URI, JSON, and environment variables.

No more guessing whether your Snowflake password needs URL encoding or whether the port field accepts strings. The builder handles the translation, which means fewer 3 AM debugging sessions trying to figure out why your aws_default connection works in staging but fails in production because of a malformed query parameter.
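
To see why that translation matters, here is a minimal standard-library sketch that renders one connection in all three forms. It follows Airflow’s documented conventions (a `conn-type://login:password@host:port/schema?extra=...` URI, a JSON object with `conn_type`, `login`, `password`, `host`, `port`, `schema` and `extra` keys, and an `AIRFLOW_CONN_<CONN_ID>` environment variable), but it is a simplified approximation, not the Connection Builder’s code. The function name, connection id, host, and credentials are all hypothetical.

```python
import json
from urllib.parse import quote, urlencode


def connection_formats(conn_id, conn_type, host=None, login=None, password=None,
                       port=None, schema=None, extra=None):
    """Render one Airflow connection as (env var name, URI, JSON string).

    Simplified sketch: approximates Airflow's URI encoding rules and skips
    edge cases that Airflow's own Connection class handles.
    """
    # The URI scheme is the connection type, with underscores written as hyphens.
    uri = conn_type.lower().replace("_", "-") + "://"
    # Percent-encode credentials completely (safe=""), so '@', '/' and ':'
    # inside a password cannot be mistaken for URI delimiters.
    if login is not None or password is not None:
        uri += quote(login or "", safe="")
        if password is not None:
            uri += ":" + quote(password, safe="")
        uri += "@"
    if host:
        uri += quote(host, safe="")
    if port is not None:
        uri += f":{port}"
    if schema:
        uri += "/" + quote(schema, safe="")
    if extra:
        # Extra fields become query parameters; urlencode escapes them too.
        uri += ("?" if schema else "/?") + urlencode(extra)

    # JSON form: the same fields as one object, omitting unset values.
    fields = {"conn_type": conn_type, "host": host, "login": login,
              "password": password, "port": port, "schema": schema, "extra": extra}
    as_json = json.dumps({k: v for k, v in fields.items() if v is not None})

    # Environment-variable name: AIRFLOW_CONN_ plus the upper-cased conn_id.
    env_var = "AIRFLOW_CONN_" + conn_id.upper()
    return env_var, uri, as_json


env_var, uri, as_json = connection_formats(
    "my_postgres", "postgres", host="db.example.com", login="etl_user",
    password="p@ss/word:1", port=5432, schema="analytics",
    extra={"sslmode": "require"},
)
print(f"{env_var}='{uri}'")
print(f"{env_var}='{as_json}'")
```

Pasted in raw, the password `p@ss/word:1` would break the URI in three places; encoded, it becomes `p%40ss%2Fword%3A1`. Inside a real Airflow environment, building a `Connection` object and calling its `get_uri()` method is the more reliable route.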

Standardized connections also matter for DAG reliability: when connections are consistent and easy to configure, you reduce the environment-to-environment variability that makes DAGs brittle.

By the Numbers: The Ecosystem Exposed

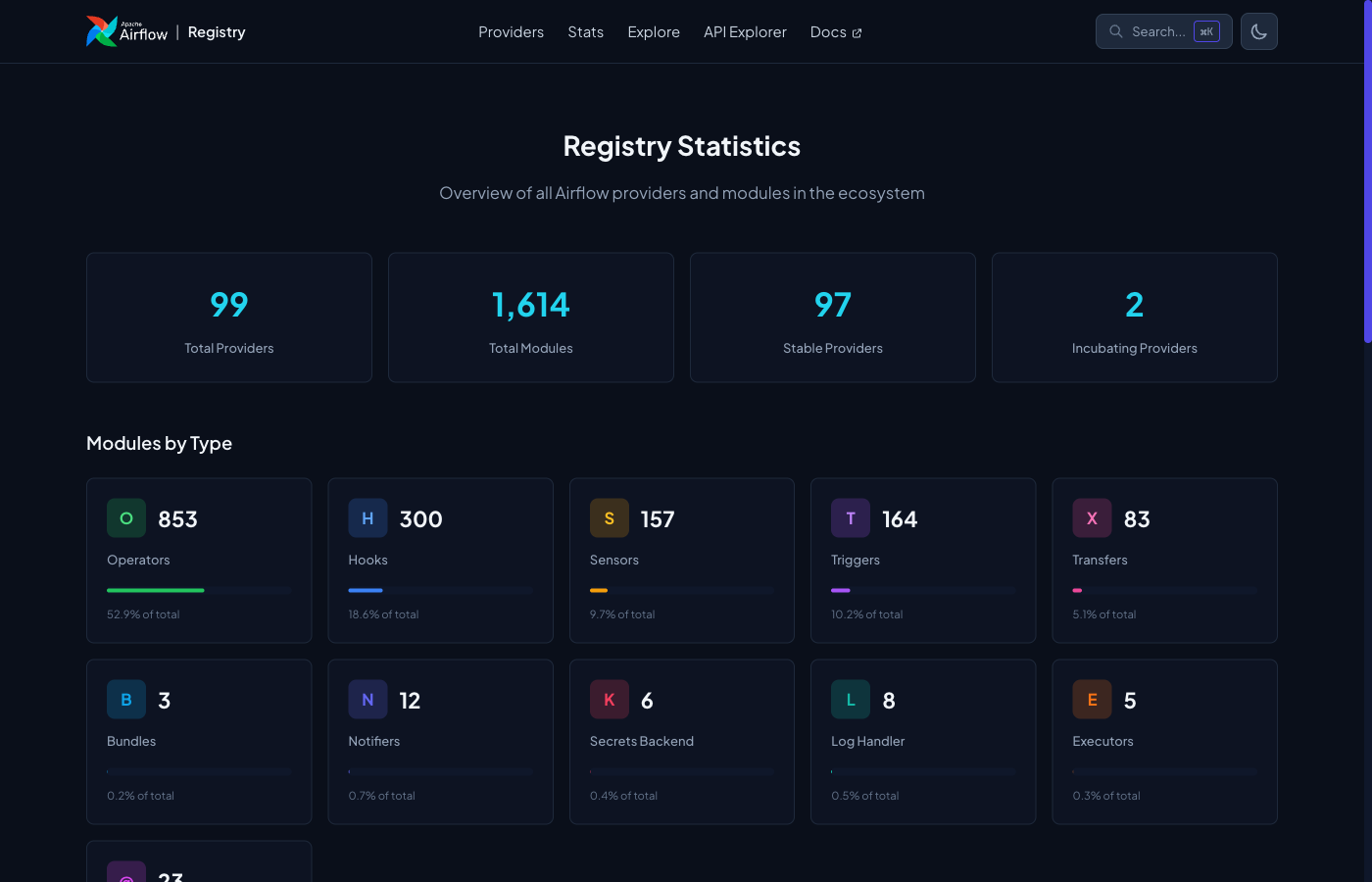

The Registry’s Stats page reveals the sheer scale of what we’re working with. The current breakdown shows:

- 848 operators

- 298 hooks

- 164 triggers

- 157 sensors

- 83 transfers

That’s not just a list; it’s a taxonomy of modern data engineering.

What’s striking is the distribution. Operators dominate (as expected), but the 164 triggers indicate how Airflow has evolved beyond simple cron replacements into event-driven orchestration. The 83 transfers show the platform’s increasing focus on data movement patterns, not just job scheduling.
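
To put the distribution in proportion, the snippet below divides each listed count by the 1,602-module total quoted earlier. The five listed types add up to 1,550 modules, so the remaining 52 belong to types this breakdown doesn’t name. Operators work out to roughly 53% of all modules, and triggers to about 10%.

```python
# Module counts by type, as listed on the Registry's Stats page.
counts = {"operators": 848, "hooks": 298, "triggers": 164,
          "sensors": 157, "transfers": 83}
TOTAL_MODULES = 1602  # total module count cited for the Registry

listed = sum(counts.values())
print(f"listed types: {listed} of {TOTAL_MODULES}; "
      f"other types: {TOTAL_MODULES - listed}")
for kind, n in counts.items():
    # Share of the full module catalog, formatted as a percentage.
    print(f"{kind:<10} {n:>4}  {n / TOTAL_MODULES:6.1%}")
```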

The Amazon provider detail page exemplifies this density: 372 modules organized by service (S3, Lambda, Glue, Step Functions, etc.). For teams evaluating open-source ETL alternatives, this level of integration depth demonstrates why Airflow remains the default choice for complex pipelines despite the rise of low-code competitors.

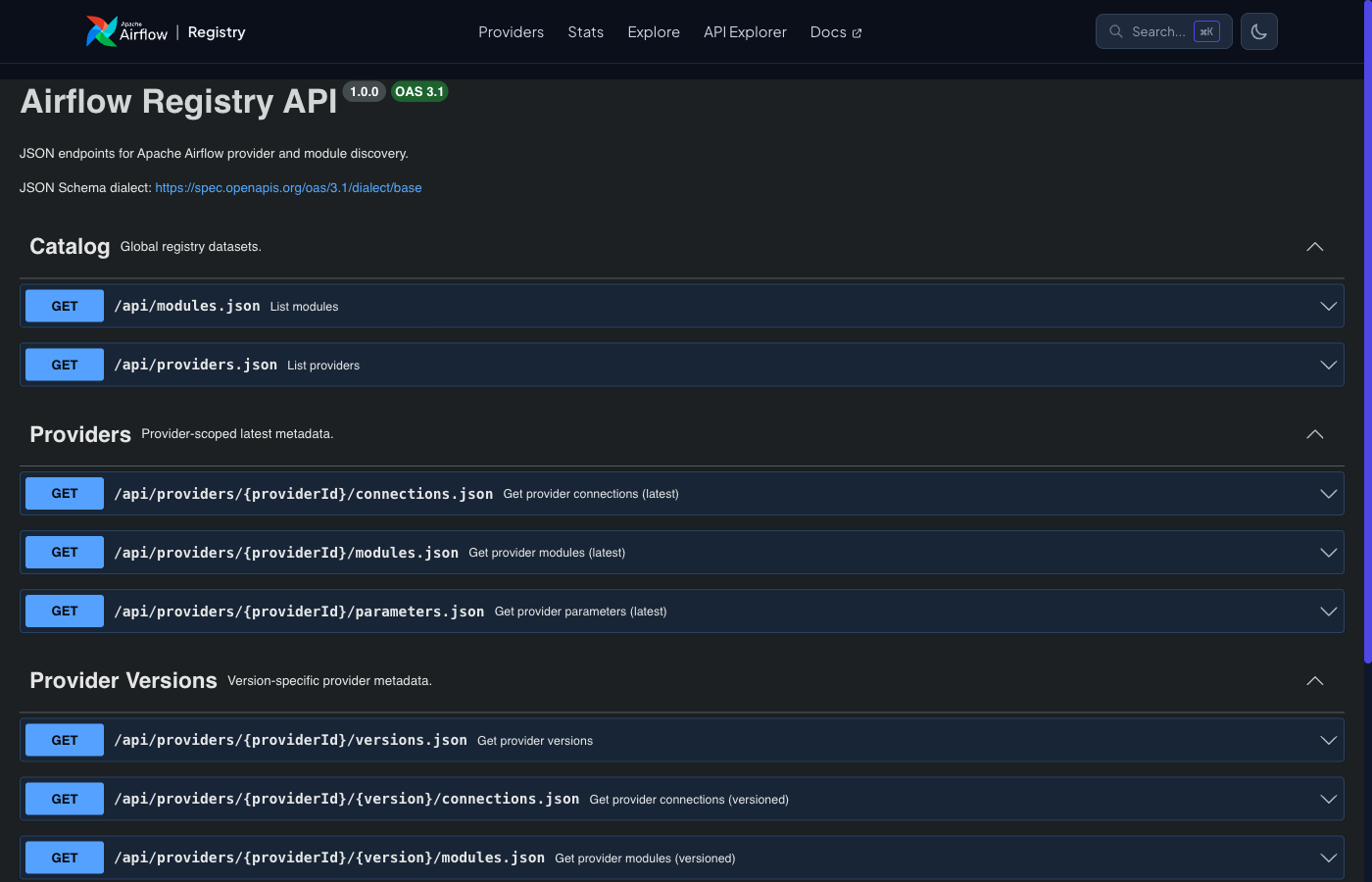

The API: Infrastructure as Code’s New Best Friend

Every piece of Registry data is available as structured JSON through a documented API. This isn’t just for building cool dashboards; it’s an infrastructure-as-code enabler. Want to auto-generate type-safe Python clients for your internal Airflow plugins? Want to build an IDE extension that suggests the correct operator based on your imports? The API makes it possible.

The API Explorer, built on OpenAPI 3.1, lets you browse endpoints interactively. For platform teams managing Airflow at scale, this means you can programmatically audit which providers your organization uses, track version compatibility across teams, and automate dependency updates.
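
An audit like that has two halves: what you run, and what the Registry says is current. The sketch below covers the first half with the standard library’s `importlib.metadata`, which can list installed `apache-airflow-providers-*` packages, and then compares them against a plain `{name: version}` mapping. The Registry API’s endpoints and JSON shape aren’t reproduced here, so building that mapping from the API is deliberately left out and should be checked against the API Explorer; the function names and example versions are hypothetical.

```python
from importlib.metadata import distributions

PROVIDER_PREFIX = "apache-airflow-providers-"


def installed_providers():
    """Return {distribution name: version} for Airflow providers in this environment."""
    found = {}
    for dist in distributions():
        meta = dist.metadata
        name = (meta.get("Name") if meta is not None else None) or ""
        name = name.lower().replace("_", "-")
        if name.startswith(PROVIDER_PREFIX):
            found[name] = dist.version
    return found


def version_drift(installed, latest):
    """List (name, installed, latest) for providers whose version differs.

    `latest` is a {distribution name: version} mapping; populating it from
    the Registry API is an assumption left to the reader.
    """
    drift = []
    for name, version in sorted(installed.items()):
        newest = latest.get(name)
        if newest is not None and newest != version:
            drift.append((name, version, newest))
    return drift


if __name__ == "__main__":
    local = installed_providers()
    print(f"{len(local)} provider packages installed")
    latest = {"apache-airflow-providers-amazon": "9.0.0"}  # hypothetical value
    for name, have, want in version_drift(local, latest):
        print(f"{name}: installed {have}, latest {want}")
```

Run across each team’s worker image, the output of `installed_providers()` is already a usable inventory, even before any Registry data is wired in.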

This programmatic access addresses a gap that has plagued Airflow adopters: the disconnect between the rich provider ecosystem and the tooling that supports it. When your IDE knows exactly which parameters S3ToSnowflakeOperator accepts because it’s querying the Registry API, the development experience finally matches the platform’s capabilities.
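
How the Registry extracts parameter data isn’t described here. As a local analogue, Python’s `inspect.signature` can turn an operator’s constructor into the kind of JSON record an IDE plugin could consume. `ExampleTransferOperator` below is a hypothetical stand-in so the snippet runs without Airflow installed. Real operators also accept inherited `BaseOperator` arguments through `**kwargs`, which this sketch skips.

```python
import inspect
import json


class ExampleTransferOperator:
    """Hypothetical operator used only for this illustration (not an Airflow class)."""

    def __init__(self, *, source_key, destination_table, conn_id="example_default",
                 **kwargs):
        self.source_key = source_key
        self.destination_table = destination_table
        self.conn_id = conn_id


def describe_parameters(cls):
    """Return the constructor's named parameters as JSON-serialisable records."""
    records = []
    for name, param in inspect.signature(cls).parameters.items():
        # Skip *args / **kwargs: they carry no per-parameter information.
        if param.kind in (inspect.Parameter.VAR_POSITIONAL,
                          inspect.Parameter.VAR_KEYWORD):
            continue
        required = param.default is inspect.Parameter.empty
        records.append({
            "name": name,
            "required": required,
            "default": None if required else repr(param.default),
        })
    return records


print(json.dumps(describe_parameters(ExampleTransferOperator), indent=2))
```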

The Astronomer Precedent: From Commercial to Community

The Registry’s launch carries some historical weight. For years, Astronomer (the commercial Airflow vendor) maintained the Astronomer Registry, a similar catalog that served as the de facto discovery layer. The Apache Airflow PMC explicitly acknowledges this debt, noting that the community-owned Registry was “directly shaped” by Astronomer’s work.

This transition from commercial to community infrastructure matters. It ensures that provider discovery remains neutral territory, not tied to a specific vendor’s roadmap or hosting decisions. For an open-source project of Airflow’s scale, centralizing this knowledge under the Apache umbrella prevents fragmentation. It also sets the stage for the next phase: third-party provider support.

The roadmap includes plans to list community-built providers alongside official ones. This could democratize Airflow extensions in the same way VS Code’s marketplace democratized editor plugins, if the curation and quality standards hold up.

What’s Still Missing (And Why It Matters)

For all its utility, the Registry is currently a reference tool, not a marketplace. You can’t install providers directly from the interface; you still copy the pip command and manage dependencies yourself. The connection builder generates configs but doesn’t validate them against live systems. And while the module listings are comprehensive, they lack usage examples: knowing that RedshiftDataOperator exists is different from knowing how to use it without tanking your database.
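
Since installation stays manual for now, it helps to make the copied pip command reproducible. Airflow’s installation documentation recommends installing against a published constraint file, one per Airflow and Python version, at `https://raw.githubusercontent.com/apache/airflow/constraints-<AIRFLOW_VERSION>/constraints-<PYTHON_VERSION>.txt`. The helper below is a sketch that assembles such a command, using provider extras (such as `amazon` or `snowflake`) that pull in the matching `apache-airflow-providers-*` packages. The function name and example versions are illustrative assumptions.

```python
import shlex
import sys


def airflow_install_command(airflow_version, extras, python_version=None):
    """Build a pip command installing Airflow plus provider extras, pinned by
    the community constraint file for this Airflow/Python combination."""
    if python_version is None:
        # Default to the interpreter running this script, e.g. "3.11".
        python_version = f"{sys.version_info.major}.{sys.version_info.minor}"
    constraint_url = (
        "https://raw.githubusercontent.com/apache/airflow/"
        f"constraints-{airflow_version}/constraints-{python_version}.txt"
    )
    extras_part = f"[{','.join(sorted(extras))}]" if extras else ""
    requirement = f"apache-airflow{extras_part}=={airflow_version}"
    # shlex.join quotes the bracketed requirement so shells don't glob it.
    return shlex.join(["pip", "install", requirement, "--constraint", constraint_url])


print(airflow_install_command("2.9.3", ["snowflake", "amazon"], "3.11"))
```

Constraint files pin provider versions as of that Airflow release, so upgrading an individual provider later is a separate, deliberate step.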

The planned “richer module pages” with full parameter docs and usage examples can’t come soon enough. Until then, the Registry solves the discovery problem but leaves the implementation details to your existing documentation habits.

For teams concerned with ETL performance, the Registry also highlights the overhead of Airflow’s modular architecture. With 1,602 modules available, the temptation to install every provider “just in case” can lead to bloated worker images and slower startup times. The Registry makes it easier to find what you need, but disciplined dependency management remains your responsibility.

The Bottom Line

The Airflow Registry doesn’t change what Airflow can do; it changes how quickly you can figure out what Airflow can do. In a platform with 98 providers and 1,602 modules, that speed matters. It reduces the cognitive load of pipeline development, standardizes connection management, and provides the API foundation for the next generation of Airflow tooling.

If you’re still managing Airflow connections by hand and hunting through GitHub for operator syntax, you’re working harder than necessary. The map is finally here. Use it.