VS Code’s ‘Local’ AI Lock-in: The Subscription Requirement Hiding in Plain Sight

Microsoft’s clever twist on the ‘local AI’ promise ties developers to a GitHub Copilot plan and an internet connection, even when the models are running on their own hardware.

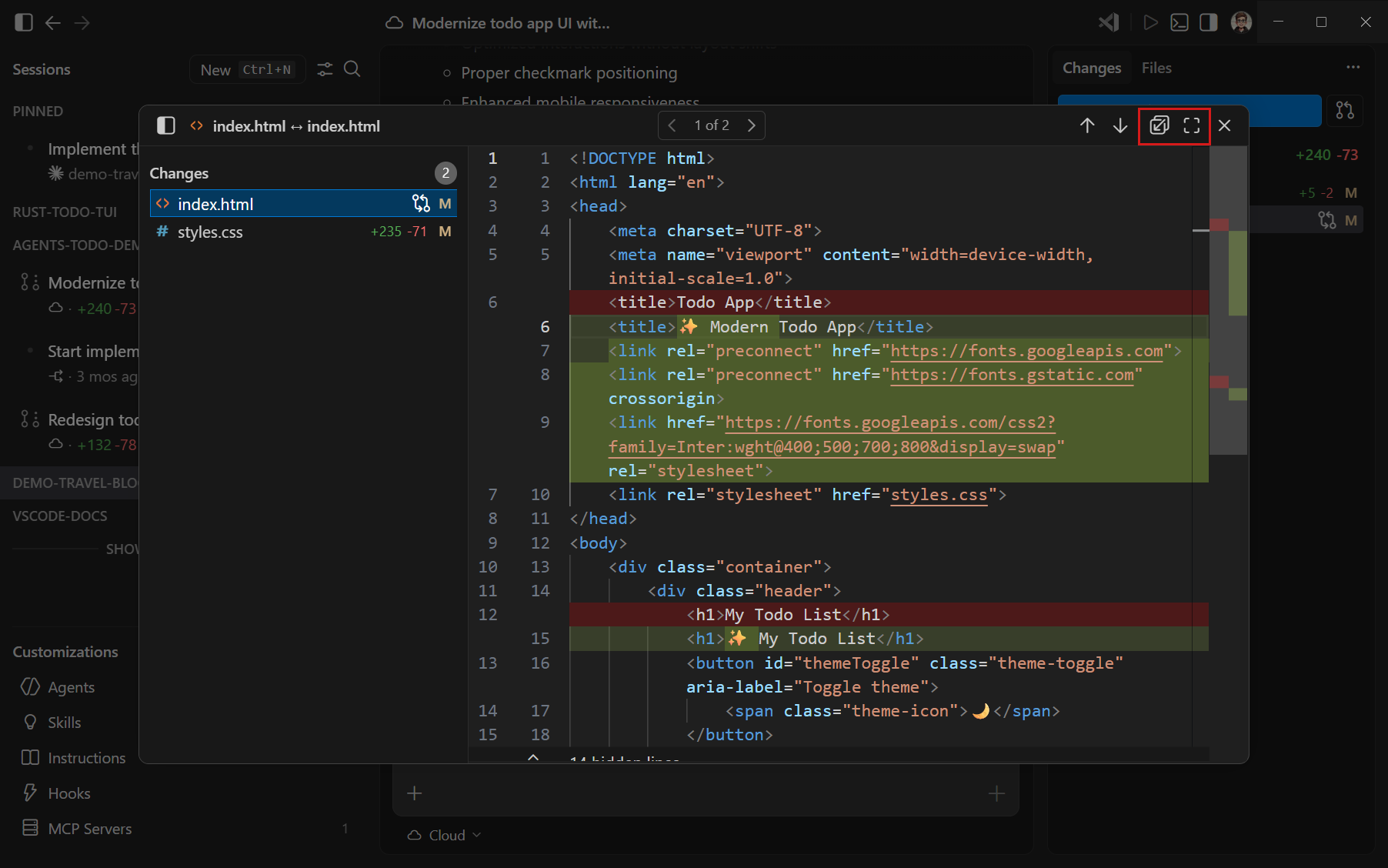

Let’s get the obvious out of the way: the marketing for VS Code’s latest agent features screams “local AI.” The interface shows you fancy model pickers suggesting you can run Ollama or LM Studio. Developer forums buzz about true offline, private coding. The documentation even includes a screenshot of a local model being selected from a running Ollama instance. It’s a beautiful illusion.

The documentation’s own fine print tells a different story: currently, using a locally hosted model still requires the Copilot service for some tasks. Therefore, your GitHub account needs to have access to a Copilot plan (for example, Copilot Free) and you need to be online. This requirement might change in a future release.

The FAQ repeats the point. Can I use a local model without a Copilot plan? No, currently you need to have access to a Copilot plan (for example, Copilot Free) to use a local model.

The Architecture of Dependence: Why Your Local Model Isn’t Local

The friction developers feel when trying to cut the cord isn’t a bug, it’s the business model. Let’s break down the technical specifics.

The Non-Negotiable Cloud Component

The official stance is that even with a local model selected, the Copilot service handles tasks such as sending embeddings, repository indexing, query refinement, intent detection, and side queries.

In practice, this isn’t just a few diagnostic pings. It’s the orchestration layer.
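To make the dependency concrete, here is a minimal sketch of that hybrid split. The task names come from the documentation's list quoted above; the `Environment` class, the function names, and the all-or-nothing pipeline rule are assumptions made for illustration, not a description of how VS Code is actually implemented.

```python
from dataclasses import dataclass

# Task names follow the documentation's list quoted above.
# Treating them as hard prerequisites is an assumption for illustration.
CLOUD_TASKS = (
    "embeddings",
    "repository indexing",
    "query refinement",
    "intent detection",
    "side queries",
)
LOCAL_TASKS = ("chat inference",)


@dataclass
class Environment:
    copilot_reachable: bool    # can the editor reach the Copilot service?
    local_model_running: bool  # is Ollama or LM Studio serving a model?


def blocked_tasks(env: Environment) -> list[str]:
    """Return the tasks that cannot run in the given environment."""
    blocked: list[str] = []
    if not env.copilot_reachable:
        blocked.extend(CLOUD_TASKS)
    if not env.local_model_running:
        blocked.extend(LOCAL_TASKS)
    return blocked


def can_serve_request(env: Environment) -> bool:
    """In this model, a request succeeds only if no task is blocked."""
    return not blocked_tasks(env)


# An air-gapped machine with a perfectly healthy local model.
air_gapped = Environment(copilot_reachable=False, local_model_running=True)
print(can_serve_request(air_gapped))  # False
print(blocked_tasks(air_gapped))
```

Under these assumptions, the local model being up is not enough: every cloud-side task is blocked the moment the Copilot service is unreachable.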

The Subscription Gatekeeper

Microsoft isn’t shy about the paywall, and it shows up even in third-party tooling. The OpenCode documentation notes that “some models might need a Pro+ subscription to use.”

This isn’t just about advanced cloud models. The subscription tier dictates which parts of the “local” experience you can even touch.

The Subscription Gatekeeper: From Pro to Pro+ to Max

A recent GitHub Blog post details the new individual plan lineup, effective June 1, 2026, with a stark pricing structure:

| Plan | Price | Base Credits | Flex Allotment | Total Included Usage |
|---|---|---|---|---|
| Pro | $10/month | $10 | $5 | $15 |
| Pro+ | $39/month | $39 | $31 | $70 |
| Max | $100/month | $100 | $100 | $200 |

These credits are consumed by “longer agent runs, multi-step work, and more capable models.” The more you want to use the agent features, even with a local model, the more you pay. The “flex allotment” is a variable buffer that “can change” based on “the economics of AI”, ensuring Microsoft retains control over the value proposition.
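The arithmetic behind the plan table above is worth checking, because it shows how much of each plan's included usage sits in the adjustable flex portion. The sketch below uses only the figures from the table; the dictionary layout and function names are illustrative.

```python
# Figures copied from the plan table above (USD per month).
PLANS = {
    "Pro": {"price": 10, "base": 10, "flex": 5},
    "Pro+": {"price": 39, "base": 39, "flex": 31},
    "Max": {"price": 100, "base": 100, "flex": 100},
}


def total_included(plan: dict) -> int:
    """Total included usage is base credits plus the flex allotment."""
    return plan["base"] + plan["flex"]


def flex_share(plan: dict) -> float:
    """Fraction of the included usage that comes from the flex allotment."""
    return plan["flex"] / total_included(plan)


for name, plan in PLANS.items():
    total = total_included(plan)
    print(f"{name}: ${total} included, {flex_share(plan):.0%} of it flex")
```

The totals match the table ($15, $70, $200), and the flex portion works out to roughly a third of included usage on Pro, about 44% on Pro+, and half on Max. In other words, the part of the allowance that “can change” grows with the tier.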

The UI, as captured in the official docs, presents this as seamless choice. The model picker shows local options like Ollama sitting right alongside cloud-hosted GPT-5 and Claude Sonnet. But the subscription requirement is the invisible gate that opens the door to all of it.

The Offline Myth: Internet Required, Even for “Local” Chat

This is where the contradiction becomes painfully concrete. The official FAQ asks: “Can I use a local model without an internet connection?”

Answer: Currently, using a local model requires access to the Copilot service and therefore requires you to be online. This requirement might change in a future release.

That “might change” is doing a lot of heavy lifting. It means that the “Agents window”, for all its local-first branding, is fundamentally a hybrid architecture. It cannot operate in airplane mode, on a secure network, or in any environment where GitHub’s servers are unreachable.

For developers who truly need air-gapped or highly secure development, the message is clear: look elsewhere. Alternatives like Continue or Cline with Ollama and a local embedding model offer a genuine fully-local path, as noted in developer discussions.

The Practical Ecosystem vs. The Locked-in Garden

The Integrated Experience Pros

- Streamlined configuration of local models

- Clean model picker UI

- Bonus for developers already paying for Copilot Pro/Pro+

The Subscription Reality

- Local functionality is framed as a value-add to the subscription

- The goal is not to empower developers with local AI

- The goal is to enrich the Copilot ecosystem and justify its pricing

Contrast this with the genuinely local-first approach detailed in tutorials for building a “Fully Local AI Workspace Inside VS Code.” These guides detail using LM Studio as a local OpenAI-compatible API server (http://127.0.0.1:1234/v1) and connecting extensions like Cline directly to it with a placeholder API key.

The workflow offers private local inference, offline development, and zero API costs. It’s clunkier, involves manual configuration, and lacks the polished integration. But it is actually local. It answers to no one but the developer running it.
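For a sense of how little is involved in the direct route, here is a minimal sketch of talking to that LM Studio endpoint using only the Python standard library. The base URL is the one quoted above and `/chat/completions` is the standard OpenAI-style route; the model name and the placeholder key value are hypothetical and should be replaced with whatever your local server reports or expects.

```python
import json
import urllib.request

BASE_URL = "http://127.0.0.1:1234/v1"  # LM Studio address quoted above
PLACEHOLDER_KEY = "not-needed"         # hypothetical placeholder API key
MODEL = "local-model"                  # hypothetical; use your server's model name


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL,
                       api_key=PLACEHOLDER_KEY):
    """Build a POST request for the OpenAI-style chat completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask_local_model(prompt):
    """Send the prompt to the local server and return the reply text."""
    request = build_chat_request(prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    # OpenAI-style responses carry the reply in choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

Calling `ask_local_model("Explain this stack trace")` needs a local server listening on that port, but nothing else: no account, no plan, and no traffic leaving the machine.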

The Cold Hard Business Logic

From Microsoft’s perspective, this is rational. GitHub Copilot is a multi-billion-dollar business. Giving away the keys to a fully functional, offline-capable AI development environment would cannibalize that revenue.

Key Insights

- Agent features represent a compute-intensive frontier of AI coding that needs metering

- Bring Your Own Language Model Key (BYOK) creates the perception of openness while keeping the subscription lifeline in place

- Fine print warns: “There is no guarantee that responsible AI filtering is applied” with BYOK

- Cloud-first architecture limitations become monetization opportunities across the industry

The vendor provides the essential proprietary glue (the orchestration, the security layer, the semantic search) and rents access to it, regardless of where the heavy-lifting inference happens.

What This Means for the Future of Developer Tools

Ecosystem Pressure

If the dominant code editor successfully makes advanced AI agent features a premium, subscription-locked experience, it pressures the entire ecosystem. Will JetBrains follow suit? Will other toolchains build similar hybrid locks?

Adoption Timelines

If the most accessible toolchain places agentic features behind a paywall, it could slow experimentation and innovation at the very moment the technology is becoming viable.

What Should You Do?

- Understand the Trade-off: If you want the smoothest, most integrated “local-ish” AI experience and are already invested in the Copilot ecosystem, VS Code’s agents are a powerful addition. Just know you’re renting the bridge to your own island.

- Demand True Offline Mode: Use the feedback channels. The “might change in a future release” line is a pressure point. If enough developers signal that true offline capability is a deal-breaker, the calculus might shift.

- Explore the Alternatives: If sovereignty, cost control, or offline work is critical, invest time in the open, local stack. The learning curve is steeper, but the payoff is complete control.

The promise of local AI in VS Code is real, but it’s a promise currently held hostage by a subscription. You can have the local model, but you must pay the cloud toll. Whether this is a reasonable toll for a maintained bridge or an artificial barrier to genuine developer freedom depends entirely on your priorities, your wallet, and your tolerance for vendor lock-in in the age of intelligent tools.