The Anti-Hypertrophic Design: Why Minimalism Wins Over Microservices

Achieving balance requires understanding where complexity truly pays off—and where it drains resources.

The software industry’s obsession with microservices has reached a tipping point. Architectural complexity was once a badge of honor, but a growing viral sentiment, crystallized by essays like “Have a Fucking Website”, now champions radical simplicity. The numbers are stark: one analysis put the cost of a microservices deployment at $80,000 per month versus $4,000 for an equivalent monolith, and a network call between services can be up to 1,000,000x slower than an in-process call.

The $80K Reality Check

Let’s start with the numbers that make CFOs weep. A recent analysis revealed that running the same feature set cost $80,000 per month in a microservices architecture versus $4,000 in a monolith, a 20x difference. The operational overhead isn’t just infrastructure; it’s the human cost of distributed complexity. Debugging a monolith takes five minutes: check logs, find error, fix. In a microservices environment? Two hours of tracing requests across twelve services to find which retry caused the cascade failure and why the circuit breaker opened.

This isn’t theoretical. The latency penalties alone are staggering. An in-process function call in a monolith executes in nanoseconds, while a network call between microservices takes milliseconds: up to a 1,000,000x slowdown. Cross-AZ calls hit 10-30ms, and cross-region calls balloon to 50-200ms. When one request traverses five microservices in sequence, you’re looking at 50-100ms of overhead before any actual business logic executes. For low-latency requirements, this tax is often unacceptable.
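
The arithmetic behind those figures can be sketched in a few lines. This is a rough illustration, not a benchmark: the 10-20ms per-hop range is an assumption chosen because it reproduces the 50-100ms figure for five sequential calls, and `sequentialOverhead` is a hypothetical helper name.

```typescript
// Rough latency arithmetic for a request that crosses several services.
// All per-hop figures are illustrative assumptions, not measurements.

interface LatencyRange {
  minMs: number;
  maxMs: number;
}

// Total network overhead for `hops` sequential calls, each costing `perHop`.
function sequentialOverhead(hops: number, perHop: LatencyRange): LatencyRange {
  return { minMs: hops * perHop.minMs, maxMs: hops * perHop.maxMs };
}

// A 1 ms network call versus a 1 ns in-process call, both expressed in ns.
const NS_PER_MS = 1_000_000;
const networkCallNs = 1 * NS_PER_MS;
const inProcessCallNs = 1;
const slowdown = networkCallNs / inProcessCallNs;

// Assumed 10-20 ms per hop across five services called one after another.
const fiveHops = sequentialOverhead(5, { minMs: 10, maxMs: 20 });
```

The overhead only adds up this way when the calls are sequential; calls that fan out in parallel cost roughly as much as the slowest hop.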

The Viral Revolt Against Complexity

The technical backlash against architectural bloat mirrors a broader cultural shift. The viral essay “Have a Fucking Website” captured the zeitgeist perfectly: businesses and creators have abandoned the simple, owned platform (the website) for the complex, rented walled garden (social media). The parallel to backend architecture is uncanny. Teams abandoned the “boring” monolith for the glittering promise of microservices, only to find themselves locked into operational nightmares they don’t control.

The sentiment resonated because it exposed a fundamental truth: complexity is a liability, not an asset. When you don’t own your infrastructure, when you’re at the mercy of Kubernetes whims, service mesh configurations, and distributed tracing vendors, you’ve traded one form of platform dependency for another. The “revolutionary” microservices architecture mostly revolutionized paperwork, creating a generation of developers who spend more time configuring Istio than writing business logic.

When Microservices Become Shackles

The metaphor isn’t subtle, but it’s accurate. Splitting your monolith into fourteen services doesn’t clean up messy code; it distributes the mess across the network and makes it someone else’s 3 AM problem. Consider the operational staffing ratios: a mature microservices organization requires 1 SRE for every 10-15 services, while a monolith needs just 1-2 operations engineers for the entire application. At 50 services, observability costs alone range from $50,000 to $500,000 annually.
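
To make those ratios concrete, here is a back-of-the-envelope sketch for a 50-service estate. It uses only the figures quoted above; the helper names are hypothetical, and rounding headcount up is an assumption.

```typescript
// Back-of-the-envelope staffing and observability arithmetic.
// Ratios come from the paragraph above; rounding up is an assumption.

function sreHeadcount(services: number, servicesPerSre: number): number {
  return Math.ceil(services / servicesPerSre);
}

const serviceCount = 50;

// 1 SRE per 15 services (best case) and per 10 services (worst case).
const sreLow = sreHeadcount(serviceCount, 15);
const sreHigh = sreHeadcount(serviceCount, 10);

// Annual observability spend of $50,000 to $500,000, spread per service.
const observabilityPerServiceLow = 50_000 / serviceCount;
const observabilityPerServiceHigh = 500_000 / serviceCount;
```

Under these assumptions, 50 services imply four to five SREs and $1,000 to $10,000 of observability spend per service per year.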

The debugging complexity is where the shackles really tighten. A simple SQL transaction in a monolith:

    BEGIN;
    UPDATE inventory SET stock = stock - 1 WHERE product_id = 42;
    INSERT INTO orders (user_id, product_id) VALUES (101, 42);
    COMMIT;

Becomes a distributed saga in microservices:

1. Order Service: create order, status=PENDING
2. Publish: OrderCreated
3. Inventory Service: reserve stock
4. Publish: StockReserved
5. Order Service: status=CONFIRMED

Step 3 fails? Now you’re debugging compensating transactions, hoping the message queue doesn’t drop events, and praying the Saga pattern doesn’t bite you at 2 AM. One atomic commit transformed into five async steps, two compensating transactions, and an event bus you now maintain forever.
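
A minimal in-memory sketch of that saga shows where the compensating transaction comes in. Everything here is a hypothetical stand-in: the functions play the role of the Order and Inventory services, the `events` array stands in for the event bus, and the flow is synchronous for brevity, whereas a real saga would be asynchronous and would have to survive lost or duplicated messages.

```typescript
// A minimal in-memory saga sketch. All names are hypothetical stand-ins
// for real services; a production saga would be asynchronous.

type OrderStatus = "PENDING" | "CONFIRMED" | "CANCELLED";

interface Order {
  id: number;
  productId: number;
  status: OrderStatus;
}

const stock: Record<number, number> = { 42: 0 }; // product 42 is out of stock
const events: string[] = []; // stands in for the event bus

// Order Service: create the order as PENDING and publish OrderCreated.
function createOrder(id: number, productId: number): Order {
  events.push("OrderCreated");
  return { id, productId, status: "PENDING" };
}

// Inventory Service: reserve stock, or fail if none is left.
function reserveStock(productId: number): void {
  const available = stock[productId] ?? 0;
  if (available < 1) throw new Error("out of stock");
  stock[productId] = available - 1;
  events.push("StockReserved");
}

// Compensating transaction: undo the order when the reservation fails.
function cancelOrder(order: Order): void {
  order.status = "CANCELLED";
  events.push("OrderCancelled");
}

function placeOrder(id: number, productId: number): Order {
  const order = createOrder(id, productId);
  try {
    reserveStock(productId);
    order.status = "CONFIRMED";
  } catch {
    cancelOrder(order);
  }
  return order;
}

const failedOrder = placeOrder(1, 42);
```

With no stock available, the reservation fails and the order ends up `CANCELLED`. In the monolith's transaction, the same failure would simply roll back, with no extra code to write or maintain.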

Airbnb’s Three-Phase Retreat

If you need proof that complexity doesn’t scale, look at Airbnb’s architectural evolution. Their journey is a masterclass in anti-hypertrophic design: knowing when to simplify.

| Airbnb Evolution Stage | Period | Primary Architecture | Core Challenge Solved | New Challenge Introduced |
|---|---|---|---|---|
| The Monorail | 2008-2017 | Ruby on Rails Monolith | Initial speed and agility | Deployment and team bottlenecks |
| SOA Transition | 2017-2020 | Microservices | Independent scaling and team autonomy | Service sprawl and dependency complexity |
| Service Blocks | 2020-Present | Hybrid Micro + Macroservices | API unification and reduced cognitive load | Balancing abstraction with service granularity |

After splitting into thousands of microservices, Airbnb hit “service sprawl.” Small changes required updates to multiple fine-grained services. The cognitive load became unbearable. Their solution? Service Blocks, essentially a return to larger, coherent units that group related microservices. They designed like a monolith again, even while implementing as distributed services.

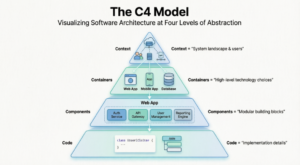

This aligns with the emerging consensus: design like a monolith, implement as microservices. The monolith’s strength was never the single codebase; it was the coherent design. Microservices’ strength was never the distributed APIs; it was team independence. You can have both, but only if you separate the design layer from the implementation layer.

The Modular Monolith: The Actual Third Way

There’s a false dichotomy that teams face: big ball of mud versus 30 microservices. The modular monolith offers a third path that respects structural boundaries without network overhead.

Keep one deployable unit. Draw real boundaries inside it:

    /src
      /modules
        /payments
          payments.controller.ts
          payments.service.ts
          payments.repository.ts
        /inventory
          inventory.controller.ts
          inventory.service.ts
        /notifications
          notifications.service.ts

Hard rule: modules talk through exported interfaces only. No sneaking into another module’s utils folder. Same database. Same deploy. Clean boundaries. When you eventually need to pull payments into its own service (if ever), the boundary is already there. The extraction becomes boring work instead of archaeology.
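
One way to express the hard rule in TypeScript is to have each module export only an interface and a factory, keeping everything else private. The sketch below squeezes two modules into one file for brevity; `PaymentsApi`, `InventoryApi`, and the factory functions are hypothetical names, not part of any particular codebase.

```typescript
// Sketch of module boundaries inside one deployable unit.
// All names are hypothetical; in a real repo each module lives in its own folder.

// The only thing the payments module would export.
interface PaymentsApi {
  charge(userId: number, amountCents: number): { receiptId: string };
}

function createPaymentsModule(): PaymentsApi {
  // Internal state and helpers stay private to the module.
  let nextReceipt = 1;
  const formatReceiptId = (userId: number, n: number): string =>
    `rcpt-${userId}-${n}`;

  return {
    charge(userId, amountCents) {
      if (amountCents <= 0) throw new Error("amount must be positive");
      return { receiptId: formatReceiptId(userId, nextReceipt++) };
    },
  };
}

// Inventory depends on the PaymentsApi interface, never on payments internals.
interface InventoryApi {
  purchase(userId: number, productId: number, priceCents: number): string;
}

function createInventoryModule(payments: PaymentsApi): InventoryApi {
  const stock: Record<number, number> = { 42: 3 };

  return {
    purchase(userId, productId, priceCents) {
      const available = stock[productId] ?? 0;
      if (available < 1) throw new Error("out of stock");
      // An in-process call: no network hop, no serialization.
      const { receiptId } = payments.charge(userId, priceCents);
      stock[productId] = available - 1;
      return receiptId;
    },
  };
}

const inventory = createInventoryModule(createPaymentsModule());
const receipt = inventory.purchase(101, 42, 1999);
```

Because inventory only ever sees `PaymentsApi`, a later extraction could swap in an implementation that calls a remote service without touching the inventory code.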

Because modules call each other in-process, this approach avoids the “latency tax” and reduces the operational risks that arise from architectural inconsistency, while keeping the code organization benefits that drive teams toward microservices in the first place.

When You Actually Need to Split

Microservices aren’t evil; they’re just expensive. And like any expensive tool, you should only use them when the problem demands it. The decision framework is clear:

Split only when:

1. Domains truly don’t touch each other (Payments and Content have nothing to say to each other at the data layer)

2. Scaling needs are completely different (A login endpoint needing 256MB RAM versus a video transcoder needing 8GB)

3. You’ve hit 150+ engineers (Conway’s Law wins eventually; past this point, synchronized releases become impossible)

4. You have distributed tracing and centralized logs running (Without Jaeger, Tempo, or equivalent, you’re debugging blind)
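
The checklist above can be sketched as a small function. This version takes the strictest reading and requires all four criteria at once, which is an assumption about how the list is meant to be applied; the `SplitSignals` fields and function names are hypothetical.

```typescript
// Sketch of the split checklist as code. Requiring all four criteria
// together is an assumption (the strictest reading of the list).

interface SplitSignals {
  domainsShareData: boolean;         // criterion 1: domains must not touch
  scalingProfilesDiffer: boolean;    // criterion 2: very different scaling needs
  engineerCount: number;             // criterion 3: 150+ engineers
  hasTracingAndCentralLogs: boolean; // criterion 4: observability in place
}

const ENGINEER_THRESHOLD = 150;

// Returns a human-readable reason for every criterion that is not yet met.
function unmetCriteria(s: SplitSignals): string[] {
  const unmet: string[] = [];
  if (s.domainsShareData) unmet.push("domains still share data");
  if (!s.scalingProfilesDiffer) unmet.push("scaling needs are similar");
  if (s.engineerCount < ENGINEER_THRESHOLD) {
    unmet.push(`fewer than ${ENGINEER_THRESHOLD} engineers`);
  }
  if (!s.hasTracingAndCentralLogs) {
    unmet.push("no distributed tracing or centralized logs");
  }
  return unmet;
}

function shouldSplit(s: SplitSignals): boolean {
  return unmetCriteria(s).length === 0;
}

// Two illustrative teams with made-up numbers.
const smallTeam: SplitSignals = {
  domainsShareData: true,
  scalingProfilesDiffer: false,
  engineerCount: 12,
  hasTracingAndCentralLogs: false,
};
const largeOrg: SplitSignals = {
  domainsShareData: false,
  scalingProfilesDiffer: true,
  engineerCount: 200,
  hasTracingAndCentralLogs: true,
};
```

Returning the list of unmet criteria rather than a bare boolean makes the answer easier to argue with in a design review.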

Below these thresholds, microservices are premature optimization. As one engineer noted: “Netflix got to microservices after years of scale, with dedicated platform teams and hundreds of engineers. That’s not where they started. That’s where the pain eventually pushed them.”

The AI Complication

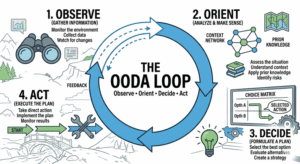

Here’s where the narrative shifts. Autonomous AI agents designing complex systems are entering the chat. If AI agents can deploy microservices in production at machine speed, does the complexity tax change?

The consensus is shifting toward “design-first” approaches in which AI-automated generation of microservices architectures handles the boilerplate. But even LLM-driven changes to system design methodology haven’t repealed the underlying physics: a network call can still be up to 1,000,000x slower than an in-process call, and semantic drift in AI-generated code can turn a 50-service deployment into an unmaintainable nightmare faster than ever.

AI might manage the complexity, but it doesn’t eliminate it. The anti-hypertrophic principle remains: start simple, add complexity only when forced, and never borrow solutions to problems you don’t have yet.

The Anti-Hypertrophic Manifesto

The viral sentiment is correct. Whether it’s websites or web services, ownership and simplicity trump complexity and rented platforms. The anti-hypertrophic design philosophy isn’t about being a Luddite; it’s about being honest about trade-offs.

Start with a modular monolith. Enforce strict boundaries. When you hit 150 engineers and genuinely different scaling requirements, split using the Strangler Fig pattern, never a big bang rewrite. Maintain a unified API design even when implementing as microservices. And above all, remember that every service you create is a service you have to wake up for at 3 AM.
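
For the Strangler Fig step, the core idea is a routing facade that sends migrated paths to the new service and everything else to the monolith. The sketch below is a simplified assumption of how that could look: the handlers are plain functions standing in for real HTTP backends, and the `/payments` prefix is only an example.

```typescript
// Simplified Strangler Fig routing facade. Handlers are stand-ins for
// real backends; the migrated prefix list is a hypothetical example.

type Handler = (path: string) => string;

const monolith: Handler = (path) => `monolith:${path}`;
const paymentsService: Handler = (path) => `payments-service:${path}`;

// Prefixes migrated so far. The list grows one route at a time,
// and any entry can be removed to roll traffic back to the monolith.
const migratedPrefixes: string[] = ["/payments"];

function isMigrated(path: string): boolean {
  return migratedPrefixes.some(
    (prefix) => path === prefix || path.indexOf(prefix + "/") === 0
  );
}

function route(path: string): string {
  return isMigrated(path) ? paymentsService(path) : monolith(path);
}

const migratedResponse = route("/payments/charge");
const legacyResponse = route("/inventory/42");
```

Callers keep hitting the same facade throughout the migration, which is what lets the split happen incrementally instead of as a big bang rewrite.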

The internet was built on simple, linked websites. Your architecture should follow suit. Have a fucking monolith, until you genuinely can’t.