The trust we place in the mechanics of modern software delivery is absolute and largely unexamined. A git push triggers a pipeline. A pipeline runs tests and publishes packages. We assume the binaries that emerge are pure expressions of the source code we wrote. The May 2026 compromise of 42 TanStack packages shatters that assumption, exposing a blueprint for how our own automation can be weaponized against us. This wasn't just a hack; it was a surgical demonstration that in a supply chain attack, the most dangerous vulnerability is a well-configured CI/CD workflow.

The Anatomy of a Heist: Three Vulnerabilities, One Pipeline

The attack, dubbed "Mini Shai-Hulud", was a masterclass in lateral movement. It didn't brute-force npm credentials or phish a maintainer's password. It exploited three distinct, well-documented weaknesses in GitHub Actions workflows, chaining them together to achieve a devastating result.

The entry point was a GitHub Actions workflow named bundle-size.yml. It used the pull_request_target event, which runs in the base repository's context with elevated permissions, to benchmark PRs from forks. The workflow's author attempted a "trust split", keeping a comment-pr job separate, but missed a key detail: the actions/cache@v5 step used by the benchmark job saves to a cache scoped to the main branch. When an attacker opened a PR (#7378) and force-pushed a malicious commit, the workflow checked out the fork's code and executed it, poisoning the shared pnpm store cache.

The attacker's payload, a 30,000-line obfuscated vite_setup.mjs file, was designed to do one thing: corrupt the pnpm store cache with a key (Linux-pnpm-store-6f9233a50def742c09fde54f56553d6b449a535adf87d4083690539f49ae4da11) that the main branch's release.yml workflow would later restore. This is a known attack pattern documented by Adnan Khan in 2024. The poisoned cache persisted even after the attacker closed the PR, lying in wait for the next legitimate push to main.

The release.yml workflow, triggered by a legitimate merge, restored the poisoned cache. When the build step ran, the malicious code now had access to the runner. Crucially, release.yml had id-token: write permissions for npm's trusted publishing. The attacker didn't need to steal a long-lived npm token. Instead, they used a verbatim Python script (previously seen in the tj-actions/changed-files compromise) to scrape the GitHub Actions Runner.Worker process memory via /proc/<pid>/mem, extracting the freshly minted OIDC token. With this token, they authenticated directly to registry.npmjs.org and published 84 malicious versions across 42 packages from the project's own, trusted CI/CD pipeline.

The malware injected into the packages was a credential-harvesting worm. It scanned for AWS, GCP, Kubernetes, and GitHub credentials, then used the stolen npm OIDC token to publish malicious versions of every other package the compromised identity maintained. This self-propagation turned a single-point breach into a cascading ecosystem threat.
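The first link in this chain can be spotted statically. What follows is a minimal Python sketch, not a complete auditor: it matches raw workflow text instead of parsing YAML, and the function name `audit_workflow`, the regular expressions, and the sample workflow are all hypothetical illustrations rather than TanStack's actual file.

```python
import re

# Heuristics on raw workflow text; a real audit would parse the YAML properly.
TRIGGER = re.compile(
    r"^\s*pull_request_target\s*:|^\s*on\s*:.*\bpull_request_target\b",
    re.MULTILINE,
)
FORK_REF = re.compile(r"github\.event\.pull_request\.head\.(sha|ref)|github\.head_ref")
CACHE = re.compile(r"uses:\s*actions/cache(/save|/restore)?@")


def audit_workflow(text: str) -> list[str]:
    """Return human-readable findings for the contents of one workflow file."""
    findings: list[str] = []
    if not TRIGGER.search(text):
        return findings  # not triggered by pull_request_target; out of scope here
    findings.append("uses pull_request_target (runs with base-repository privileges)")
    if FORK_REF.search(text):
        findings.append("checks out the pull request head (untrusted code)")
    if CACHE.search(text):
        findings.append("uses actions/cache (may write to a cache other workflows restore)")
    return findings


# Hypothetical workflow combining all three ingredients described above.
SAMPLE = """\
on:
  pull_request_target:
jobs:
  benchmark:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - uses: actions/cache@v4
        with:
          path: ~/.pnpm-store
          key: pnpm-store-example
      - run: pnpm install && pnpm run benchmark
"""

if __name__ == "__main__":
    for finding in audit_workflow(SAMPLE):
        print("-", finding)
```

Any workflow that returns all three findings deserves a manual review. A text match like this can miss indirect checkouts or flag harmless ones, so treat it as a triage aid rather than a verdict.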

The Uncomfortable Reality: Provenance is a Record, Not a Guarantee

Here's the twist that should keep every maintainer awake: the malicious packages carried valid SLSA Build Level 3 provenance attestations. They were cryptographically signed by npm's Sigstore infrastructure and tied to the legitimate TanStack/router release workflow. The provenance statement proved the packages came from the correct pipeline, but it said nothing about the pipeline's integrity at the moment of execution.

    // SLSA Provenance from compromised @tanstack/router-generator@1.166.48
    {
      "predicateType": "https://slsa.dev/provenance/v1",
      "predicate": {
        "buildDefinition": {
          "buildType": "https://slsa-framework.github.io/github-actions-buildtypes/workflow/v1",
          "externalParameters": {
            "workflow": {
              "ref": "refs/heads/main",
              "repository": "https://github.com/TanStack/router",
              "path": ".github/workflows/release.yml"
            }
          }
        }
      }
    }

This exposes a critical gap in the "trusted publishing" narrative. A compromised CI step can produce a perfectly attested, cryptographically verifiable, malicious artifact. The system trusts the pipeline; the attacker exploits the pipeline. As explored in our analysis of open source maintainer risk models, the concentration of authority in automated systems creates a single point of catastrophic failure.
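To make the gap concrete, here is a small Python sketch of the kind of identity check a consumer might run against the provenance statement shown above. It is an illustration under stated assumptions: the function name `provenance_matches` is hypothetical, the statement is trimmed to the fields it reads, and signature verification (which Sigstore tooling would handle) is deliberately left out.

```python
import json

# Trimmed provenance statement with the same shape as the example above.
STATEMENT = json.loads("""
{
  "predicateType": "https://slsa.dev/provenance/v1",
  "predicate": {
    "buildDefinition": {
      "externalParameters": {
        "workflow": {
          "ref": "refs/heads/main",
          "repository": "https://github.com/TanStack/router",
          "path": ".github/workflows/release.yml"
        }
      }
    }
  }
}
""")


def provenance_matches(statement: dict, repository: str, path: str, ref: str) -> bool:
    """Check only what provenance records: which workflow, in which repo, on which ref."""
    if statement.get("predicateType") != "https://slsa.dev/provenance/v1":
        return False
    workflow = (
        statement.get("predicate", {})
        .get("buildDefinition", {})
        .get("externalParameters", {})
        .get("workflow", {})
    )
    return (
        workflow.get("repository") == repository
        and workflow.get("path") == path
        and workflow.get("ref") == ref
    )


if __name__ == "__main__":
    ok = provenance_matches(
        STATEMENT,
        repository="https://github.com/TanStack/router",
        path=".github/workflows/release.yml",
        ref="refs/heads/main",
    )
    print(ok)  # prints True, even though this statement describes a malicious build
```

The check passes because every field it can inspect is genuine. Nothing in the statement describes the state of the cache the workflow restored, which is why a passing provenance check cannot be read as a clean bill of health.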

Consumer-Side Defenses: What Your Package Manager Won't Do

The npm registry's publisher-side controls (provenance, granular tokens, 2FA) did their job. The packages were published through the official, trusted pipeline. The failure happened before publication, inside the build environment. This shifts the burden of defense downstream, to the tools that install dependencies.

The latest package managers are finally acknowledging this shift. pnpm v11, released just weeks before this attack, now enables security-by-default behaviors that could have mitigated the impact:

* strictDepBuilds: true (Default): Blocks all dependency postinstall scripts unless explicitly allowlisted in an allowBuilds map. The TanStack malware relied on a prepare script in a fake optionalDependency.

* minimumReleaseAge: 1440 (Default): Imposes a 24-hour cooldown on newly published packages. Most malicious packages are detected within hours, so this simple delay is brutally effective.

* blockExoticSubdeps: true (Default): Prevents transitive dependencies from being pulled from git repositories or tarball URLs, closing the github:tanstack/router#79ac49ee... vector used here.

* trustPolicy: no-downgrade (Opt-in): Blocks installation if a package's publishing authentication weakens (e.g., moving from trusted publisher to basic auth).
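The cooldown idea is simple enough to sketch in a few lines of Python. This is not pnpm's implementation; the function names and the version data are hypothetical, and the only thing borrowed from the settings above is the 1440-minute default.

```python
from datetime import datetime, timedelta, timezone


def is_past_cooldown(
    published_at: datetime, now: datetime, minimum_release_age_minutes: int = 1440
) -> bool:
    """True once a version has been public for at least the cooldown period."""
    return now - published_at >= timedelta(minutes=minimum_release_age_minutes)


def eligible_versions(
    publish_times: dict[str, datetime],
    now: datetime,
    minimum_release_age_minutes: int = 1440,
) -> list[str]:
    """Return versions old enough to install, ordered oldest to newest."""
    ordered = sorted(publish_times.items(), key=lambda item: item[1])
    return [
        version
        for version, published_at in ordered
        if is_past_cooldown(published_at, now, minimum_release_age_minutes)
    ]


# Hypothetical publish history: one established release, one three hours old.
NOW = datetime(2026, 1, 10, 12, 0, tzinfo=timezone.utc)
PUBLISH_TIMES = {
    "1.0.0": NOW - timedelta(days=10),
    "1.0.1": NOW - timedelta(hours=3),
}

if __name__ == "__main__":
    print(eligible_versions(PUBLISH_TIMES, NOW))  # prints ['1.0.0']
```

A resolver applying this rule would keep installing 1.0.0 until 1.0.1 had been public for a full day, which is the window in which a malicious release is most likely to be reported and pulled.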

A comparison of package manager defenses paints a stark picture:

| Feature | pnpm v11 | npm CLI | Yarn Berry (v4) | Bun |
|---|---|---|---|---|
| Script blocking (default) | Yes | No | Yes | Yes |
| Per-package allowlist | Yes | No | Yes | Yes |
| Release cooldown (default) | Yes (1 day) | No | Yes (3 days) | Opt-in |
| Exotic subdep blocking | Yes | No | Partial | No |

The npm CLI, notably, offers none of these consumer-side protections by default. It remains a conduit, not a filter. If your security strategy ends at "we use npm audit", you're defenseless against this class of attack. This incident is a direct parallel to recent npm-based AI supply chain attacks, where the exploit path was through the build and publish toolchain, not the registry itself.

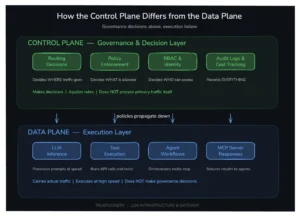

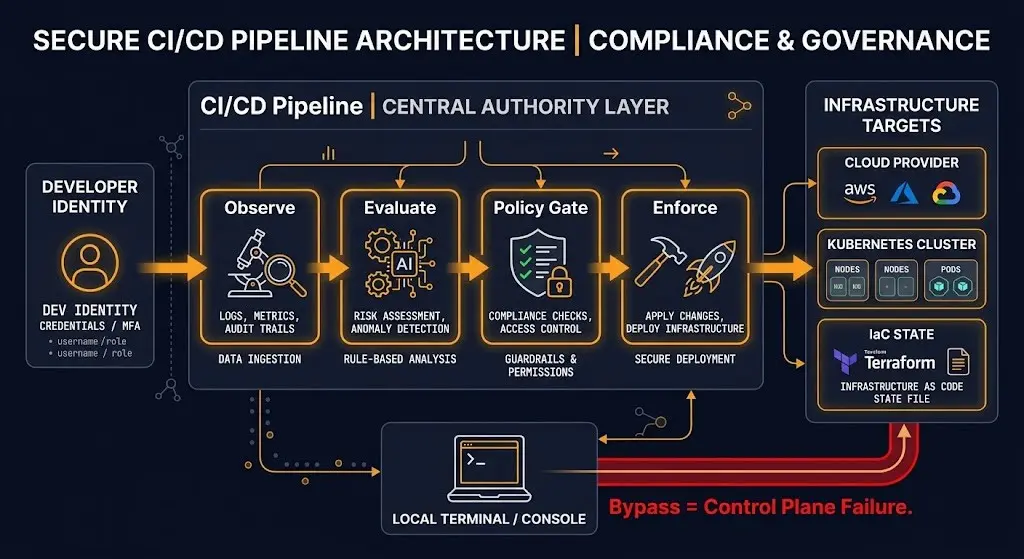

Your CI/CD Pipeline is the Control Plane You Forgot to Secure

The deeper architectural lesson is about control planes. As argued in "Your CI-CD Pipeline Is Your Real Infrastructure Control Plane", automation is not authority. A pipeline becomes a control plane only when infrastructure cannot change without it. Most pipelines fail this test.

The TanStack attack exploited a pipeline that was a single lane with no blast radius containment. A compromised cache in a benchmarking workflow could escalate to publishing rights for all @tanstack/* packages. The pipeline lacked:

* Environment Promotion Gates: No separation between "run benchmarks for a fork" and "publish to npm."

* Pipeline-as-the-Only-Path: The OIDC token, minted for publishing, was accessible to any code running in the workflow, bypassing the intended "Publish Packages" step.

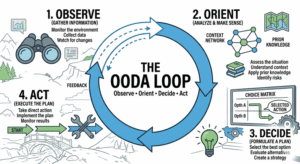

* Operational Memory: No mechanism to flag that a pull_request_target run had poisoned a cache later used by a release.
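The missing "operational memory" can be approximated with a simple inventory check. The Python sketch below assumes you have already extracted, by hand or with a parser, which cache keys each workflow saves and restores. The `Workflow` class, the `SENSITIVE` permission set, and the cache key are hypothetical; only the two workflow file names come from the incident described above.

```python
from dataclasses import dataclass, field


@dataclass
class Workflow:
    name: str
    runs_untrusted_code: bool  # e.g. executes code checked out from a fork
    saves: set[str] = field(default_factory=set)     # cache keys written
    restores: set[str] = field(default_factory=set)  # cache keys read
    permissions: dict[str, str] = field(default_factory=dict)


# Permissions treated as privileged for this sketch (an assumption, adjust to taste).
SENSITIVE = {("id-token", "write"), ("contents", "write"), ("packages", "write")}


def is_privileged(workflow: Workflow) -> bool:
    return any(item in SENSITIVE for item in workflow.permissions.items())


def find_cache_crossovers(workflows: list[Workflow]) -> list[tuple[str, str, str]]:
    """Return (writer, reader, key) where untrusted code feeds a privileged workflow."""
    findings = []
    for writer in workflows:
        if not writer.runs_untrusted_code:
            continue
        for reader in workflows:
            if reader is writer or not is_privileged(reader):
                continue
            # Simplification: exact key matches only, ignoring restore-keys prefixes.
            for key in sorted(writer.saves & reader.restores):
                findings.append((writer.name, reader.name, key))
    return findings


WORKFLOWS = [
    Workflow("bundle-size.yml", True, saves={"pnpm-store-example"}),
    Workflow(
        "release.yml",
        False,
        restores={"pnpm-store-example"},
        permissions={"id-token": "write"},
    ),
]

if __name__ == "__main__":
    for writer, reader, key in find_cache_crossovers(WORKFLOWS):
        print(f"{writer} can poison cache '{key}' restored by privileged {reader}")
```

Run in CI against an inventory of your own workflows, a non-empty result is the signal that a benchmarking lane and a publishing lane share state and need to be separated.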

This mirrors failures seen in other ecosystems, like the PyPI compromise case studies where malicious .pth files hijacked Python runtime environments. The attack surface is the automation layer itself.

Immediate Actions: Treating Your Pipeline as Critical Infrastructure

- Audit pull_request_target and Forked PR Workflows: Treat any workflow using pull_request_target that checks out and runs code from a fork as toxic. Isolate cache scopes. Require manual approval for first-time contributors.

- Implement Mandatory Release Cooldowns: Configure your package manager (pnpm, Yarn Berry) to delay installation of new package versions. The Shai-Hulud worm was detected within 12 hours, so a 24-hour cooldown is a simple, effective circuit-breaker.

- Lock Down Lifecycle Scripts: Use pnpm's allowBuilds or Yarn's dependenciesMeta to explicitly list which packages are allowed to run postinstall scripts. The default should be "deny."

- Scope CI/CD Credentials Ruthlessly: The OIDC token should have been available only to a dedicated publishing job, not to every step in the release workflow. GitHub's permissions: key can be set at the workflow or job level, not per step, so grant id-token: write only to a job that does nothing but publish. Follow the principle of least privilege scoped to the environment, a cornerstone of container security provenance.

- Monitor for Anomalous Publishes: TanStack learned of the breach from an external researcher. Implement alerts for publishes that don't originate from the expected workflow step or that occur alongside failed tests.
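The "deny by default" rule for lifecycle scripts can be audited before any package manager enforces it. The following Python sketch assumes you have the package.json contents of your installed dependencies as dictionaries. The function name, the hook list, and the package names are hypothetical, and the allowlist only loosely mirrors the shape of pnpm's allowBuilds map.

```python
# Script hooks that can execute at install time. "prepare" is included because
# it runs when a dependency is installed from a git repository.
INSTALL_HOOKS = ("preinstall", "install", "postinstall", "prepare")


def unapproved_build_scripts(
    manifests: list[dict], allow_builds: dict[str, bool]
) -> dict[str, list[str]]:
    """Map each non-allowlisted package to the install-time hooks it declares."""
    blocked: dict[str, list[str]] = {}
    for manifest in manifests:
        name = manifest.get("name", "<unnamed>")
        scripts = manifest.get("scripts", {})
        hooks = [hook for hook in INSTALL_HOOKS if hook in scripts]
        if hooks and not allow_builds.get(name, False):
            blocked[name] = hooks
    return blocked


# Hypothetical dependency manifests (package.json contents, trimmed).
MANIFESTS = [
    {"name": "native-addon-example", "scripts": {"postinstall": "node build.js"}},
    {"name": "suspicious-example", "scripts": {"prepare": "node setup.mjs"}},
    {"name": "plain-example", "scripts": {"test": "vitest"}},
]
ALLOW_BUILDS = {"native-addon-example": True}

if __name__ == "__main__":
    print(unapproved_build_scripts(MANIFESTS, ALLOW_BUILDS))
    # prints {'suspicious-example': ['prepare']}
```

Anything the function returns is a package that wants to run code at install time without having been reviewed, which is exactly the list a maintainer should be forced to look at before it grows.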

The New Frontier: Defending the Software Factory

The TanStack compromise is a canonical example of a modern open source supply chain crisis. It moves beyond poisoning a single package to hijacking the entire software factory. The attacker didn't just inject malware; they became the publisher, using the project's own infrastructure, trust, and automation against it.

The fix isn't just better passwords or 2FA. It's architectural:

* Defense in Depth Across Publisher and Consumer: Relying solely on registry security (provenance) or client-side security (pnpm defaults) is insufficient. You need both.

* Zero-Trust CI/CD: Assume the runner environment is hostile. Isolate jobs, scope credentials, audit cache usage, and treat every workflow as a potential pivot point.

* Abandon Implicit Trust: The era where npm install could safely execute arbitrary code from thousands of transitive dependencies is over. We must shift to an explicit allowlist model for build-time execution.

The uncomfortable truth laid bare by this incident is that our development pipelines have become the most privileged, least scrutinized parts of our infrastructure. They hold the keys to the kingdom, and we've been handing them to any code that passes a PR check. The lesson isn't that TanStack was uniquely vulnerable. It's that their compromise revealed the blueprint for an attack that works against any project using similar patterns. The question isn't if your pipeline could be used this way, but how you're going to prevent it.