In late 2024, federal cybersecurity evaluators rendered a verdict on Microsoft’s Government Community Cloud High that should have killed it. Internal reports reviewed by ProPublica described the offering’s “lack of proper detailed security documentation” and concluded there was a “lack of confidence in assessing the system’s overall security posture.” One reviewer put it more bluntly: “The package is a pile of shit.”

Yet FedRAMP, the Federal Risk and Authorization Management Program tasked with safeguarding the government’s cloud infrastructure, approved it anyway. Not because the security gaps were closed. Not because Microsoft finally provided the data flow diagrams reviewers had demanded for five years. But because GCC High was already so deeply embedded across the Justice Department, Energy Department, and defense contractors that revoking it would have been politically and operationally catastrophic.

This is the architectural reality of modern federal cloud infrastructure: vendor lock-in isn’t an accident, it’s the feature that keeps insecure systems online.

The Authorization Paradox: Deploy First, Secure Later

The GCC High saga reveals a perverse incentive structure baked into federal cloud procurement. When the Justice Department authorized GCC High in 2020 through FedRAMP’s “agency path”, it created a fait accompli. By the time FedRAMP’s central reviewers got their hands on the package in April 2020, the product was already processing highly sensitive information, including data where breaches could cause “severe or catastrophic adverse effect” on national security.

For five years, Microsoft failed to produce adequate data flow diagrams showing how data hops between servers and where encryption occurs. Reviewers compared the architecture to a “pile of spaghetti pies”, decades of legacy code duct-taped into a cloud service that wasn’t designed for the isolation requirements of government work. When FedRAMP finally threatened to end the review in 2023, Microsoft and the Justice Department didn’t fix the architecture. They applied political pressure until FedRAMP capitulated.

The result? A “conditional” authorization that came with a “buyer beware” notice, essentially a government seal of approval wrapped in fine print admitting nobody knows if the thing is actually secure.

The 48-Cloud Bottleneck: Scarcity as Strategy

This isn’t just a Microsoft problem, it’s a structural scarcity issue. As of early 2025, only 48 cloud services hold full FedRAMP High authorization, despite federal cloud spending hitting $11 billion in 2024 and high-impact systems accounting for roughly 40% of expenditures.

When only a handful of vendors can meet the 421 security controls required for High authorization (nearly 30% more than Moderate), agencies face a binary choice: accept the security tradeoffs of single-vendor dependence, or forgo cloud capabilities entirely. This creates what Tony Sager, former NSA computer scientist, calls “security theater”, the illusion that compliance equals safety, when in reality, the system is “little more than a rubber stamp for industry.”

The irony is thick: FedRAMP was created in 2011 to standardize security and prevent agencies from reinventing the wheel. Instead, it has centralized risk into a handful of vendor-specific architectures. When those vendors, like Microsoft, can’t even document their own encryption flows, the entire federal cloud ecosystem inherits that opacity.

Technical Debt as National Security Risk

Microsoft’s inability to produce data flow diagrams wasn’t bureaucratic stubbornness, it was architectural reality. Unlike Amazon or Google, which built cloud-native systems from the ground up, Microsoft’s government cloud offerings are built atop decades of legacy enterprise code. One reviewer described the data path as traveling “from Washington to New York with detours by bus, ferry and airplane rather than just taking a quick ride on Amtrak”, each detour representing a potential interception point if encryption isn’t properly implemented everywhere.
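To make the reviewers’ complaint concrete, here is a minimal Python sketch of what a data flow diagram lets an auditor do: walk every hop on a data path and flag the ones that are undocumented or unencrypted. The `Hop` type, the `audit_path` function, and the component names are all hypothetical illustrations, not real GCC High components or any FedRAMP tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One leg of a data path between two components (hypothetical model)."""
    source: str
    destination: str
    encrypted_in_transit: bool
    documented: bool  # does the vendor's diagram actually cover this hop?


def audit_path(hops: list[Hop]) -> list[str]:
    """Return one finding for every hop a reviewer could not sign off on."""
    findings = []
    for hop in hops:
        leg = f"{hop.source} -> {hop.destination}"
        if not hop.documented:
            # An undocumented hop cannot be assessed at all.
            findings.append(f"{leg}: undocumented hop")
        elif not hop.encrypted_in_transit:
            findings.append(f"{leg}: no encryption in transit")
    return findings


# Illustrative path only; these names are invented for the example.
EXAMPLE_PATH = [
    Hop("client", "edge-gateway", True, True),
    Hop("edge-gateway", "legacy-mail-service", False, True),
    Hop("legacy-mail-service", "storage", True, False),
]

if __name__ == "__main__":
    for finding in audit_path(EXAMPLE_PATH):
        print(finding)
```

The point of the sketch is the `documented` flag: a hop that never appears in the diagram can’t be judged secure or insecure, which is the position reviewers described being in.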

This is the risk of proprietary metadata trapping in data infrastructure writ large: when your security posture depends on codebases so complex that even the vendor can’t map them, you’re not buying a service, you’re inheriting technical debt with a government contract number.

The situation is compounded by FedRAMP’s current state. Under the Trump administration’s Department of Government Efficiency, the program’s budget was slashed to $10 million, its lowest in a decade, leaving roughly two dozen employees to process record numbers of authorizations. When the watchdogs are defunded, the vendors write their own rules.

Breaking the Sovereign Cloud Trap

So how do federal agencies and defense contractors escape this architectural capture? The solution isn’t just “more FedRAMP”, it’s architectural diversification and open standards.

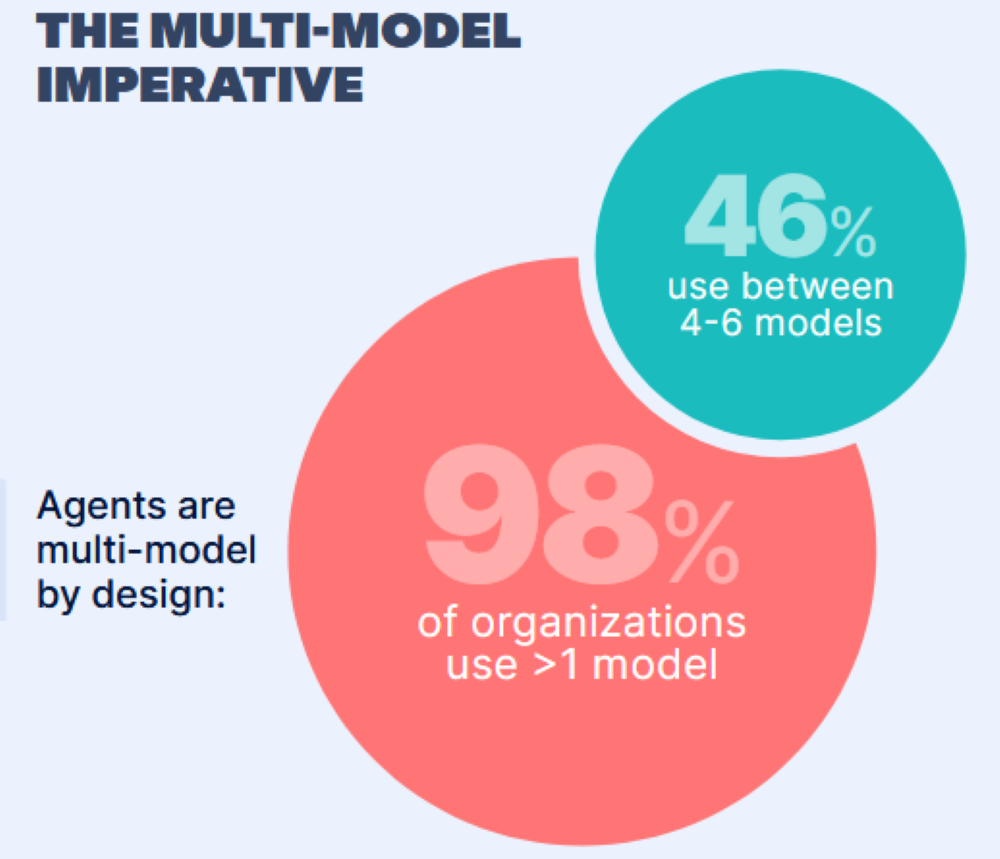

Recent research from Docker shows that 76% of global enterprises now actively worry about vendor lock-in, with teams responding by distributing workloads across multiple models and cloud environments rather than consolidating. This mirrors the broader shift toward countering API dependency through open-source ecosystems: when proprietary clouds become too risky, portable infrastructure becomes the only sane option.

For federal architecture specifically, three moves matter:

- 1. Demand API Portability in Contracts

The recent ProPublica investigation revealed that Justice Department officials learned Microsoft was using China-based engineers to service GCC High systems not from FedRAMP disclosures, but from investigative reporting. This happens when contracts lack enforceable transparency requirements. Without APIs, you can’t audit, migrate, or exit. Demand written confirmation of API availability and data portability before authorization, not after.

- 2. Embrace FedRAMP 20x with Skepticism

FedRAMP 20x promises to streamline authorization, but the High baseline pilot won’t launch until Q1-Q2 2027. Waiting for the next generation of compliance while current systems remain opaque is a recipe for staying in the strategic straitjacket of single-vendor data platforms. Agencies should pursue High authorization now with vendors who can demonstrate actual architectural transparency, not just paperwork compliance.

- 3. Architect for Control Inheritance, Not Vendor Dependence

The smartest defense contractors are leveraging FedRAMP High control inheritance to compress CMMC timelines by 50%, but they’re also demanding multi-cloud strategies that prevent any single vendor from becoming the sole gatekeeper. When a platform delivers 90% of compliance requirements out of the box but locks you into proprietary infrastructure, you’re buying audit readiness at the price of vendor control over your unified data systems.
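The three moves can be treated as a gate that has to pass before authorization rather than after. The Python sketch below is one hypothetical way to encode that gate. The `VendorEvidence` fields and the requirement wording are assumptions made for illustration, not FedRAMP criteria or contract language.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorEvidence:
    """Evidence collected before authorization (hypothetical fields)."""
    portability_confirmed_in_writing: bool
    data_flow_diagrams_delivered: bool
    second_provider_available: bool


# Each field maps to the move it supports; descriptions are illustrative.
REQUIREMENTS = {
    "portability_confirmed_in_writing":
        "move 1: written confirmation of API availability and data portability",
    "data_flow_diagrams_delivered":
        "move 2: demonstrated architectural transparency",
    "second_provider_available":
        "move 3: multi-cloud strategy with no sole gatekeeper",
}


def unmet_requirements(evidence: VendorEvidence) -> list[str]:
    """List every requirement the vendor has not yet satisfied."""
    return [
        description
        for field_name, description in REQUIREMENTS.items()
        if not getattr(evidence, field_name)
    ]


def ready_to_authorize(evidence: VendorEvidence) -> bool:
    """Authorize only when nothing is outstanding: secure first, deploy later."""
    return not unmet_requirements(evidence)


if __name__ == "__main__":
    vendor = VendorEvidence(
        portability_confirmed_in_writing=True,
        data_flow_diagrams_delivered=False,
        second_provider_available=False,
    )
    for gap in unmet_requirements(vendor):
        print("blocked on", gap)
```

The design choice that matters is the order of operations: the check runs before deployment, so a missing diagram blocks authorization instead of becoming a condition attached to it.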

The Real Authorization

The GCC High debacle teaches us that in critical infrastructure, vendor lock-in isn’t just about pricing or feature sets, it’s about sovereignty over your own security posture. When a vendor’s architecture is too complex to audit, too embedded to replace, and too politically connected to reject, you’ve built a house on someone else’s land.

Federal agencies are learning this the hard way. The Defense Department is now investigating Microsoft’s use of foreign engineers. The Justice Department is discovering “unknown unknowns” in systems they approved years ago. And FedRAMP, stripped of resources and political will, has become what Eric Mill, former GSA executive director for cloud strategy, warned against: “a paper-pusher” that watches the American people’s back by rubber-stamping whatever the cloud giants submit.

The path forward requires architectural courage: accepting that hardware-level dependency and infrastructure sovereignty apply to software clouds just as much as to silicon. It means rejecting the false choice between “secure enough to approve” and “too big to fail.” And it means recognizing that when security reviewers call your cloud infrastructure “a pile of shit”, the correct response isn’t authorization with conditions, it’s showing them the exit door.