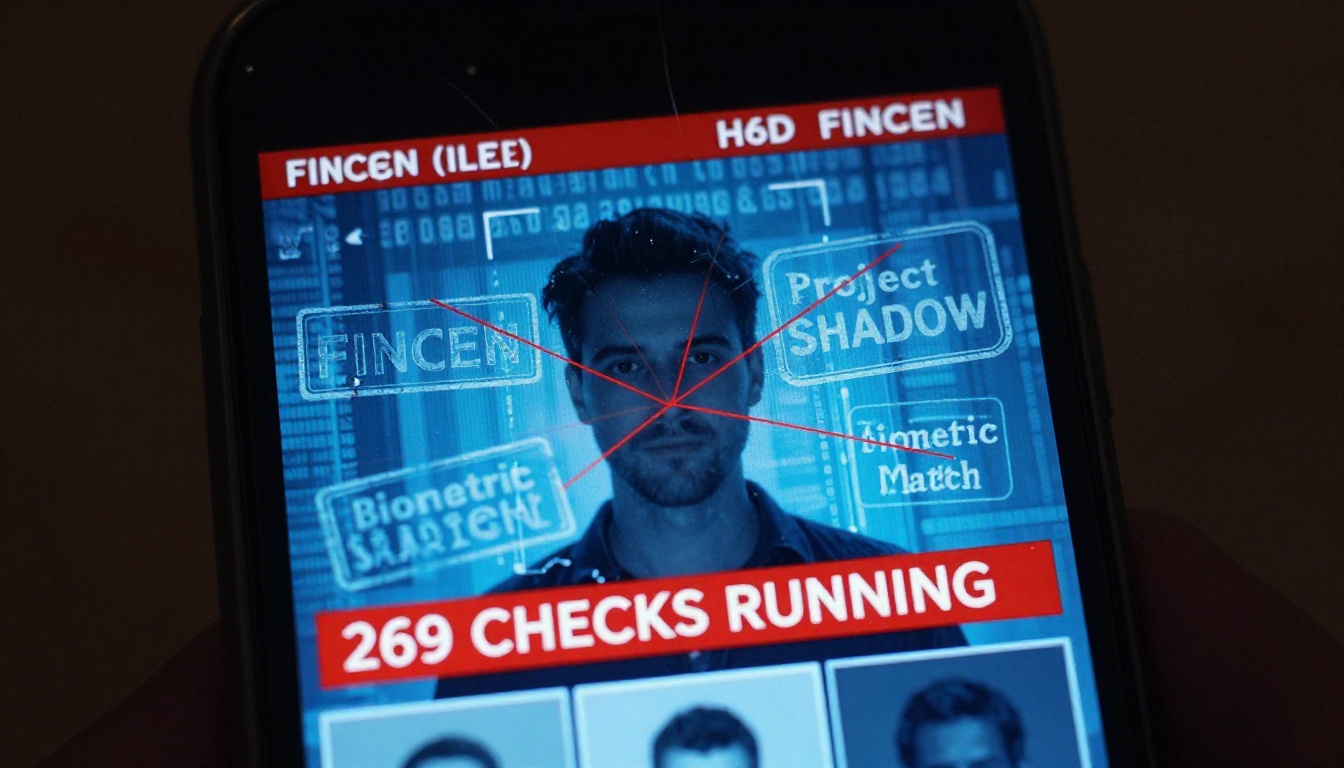

The age verification popup seemed harmless enough. “Verify you’re 18+ to access this Discord server”, it said, promising a quick selfie scan and instant approval. What it didn’t mention was that your face would be compared against a database of every politician on Earth, your identity screened against 269 distinct checks, and your data potentially filed in a Suspicious Activity Report to the US Treasury. But hey, at least the UI was smooth.

When security researchers discovered 53 megabytes of unprotected source code on a FedRAMP-authorized government endpoint last month, they didn’t just find a sloppy configuration. They uncovered the architectural blueprint of a modern surveillance system hiding in plain sight, one that connects your ChatGPT login to financial intelligence databases and tags your verification record with codenames like “Project SHADOW” and “Project LEGION.”

The “WatchlistDB” That Started It All

The investigation began with a Shodan search. A single IP address, 34.49.93.177, sitting in Google Cloud’s Kansas City region, serving an SSL certificate for openai-watchlistdb.withpersona.com. Not “openai-verify” or “openai-kyc”, but watchlistdb. Certificate transparency logs revealed it went live in November 2023, eighteen months before OpenAI publicly disclosed any identity verification requirements.

The infrastructure told its own story:

– Dedicated GCP instance isolated from Persona’s main Cloudflare-protected infrastructure

– Envoy proxy with strict mTLS client certificate requirements

– Certificate rotation every 90 days, consistent with FedRAMP compliance

– Hostname structure that didn’t match Persona’s standard patterns

This wasn’t a shared service. This was compartmentalized infrastructure, the kind you build when the compliance requirements demand isolation and the damage from a breach would be catastrophic.

53 Megabytes of “Oops” on a Government Server

The real bombshell came from a different subdomain: app.onyx.withpersona-gov.com. The ONYX deployment (more on that suspicious name later) was serving JavaScript source maps without authentication. These were not minified bundles but the full, original TypeScript source code, with all 2,456 files embedded.

How does this happen? Vite, the build tool, generates source maps with a sourcesContent array containing the complete original source. In development, these are served from /vite-dev/ paths for debugging. Someone copied a dev configuration into production. On a FedRAMP-authorized platform, the auditors either didn’t check static assets or didn’t know what a source map was.
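To see why this is so damaging, remember that a version 3 source map is plain JSON: a `sources` array of file paths and, when populated, a parallel `sourcesContent` array holding the original text. The sketch below shows how little work recovery takes. It is illustrative only, not the researchers' tooling; `extractEmbeddedSources` and the sample map are made up for this example.

```typescript
// Minimal shape of a version 3 source map (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

interface EmbeddedSource {
  path: string;
  content: string;
}

// Hypothetical helper: pair each source path with its embedded original text.
function extractEmbeddedSources(rawMap: string): EmbeddedSource[] {
  const map = JSON.parse(rawMap) as SourceMapV3;
  const contents = map.sourcesContent ?? [];
  const files: EmbeddedSource[] = [];
  map.sources.forEach((path, i) => {
    const content = contents[i];
    // An entry is null (or missing) when the original text was not embedded.
    if (typeof content === "string") {
      files.push({ path, content });
    }
  });
  return files;
}

// Made-up sample map, for illustration only.
const sampleMap = JSON.stringify({
  version: 3,
  sources: ["src/a.ts", "src/b.ts"],
  sourcesContent: ["export const a = 1;", null],
  mappings: "",
});

const recovered = extractEmbeddedSources(sampleMap);
console.log(recovered.map((f) => f.path));
```

In Vite, production source maps are controlled by the `build.sourcemap` option, which defaults to `false`, so shipping them requires someone to turn them on.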

The extracted codebase revealed:

// DashboardSARShowView.tsx - the SAR management dashboard

const handleAutofillSAR = ... // autofill from case data

const handleValidateSAR = ... // validate against FinCEN XML schema

const handleFileSAR = ... // file electronically

const handleExportFincenPDF = ... // export FinCEN PDF

This is a full Suspicious Activity Report filing system, integrated directly into the identity verification platform. Not an export feature. A “Send to FinCEN” button.

The 269-Check Pipeline You Never Signed Up For

The lib/verificationCheck.ts file contains a CheckName enum with 269 individual verification checks. Highlights include:

Selfie checks (23):

– SelfieSuspiciousEntityDetection – what makes a face “suspicious”? The code doesn’t say

– SelfiePublicFigureDetection – compares your face to world leaders

– SelfieExperimentalModelDetection – unnamed ML models running on your biometric data

– SelfieRepeatDetection – flags if you’ve submitted similar selfies before

Government ID checks (43):

– AAMVA database lookup (US driver’s license database)

– NFC chip reading with PKI validation

– Real ID detection

Database checks (27):

– SSA death master file matching

– Social security number comparison

– Phone carrier checks

The platform maintains 13 types of tracking lists, including faces, device fingerprints, browser fingerprints, and geolocations. Face lists have a “maximum 3-year retention” with automatic deletion, but government IDs are retained permanently.

When Your Selfie Meets Project SHADOW

The most disturbing find was in the STR (Suspicious Transaction Report) filing module for FINTRAC (Canada’s financial intelligence unit):

// FINTRAC public-private partnership intelligence programs

publicPrivatePartnershipProjectNameCodes:

{ const: 1, title: 'Project ANTON' }

{ const: 2, title: 'Project ATHENA' }

{ const: 3, title: 'Project CHAMELEON' }

{ const: 5, title: 'Project GUARDIAN' }

{ const: 6, title: 'Project LEGION' }

{ const: 7, title: 'Project PROTECT' }

{ const: 8, title: 'Project SHADOW' }

These are real intelligence program codenames. The platform lets operators tag reports as related to specific operations. Your identity verification, originally for age-gating on Discord, could be filed under “Project SHADOW” and sent to Canadian intelligence.
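The `const`/`title` pairs quoted above read like an enumerated form field: a numeric code stored on the report, with a label shown to the operator. A minimal sketch of that lookup, using only the entries quoted above, might look like this. The names `CodeEntry`, `projectNameCodes`, and `titleForCode` are hypothetical, not taken from the exposed code.

```typescript
// One enumerated option: numeric code plus the label an operator would see.
interface CodeEntry {
  const: number;
  title: string;
}

// Only the entries quoted in this article; code 4 does not appear in the excerpt.
const projectNameCodes: readonly CodeEntry[] = [
  { const: 1, title: "Project ANTON" },
  { const: 2, title: "Project ATHENA" },
  { const: 3, title: "Project CHAMELEON" },
  { const: 5, title: "Project GUARDIAN" },
  { const: 6, title: "Project LEGION" },
  { const: 7, title: "Project PROTECT" },
  { const: 8, title: "Project SHADOW" },
];

// Hypothetical helper: resolve a stored code to its label, if one is known.
function titleForCode(code: number): string | undefined {
  return projectNameCodes.find((entry) => entry.const === code)?.title;
}

console.log(titleForCode(8)); // Project SHADOW
```

The point of the sketch is how ordinary the mechanism is: tagging a report with a program codename is a single integer on a form.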

The same codebase handles direct FinCEN filing with status enums like FiledElectronically, Accepted, Rejected. It includes complete form schemas for financial institutions, primary federal regulators (FDIC, FHFA, IRS, NCUA), and all the infrastructure for a bank compliance department.

The ONYX Question

Twelve days before publication, a new subdomain appeared: onyx.withpersona-gov.com. Its own dedicated GCP instance, wildcard certificate, Kubernetes namespace persona-onyx, and git commit 8d454ac0dc48b2f4ae7addefa22e746079c30089.

The name matches Fivecast ONYX, ICE’s $4.2 million AI surveillance tool for social media monitoring and “digital footprint” building. Persona claims it’s named after the Pokémon Onix and is unrelated. The infrastructure correlation is real, but the code doesn’t confirm the connection.

What the code does show is significant enough: facial recognition libraries, watchlist screening, biometric databases, and intelligence program tagging, all on a platform that also processes Discord’s age verification selfies.

Privacy by Design: A Cautionary Tale

This isn’t just a data breach. It’s an architectural failure of privacy by design principles:

- Purpose Limitation Violated: Age verification data used for law enforcement intelligence

- Data Minimization Ignored: 269 checks when 1 would suffice

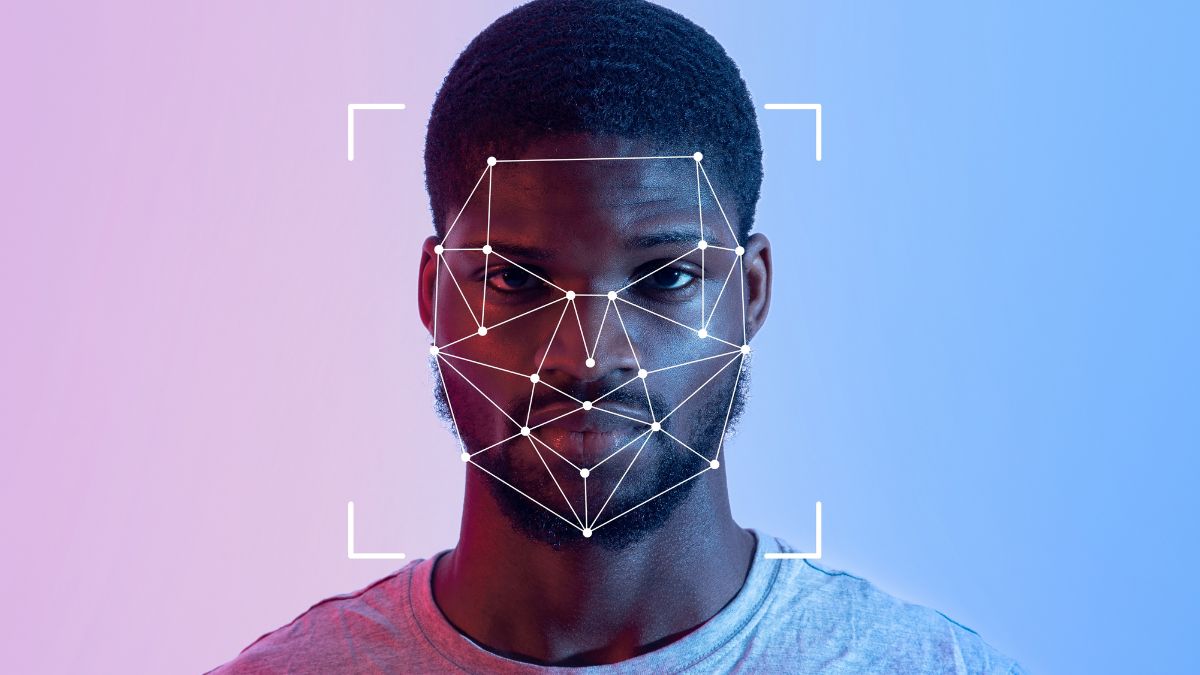

- Transparency Absent: Users aren’t told about facial matching against politicians

- Retention Inconsistent: OpenAI says “up to a year”, code shows 3 years, government IDs “permanent”

- Consent Undermined: No meaningful way to opt out of surveillance features

The Illinois Biometric Information Privacy Act (BIPA) requires informed written consent before collection, disclosure of purpose and retention length, and a publicly available retention schedule. With “millions” of monthly screenings, Persona’s statutory damages exposure is significant: $1,000 per negligent violation, $5,000 per intentional or reckless violation.

The Architecture of Inevitable Surveillance

What makes this system particularly insidious is how it normalizes surveillance through “frictionless” UX. The verification flow at inquiry.withpersona.com captures:

- Government ID scan (front/back)

- Live selfie with liveness detection

- Video capture

- Device fingerprinting (FingerprintJS)

- Browser/network signals

This data package flows to openai-watchlistdb.withpersona.com where it’s screened against:

– OFAC SDN list

– 200+ global sanctions lists

– PEP classes 1-4 with facial similarity scoring

– Adverse media (14 categories from terrorism to espionage)

– Crypto address watchlists

– Custom FinCEN screening lists

Then it either grants access or… doesn’t. No explanation. No appeal. But your data stays in the system.

Why This Matters for Software Architects

If you’re building identity systems, this is your wake-up call. The technical decisions you make about data flow, retention, and integration have profound consequences:

Compartmentalization is critical: Persona’s decision to use dedicated infrastructure for the watchlist service shows they knew the data was sensitive. But that same sensitivity demands stronger access controls and data minimization.

Source maps are not harmless: The Vite configuration error that exposed 53MB of source code represents a catastrophic failure of the security review process. For a FedRAMP-authorized system, this should have been caught.

Vendor ecosystems matter: The CSP header leak revealed integrations with Chainalysis, Equifax, SentiLink, MX, and Amplitude, all on a government platform handling SARs. Your vendor’s vendors are your problem.
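A Content-Security-Policy header is one of the easiest places to audit this yourself, because it has to name every third-party origin the page may talk to. The sketch below pulls the distinct hosts out of a header string. `hostsFromCsp` is a hypothetical helper and the sample header uses placeholder `.example` domains, not the header from the exposed platform.

```typescript
// Hypothetical helper: list the distinct external hosts a CSP header allows.
function hostsFromCsp(header: string): string[] {
  const hosts = new Set<string>();
  for (const directive of header.split(";")) {
    // The first token is the directive name (e.g. "script-src"); the rest are sources.
    const [, ...sources] = directive.trim().split(/\s+/);
    for (const source of sources) {
      // Skip quoted keywords, nonces and hashes ('self', 'nonce-...') and bare schemes (data:, https:).
      if (source.startsWith("'") || /^[a-z][a-z0-9+.-]*:$/i.test(source)) continue;
      const host = source
        .replace(/^[a-z][a-z0-9+.-]*:\/\//i, "") // drop the scheme
        .replace(/[/:].*$/, "") // drop port and path
        .replace(/^\*\./, ""); // drop a leading wildcard label
      if (host && host !== "*") hosts.add(host.toLowerCase());
    }
  }
  return Array.from(hosts).sort();
}

// Placeholder header, for illustration only.
const sampleCsp =
  "default-src 'self'; script-src 'self' https://cdn.vendor-a.example; " +
  "connect-src https://api.vendor-b.example:443 https://*.vendor-a.example data:";

console.log(hostsFromCsp(sampleCsp));
```

Run against your own vendor's login or verification pages, a list like this is a quick first pass at mapping who else sits in the data path.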

Discord’s biometric age verification plan, a case study in flawed identity architecture, shows how these failures cascade to consumer platforms.

The False Promise of “On-Device” Processing

Discord initially claimed their verification used “on-device processing” to protect privacy. The Persona integration proves this was marketing fiction. When you upload a selfie for age estimation, you’re not just getting a boolean is_adult flag back; you’re feeding a surveillance pipeline.
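For contrast, here is roughly what a data-minimizing age gate would let leave the device: one boolean and nothing else. This is a sketch under stated assumptions, not Discord's or Persona's API. `AgeGateResult`, `LocalEstimate`, and `toAgeGateResult` are hypothetical names, and the on-device age estimator is assumed to exist.

```typescript
// The only field a minimal age gate would need to send off the device.
interface AgeGateResult {
  is_adult: boolean;
}

// Hypothetical local input: what an on-device estimator might produce.
interface LocalEstimate {
  estimatedAge: number;
  selfieBytes: Uint8Array; // stays on the device in this sketch
}

const ADULT_AGE = 18;

function toAgeGateResult(estimate: LocalEstimate): AgeGateResult {
  // Build a fresh object so no extra field can ride along by accident.
  return { is_adult: estimate.estimatedAge >= ADULT_AGE };
}

const payload = JSON.stringify(
  toAgeGateResult({ estimatedAge: 24, selfieBytes: new Uint8Array([1, 2, 3]) })
);
console.log(payload); // {"is_adult":true}
```

Anything beyond that payload, whether the selfie, the estimated age, or a device fingerprint, is a design decision someone made, not a technical necessity.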

This highlights why cryptographic verification of identity and intent in automated systems is becoming essential. Without cryptographic guarantees, “on-device” just means “uploaded and processed remotely.”

What Happens Next

Discord has cut ties with Persona, but the underlying architecture problem remains. Age verification laws are spreading: Australia’s under-16 social media ban, the UK Online Safety Act, and US state-level proposals. Each creates demand for systems like Persona.

The researchers who exposed this have published 18 unanswered questions, including:

– What defines a “suspicious entity” in facial detection?

– What are the “experimental model” checks running on biometric data?

– Which federal agencies use the withpersona-gov.com platform?

– Has BIPA compliance been assessed for Illinois residents?

Persona’s CEO Rick Song has responded, calling the source map exposure “not a major vulnerability” and stating the uncompressed files were “already on every person’s device.” This response misses the point: the real problem wasn’t the file exposure itself, but what the files revealed about the system’s purpose.

Building Better Identity Architecture

For architects and developers, this is a teachable moment. Here’s what needs to change:

- Threat model the entire data lifecycle: Not just breach scenarios, but misuse by authorized parties

- Implement verifiable privacy: Use cryptographic techniques that prove what data is collected and how it’s used

- Design for data minimization: If you don’t need 269 checks, don’t build them

- Audit your supply chain: Your identity vendor’s government contracts are your problem

- Plan for regulatory divergence: What’s legal for age verification may violate biometric privacy laws

The human factors in security architecture and the enduring relevance of identity trust models remind us that technical controls fail when humans are incentivized to bypass them.

The Inevitable Regulatory Crackdown

This exposure will accelerate regulatory action. BIPA lawsuits are already being prepared. The EU’s AI Act classifies biometric identification as “high-risk”, requiring conformity assessments. The FTC is scrutinizing data brokers.

But regulation moves slowly. In the meantime, ensuring reliability and trust in AI-driven identity and verification systems requires proactive architecture decisions:

- Federated identity: Let users choose their identity provider

- Zero-knowledge proofs: Verify age without revealing birthdate

- Local processing with cryptographic attestation: Prove computation happened correctly without sending raw data

- Data expiration guarantees: Technical controls that delete data automatically, not policy promises
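The last item is the easiest to make concrete. A minimal sketch of expiry enforced in code rather than in policy: every record carries a hard deadline, and reads refuse to return anything past it. `ExpiringStore` is a hypothetical in-memory class written for this article; a real system would need the same guarantee at the storage layer, including backups and replicas.

```typescript
// Hypothetical in-memory store where every record carries a hard expiry.
class ExpiringStore<T> {
  private records = new Map<string, { value: T; expiresAt: number }>();
  private readonly ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  put(key: string, value: T, now: number = Date.now()): void {
    this.records.set(key, { value, expiresAt: now + this.ttlMs });
  }

  // Expired records are deleted on access, so they can never be returned.
  get(key: string, now: number = Date.now()): T | undefined {
    const record = this.records.get(key);
    if (!record) return undefined;
    if (now >= record.expiresAt) {
      this.records.delete(key);
      return undefined;
    }
    return record.value;
  }

  // Sweep intended for a scheduled job; returns how many records were removed.
  purgeExpired(now: number = Date.now()): number {
    let removed = 0;
    this.records.forEach((record, key) => {
      if (now >= record.expiresAt) {
        this.records.delete(key);
        removed++;
      }
    });
    return removed;
  }
}

// Usage: a 30-day retention window, with explicit timestamps for clarity.
const THIRTY_DAYS_MS = 30 * 24 * 60 * 60 * 1000;
const selfies = new ExpiringStore<string>(THIRTY_DAYS_MS);
selfies.put("user-1", "selfie-ref", 0);
console.log(selfies.get("user-1", THIRTY_DAYS_MS)); // undefined
```

The design choice that matters is that expiry is checked on every read, so a missed cleanup job cannot quietly extend retention.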

The Bottom Line

The Persona exposure reveals that identity verification has quietly evolved into surveillance infrastructure. The same platform that age-gates your Discord account also files reports to FinCEN and tags data with intelligence program codenames. This isn’t a bug, it’s the business model.

For software architects, the lesson is clear: privacy by design isn’t a checkbox, it’s a fundamental architectural constraint. When you build systems that collect biometric data, you’re not just building a feature, you’re building a surveillance tool that will be repurposed.

The choice isn’t between security and privacy. It’s between building systems that respect human dignity and building the infrastructure for a future where your selfie can land you on a watchlist.

Start asking harder questions. Demand architectural diagrams that show data flows to government systems. Review your vendors’ FedRAMP authorizations. And remember: the most dangerous surveillance system is the one that’s marketed as a convenience feature.

Because the next time you click “Verify Your Identity”, you might be doing more than proving you’re human. You might be proving you’re not a threat, to a system you never agreed to join.