The vulnerability wasn’t sophisticated. It didn’t require zero-day exploits or nation-state resources.

All it took was one command: npm pack @anthropic-ai/claude-code@2.1.88. Instead of receiving only the compiled artifacts users expected, anyone could download the entire TypeScript source tree, 1,900 files comprising 512,000+ lines of proprietary code, including the company’s multi-agent orchestration system, IDE bridge implementations, and internal tool architectures.

The Source Map Time Bomb

Source maps are debugging conveniences. They map minified, bundled JavaScript back to original source lines so developers can debug production issues without deciphering mangled variable names. They’re incredibly useful during development and debugging sessions.

They’re also complete photocopies of your original codebase.


When you include a .map file in an npm package, you’re not just shipping debugging symbols. If the map embeds its sources (the sourcesContent field, which many bundlers fill in by default), you’re shipping the entire unminified source code in a machine-readable format. Anthropic’s build pipeline apparently failed to strip these files before publishing, meaning npm install effectively became git clone for anyone curious enough to look.
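To make the "photocopy" point concrete, here is a minimal TypeScript sketch that reads a Source Map v3 file and pulls out the original files stored in its optional sourcesContent array. The field names (version, sources, sourcesContent, mappings) come from the Source Map v3 format. The file name, paths, and contents in the example map are invented for illustration, and the sketch ignores sourceRoot for simplicity.

```typescript
// Subset of the Source Map v3 format used here. sourcesContent is optional,
// but when present it holds the full original text of each source file.
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  names?: string[];
  mappings: string; // Base64 VLQ segments: generated positions -> original positions
}

// Return original file contents keyed by source path (sourceRoot ignored).
// No need to decode `mappings`: the source text is stored verbatim.
function recoverSources(mapJson: string): Record<string, string> {
  const parsed = JSON.parse(mapJson) as SourceMapV3;
  const recovered: Record<string, string> = {};
  if (parsed.version !== 3 || !parsed.sourcesContent) {
    return recovered; // nothing embedded, or not a v3 map
  }
  const contents = parsed.sourcesContent;
  parsed.sources.forEach((source, i) => {
    const content = contents[i];
    if (typeof content === "string") {
      recovered[source] = content; // null entries mean "not embedded"
    }
  });
  return recovered;
}

// Hypothetical map, shaped like what a bundler emits next to cli.js.
const exampleMap = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["../src/tools/internalTool.ts"],
  sourcesContent: ["// Internal only: not meant to leave the repo\nexport const enabled = true;\n"],
  names: [],
  mappings: "AAAA",
});

const leaked = recoverSources(exampleMap);
Object.keys(leaked).forEach((path) => {
  console.log(`--- ${path}\n${leaked[path]}`);
});
```

Note that nothing here requires reverse engineering: the original text is a plain string inside the JSON.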

Developer forums immediately lit up with the obvious question: how does a company building AI coding assistants fail to catch a build configuration error that most automated linters flag? The prevailing sentiment ranged from schadenfreude to genuine concern about supply chain hygiene at AI labs handling increasingly sensitive infrastructure.

Anatomy of a Half-Million Line Leak

Once archived to GitHub (where it rapidly accumulated 1,900+ forks), the leaked codebase revealed fascinating architectural decisions that Anthropic probably didn’t intend to share:

Runtime Choice: Bun Over Node

Claude Code runs on Bun, not Node.js. The team used Bun’s dead code elimination to compile feature-flagged code out of builds and relied on Bun for faster startup, a choice that suggests they’re optimizing for CLI responsiveness over ecosystem compatibility.

React in Your Terminal

The UI layer uses Ink, a React renderer for terminals. This means Claude Code’s interface is literally a React application running in your shell, complete with component-based state management and hooks. It’s an elegant architecture that treats the terminal as a first-class application platform rather than a dumb text stream.

The Tool Architecture

The codebase reveals a sophisticated permission-gated tool system with approximately 40 discrete capabilities. Each tool (file read, bash execution, web fetch, LSP integration) is a modular, permission-scoped unit. The base tool definitions alone span 29,000 lines of TypeScript, suggesting a rigorously typed approach to agent capabilities.
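The idea of tools as permission-scoped units can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Anthropic’s code: the ToolPermission names, the ToolDef and ToolSession types, invokeTool, and the read_file and bash tools are all hypothetical, and the tools are stubbed so nothing touches the filesystem or shell.

```typescript
// Hypothetical permission names for illustration only.
type ToolPermission = "fs:read" | "fs:write" | "shell:exec" | "net:fetch";

// A tool declares every permission it needs, separately from what it does.
interface ToolDef<I, O> {
  name: string;
  required: ToolPermission[];
  run: (input: I) => O;
}

// Permissions the user (or a policy) granted for this session.
interface ToolSession {
  granted: ToolPermission[];
}

type ToolResult<O> = { ok: true; value: O } | { ok: false; error: string };

// Single gate for every call: refuse if any required permission is missing.
function invokeTool<I, O>(session: ToolSession, tool: ToolDef<I, O>, input: I): ToolResult<O> {
  const missing = tool.required.filter((p) => session.granted.indexOf(p) === -1);
  if (missing.length > 0) {
    return { ok: false, error: `${tool.name} denied: missing ${missing.join(", ")}` };
  }
  return { ok: true, value: tool.run(input) };
}

// Stubbed example tools (hypothetical names).
const readFileTool: ToolDef<string, string> = {
  name: "read_file",
  required: ["fs:read"],
  run: (path) => `<contents of ${path}>`,
};

const bashTool: ToolDef<string, string> = {
  name: "bash",
  required: ["shell:exec"],
  run: (cmd) => `<output of ${cmd}>`,
};

const readOnlySession: ToolSession = { granted: ["fs:read"] };
console.log(invokeTool(readOnlySession, readFileTool, "README.md"));
console.log(invokeTool(readOnlySession, bashTool, "ls -la"));
```

Putting the check in one gate rather than inside each tool means a newly added tool cannot forget to enforce its permissions.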

Query Engine (46,000 lines)

This module handles LLM API calls, streaming, caching, and orchestration; it is essentially the brain of the operation. It’s the largest single module, which suggests where Anthropic concentrated much of its engineering effort.

Multi-Agent Spawning

Claude Code can spawn “swarms” of sub-agents for parallelizable tasks, each running in isolated contexts with specific tool permissions. This isn’t just a chat wrapper—it’s a full agentic operating system.
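Here is a minimal sketch of the spawning step, assuming that an "isolated context" means each sub-agent starts with only its delegated task, and that a child can never hold a capability its parent lacks. All names (AgentCapability, AgentContext, spawnSubAgent) are hypothetical. The sketch shows how sub-agents might be scoped, not how they run in parallel or call a model.

```typescript
// Hypothetical capability names for illustration only.
type AgentCapability = "fs:read" | "fs:write" | "shell:exec" | "net:fetch";

interface AgentContext {
  id: string;
  capabilities: AgentCapability[];
  messages: string[]; // this agent's own conversation history
}

// Child capabilities = requested ∩ parent's; the child starts with a fresh context.
function spawnSubAgent(
  parent: AgentContext,
  id: string,
  requested: AgentCapability[],
  task: string,
): AgentContext {
  const capabilities = requested.filter((c) => parent.capabilities.indexOf(c) !== -1);
  return {
    id: `${parent.id}/${id}`,
    capabilities,
    messages: [task], // only the delegated task, none of the parent's history
  };
}

const rootAgent: AgentContext = {
  id: "root",
  capabilities: ["fs:read", "fs:write", "shell:exec"],
  messages: ["Refactor the auth module and update its tests"],
};

// Fan out parallelizable subtasks, each with a narrower capability set.
const swarm: AgentContext[] = [
  spawnSubAgent(rootAgent, "search", ["fs:read"], "Find every call site of login()"),
  spawnSubAgent(rootAgent, "tests", ["fs:read", "shell:exec"], "Run the auth test suite"),
  spawnSubAgent(rootAgent, "web", ["net:fetch"], "Look up the OAuth spec"), // parent lacks net:fetch
];

swarm.forEach((agent) => console.log(agent.id, agent.capabilities.join(", ") || "(none)"));
```

The third sub-agent asks for net:fetch, which the parent never held, so it is created with no capabilities. Narrowing by intersection keeps a swarm from escalating beyond its parent.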

Déjà Vu: The AnonKode Precedent

This isn’t Anthropic’s first rodeo with packaging mishaps. According to community discussions, the first release of Claude Code suffered from the same vulnerability, leading to forks like AnonKode that remained active for months before Anthropic’s legal team intervened.

The recurrence suggests systemic issues in their build pipeline rather than a one-off mistake. When malicious npm packages posing as legitimate ones can persist for months and rack up 56,000+ downloads, and when supply chain compromises delivered through build tools have become sophisticated enough to weaponize developers’ own AI assistants, a major AI lab repeatedly leaking its crown jewels through the npm registry is more than embarrassing. It’s a supply chain security failure that affects everyone downstream.

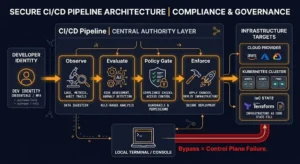

The Build Pipeline Blind Spot

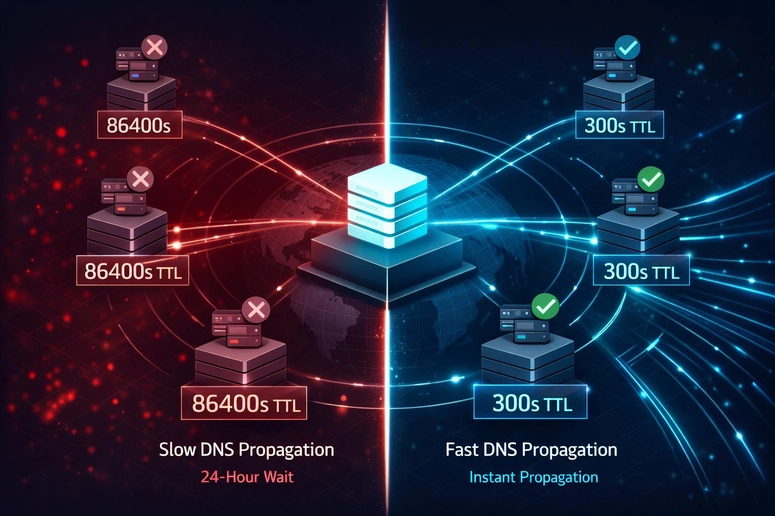

The root cause is almost certainly a misconfigured .npmignore or an overly permissive files array in package.json. Modern JavaScript bundlers often generate source maps by default, and it’s the publishing pipeline’s responsibility to exclude them from production artifacts.

For Claude Code specifically, the exposure carries additional irony. The tool is designed to help developers write better code, yet it was undone by exactly the kind of configuration oversight that proper DevSecOps practices prevent. When developers immediately began feeding the leaked code back into Claude (asking it to analyze and “improve” itself), the recursion became almost poetic—AI debugging its own leaked source code through the very interface that leaked it.

What Actually Ships in Your Packages?

If you’re publishing to npm, you need to verify your artifacts before they hit the registry. Run:

npm pack --dry-run

This shows exactly which files will be included without actually publishing. Check for:

- *.map files (source maps)
- .env files or config directories
- Test directories and fixtures
- Internal documentation or scripts
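The checklist above can be turned into an automated pre-publish gate. Below is a minimal TypeScript sketch that scans the file list npm reports with npm pack --dry-run --json. The interfaces cover only the name, path, and size fields, which recent npm versions include in that output; verify the shape against your own npm before relying on it. The regexes, auditPack, and the sample package are assumptions for illustration.

```typescript
// Fields used from each object in `npm pack --dry-run --json` output.
interface PackFile {
  path: string;
  size: number;
}

interface PackResult {
  name: string;
  files: PackFile[];
}

// One rule per checklist item; tune the patterns for your own layout.
const riskyPatterns: { reason: string; pattern: RegExp }[] = [
  { reason: "source map", pattern: /\.map$/ },
  { reason: "env file or config directory", pattern: /(^|\/)(\.env(\..*)?$|config\/)/ },
  { reason: "test code or fixtures", pattern: /(^|\/)(tests?|__tests__|fixtures)\// },
  { reason: "internal docs or scripts", pattern: /(^|\/)(docs|scripts)\// },
];

// Return one finding per file that matches a risky pattern.
function auditPack(results: PackResult[]): string[] {
  const findings: string[] = [];
  for (const pkg of results) {
    for (const file of pkg.files) {
      for (const rule of riskyPatterns) {
        if (rule.pattern.test(file.path)) {
          findings.push(`${pkg.name}: ${file.path} (${rule.reason})`);
          break; // first matching rule is enough for the report
        }
      }
    }
  }
  return findings;
}

// Hypothetical tarball contents, shaped like the --json output.
const samplePack: PackResult[] = [
  {
    name: "example-cli",
    files: [
      { path: "package.json", size: 512 },
      { path: "dist/cli.js", size: 120000 },
      { path: "dist/cli.js.map", size: 900000 },
      { path: ".env.production", size: 64 },
      { path: "test/fixtures/session.json", size: 2048 },
    ],
  },
];

const report = auditPack(samplePack);
report.forEach((line) => console.log(line));
```

In CI, you would feed the real command’s stdout through JSON.parse and fail the job whenever auditPack returns anything. A tight allowlist in the files field of package.json remains the primary fix; a check like this is a backstop.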

Remember that source maps are complete source code. Treat them with the same security classification as your original TypeScript files. If you wouldn’t commit your src/ directory to a public repository, don’t ship your .js.map files to npm.

The Architecture Lessons We Shouldn’t Ignore

Safe Capability Exposure

Regardless of the circumstances, Claude Code’s internal architecture offers legitimate insights for AI tooling development. The tool-based permission system, with its 29,000 lines of tool definitions, shows one way to expose dangerous capabilities (shell access, file system operations) to probabilistic AI agents more safely.

Secure IDE Integration

The JWT-authenticated IDE bridge shows how to securely connect CLI tools to editor extensions without exposing raw system access.

Distributed Agent Ecosystems

The multi-agent orchestration system, where “swarms” handle parallelizable tasks with isolated contexts, suggests where the industry is heading: not monolithic AI assistants, but distributed agent ecosystems with strict capability boundaries.

The Supply Chain Reality Check

Anthropic’s leak is symptomatic of a broader industry problem. As AI companies race to ship agentic tools that can write, build, and deploy code, they’re subjecting themselves to the same supply chain risks they’ve been warning others about.

The incident serves as a stark reminder that sophisticated AI capabilities don’t eliminate basic software engineering hygiene. If anything, they amplify the consequences of getting it wrong. When a single misconfigured build step can expose a coding assistant’s entire codebase, the “move fast and break things” philosophy starts looking less like innovation and more like negligence.

For developers integrating AI tools into their workflows, the lesson is clear: audit your dependencies, verify what actually ships in your node_modules, and remember that the most dangerous vulnerabilities often hide in the build artifacts you never meant to publish.