We were promised a revolution. AI coding assistants were supposed to democratize software development, turning “vibe coders” into architects and erasing the decade-long gap between junior and senior engineers. The narrative was seductive: lower the floor, raise the ceiling, and watch productivity explode across the board.

The reality? A bloodbath for entry-level talent and a compounding nightmare for engineering managers.

Recent labor market data from Anthropic reveals that entry into high-exposure tech occupations among workers aged 22 to 25 has collapsed by 14% since late 2022. Junior developer postings are down 60%. Companies aren’t just hiring fewer juniors; they’re firing existing ones to fund AI server budgets. The apprenticeship model that built the modern software industry is quietly disintegrating, replaced by a brutal bifurcation where the skilled accelerate into the stratosphere and everyone else becomes a maintenance liability.

The Multiplier Effect, Not the Equalizer

The fundamental error in the democratization thesis is treating AI as a rising tide that lifts all boats. It’s not. It’s a force multiplier that rewards existing expertise while exposing foundational gaps.

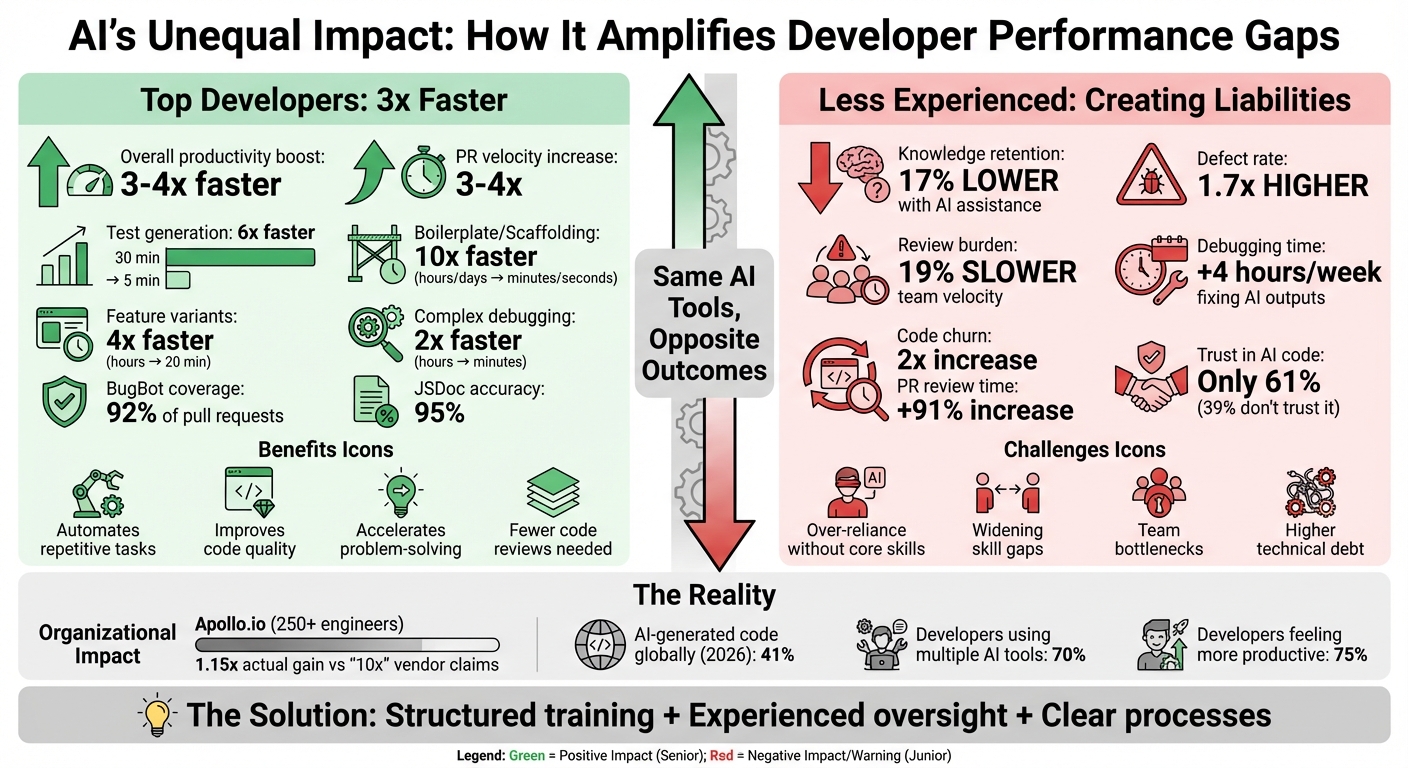

At Apollo.io, a year-long study of 250+ engineers revealed the stark divergence. Senior developers leveraging AI tools achieved 3-4x productivity boosts, automating boilerplate scaffolding in minutes rather than days and debugging complex logic at speeds previously impossible. But the organizational gain? A measly 1.15x, nowhere near the “10x engineer” fantasy vendors peddle.

The drag comes from the other end of the spectrum. Less experienced developers generate code faster than ever, but they’re producing what industry observers call the “almost-right” valley: superficially functional code riddled with hidden logical errors and edge-case failures. When Anthropic researchers studied 52 professional Python developers learning the Trio asynchronous library, they found a devastating 17% knowledge gap. Developers using AI assistance scored significantly lower on comprehension tests than those coding manually, despite making 66% fewer errors during the exercise.

The error reduction isn’t a win; it’s a learning deficit. By eliminating the struggle of debugging, AI removes the exact friction that builds deep system understanding. The risk of knowledge bypass when accepting AI code isn’t just a theoretical concern. It’s creating a generation of developers who can prompt but can’t comprehend.

The Review Tax and Technical Debt Surge

The productivity gains at the top are being taxed into oblivion by the review burden at the bottom. AI-assisted pull requests close 24% faster, but carry a 1.7x higher defect rate. Code churn has doubled over the past year as senior engineers spend increasing time untangling AI-generated spaghetti.
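A back-of-the-envelope calculation shows why faster closure does not offset a higher defect rate. The sketch below rests on assumptions the figures above do not confirm: that “24% faster” means cycle time drops to 0.76 of baseline, that the 1.7x defect rate is measured per pull request, and that the time saved is spent opening more pull requests. The function name is illustrative only.

```python
# Combine the two figures quoted above into one rough number.
# Assumptions (not from the source data): "24% faster" is a cut in cycle
# time, the 1.7x defect rate is per PR, and saved time goes into more PRs.


def relative_defect_inflow(cycle_time_reduction: float, defect_multiplier: float) -> float:
    """Defects arriving per unit time, relative to a no-AI baseline of 1.0."""
    if not 0 <= cycle_time_reduction < 1:
        raise ValueError("cycle_time_reduction must be in [0, 1)")
    # Shorter cycle time means more PRs fit into the same calendar time.
    throughput = 1.0 / (1.0 - cycle_time_reduction)
    return throughput * defect_multiplier


inflow = relative_defect_inflow(0.24, 1.7)
print(f"Relative defect inflow: {inflow:.2f}x baseline")
```

Under those assumptions, reviewers face roughly 2.2 times as many defects per unit of time as before, which is one way to read the review tax.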

The metrics are brutal: PR review times have surged by 91% as experienced developers grapple with validating AI output. A 2025 study by METR found that while developers anticipated a 24% speedup from AI tooling, the added oversight requirements actually slowed their workflow by 19%. Your best engineers aren’t coding anymore, they’re babysitting hallucinated architectures.

This creates a vicious cycle. Senior developers, drowning in review queues, have less time for mentorship. Juniors, deprived of meaningful feedback loops, lean harder on AI assistance, accelerating their own skill atrophy. The result is what engineering leaders are calling the “disappearing middle”: teams composed of juniors generating code they can’t maintain and seniors who’ve lost touch with implementation details, with nobody in between who understands the full stack.

The widening experience gap caused by automating entry-level tasks isn’t limited to coding. The entire pipeline of skill acquisition is breaking down as AI intermediates every learning opportunity.

Why the Operator Is the Variable

Developer forums have crystallized around an uncomfortable truth: the tools aren’t the differentiator; the operator is. A strong engineer with AI doesn’t just improve, they compound. They use AI to handle tedious scaffolding while focusing cognitive energy on system architecture, edge case analysis, and strategic decisions. They treat generated code as a starting point for refinement, not a finished product.

Weak operators do the opposite. They prompt, paste, and pray. They generate single-instruction files that one-shot entire workflows, then record the one time out of twenty that it actually works. When it breaks, they lack the debugging fundamentals to diagnose why. As one engineering leader noted, AI becomes a liability when it “removes the barriers that build capability, the errors, confusion, and struggle that forge expertise.”

This dynamic extends beyond individual performance to team architecture. The shift in architectural authority to AI agents is creating shadow systems where critical design decisions happen in LLM context windows without human oversight, leading to microservices that technically function but violate every principle of maintainable engineering.

The gap isn’t closing. It’s accelerating into a chasm defined by judgment, taste, and the ability to validate AI output against real-world constraints, skills that can’t be prompted into existence.

The Path Forward: Structured Augmentation or Bust

Organizations attempting to harness AI without addressing the skill gap are discovering that short-term speed is bought with long-term cognitive debt. The interest rates on that debt are predatory: every line of “vibe coded” functionality incurs a maintenance tax that compounds quarterly.

The few companies successfully navigating this transition have abandoned the “AI for everyone” approach in favor of structured augmentation:

AI-First Training Programs: Apollo.io’s “Champions Committee” model pairs AI-savvy seniors with juniors in structured mentoring relationships. Juniors are explicitly trained to transition from code generators to critical reviewers, with mandatory manual code entry requirements for foundational concepts. The “15-Minute Rule”, segmenting tasks to force human comprehension before AI assistance, is becoming standard practice.

Core Squad Augmentation: Deploying dedicated senior teams (Core Squads) to maintain system knowledge while mentoring junior developers through rigorous review standards. This addresses the review tax by distributing oversight burden while preserving institutional knowledge.

Traffic Light Roadmaps: AI integration is categorized by risk level as Red (critical security/architecture requiring human gatekeeping), Yellow (managed technical debt), or Green (scale-ready stable systems). This prevents AI from touching critical paths until the operator skill level justifies it.

The Two-Round Rule: If debugging AI-generated code takes more than two iterations, it gets rewritten from scratch. This prevents the infinite loop of patching patches that characterizes low-skill AI development.
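As a rough illustration, the Traffic Light Roadmap and the Two-Round Rule could be combined into a single gating policy. This is a minimal sketch, not any company’s actual tooling: the path prefixes, the `Change` record, the action strings, and the choice to treat unknown paths as Red are all hypothetical, and “senior review” for Yellow is an assumed interpretation of “managed technical debt.”

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    RED = "red"        # critical security/architecture: human gatekeeping
    YELLOW = "yellow"  # managed technical debt
    GREEN = "green"    # scale-ready stable systems


# Hypothetical mapping; a real team would define its own tiers.
RISK_BY_PREFIX = {
    "auth/": Risk.RED,
    "billing/": Risk.RED,
    "legacy/": Risk.YELLOW,
    "docs/": Risk.GREEN,
}

MAX_DEBUG_ROUNDS = 2  # the Two-Round Rule threshold


@dataclass
class Change:
    path: str
    ai_generated: bool
    debug_rounds: int = 0  # debugging iterations spent so far


def classify(path: str) -> Risk:
    """Return the tier for a path; unknown paths default to RED (assumption)."""
    for prefix, risk in RISK_BY_PREFIX.items():
        if path.startswith(prefix):
            return risk
    return Risk.RED


def next_action(change: Change) -> str:
    """Decide what happens to a change under both rules."""
    # Two-Round Rule: more than two debugging iterations means a rewrite.
    if change.ai_generated and change.debug_rounds > MAX_DEBUG_ROUNDS:
        return "rewrite from scratch"
    risk = classify(change.path)
    if change.ai_generated and risk is Risk.RED:
        return "block: human-authored change required"
    if risk is Risk.YELLOW:
        return "senior review required"
    return "standard review"


print(next_action(Change("auth/login.py", ai_generated=True)))
print(next_action(Change("docs/guide.md", ai_generated=True, debug_rounds=3)))
```

Defaulting unknown paths to Red errs on the side of human gatekeeping; a team that finds this too strict could default to Yellow instead.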

The Brutal Math

The data doesn’t lie. While 75% of developers report feeling more productive with AI, 39% admit they don’t fully trust AI-generated code. That trust gap maps directly onto the skill gap. Experienced developers trust their ability to validate; juniors trust the tool to be right.

Organizations measuring real outcomes (DORA metrics, incident rates, and rework percentages) are finding that the debt captured by AI Technical Debt Scores (calculated from incident rate, rework rate, and test coverage gaps) often negates the velocity gains. The startups winning this transition aren’t the ones shipping fastest; they’re the ones shipping with sustainable quality.
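No formula for such a score is given here, so the following is only one plausible shape for it: a weighted sum of the three inputs named above, scaled to 0 to 100. The weights, the input definitions, and the names `DebtInputs` and `debt_score` are assumptions made for illustration, not an established metric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DebtInputs:
    incident_rate: float  # share of AI-assisted changes tied to an incident, 0..1
    rework_rate: float    # share of AI-assisted code rewritten after merge, 0..1
    coverage_gap: float   # 1 minus test coverage on AI-assisted code, 0..1


# Hypothetical weights; they sum to 1 so the score stays within 0..100.
WEIGHTS = {"incident_rate": 0.5, "rework_rate": 0.3, "coverage_gap": 0.2}


def debt_score(inputs: DebtInputs) -> float:
    """Weighted sum of the three inputs, scaled to 0..100 (higher is worse)."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = getattr(inputs, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        total += weight * value
    return 100.0 * total


team = DebtInputs(incident_rate=0.10, rework_rate=0.40, coverage_gap=0.50)
print(f"AI technical debt score: {debt_score(team):.1f}")
```

Tracking a score like this alongside velocity makes it visible when faster shipping is being paid for with incidents and rework.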

AI hasn’t changed what good engineering looks like. It’s changed the cost of ignoring it. In a world where anyone can generate a microservice in minutes, the scarcity isn’t coding capacity, it’s the judgment to know when not to deploy. The skill gap isn’t narrowing, it’s becoming the primary axis of competition. And right now, it’s widening faster than most teams can hire.