The AI Slop Trap: Why Managers Pushing Copied Code Is Stalling Productivity

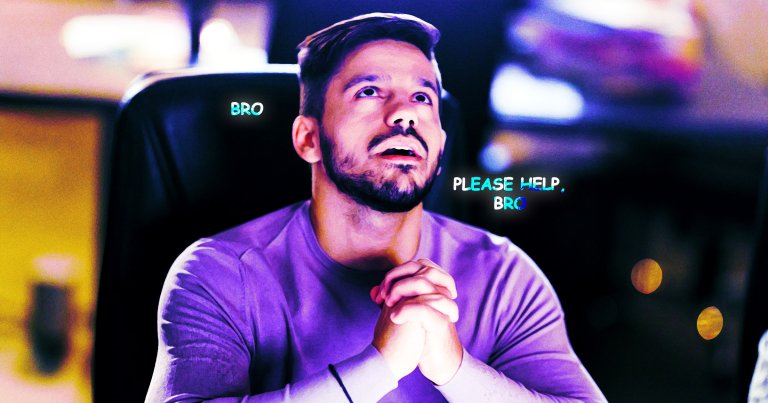

You’re stuck on a production bug. The kind that makes you question your career choices. So you ping your manager or a senior engineer, the people who used to help you think through problems, not just solve them for you. Ten minutes later, your Slack lights up with a wall of text. It’s clearly AI-generated. No context, no explanation, just a code dump that looks plausible but smells wrong.

You paste it in. It breaks. Now you’re debugging not just the original issue, but whatever hallucinated logic your manager copied from ChatGPT without reading. Congratulations: you’ve just hit the AI Slop Trap, and you’re not alone.

The Copy-Paste Management Crisis

Something shifted in the last year. The same managers who used to whiteboard solutions or ask probing questions have morphed into human API gateways for large language models. They’re not reviewing the code they forward; they’re not even running it. They’re just… distributing. And junior engineers are paying the cognitive toll of a culture that prioritizes delivery speed over actual comprehension.

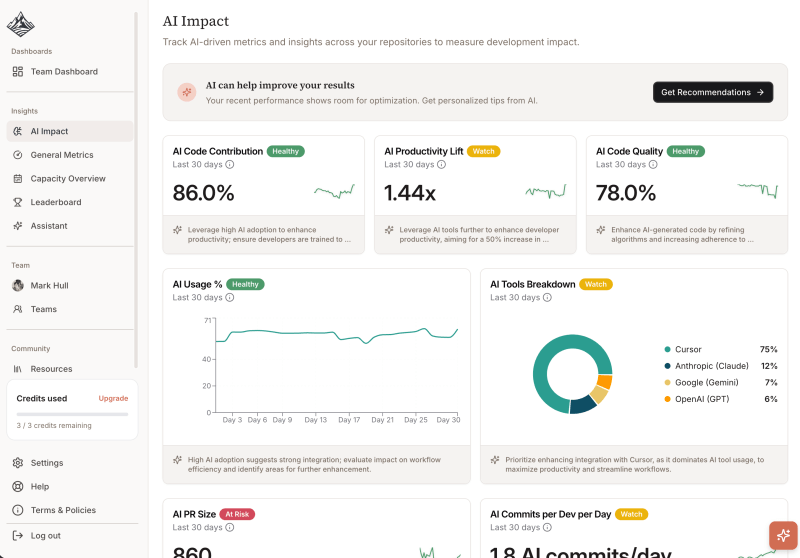

The data backs up the frustration. Enterprise analysis shows AI-generated code introduces 1.7 times more defects than human-written code, with 23.7% more security vulnerabilities and performance inefficiencies appearing nearly eight times more frequently. Less than 44% of AI-generated code is accepted without modification, yet it’s flooding repositories at unprecedented velocity.

When authority changes but accountability doesn’t, you get governance theater. Managers hit send on AI output they don’t understand, while engineers inherit the technical debt. Teams with high AI-generated technical debt now spend 20-40% of their capacity managing that debt instead of building features, a hidden tax that doesn’t show up in sprint velocity metrics but absolutely shows up in burnout rates.

When “Vibe Coding” Takes Down Production

The consequences aren’t theoretical. Earlier this month, Amazon executives summoned engineers after major outages hit their retail platform. The culprit? “Gen-AI assisted changes” that may have contributed to the downtime, according to internal communications reported by the Financial Times. The response was telling: junior and mid-level engineers must now have AI-assisted changes signed off by seniors, effectively admitting that the “productivity” gains were mirages built on unverified slop.

As Dorian Smiley, CTO of Codestrap, noted in a recent interview, coding metrics have become dangerously decoupled from quality. “Coding works if you measure lines of code and pull requests”, he explained. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that’s moving in a positive direction.”

This creates a brutal asymmetry. AI generates code ten times faster than humans, potentially accumulating ten times the debt without scaled review processes. Yet the disconnect between AI efficiency claims and burnout grows wider by the quarter. Developers report a 19% overall slowdown despite, or because of, AI tooling, with 45% experiencing increased debugging time.

The Review Gap Nobody Talks About

Here’s where it gets spicy: 96% of developers don’t fully trust AI-generated code to be functionally correct, yet only 48% always check it before committing, according to Sonar’s State of Code survey. That gap between suspicion and verification is where disasters breed.

Traditional code review wasn’t designed for machine-scale output. When a manager dumps 200 lines of AI-generated Python into a Slack thread, there’s no pull request to comment on, no diff to analyze, no accountability trail. The code simply appears, fully formed and utterly opaque.

Research from CodeRabbit confirms what engineering teams are experiencing: AI-generated code is significantly more error-prone than human-written alternatives. The errors aren’t just syntax issues. They’re subtle logic flaws, unsafe control flow patterns, and architectural misalignments that surface weeks after deployment. When managers bypass review gates to “move fast”, they’re not accelerating delivery; they’re deferring failure.

Why Junior Engineers Pay the Price

The damage concentrates at the bottom of the org chart. Junior developers learn by debugging, by understanding why solutions work, by watching seniors reason through edge cases. When that mentorship gets replaced by copy-pasted AI slop, the learning loop breaks.

One developer described the new dynamic perfectly: managers have become “basically just an interface to some AI”, abdicating the contextual judgment that makes senior engineers valuable. This creates a misalignment between engineering and management expectations: management sees closed tickets, while engineers see mounting confusion.

The result is a generation of developers who can prompt but can’t parse, who can generate but can’t verify. As one engineer noted in a viral post, the “missing rung” problem is real: AI killed the junior dev career ladder before Gen Z could even climb it. Without the scaffolding of proper code review and architectural discussion, the erosion of critical thinking skills through AI reliance becomes not just a risk, but an inevitability.

Building Governance That Actually Works

Fixing this requires more than asking developers to “be careful.” It demands structural changes to how AI-generated code enters production:

Separate High-Risk Paths

Transaction processing, identity flows, and data handling need deeper scrutiny than AI-generated CSS tweaks. Not all code is equal, and your governance shouldn’t be either.
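One way to make that split concrete is to route changes by the paths they touch. The sketch below is only an illustration: the directory patterns, the tier names, and the `review_tier` helper are all hypothetical and would need to match your own repository layout and review policy.

```python
from fnmatch import fnmatchcase

# Hypothetical high-risk areas: transaction processing, identity flows, data handling.
HIGH_RISK_PATTERNS = ["payments/*", "auth/*", "data/*"]


def review_tier(changed_files):
    """Return the review tier for a change based on the files it touches."""
    touches_high_risk = any(
        fnmatchcase(path, pattern)
        for path in changed_files
        for pattern in HIGH_RISK_PATTERNS
    )
    # A single high-risk file is enough to escalate the whole change.
    return "deep-review" if touches_high_risk else "standard-review"
```

A change that mixes a stylesheet tweak with a payments file is escalated as a whole, which is the conservative choice: the risk of a change is set by its most sensitive file.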

Enforce Explainability

Every AI-assisted change needs a human sign-off with domain ownership. Not just “LGTM”, but actual evidence of review, including understanding of edge cases and failure modes.
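As a rough sketch of what “evidence of review” could look like in practice, a sign-off can be modeled as a record that is rejected when it is empty. The `SignOff` fields and the `signoff_problems` helper here are assumptions made for illustration, not an established standard.

```python
from dataclasses import dataclass, field


@dataclass
class SignOff:
    """Hypothetical record a reviewer fills in for an AI-assisted change."""
    reviewer: str
    owns_domain: bool
    edge_cases: list = field(default_factory=list)
    failure_modes: list = field(default_factory=list)


def signoff_problems(signoff):
    """List the reasons a sign-off is insufficient; an empty list means it passes."""
    problems = []
    if not signoff.owns_domain:
        problems.append("reviewer does not own this domain")
    if not signoff.edge_cases:
        problems.append("no edge cases documented")
    if not signoff.failure_modes:
        problems.append("no failure modes documented")
    return problems
```

A bare “LGTM” from someone outside the domain fails all three checks, while a sign-off that names at least one edge case and one failure mode from a domain owner passes.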

Close the Verification Loop

Use tools like Playwright MCP and Chrome DevTools MCP to validate AI output before it ships. Screenshot broken layouts, catch console errors, feed that data back to the AI for iteration. Turn “generate and hope” into “generate, verify, iterate.”
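The “generate, verify, iterate” loop can be sketched independently of any particular tool. In the minimal version below, `generate` and `verify` are hypothetical callables you would supply yourself: `generate` takes the previous round’s findings and returns a candidate, and `verify` returns a list of findings (console errors, broken layouts, and so on), with an empty list meaning the candidate passed.

```python
def generate_verify_iterate(generate, verify, max_rounds=3):
    """Regenerate until verification passes or the round budget runs out."""
    feedback = []
    for round_number in range(1, max_rounds + 1):
        candidate = generate(feedback)   # feedback is empty on the first round
        feedback = verify(candidate)     # list of findings; empty means it passed
        if not feedback:
            return candidate, round_number
    # Never ship an unverified candidate: fail loudly instead.
    raise RuntimeError(f"still failing after {max_rounds} rounds: {feedback}")
```

The important design choice is the hard stop: when verification keeps failing, the loop raises instead of returning the last attempt, so a human has to step in.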

Track Longitudinal Outcomes

Monitor AI-touched code for 30+ days post-deployment. Static analysis catches syntax; only time reveals architectural rot. Teams should measure defect rates, rework patterns, and incident frequency specifically for AI-generated modules.
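A minimal sketch of that measurement, assuming you can export deployments and incidents as simple records. The field names (`module`, `deployed`, `occurred`, `ai_touched`) and the `defect_rate` helper are hypothetical; the 30-day window comes from the guideline above and can be widened.

```python
from datetime import timedelta


def defect_rate(deployments, incidents, ai_touched, window_days=30):
    """Share of deployments in a cohort that had an incident within the window."""
    cohort = [d for d in deployments if d["ai_touched"] == ai_touched]
    if not cohort:
        return 0.0
    window = timedelta(days=window_days)
    failed = sum(
        1
        for d in cohort
        if any(
            i["module"] == d["module"]
            and d["deployed"] <= i["occurred"] <= d["deployed"] + window
            for i in incidents
        )
    )
    return failed / len(cohort)
```

Calling it once with `ai_touched=True` and once with `ai_touched=False` gives two rates to compare over the same period, which is more informative than a single aggregate number.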

The Multiplier Effect

AI is a multiplier, not a magic wand. If your engineering discipline is weak, AI multiplies that weakness. If your review processes are broken, AI accelerates the breakage. The market has already begun rejecting AI slop: Microsoft’s recent stock drop signaled Wall Street’s fatigue with promises unbacked by performance.

The solution isn’t to ban AI coding assistants. It’s to treat their output with the same skepticism you’d apply to a junior developer’s first pull request. Review it line by line. Question every abstraction. Verify it handles edge cases, understands system context, and aligns with architectural decisions.

When something breaks in production, nobody asks which AI generated the code. They ask who shipped it. Until managers remember that distinction, the AI Slop Trap will keep swallowing productivity whole, and taking junior engineers down with it.