The hardware was identical: 64GB DDR4, Core i9-9900K, and that beefy Turing-architecture RTX 8000. The only variable was the operating system. Yet this isn’t an isolated anomaly. It represents a systemic efficiency gap that threatens to turn expensive AI workstations into hardware performance bugs affecting local inference workstations waiting to happen.

The Benchmark Reality Check

Let’s cut through the marketing fluff and look at the raw data. When the user ran QWEN Code Next (q4, 6k context) on Windows 10, it produced 18 t/s. On Ubuntu 22.04 LTS: 31 t/s. That’s a 72% improvement for a smaller model. But the real shock came with the larger MoE (Mixture-of-Experts) architecture:

| Model | Configuration | Windows 10 | Ubuntu 22.04 | Performance Delta |

|---|---|---|---|---|

| QWEN Code Next | q4, ctx 6k | 18 t/s | 31 t/s | +72% |

| Qwen 3 30B A3B | Q4, ctx 6k | 48 t/s | 105 t/s | +118% |

These aren’t synthetic benchmarks. This is a real home lab setup producing real-world consumer GPU inference performance cases that expose how much overhead Windows actually introduces. The RTX 8000’s 672 GB/s memory bandwidth should theoretically handle the 3GB memory compute requirement for that token generation at roughly 224 iterations per second. The fact that Windows achieves only 48 t/s suggests massive overhead in the inference stack, or the OS itself, is throttling the hardware.

Is It Windows, or Is It Ollama?

The immediate counterargument from experienced practitioners is that this isn’t necessarily Windows’ fault, but rather Ollama’s Windows implementation. Community sentiment suggests that Ollama’s abstraction layer might be particularly inefficient on Windows, potentially mishandling MoE model execution or introducing unnecessary memory copy operations between system and VRAM.

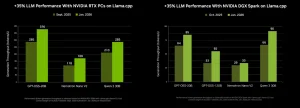

Alternative inference engines like llama.cpp show narrower gaps between platforms, sometimes as little as 1-2 t/s difference. This has led some developers to argue that the performance chasm is “100% more cause of your inference stack than the platform itself.” The recommendation? Skip Ollama entirely and run llama.cpp directly with CUDA 12 binaries, using the OpenAI-compatible server mode for your applications.

There’s merit to this argument. Ollama’s convenience comes with abstraction costs. However, even when accounting for stack-specific inefficiencies, Linux consistently demonstrates lower driver overhead, more aggressive memory management, and better process scheduling for GPU compute workloads. When you’re pushing 48GB of VRAM through complex attention mechanisms, every microsecond of driver latency compounds into significant throughput losses.

The Technical Mechanics of the Divide

Linux achieves superior inference performance through several architectural advantages that Windows struggles to match, even with WSL2 (Windows Subsystem for Linux).

Direct GPU Access vs. Driver Abstraction

Linux allows Ollama to communicate more directly with NVIDIA hardware through CUDA, while Windows relies on the Windows Display Driver Model (WDDM), which introduces additional scheduling layers. For graphics, this overhead is negligible. For compute-intensive LLM inference, it becomes a bottleneck.

Memory Management and Flash Attention

Enabling OLLAMA_FLASH_ATTENTION=1 provides 20-30% faster context handling on both platforms, but Linux extracts more benefit from this optimization due to superior memory allocation strategies. On Linux, Flash Attention reduces memory bandwidth usage by up to 40% compared to standard attention mechanisms, a critical advantage when handling longer contexts.

BIOS and Hardware Configuration

For AMD GPU users, Linux provides more granular control over memory allocation. Setting iGPU Memory Configuration to 96GB and disabling SDMA (Smart Dual-Mode Memory Access) in the BIOS can yield notable improvements in VRAM utilization and model loading times, tweaks that are harder to optimize consistently under Windows’ more restrictive hardware abstraction layer.

The WSL2 Compromise: Better, But Not Native

Many developers attempt to split the difference by running Ollama in WSL2, which offers CUDA passthrough without dual-booting. While WSL2 certainly outperforms native Windows Ollama in many scenarios, it introduces its own friction points that prevent it from matching bare-metal Linux performance.

Memory allocation becomes a critical constraint. By default, WSL2 claims only 50% of system RAM. For large models, this forces aggressive swapping. You can mitigate this by creating a .wslconfig file with explicit limits:

[wsl2]

memory=24GB

swap=8GB

processors=8Even with proper configuration, WSL2 suffers from I/O overhead when accessing Windows filesystems through /mnt/c/. Model load times increase significantly when Ollama’s model storage resides on a Windows-mounted drive rather than the native ext4 WSL2 filesystem. And while GPU passthrough works for NVIDIA (with drivers 527.xx or newer), AMD’s ROCm support in WSL2 remains patchy, forcing many AMD users back to native Windows with its performance penalties.

Docker and Containerization Overhead

If you’re containerizing your inference workloads, the OS divide widens further. Docker on Windows requires either WSL2 backend or Hyper-V isolation, adding virtualization layers that Linux Docker avoids.

On native Linux, GPU-accelerated Docker containers achieve near-bare-metal performance with simple runtime flags:

docker run --gpus all -e OLLAMA_FLASH_ATTENTION=1 \

-e OLLAMA_CONTEXT_LENGTH=32768 \

-v ollama:/root/.ollama \

-p 11434:11434 \

ollama/ollamaThe same container on Windows Docker Desktop suffers from the WSL2 translation layer, memory allocation constraints, and filesystem latency that can reduce throughput by 10-15% before the model even loads.

The Practical Fallout

For developers building dedicated AI development hardware comparisons, these performance gaps have budget implications. If you need 105 t/s throughput for your application, you can either buy a $3,000 GPU and run Linux, or buy a $6,000 GPU and run Windows. The math becomes uncomfortably clear when scaling to multi-GPU setups or edge deployment scenarios.

The “Windows tax” on inference isn’t just about slower token generation. Higher latency means longer context windows become impractical, RAG pipelines time out, and agentic workflows hit bottlenecks. When Flash Attention and optimized CUDA kernels are already squeezing every ounce of performance from consumer hardware, surrendering 50-100% of that performance to OS overhead is indefensible.

Optimization Strategies for the Trapped

If you’re stuck on Windows due to corporate policy or gaming requirements, you can minimize the damage:

- Use WSL2 with systemd enabled and store models inside the Linux filesystem (

~/.ollama/models), not on/mnt/c/ - Force Ollama to listen on

0.0.0.0rather than localhost-only to avoid Windows networking stack translation - Enable Flash Attention (

OLLAMA_FLASH_ATTENTION=1) regardless of platform, it provides the single largest performance uplift available - Update to NVIDIA drivers 535+ for 10-15% overall gains on both platforms

- Consider Vulkan backend (

OLLAMA_VULKAN=1) for AMD GPUs, which sometimes outperforms ROCm even on Linux (14.11 t/s vs 12.73 t/s in recent benchmarks)

But ultimately, if you’re serious about local LLM inference, whether running 70B parameter models on consumer GPU setups or optimizing production RAG pipelines, the data is unambiguous. Linux doesn’t just edge out Windows, it leaves it in the dust, sometimes doubling effective throughput on identical silicon.

The revolution in local AI isn’t happening on Windows. It’s happening on Ubuntu, Arch, and whatever flavor of Linux lets you get closest to the metal. Everything else is just expensive overhead.