The data analyst career path has always been something of a bait-and-switch. You start out learning SQL, Python, and visualization tools, expecting to graduate into strategic insights and business impact. Instead, you spend three years writing slightly different variations of the same GROUP BY queries for stakeholders who treat you like a human ChatGPT with database access.

Now that actual ChatGPT, and its enterprise-grade cousins, can ingest entire database schemas and generate complex joins faster than you can open your IDE, that middle ground has become a killing field. The analysts thriving in 2026 aren’t the ones who learned to write better SQL. They’re the ones who figured out how to never write it again.

The Growth Trap Hiding the Bloodbath

On paper, the AI job market looks surprisingly healthy. A recent Snowflake study surveying over 2,000 business leaders globally found that 77% of firms report AI-driven job creation, while only 46% report losses. Among organizations experiencing both, 69% said the net effect has been positive.

But dig into the sector-specific data and the picture gets uglier. Data analytics ranks among the hardest-hit functions, with 37% of organizations reporting AI-related cuts, tied with customer service for the second-highest elimination rate behind IT operations. Entry-level positions are evaporating at a staggering 63% clip, with middle management following at 46%.

This is the trap: AI is creating jobs, but not for the people currently occupying the roles being automated. The “net growth” narrative conceals a brutal churn in which traditional analyst functions (data extraction, cleaning, basic reporting) are being absorbed by agents while new positions emerge for the architects who build and supervise those agents.

When the Database Schema Fits in the Context Window

The technical reality hitting junior analysts is stark. Modern LLMs with expanded context windows can ingest entire database schemas and generate production-ready SQL that often outperforms human-written queries. Organizations are discovering that complex join logic, window functions, and CTEs, the bread and butter of intermediate analytics, are exactly the type of pattern-matching tasks where AI excels.

If your primary value proposition is acting as a “human text-to-SQL parser”, you’re competing with tools that don’t sleep, don’t ask for raises, and don’t accidentally delete production tables with a misplaced DROP statement. The data on the share of programmer tasks already covered by AI suggests this isn’t theoretical: it’s already baked into hiring freezes and reduced headcount approvals.

The distinction matters because many analysts believed their work required too much “business context” to automate. They were wrong. The context is in the schema documentation, the query history, and the semantic layers that modern data stacks expose to AI agents. What’s left when the querying is automated isn’t strategy, it’s validation.
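What that validation can look like in practice is sketched below. This is a minimal illustration, not a production pattern: the `orders` and `region_contacts` tables, the two candidate queries, and the `validate` helper are all hypothetical, and the read-only check is a crude string test rather than a security boundary (a real deployment would rely on read-only credentials). The idea is to reconcile a generated query against an independently computed control total before anyone trusts its numbers.

```python
import sqlite3

def is_read_only(sql: str) -> bool:
    """Crude guard: accept a single statement that starts with SELECT or WITH.
    Not a security boundary; use read-only database credentials in practice."""
    body = sql.strip().rstrip(";").strip()
    first_word = body.split(None, 1)[0].lower() if body else ""
    return first_word in {"select", "with"} and ";" not in body

def validate(conn, generated_sql, control_sql, tolerance=1e-9):
    """Run generated SQL only if it looks read-only, then check that its
    last column sums to the control total."""
    if not is_read_only(generated_sql):
        return False, "rejected: not a single read-only statement"
    rows = conn.execute(generated_sql).fetchall()
    grouped_total = sum(row[-1] for row in rows)
    (control_total,) = conn.execute(control_sql).fetchone()
    if abs(grouped_total - control_total) > tolerance:
        return False, f"mismatch: {grouped_total} vs control {control_total}"
    return True, "ok"

# Hypothetical data: two contacts for EU, so a careless join duplicates EU orders.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, amount REAL)")
conn.execute("CREATE TABLE region_contacts (region TEXT, contact TEXT)")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(1, "EU", 100.0), (2, "EU", 50.0), (3, "US", 200.0)])
conn.executemany("INSERT INTO region_contacts VALUES (?, ?)",
                 [("EU", "ana"), ("EU", "ben"), ("US", "cy")])

control_sql = "SELECT SUM(amount) FROM orders"
good_sql = "SELECT region, SUM(amount) FROM orders GROUP BY region"
fanout_sql = """
    SELECT o.region, SUM(o.amount)
    FROM orders o JOIN region_contacts c ON c.region = o.region
    GROUP BY o.region
"""

good_result = validate(conn, good_sql, control_sql)
fanout_result = validate(conn, fanout_sql, control_sql)
drop_result = validate(conn, "DROP TABLE orders", control_sql)
```

The second query is syntactically fine and looks plausible, but the join fans out the EU rows and inflates revenue from 350 to 500. The reconciliation catches it, which is the kind of check a validator is paid to think of.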

The Validator Class

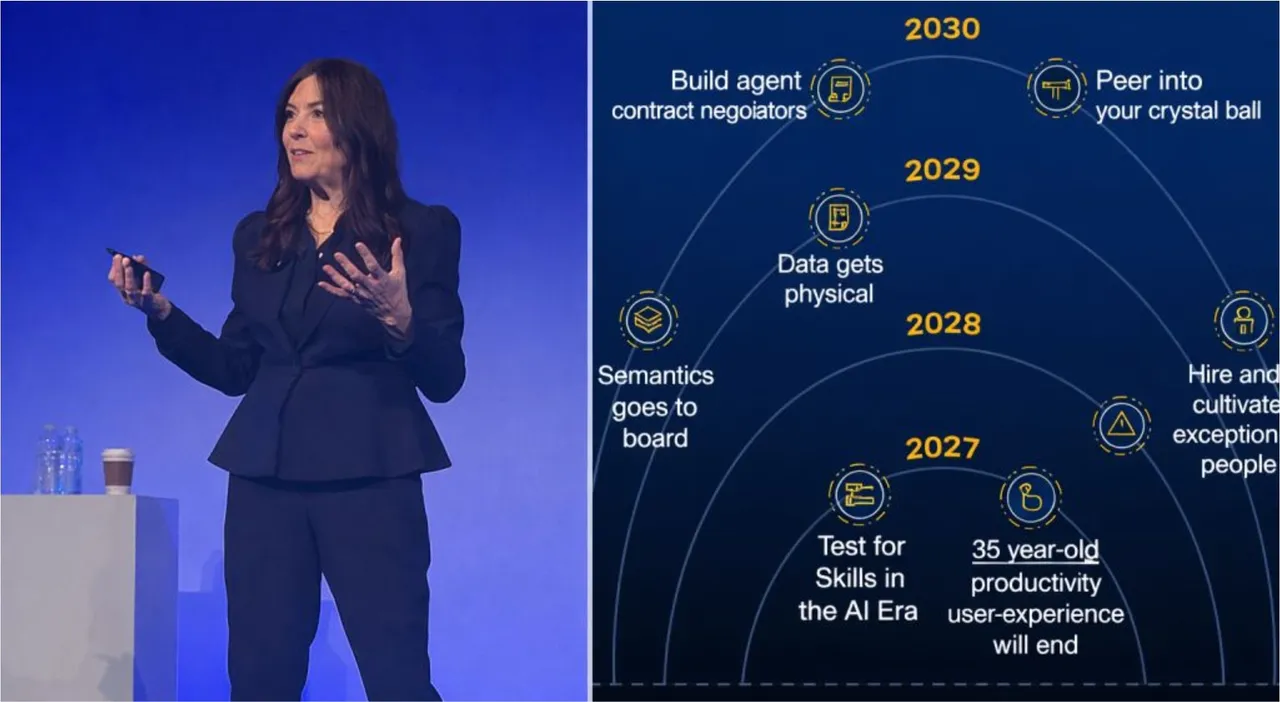

Gartner analyst Georgia O’Callaghan crystallized the shift at the recent Data & Analytics Summit: roles are pivoting from creation to curation. “Your role may shift from being a developer to acting more as a validator, where you review and adjust work by others, humans and agents”, she noted.

Source: Data & Analytics Summit

This isn’t speculative: companies like Atlan have already restructured their engineering teams to focus on teaching AI agents to code rather than writing code themselves.

This creates a bifurcation in the analytics job market. On one side, you have “fusion teams” where human analysts supervise AI agents, checking their outputs and handling edge cases. On the other side, you have the builders, the data engineers and architects designing the orchestration layers, semantic models, and agent frameworks that make the automation possible.

The validators are safer than the replaced, but they’re also capped. They become quality assurance for algorithms, which is a precarious position when the algorithms keep getting better. The builders, meanwhile, are capturing the value.

The Escape Routes

Analysts looking to avoid obsolescence have two viable exit ramps, both requiring a fundamental repositioning.

Move Down to the Infrastructure Layer

Data engineering isn’t immune to automation (pipelines can and will be generated by AI), but it involves architecture decisions, orchestration complexity, and system design that extend beyond pattern matching. As one practitioner noted, the future-proof data engineer isn’t just maintaining pipelines; they’re leveraging AI to create datasets that didn’t previously exist and partnering with analytics to build high-performance solutions that reduce time-to-insight. This requires moving past syntax fluency, which can mask critical gaps in systems thinking: understanding why a nested loop kills performance matters more than writing the query itself.
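To make the nested-loop point concrete, here is a small sketch comparing a nested-loop join with a hash join on the same inputs. The data and function names are illustrative, and real query engines are far more sophisticated, but the way the work scales is the point.

```python
from collections import defaultdict

def nested_loop_join(left, right):
    """Compare every left row with every right row: len(left) * len(right) steps."""
    comparisons = 0
    out = []
    for l_key, l_val in left:
        for r_key, r_val in right:
            comparisons += 1
            if l_key == r_key:
                out.append((l_key, l_val, r_val))
    return out, comparisons

def hash_join(left, right):
    """Index the right side once, then probe it once per left row:
    len(right) inserts plus len(left) lookups."""
    index = defaultdict(list)
    steps = 0
    for r_key, r_val in right:
        index[r_key].append(r_val)
        steps += 1
    out = []
    for l_key, l_val in left:
        steps += 1
        for r_val in index.get(l_key, []):
            out.append((l_key, l_val, r_val))
    return out, steps

# Hypothetical inputs: 2,000 orders spread across 500 customers.
orders = [(i % 500, f"order-{i}") for i in range(2000)]
customers = [(i, f"customer-{i}") for i in range(500)]

nl_rows, nl_work = nested_loop_join(orders, customers)
hj_rows, hj_work = hash_join(orders, customers)
```

Both functions return the same 2,000 joined rows, but the nested loop performs 1,000,000 comparisons while the hash join takes 2,500 steps. An AI tool can write either query shape; knowing which one the engine will actually execute, and why, is the systems thinking the paragraph above describes.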

Move Up to the Business Logic Layer

The other escape is abandoning the technical middle entirely and embedding as a domain expert who uses AI tools directly. Organizations are increasingly finding that subject matter experts with LLM access can bypass the analyst layer entirely, asking their own questions and interpreting their own results. The analyst who survives is the one who becomes the strategic interpreter, the person who knows what questions to ask, not just how to query the database.

The Proficiency Premium

Gartner predicts that by 2027, 75% of hiring processes will include testing or certification for AI proficiency. This represents a fundamental shift from credentialing based on tenure and traditional technical skills to credentialing based on practical AI collaboration ability.

Stanley Martin Homes has already implemented this shift, prioritizing candidates who understand “the under-the-hood abstraction of what’s happening with these models” and demonstrate willingness to “test and learn and fail.” Curiosity has replaced tenure as the primary hiring heuristic.

This aligns with the broader AI-driven fear that is freezing career mobility across tech, where professionals are realizing that staying put in a declining role is riskier than pivoting. The analysts who wait for their companies to “figure out AI strategy” are finding themselves automated out of the discussion entirely.

The Burnout Accelerant

There’s a darker undercurrent to this transition. As AI tools increase individual productivity, organizations are responding not by reducing hours but by increasing output expectations. This dynamic, in which AI engineers a burnout crisis despite its efficiency goals, hits analysts particularly hard: they’re expected to maintain the “human oversight” of AI-generated insights while also producing more analysis than ever before, effectively doing 1.5 jobs for 1.0 salaries.

Meanwhile, AI infrastructure costs are driving aggressive staff reductions: even as companies spend billions on AI infrastructure, they’re cutting the human infrastructure that supported the old way of working. The math is simple: if an AI agent can handle 60% of an analyst’s workflow, the same output needs roughly 60% fewer analyst hours, so headcount can fall even if total analytical output increases.
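The headcount arithmetic can be made explicit. The sketch below is a back-of-the-envelope model, assuming work divides evenly across analysts and the automated share needs no human review (both are simplifications, and the function names are illustrative). Automating a share of the workflow leaves the rest with humans, and any growth in output scales that remainder.

```python
def analysts_needed(current_headcount: float, automated_share: float,
                    output_growth: float = 1.0) -> float:
    """Headcount needed after automation.

    automated_share: fraction of the workflow handled by AI (0 to 1).
    output_growth: new total output relative to today (1.0 = unchanged).
    """
    if not 0.0 <= automated_share < 1.0:
        raise ValueError("automated_share must be in [0, 1)")
    return current_headcount * (1.0 - automated_share) * output_growth

def break_even_growth(automated_share: float) -> float:
    """Output growth at which headcount would stay flat."""
    return 1.0 / (1.0 - automated_share)

# Hypothetical team of 10 with 60% of the workflow automated.
same_output = analysts_needed(10, 0.6)            # output unchanged
doubled_output = analysts_needed(10, 0.6, 2.0)    # output doubles
flat_headcount_at = break_even_growth(0.6)
```

Under these assumptions, a team of 10 shrinks to about 4 at constant output and to about 8 if output doubles. Headcount only holds steady once output grows roughly 2.5 times.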

The Hard Pivot

The uncomfortable truth is that “data analyst” as a standalone career path is shrinking. The role is fragmenting into two distinct species: the data engineer who builds the AI infrastructure, and the domain expert who consumes it. The middle layer, the pure analyst who extracts data but doesn’t architect systems or own business outcomes, is being compressed out of existence.

For those currently in the trap, the escape requires abandoning the safety of SQL syntax mastery and either diving deep into system architecture or climbing into strategic business functions. The tools aren’t coming for your job tomorrow; they’re already here, sitting in your database connections, writing queries you used to get paid to write.

The analysts who survive won’t be the ones who learned to write better SQL. They’ll be the ones who learned to ask better questions of the machines writing it for them.