Cloud AI’s Ad Infestation Fuels the Local LLM Rebellion

As OpenAI plants ad code in ChatGPT’s Android beta, developers question whether cloud AI’s ‘free’ models are worth the cost.

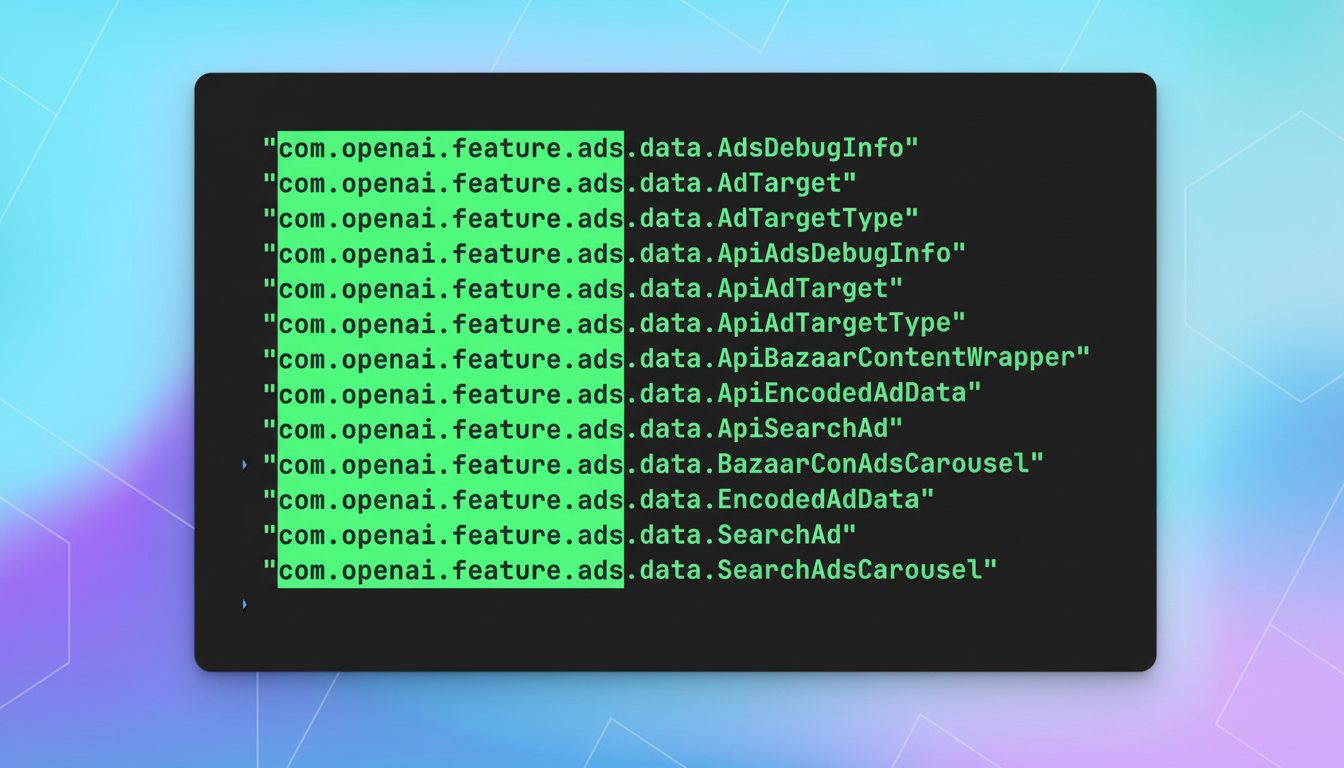

The cozy relationship between users and cloud AI services just hit an iceberg. When reverse engineer Tibor Blaho discovered ad system strings in ChatGPT’s Android beta build 1.2025.329, it lent weight to what many had suspected: the “free” AI lunch is ending, and the bill may include personalized ads placed directly in your conversations.

The build contains ad integration strings including “ads feature”, “search ad”, and “search ads carousel”, which signal OpenAI’s move toward Google-style monetization. The company that once positioned itself as a pure research organization is joining the ad-driven race to profitability.

The Ad SDK Reveal: More Than Just Code Strings

The findings from TestingCatalog suggest this isn’t just placeholder text; the strings point to a fully formed ad system integration. The presence of “bazaar content” suggests marketplace-style product displays, while “search ads carousel” points toward Google-style sponsored results embedded within ChatGPT responses.

What makes this particularly concerning for developers is that OpenAI, unlike third-party ad networks, controls both the application and the servers it connects to. As security researchers note, this gives them unprecedented access to behavioral patterns and local device data, enabling hyper-targeted advertising that traditional web ads could only dream of.

Privacy Advocates Sound the Alarm

The response from the developer community has been swift and decisive. On programming forums, many express frustration that their AI conversations, often containing sensitive business logic, proprietary code, or personal reflections, could become fuel for advertising algorithms. The prevailing sentiment among technical users is that privacy concerns have reached a tipping point.

As BleepingComputer notes, “GPT likely knows more about users than Google” due to the conversational nature of interactions. This creates a perfect storm for personalized advertising that traditional ad blockers will struggle to detect, let alone combat.

The Local LLM Alternative Gains Practical Urgency

While cloud AI services dangle convenience, local large language models offer something increasingly valuable: complete data sovereignty. Tools like Ollama, LM Studio, and open-source models running on local hardware ensure that conversations never leave your network.

The technical community has been building toward this moment for years. The E-SPIN Group, which focuses on local LLMs for data sovereignty, notes that “the only data that is truly private is the data that never leaves your control.” This philosophy is gaining traction as developers realize cloud AI’s fundamental trade-off: convenience in exchange for complete data exposure.

The timing could hardly be better for local alternatives. Hardware capable of running sophisticated models has become dramatically more accessible. A used RTX 3090 with 24GB of VRAM, once a luxury item, now provides serious image generation and model training capabilities for under $800. Meanwhile, Apple silicon Macs offer unified memory architectures well suited to running large language models locally.
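To make the 24GB figure concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at a given precision. The model sizes, the bit widths, and the 20% overhead factor for activations and cache are illustrative assumptions, not measurements from any specific model or runtime.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(
    params_billions: float,
    bits_per_weight: int,
    vram_gb: float = 24.0,   # the RTX 3090 figure from the article
    overhead: float = 1.2,   # assumed headroom for activations and cache
) -> bool:
    """Rough check: do the weights plus assumed overhead fit in the given VRAM?"""
    return weight_memory_gb(params_billions, bits_per_weight) * overhead <= vram_gb


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to illustrate the arithmetic.
    for size, bits in [(7, 16), (13, 4), (70, 4)]:
        needed = weight_memory_gb(size, bits)
        verdict = "fits" if fits_in_vram(size, bits) else "does not fit"
        print(f"{size}B at {bits}-bit: ~{needed:.1f} GB of weights, {verdict} in 24 GB")
```

Under these assumptions, a 7-billion-parameter model fits at 16-bit precision, while a 70-billion-parameter model does not fit on a single 24GB card even when quantized to 4 bits.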

The Business Case for Going Local

For enterprises, the calculus extends beyond privacy to compliance and cost predictability. Local deployment transforms AI from a recurring operational expense to a capital investment with predictable long-term costs. While cloud services charge per token with no upper limit, local infrastructure carries no per-token fee after the initial hardware investment; usage is limited only by what the hardware can process.

The financial breakdown is compelling: a solid local setup costs $9,000-$12,000 upfront with $100-$180 monthly power costs, while consistent cloud AI usage can run $600-$2,100 monthly. Over three years, those figures put local infrastructure at roughly $12,600-$18,480 against $21,600-$75,600 for cloud usage, a saving of anywhere from about $3,000 to more than $60,000 depending on usage, along with better performance consistency and no vendor lock-in.
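The arithmetic behind that comparison is easy to check. The sketch below uses only the cost ranges quoted above; the function names are made up for illustration, and the model ignores hardware depreciation, maintenance, and staff time.

```python
import math
from typing import Optional


def savings_after(months: int, upfront: int, local_monthly: int, cloud_monthly: int) -> int:
    """Cloud spend minus local spend over the period (positive means local is cheaper)."""
    local_total = upfront + local_monthly * months
    cloud_total = cloud_monthly * months
    return cloud_total - local_total


def months_to_break_even(upfront: int, local_monthly: int, cloud_monthly: int) -> Optional[int]:
    """First whole month by which local spend no longer exceeds cloud spend, or None if never."""
    monthly_saving = cloud_monthly - local_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront / monthly_saving)


if __name__ == "__main__":
    # Most favorable case for local: cheapest hardware, heaviest cloud bill.
    print(savings_after(36, 9_000, 100, 2_100), months_to_break_even(9_000, 100, 2_100))
    # Least favorable case for local: priciest hardware, lightest cloud bill.
    print(savings_after(36, 12_000, 180, 600), months_to_break_even(12_000, 180, 600))
```

At the favorable end, local hardware pays for itself within five months and saves $63,000 over three years. At the unfavorable end, break-even takes 29 months and the three-year saving shrinks to $3,120, so the case for going local depends heavily on how much cloud usage it actually replaces.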

Technical Implementation: Not Just for Experts

Setting up local AI infrastructure has matured dramatically. With tools like Ollama providing one-command installation and open web UIs mimicking ChatGPT’s interface, the barrier to entry has collapsed. Developers can now run sophisticated models locally with minimal configuration, bridging the gap between cloud convenience and local control.

The integration patterns have also standardized. As InfoQ recently covered, unified APIs now allow applications to switch between local and cloud models transparently. This means businesses can start with cloud services while building the capability to migrate sensitive workloads locally as needed.
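One common form of this pattern relies on the fact that Ollama exposes an OpenAI-compatible endpoint under `http://localhost:11434/v1`, so the same chat request shape can target either backend. The sketch below is not the specific API InfoQ covered; it only builds the request without sending it, and the `Backend` class, the model names, and the placeholder key are assumptions for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    model: str
    api_key: str


def select_backend(use_local: bool) -> Backend:
    """Pick a backend; only the connection details differ between the two."""
    if use_local:
        # Ollama's OpenAI-compatible endpoint. The model name is an assumed example,
        # and the key is a placeholder because a local server needs no real one.
        return Backend("local", "http://localhost:11434/v1", "llama3", "ollama")
    # Cloud endpoint; the model name is an assumed example.
    return Backend(
        "cloud",
        "https://api.openai.com/v1",
        "gpt-4o-mini",
        os.environ.get("OPENAI_API_KEY", ""),
    )


def chat_request(backend: Backend, prompt: str) -> dict:
    """Build (but do not send) a chat-completions request for the chosen backend."""
    return {
        "url": f"{backend.base_url}/chat/completions",
        "headers": {
            "Authorization": f"Bearer {backend.api_key}",
            "Content-Type": "application/json",
        },
        "json": {
            "model": backend.model,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


if __name__ == "__main__":
    request = chat_request(select_backend(use_local=True), "Explain unified memory.")
    print(request["url"])
```

Because the calling code never touches the URL or model name directly, moving a workload from cloud to local becomes a configuration change rather than a rewrite.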

The Inevitable Ad Escalation

History suggests this is just the beginning. As one developer noted, “They’ll add overly annoying ads, then offer premium to remove them, then introduce sponsored content for premium users, then create another tier to remove THAT.” This escalation pattern has played out across countless “free” services, each iteration pushing more users toward paid tiers while degrading the free experience.

The deeper concern is how AI-native advertising will differ from traditional formats. Unlike banner ads or search results, conversational AI can weave product recommendations directly into responses, making them virtually indistinguishable from organic content. This represents a fundamental shift in advertising, and in user trust.

The Path Forward: Pragmatic Hybrid Approaches

The most sensible approach for many organizations will be hybrid: using cloud AI for non-sensitive tasks while keeping proprietary data and workflows local. This balances the computational power of cloud services with the privacy guarantees of local processing.
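A hybrid setup needs some rule for deciding which requests stay local. The sketch below shows one simple, hypothetical approach: pattern-match each prompt for signs of sensitive content and route accordingly. The patterns and the `route` function are assumptions for illustration; a real deployment would need a policy tuned to its own data and should not rely on keyword matching alone.

```python
import re

# Illustrative patterns only; these are assumptions, not a vetted detection list.
SENSITIVE_PATTERNS = [
    re.compile(r"(?i)\b(api[_-]?key|password|secret|token)\b"),
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    re.compile(r"(?i)\b(confidential|proprietary|internal only)\b"),
]


def is_sensitive(prompt: str) -> bool:
    """Return True if any illustrative pattern appears in the prompt."""
    return any(pattern.search(prompt) for pattern in SENSITIVE_PATTERNS)


def route(prompt: str) -> str:
    """Send sensitive prompts to the local model and everything else to the cloud."""
    return "local" if is_sensitive(prompt) else "cloud"


if __name__ == "__main__":
    for prompt in [
        "Summarize the history of the printing press.",
        "Why does this fail? password = hunter2",
        "Review this CONFIDENTIAL product roadmap.",
    ]:
        print(f"{route(prompt):5s} <- {prompt}")
```

A stricter variant would invert the default, sending everything to the local model unless a prompt is explicitly marked safe for the cloud, which trades some convenience for a smaller chance of leaking data through a missed pattern.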

As the ad-driven future of mainstream AI becomes clearer, the local alternative looks less like a fringe movement and more like essential infrastructure for anyone who values data sovereignty, cost control, and predictable performance. The ChatGPT ad leak isn’t just another privacy story; it’s the catalyst that moves local AI from “nice to have” to “business critical.”

The revolution won’t be televised, but it might run entirely on your own hardware. And for developers watching OpenAI’s ad integration unfold, that’s becoming the most compelling feature of all.