While neobanks like Chime and SoFi run cloud-native stacks that deploy new features in hours, traditional banks are still orchestrating “digital transformation” initiatives that amount to putting a React frontend on a COBOL mainframe. The result is an architectural Frankenstein that costs more to maintain than a Manhattan penthouse and moves about as fast as one, too.

The Shitshow Behind the Curtain

Ask any data engineer who’s worked in banking about their stack, and you’ll get a variation of the same answer: it’s a “shitshow of legacy tools, sticky shit on the wall, and flavor of the month.” You will encounter every permutation under the sun, including cloud-native Azure deployments sitting side by side with mainframe systems from the 1970s that now run in virtualized environments atop modern servers.

The industry is keeping COBOL alive through sheer inertia. The U.S. runs on an estimated 95 billion lines of COBOL code, and the pool of developers who can maintain it is shrinking faster than a cheap cotton t-shirt. But here’s the kicker: that code still works. It processes transactions with the reliability of a Swiss watch, which makes the real organizational barriers to legacy modernization far more dangerous than any technical debt spreadsheet.

Why migrate your primary client data hub or bank account sub-ledger when the current system “doesn’t seem to fail on its core”? The business case for touching these systems is negative infinity, until it isn’t.

The FedNow Ticking Time Bomb

The Federal Reserve’s FedNow network and The Clearing House’s RTP rail have created a hard deadline that no amount of executive PowerPoint theater can negotiate. These systems require processing times under 50 milliseconds for real-time settlement. That’s a benchmark most legacy core banking systems, built on batch-first architectures that process transactions in nightly cycles, physically cannot meet.

Modern cloud-native cores can cut IT operational costs by 30-40% through automation and elastic scaling, but that requires actually migrating the data. And financial data migration is where dreams go to die.

The pain isn’t just the tools, it’s keeping data consistent across systems with different schemas and update cycles. Banks spend enormous effort on reconciliation and lineage rather than building fancy pipelines. When your “modern analytics layer” is just a bandage over a mainframe that thinks in end-of-day batches, you end up with data drift that can cause a customer to spend $500 on the legacy side while the new core remains blissfully unaware.

The $200 Million Migration Gamble

Let’s talk numbers. A credit union with under 100,000 accounts might escape a migration for $5-15 million over 12-18 months. A regional bank? $20-80 million and 18-30 months of purgatory. But if you’re a Tier-1 institution with millions of accounts, you’re looking at $200 million or more and a 3-5 year timeline that stretches into infinity.

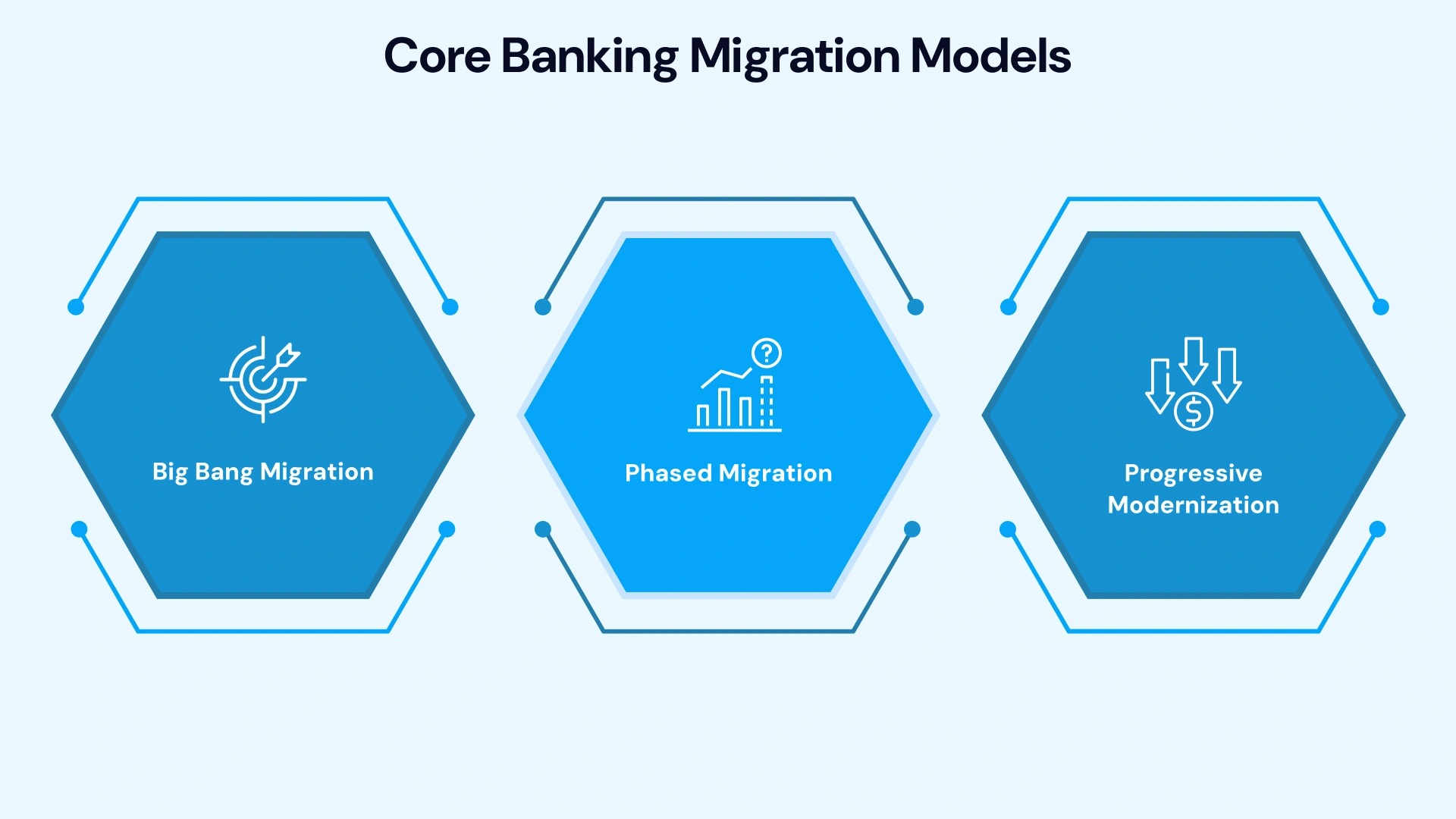

These aren’t just IT projects, they’re existential bets. The “Big Bang” migration strategy, where all systems cut over in a single event, is high-risk and suited only for smaller institutions. For everyone else, it’s the “Strangler Fig” pattern or nothing: gradually wrapping the legacy core with APIs, intercepting traffic, and routing requests to the new core as capabilities migrate.
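The routing idea behind the Strangler Fig pattern can be sketched in a few lines. This is a minimal illustration, not a production facade: the `StranglerRouter` class, the two handler functions, and the capability names are all hypothetical, and a real deployment would sit in an API gateway rather than in application code.

```python
from dataclasses import dataclass, field
from typing import Callable, Set

Handler = Callable[[dict], str]


@dataclass
class StranglerRouter:
    """Facade in front of both cores; routes each request by capability name."""
    legacy: Handler
    modern: Handler
    migrated: Set[str] = field(default_factory=set)

    def migrate(self, capability: str) -> None:
        # Start sending this capability's traffic to the new core.
        self.migrated.add(capability)

    def rollback(self, capability: str) -> None:
        # Send the capability's traffic back to the legacy core.
        self.migrated.discard(capability)

    def handle(self, capability: str, request: dict) -> str:
        target = self.modern if capability in self.migrated else self.legacy
        return target(request)


# Hypothetical stand-ins for the two cores.
def legacy_core(request: dict) -> str:
    return f"legacy:{request['op']}"


def modern_core(request: dict) -> str:
    return f"modern:{request['op']}"


router = StranglerRouter(legacy=legacy_core, modern=modern_core)
router.migrate("balance_inquiry")

print(router.handle("balance_inquiry", {"op": "get"}))  # modern:get
print(router.handle("wire_transfer", {"op": "send"}))   # legacy:send
```

Keeping the routing decision in one place is what makes per-capability rollback cheap: `router.rollback("balance_inquiry")` sends that traffic back to the legacy core without touching anything else.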

This approach requires running dual systems for years. Every transaction must write to both the legacy and new core simultaneously (dual-write), with automated reconciliation frameworks comparing balances hourly. Any variance above 0.001% halts the migration. Real-world challenges in migrating legacy data platforms to cloud environments show that undiscovered integrations and shadow IT feeds are the silent killers that blow budgets and timelines.
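As a rough sketch of what such a reconciliation check might look like, the snippet below compares per-account balances from both cores and decides whether to halt. The function names are hypothetical, and the definition of "variance" is an assumption: here it is the total absolute difference divided by the total absolute legacy balance. A real program would agree that definition with finance and risk up front.

```python
from decimal import Decimal
from typing import Dict, List, Tuple

# 0.001% expressed as a fraction.
VARIANCE_LIMIT = Decimal("0.00001")


def reconcile(
    legacy: Dict[str, Decimal], modern: Dict[str, Decimal]
) -> Tuple[Decimal, List[str]]:
    """Return (variance, mismatched account ids) for two balance snapshots."""
    mismatched: List[str] = []
    total_diff = Decimal("0")
    total_legacy = Decimal("0")
    # An account missing on one side is treated as a zero balance there.
    for account in sorted(set(legacy) | set(modern)):
        old = legacy.get(account, Decimal("0"))
        new = modern.get(account, Decimal("0"))
        total_legacy += abs(old)
        if old != new:
            mismatched.append(account)
            total_diff += abs(old - new)
    if total_legacy == 0:
        # Nothing to compare against: any difference counts as unbounded.
        variance = Decimal("0") if total_diff == 0 else Decimal("Infinity")
    else:
        variance = total_diff / total_legacy
    return variance, mismatched


def should_halt(variance: Decimal) -> bool:
    return variance > VARIANCE_LIMIT


legacy_snapshot = {"A": Decimal("1000.00"), "B": Decimal("250.00")}
modern_snapshot = {"A": Decimal("1000.00"), "B": Decimal("249.00")}

variance, mismatched = reconcile(legacy_snapshot, modern_snapshot)
print(mismatched, should_halt(variance))  # ['B'] True
```

`Decimal` is used instead of `float` so that the comparison itself cannot introduce rounding differences between the two sides.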

The Data Governance Quagmire

Master Data Management (MDM) in banking isn’t just about creating “golden records”, it’s about mapping complex relationships between individuals, businesses, guarantors, and beneficial owners for AML compliance while dealing with data scattered across GL, procurement, grants, payroll, and project systems.

Traditional ETL strips out the very context that makes financial data useful. When leadership questions a variance, analysts can’t drill down because the system shows aggregates, not the transactions behind them. This is why banks are drowning in compliance regulations yet can’t produce a unified view of a customer’s relationship across retail, commercial, and wealth management divisions.

The irony? The compliance complexities of microservices in finance mean that even when banks do modernize, they often create distributed systems that are harder to audit than the monoliths they replaced. A microservices architecture with thirty-seven services might look beautiful on a diagram, but if you can’t trace a transaction through the mesh for a regulator, you’ve just traded one nightmare for another.

Zero-Downtime or Bust

In 2026, the “scheduled maintenance window” is a relic. With FedNow integration, banking never sleeps. A three-hour migration outage doesn’t just trigger refunds, it creates “bank fail” trends on social media and serves as the perfect push factor for customers to switch to digital-native competitors.

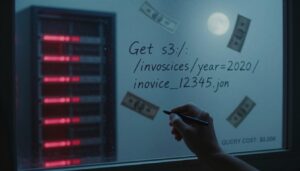

Zero-downtime migration (ZDM) isn’t aspirational, it’s an engineering constraint. This requires:

- Change Data Capture (CDC) tools like Debezium monitoring legacy transaction logs and streaming every insert, update, and delete to the new core in near real-time

- Blue-green deployments where traffic switches instantly between identical environments, with the old system kept warm for rollback

- Database sharding for large-scale systems, splitting massive databases into manageable chunks that can be migrated independently

- Canary migrations that roll out to internal staff first, then new customers, then retail accounts, then high-value commercial relationships

Each wave requires a 72-hour rollback window and a hypercare team standing by. Legacy decommissioning only happens after 90 days of clean reconciliation and explicit risk committee sign-off.
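To make the CDC item above concrete, here is a minimal sketch of the consumer side: applying change events to the new core's copy of the data. The events are simplified dictionaries modeled on Debezium's envelope (`op` of `c`, `u`, `d`, or `r` for snapshot reads, plus `before` and `after` row images). Real events carry more fields, and the `account_id` key column and the in-memory `target` dictionary are assumptions for illustration.

```python
from typing import Dict

Row = Dict[str, object]


def apply_change(target: Dict[object, Row], event: dict, key: str = "account_id") -> None:
    """Apply one simplified change event to the target store."""
    op = event["op"]
    if op in ("c", "r", "u"):
        # Create, snapshot read, and update all become an upsert of the
        # "after" image, so re-delivering the same event is harmless.
        row = event["after"]
        target[row[key]] = dict(row)
    elif op == "d":
        # Deleting a row that is already gone is a no-op.
        target.pop(event["before"][key], None)
    else:
        raise ValueError(f"unknown op: {op!r}")


events = [
    {"op": "c", "before": None, "after": {"account_id": "A1", "balance": "100.00"}},
    {"op": "u", "before": {"account_id": "A1", "balance": "100.00"},
     "after": {"account_id": "A1", "balance": "75.00"}},
    {"op": "c", "before": None, "after": {"account_id": "B2", "balance": "10.00"}},
    {"op": "d", "before": {"account_id": "B2", "balance": "10.00"}, "after": None},
]

state: Dict[object, Row] = {}
for event in events:
    apply_change(state, event)

print(state)  # {'A1': {'account_id': 'A1', 'balance': '75.00'}}
```

Writing the apply step as an upsert matters because log-based pipelines typically deliver events at least once; replaying the same ordered stream should leave the target in the same state.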

The Vendor Lock-in Trap

Oracle pricing and Broadcom’s recent licensing changes are pushing teams toward open source, but migration risk keeps them stuck longer than they’d like. Banks find themselves running stored-procedure-heavy workloads on Oracle RDS while trying to implement Kafka and AWS Glue for real-time processing. It is a bizarre hybrid where Databricks gets listed in data analyst job reqs while the actual core still runs on Sybase behind ODBC connections.

This creates a skills gap where engineers need to know both the old world (COBOL, mainframe JCL, screen-scraper macros over green screens) and the new (Kubernetes, event-driven architecture, CDC pipelines). The result is often a “flavor of the month” approach where new tools get layered on top without ever replacing the foundation.

The Path Forward (If You Must)

If you’re staring down this barrel, start with the dependency map. You cannot migrate what you haven’t mapped. Document every integration (fraud platforms, AML engines, card networks, ACH processors) along with its SLA requirements.
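One way to keep that map honest is to store it as data rather than as a diagram. The sketch below is an assumption about how such a map could be modeled, not a prescribed format: the `Integration` record, the system names, and the SLA figures are placeholders. It uses the standard library's `graphlib` (Python 3.9+) to derive an order in which dependencies come before the systems that rely on them, and it flags any dependency that nobody has documented yet.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Integration:
    name: str
    sla_ms: int  # placeholder SLA figure, not a real requirement
    depends_on: FrozenSet[str] = frozenset()


def undocumented(integrations: List[Integration]) -> Set[str]:
    """Dependencies that are referenced but have no entry of their own."""
    known = {i.name for i in integrations}
    referenced = {dep for i in integrations for dep in i.depends_on}
    return referenced - known


def migration_order(integrations: List[Integration]) -> List[str]:
    """Order in which every system appears after the systems it depends on."""
    missing = undocumented(integrations)
    if missing:
        raise ValueError(f"unmapped dependencies: {sorted(missing)}")
    graph = {i.name: set(i.depends_on) for i in integrations}
    return list(TopologicalSorter(graph).static_order())


integrations = [
    Integration("core_ledger", sla_ms=50),
    Integration("fraud_platform", sla_ms=200, depends_on=frozenset({"core_ledger"})),
    Integration("aml_engine", sla_ms=1000, depends_on=frozenset({"core_ledger"})),
    Integration("card_network", sla_ms=100, depends_on=frozenset({"fraud_platform"})),
]

print(migration_order(integrations))
```

Refusing to produce an order while dependencies are unmapped is deliberate: the map is only useful if gaps fail loudly instead of silently.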

Then choose your migration model based on your risk profile:

| Strategy | Risk Level | Timeline | Best For |
|---|---|---|---|
| Big Bang | High | 3-12 months | Credit unions, small community banks |
| Phased | Medium | 18-30 months | Regional banks |
| Strangler Fig | Low | 2-5 years | Tier-1 banks with complex landscapes |

Frame your migration as a risk management exercise, not a technology project. Regulators respond better to programs with explicit controls, rollback criteria, and communication plans. Build real-time reconciliation dashboards that update every 5 minutes during cutover, with immutable audit trails for every transaction.
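"Immutable" can mean many things in practice. One common technique, shown here as an illustrative choice rather than anything a regulator mandates, is a hash-chained log: each entry's hash covers the previous entry's hash, so editing any past record breaks verification. The `AuditTrail` class and the record fields are hypothetical, and this makes tampering detectable, not impossible.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


class AuditTrail:
    """Append-only log where each entry is chained to the one before it."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, str]] = []

    @staticmethod
    def _digest(prev_hash: str, payload: str) -> str:
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        # sort_keys gives a stable serialization for the same record.
        payload = json.dumps(record, sort_keys=True)
        entry_hash = self._digest(prev_hash, payload)
        self._entries.append({"prev": prev_hash, "payload": payload, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain and confirm no entry was altered or reordered."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append({"txn_id": "T1", "account": "A1", "amount": "25.00"})
trail.append({"txn_id": "T2", "account": "A1", "amount": "-10.00"})
print(trail.verify())  # True
```

In a real program the chain would live in write-once storage and the latest hash would be published somewhere the migration team cannot modify, which is what turns detection into a meaningful control.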

And remember: evidence of architectural fragility in major banking institutions isn’t hard to find. When a bank can’t reliably deliver emails to customers, it’s a window into how fundamentally broken enterprise infrastructure has become behind the marble facades.

The Inevitable Conclusion

The banking tech lag isn’t a failure of imagination, it’s a rational response to decades of accumulated risk. The systems work, they’re regulated to the hilt, and the downside of breaking them far exceeds the upside of modernizing, until the moment it doesn’t.

But that moment is arriving. FedNow, RTP, and AI-ready infrastructure requirements are forcing hands. Banks that treated migration as an IT project will fail. The ones that survive will have treated it as business-critical program management: executive sponsorship, phased approaches, and partners who understand both the engineering and the regulatory environment.

The “digital transformation” slides look great in the boardroom. But when your core banking system predates the personal computer, those slides are just expensive fiction.