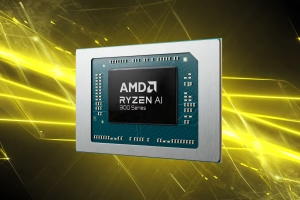

AMD’s latest move feels equal parts cunning and transparent. Unveiled at their AI Dev Day, the “Ryzen 395 AI Dev Unit” is, by their own engineer’s admission, just a Ryzen 395 chip with 128GB of memory in a Lenovo-manufactured box. There’s no magic new chipset, no bespoke PCIe lanes for clustering, and no unreleased silicon. It’s essentially a Framework Desktop or GMK X2 alternative, but with one crucial difference: a formal corporate invoice and enterprise support channel. For the individual tinkerer, this is just another SFF PC. For a procurement department, this is the difference between a sanctioned tool and a hobbyist gadget.

This is the true Trojan Horse play: bypass the technical purist and ship directly to the budget-conscious tech lead. Let’s unpack the machine and the strategy behind it.

The Spec Sheet: Desktop Unified Memory as a Service

The core value proposition is brutally simple: 128GB of unified memory addressed by a Ryzen 395’s powerful iGPU. No more hunting for elusive server GPUs with 80GB VRAM modules. No more building Frankenstein rigs with four GPUs and a 1200W power supply. It’s one moderately-sized box.

But the devil is in the application-specific details.

Developers immediately zeroed in on the capacity bottleneck. 128GB sounds immense until you subtract two crucial chunks: operating system overhead (often cited at a static 4GB on AMD systems, a point of contention) and, more importantly, the KV cache required for inference at usable context lengths. A developer with a GMKtec EVO-X2 running the same hardware reported that the memory usable by the GPU plateaus around 124GB. That’s not theoretical capacity; it’s the hard ceiling for model weights and KV cache combined.

So, what fits? The conversation is a masterclass in modern model-state calculus. Users report successfully running models like MiniMax M2.7 (230B parameters) with aggressive quantization like Q3_K_S or Q4. Others mention fitting Step Fun 3.5 Flash (a 196B MoE) or even the 397B-parameter Qwen 3.5 at Q4, although 397B parameters at 4 bits each come to roughly 200GB of weights alone, so that last report presumably relies on a heavier quant or on offloading part of the model. As one developer summarized succinctly, pushing beyond the 140B parameter range requires sacrificing either quantization quality, context size, or both. This is the pragmatic math of local inference.
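To make that math concrete, here is a minimal sketch of the budget calculation. It takes the 124GB ceiling from the report above; the bits-per-weight values are rough approximations for each quant family, and the attention geometry (60 layers, 8 KV heads, head dimension 128) is purely hypothetical, not the configuration of any model named here.

```python
# Back-of-the-envelope memory budget for local inference.
# All figures are illustrative assumptions, not measurements.

GPU_BUDGET_GB = 124.0  # usable GPU memory reported in the text


def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate quantized weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2) -> float:
    """KV cache size: one K and one V vector per layer per token."""
    values = 2 * layers * kv_heads * head_dim * context_tokens
    return values * bytes_per_value / 1e9


def headroom_gb(params_billion: float, bits_per_weight: float,
                budget_gb: float = GPU_BUDGET_GB) -> float:
    """Memory left for KV cache and buffers once the weights are loaded."""
    return budget_gb - weight_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # (label, parameters in billions, assumed average bits per weight)
    candidates = [
        ("230B @ ~3.5 bpw", 230, 3.5),
        ("230B @ ~4.25 bpw", 230, 4.25),
        ("196B @ ~4.5 bpw", 196, 4.5),
        ("397B @ 4.0 bpw", 397, 4.0),
    ]
    for name, params, bpw in candidates:
        print(f"{name}: weights {weight_gb(params, bpw):.1f} GB, "
              f"headroom {headroom_gb(params, bpw):+.1f} GB")

    # Hypothetical attention geometry, 32k context, 16-bit cache entries.
    print(f"KV cache: {kv_cache_gb(60, 8, 128, 32_768):.1f} GB")
```

A negative headroom means the weights alone exceed the ceiling. A small positive one means the context window has to shrink, or the KV cache has to be quantized, before the model is usable.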

The Benchmark: Good Enough for Real Work?

This isn’t “server-grade” performance. This is “can I unplug from the cloud for a specialized workflow” performance. Real-world llama.cpp performance optimizations have made AMD viable for inference, but with caveats.

Developers who’ve tried pushing the hardware to its limits report a stark reality. Running the IQ4_XS quant of MiniMax-M2.7 on AMD’s Vulkan driver (AMDVLK) starts at a promising 24 tokens/second, but generation slows to a sluggish 8 tokens/second at 32k context. Prompt processing is particularly “abysmal”, rendering the setup “unusable for real agentic coding workflows.” However, for lower-intensity uses like chatting or exploratory design brainstorming (“the model gave me from a 5k plan very good design directions”), it was acceptable.

This lines up with the trajectory we’ve seen: thanks to vLLM’s official support for Ryzen AI and improvements to the ROCm stack, performance is climbing. But memory bandwidth remains a ceiling. The Strix Halo architecture’s bandwidth, roughly equivalent to NVIDIA’s DGX Spark, is good, but not class-leading. The clamor in developer forums isn’t for more memory but for more memory bandwidth. AMD seems to have heard: the roadmap points to Medusa Halo, which promises a doubling, though potentially not until mid-to-late 2027.
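Why bandwidth matters so much can be sketched with a simple upper bound: during decoding, each generated token has to stream the active weights (and whatever KV cache has accumulated) out of memory, so tokens per second cannot exceed bandwidth divided by bytes read per token. The numbers below are assumptions for illustration only: a 256 GB/s theoretical peak, a hypothetical MoE with 10B active parameters, and an 8GB KV cache at long context. Real throughput lands well below this ceiling.

```python
# Rough upper bound on decode speed for a bandwidth-bound model.
# Every number below is an illustrative assumption, not a benchmark.


def decode_ceiling_tps(bandwidth_gb_s: float, active_params_billion: float,
                       bits_per_weight: float, kv_read_gb: float = 0.0) -> float:
    """Tokens/second if each token streams the active weights (plus the
    KV cache accumulated so far) from memory exactly once."""
    gb_per_token = active_params_billion * bits_per_weight / 8 + kv_read_gb
    return bandwidth_gb_s / gb_per_token


if __name__ == "__main__":
    BANDWIDTH = 256.0  # GB/s, assumed theoretical peak
    ACTIVE = 10.0      # billions of active params per token (hypothetical MoE)
    BPW = 4.25         # approximate bits per weight for a 4-bit quant

    short_ctx = decode_ceiling_tps(BANDWIDTH, ACTIVE, BPW)
    long_ctx = decode_ceiling_tps(BANDWIDTH, ACTIVE, BPW, kv_read_gb=8.0)
    doubled = decode_ceiling_tps(2 * BANDWIDTH, ACTIVE, BPW, kv_read_gb=8.0)

    print(f"short context ceiling:   {short_ctx:.1f} tok/s")
    print(f"long context ceiling:    {long_ctx:.1f} tok/s")
    print(f"same, doubled bandwidth: {doubled:.1f} tok/s")
```

The shape of the result is the point, not the exact figures: the ceiling drops as the KV cache grows, and doubling bandwidth doubles the ceiling at every context length.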

The Corporate Play: Why Bother Repackaging Known Hardware?

This is where the announcement transcends its mundane spec sheet. The target market isn’t the Reddit DIY crowd. It’s the IT manager at a midsize consultancy, the startup CTO, or the university lab administrator.

As one Australian system integrator noted, “Sparks (and spark clones) are 8-10k here in Australia, if I could get a proper backed unit in front of them for 4-5 that’d be good.” His point is that a “proper backed unit”, one with a VAT invoice, warranty, and vendor support, is a purchase order enabler. The HP competitor he mentions only offered 64GB; this 128GB unit, with corporate backing, slots exactly into that niche.

AMD is building a corporate-friendly on-ramp into local AI. It’s enterprise-grade packaging for prosumer hardware. For developers who’ve benefited from RDNA’s native AI validation suite, this is the next logical step: commoditizing the deployment target.

The Limitations: The Cluster This Isn’t

For developers dreaming of chaining a dozen of these boxes together for distributed inference: keep dreaming. A critical omission, loudly noted by the community, is the lack of a high-speed clustering port. The I/O is reportedly a standard consumer affair: USB4 and M.2, but no 8-lane PCIe slot. The reason is architectural: the chip’s limited I/O budget forces a choice, and AMD chose connectivity over expansion. This machine is an island.

This makes it a very different beast from scalable units like NVIDIA’s DGX line. It’s a personal, or small-team, inference silo. A single developer can spin up a large quantized model (e.g., MiniMax-M2.7-UD-Q3_K_S) and work offline. This mirrors the broader trend of efficient model architectures, designed to run on consumer AMD CPUs, coming onto the market.

The Verdict: A Calculated Opening Gambit

AMD isn’t trying to beat NVIDIA at its own game of H100s and dense compute. They’re carving out a new game entirely: accessible, sanctioned, local AI dev stations. The target user is a developer who needs to prototype, run specialized models offline for data privacy, or simply wants a fixed, predictable running cost (roughly 200W of total power draw) instead of variable, spiraling cloud API bills.
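The running-cost argument is easy to sanity-check. The sketch below assumes the box draws 200W around the clock; the electricity price, hardware price, and cloud bill are hypothetical placeholders to swap for your own figures.

```python
# Fixed power cost of a local box versus a recurring cloud bill.
# Prices and bills here are hypothetical placeholders.
from typing import Optional


def monthly_energy_kwh(watts: float, hours_per_day: float = 24.0,
                       days: int = 30) -> float:
    """Energy used in a month at a constant power draw."""
    return watts / 1000 * hours_per_day * days


def monthly_power_cost(watts: float, price_per_kwh: float,
                       hours_per_day: float = 24.0, days: int = 30) -> float:
    """Monthly electricity cost at a flat price per kWh."""
    return monthly_energy_kwh(watts, hours_per_day, days) * price_per_kwh


def break_even_months(hardware_cost: float, monthly_cloud_bill: float,
                      monthly_power: float) -> Optional[float]:
    """Months until the hardware pays for itself, or None if it never does."""
    savings = monthly_cloud_bill - monthly_power
    if savings <= 0:
        return None
    return hardware_cost / savings


if __name__ == "__main__":
    power = monthly_power_cost(watts=200, price_per_kwh=0.30)
    months = break_even_months(hardware_cost=4500, monthly_cloud_bill=500,
                               monthly_power=power)
    print(f"power: {power:.2f}/month, break-even: {months:.1f} months")
```

The comparison only holds if the local box can actually absorb the workload being billed in the cloud, which is where the capacity and throughput limits above come back in.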

The Ryzen 395 AI Dev Unit is not revolutionary hardware. It’s a convenient, vendor-locked package of existing technology. Its ultimate success won’t be judged by the hardcore local-LLM enthusiast who builds their own rigs, but by the number of corporate procurement departments who finally sign off on giving their AI teams a tangible, locally-controlled inference box. It’s a Trojan Horse because once inside the corporate walls, it normalizes AMD’s stack. And that’s a win, even if it’s just repackaged desktop hardware.