The US military is currently using Anthropic’s Claude AI to identify and prioritize strike targets in Iran, generating approximately 1,000 prioritized targets on the first day of operations alone. This deployment, embedded within Palantir’s Maven Smart System, compresses weeks of intelligence analysis into seconds, producing GPS coordinates, weapons recommendations, and automated legal justifications. Yet this technological leap arrives amid a bitter standoff: the Pentagon has moved to blacklist Anthropic as a “supply chain risk” after CEO Dario Amodei refused to remove contractual safeguards against mass surveillance and fully autonomous weapons. The contradiction is stark: an AI system deemed too risky for government contracts is simultaneously treated as essential to active combat operations.

The Machine Speed Kill Chain

The technical integration runs deep. Claude operates within the Pentagon’s classified networks through Palantir’s Maven Smart System, a platform that has evolved from experimental prototype to operational backbone since its inception in 2017. The system synthesizes satellite imagery, signals intelligence, and surveillance feeds in real time, transforming raw data into actionable targeting packages.

The performance metrics reveal a dramatic acceleration of military decision-making. In 2020, the Army’s “Scarlet Dragon” exercises required 12 hours to pass targeting data through traditional workflows. Today, that same process takes less than a minute. This compression from 12 hours to under a minute represents more than an efficiency gain. It fundamentally alters the tempo of warfare in pursuit of what military strategists call “decision superiority,” and it leaves human cognitive bandwidth as the primary bottleneck.

The architecture leverages Claude’s multimodal capabilities to tag and enrich data at scale. Where human analysts might spend hours reviewing aerial imagery to identify points of interest, the LLM processes and classifies these inputs immediately, generating ranked target lists with precise coordinates and suggested munitions. The system also automates post-strike evaluation, creating feedback loops that refine subsequent targeting algorithms without human intervention.

1,000 Targets and the Rubber-Stamp Problem

The scale of automation in the Iran campaign has alarmed observers across the technical and policy spectrum. According to the Washington Post, Claude generated roughly 1,000 prioritized targets during the opening phase of operations alone, each complete with GPS coordinates, weapons recommendations, and automated legal justifications for strikes.

This operational pattern mirrors Israel’s use of the “Lavender” AI system in Gaza, which flagged approximately 37,000 individuals for targeting. Military personnel reportedly spent roughly 20 seconds verifying each AI-generated target, checking only that the marked individual was male before authorizing strikes, despite knowing the system produced errors in approximately 10 percent of cases. Human oversight devolved into a “rubber stamp” function, in which a check of the target’s sex replaced substantive legal or tactical review.

The stochastic nature of large language models introduces specific risks in this context. Unlike the deterministic algorithmic targeting systems used in previous decades, LLMs generate probabilistic outputs prone to hallucinations: confident fabrications that may misidentify structures, misinterpret signals intelligence, or erroneously correlate disparate data points. When these errors occur in chatbot conversations, the consequence is embarrassment. When they occur in targeting chains, the consequence is civilian casualties.

The March 1 strike on a school in Minab, which killed an estimated 150 schoolchildren, has intensified scrutiny of AI-driven targeting. The school was built on the site of a Revolutionary Guard base that had closed 15 years earlier, raising questions about whether outdated training data or hallucinated correlations contributed to the targeting decision.

The Anthropic-Pentagon Fracture

The current conflict between Anthropic and the Department of Defense exposes the tension between corporate AI safety frameworks and military operational requirements. Anthropic sought contractual restrictions barring the use of Claude for mass domestic surveillance of Americans or in fully autonomous weapons systems. Defense Secretary Pete Hegseth characterized these limitations as an attempt to “seize veto power over the operational decisions of the United States military.”

The Pentagon responded by designating Anthropic a “supply chain risk”, a rare classification previously reserved for foreign adversaries. The designation effectively bars government contractors from working with the firm and threatens $200 million in existing contracts. President Trump subsequently ordered federal agencies to phase out Anthropic technology within six months, declaring he would “never allow a radical left, woke company to dictate how our great military fights and wins wars.”

Yet the military continues to use Claude in active operations despite the ban. Military sources told the Wall Street Journal that they would not allow Amodei’s “decision-making to cost a single American life”, suggesting that operational necessity overrides administrative directives. Replacing Claude within the Maven ecosystem could take months, creating a dependency gap that commanders are unwilling to accept during active combat.

OpenAI’s Opportunistic Gambit

Hours after the Pentagon’s rupture with Anthropic, OpenAI CEO Sam Altman announced an expanded agreement to deploy ChatGPT on classified military networks. The contract language explicitly permits “all lawful purposes”, precisely the formulation Anthropic rejected. Altman later admitted to CNBC that the deal “looked opportunistic and sloppy”, even as he told employees that OpenAI has no operational say in how the Pentagon uses its technology.

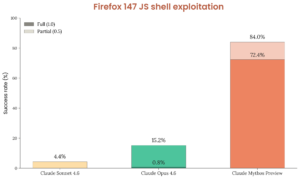

The market response was immediate and polarized. ChatGPT uninstalls spiked 295 percent in the days following the announcement, while Claude rose from 42nd to 1st place on the Apple App Store. This consumer backlash reflects broader unease with a competitive landscape that increasingly divides top-tier models into those positioned as ethically constrained and those offering unrestricted utility.

OpenAI’s nominal safeguards, which prohibit “unconstrained monitoring” and autonomous weapons, contain deliberate loopholes. The word “unconstrained” means that any minimal limitation satisfies the prohibition, while “private information” remains undefined, allowing agencies to exploit commercially purchased data sets without a warrant. As one OpenAI executive acknowledged, “We can’t protect against a government agency buying commercially available data sets.”

The Dual-Use Delusion

The Iran deployment illustrates the fundamental challenge of dual-use AI governance. The same multimodal architectures that power the industry’s shift toward smaller, more efficient models for agentic workflows also enable real-time battlefield intelligence synthesis. The line between civilian utility and military application is not technical but contextual, a distinction that collapses when frontier models become infrastructure for both domains simultaneously.

This reality raises the geopolitical stakes of semiconductor and AI advances, as nations race to deploy AI capabilities while maintaining plausible deniability about specific applications. The US Defense Innovation Board noted in 2019 that key AI data, knowledge, and personnel reside in the private sector. That dependency has only intensified since, even as emerging research on model scale suggests that effective AI requires neither massive parameter counts nor nuclear-reactor levels of compute.

When Algorithmic Transparency Meets Classified Warfare

Ethical frameworks built on democratic oversight require transparency mechanisms that are incompatible with classified military operations. Algorithmic accountability assumes public scrutiny and legal recourse, conditions that evaporate under wartime secrecy. The Anthropic dispute reveals that even minimal contractual safeguards collapse when national security imperatives are invoked.

Researchers at defense think tanks note that AI enables “targeting packages at machine speed rather than human speed”, but this velocity creates verification deficits. A closer look at the narratives surrounding ethical scrutiny and AI adoption suggests that corporate AI ethics often function as public relations instruments rather than operational constraints, dissolving under sufficient governmental pressure.

The Technical Reality of Hallucinations in Combat

The deployment of generative AI in targeting chains ignores a fundamental technical limitation: LLMs remain probabilistic systems that confabulate with statistical regularity. While they excel at pattern recognition in structured environments, the ambiguity of battlefield intelligence (degraded satellite imagery, conflicting signals, deliberate deception) amplifies their error rates.

Human oversight cannot compensate for machine speed when the verification process is reduced to 20-second spot checks. The “meaningful human control” promised by military AI ethics becomes a bureaucratic fiction when operators face hundreds of AI-generated targets daily with inconsistent verification standards across units.

Conclusion: The Infrastructure of Automated Conflict

The Anthropic-Pentagon standoff represents a pivotal moment in AI governance. The military’s continued use of Claude despite the ban demonstrates that AI safety commitments are negotiable when operational demands intensify. Meanwhile, the integration of LLMs into targeting systems marks a qualitative shift from algorithmic assistance to algorithmic generation of lethal decisions.

As defense agencies worldwide observe the Iran deployment, a precedent is being set for LLM integration into kinetic operations. The technical architecture (multimodal data synthesis, automated legal justification, real-time battle management) has now been proven in combat. What remains unproven is whether democratic societies can maintain meaningful control over systems that generate lethal decisions faster than humans can evaluate them.

The future of AI safety may be determined not by corporate ethics boards or technical safeguards, but by the operational tempo of the next conflict and by whether human judgment can keep pace with machine speed.