While everyone obsesses over Qwen3’s text generation capabilities, the real revolution is happening in its TTS pipeline. The system doesn’t just clone voices, it reduces them to manipulable embeddings that enable semantic search, emotion control, and algebraic voice operations that would make a linear algebra professor giddy.

From Waveform to Vector: How Qwen3 Embeds Your Voice

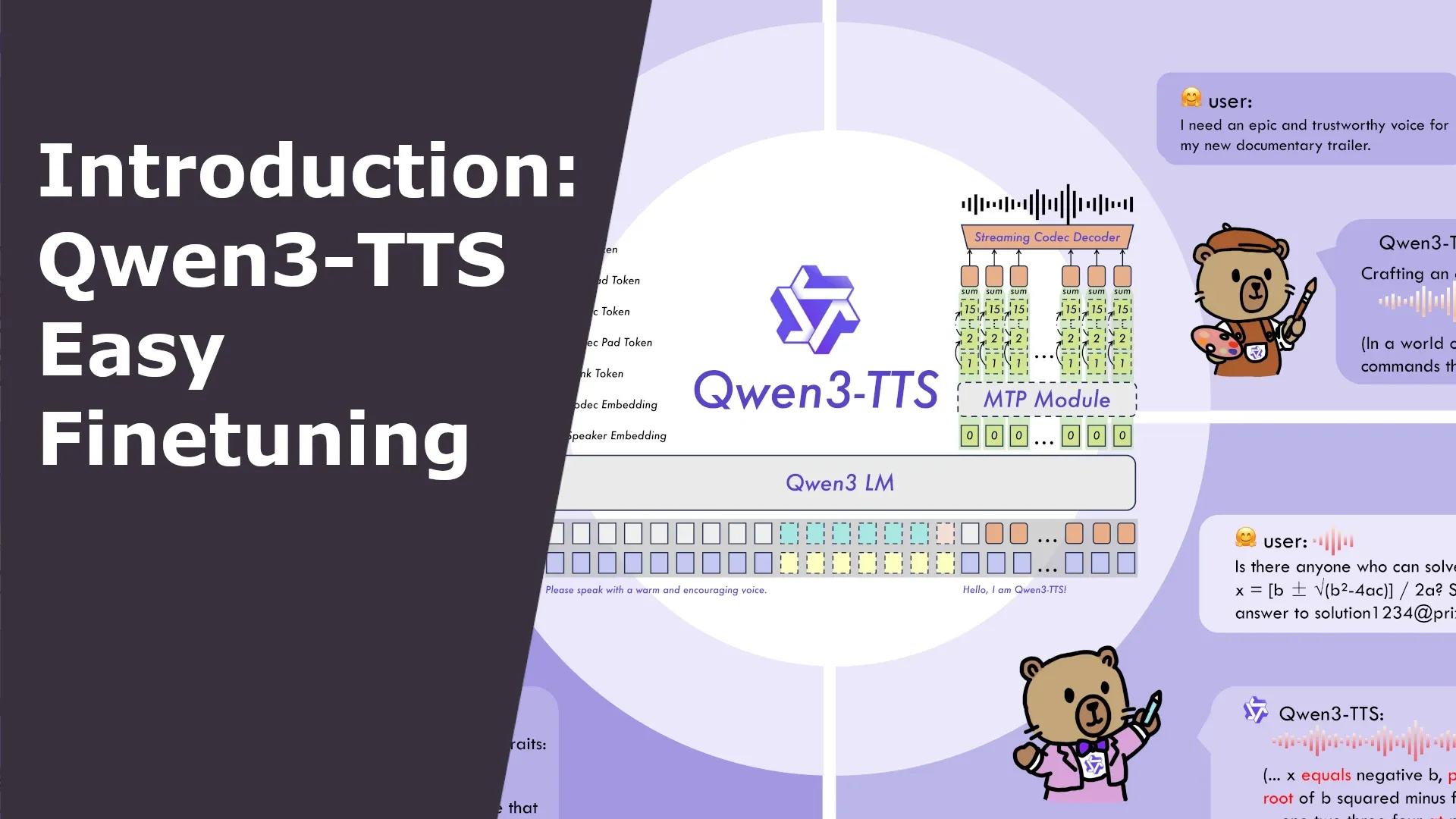

The embedding model itself is surprisingly lightweight, just a few million parameters, making it feasible to run on-device or in browsers using ONNX-optimized versions. One developer has already extracted these encoders and published them on HuggingFace, complete with web-ready inference tools. This means voice cloning isn’t just for researchers with GPU clusters anymore, it’s becoming a browser-based utility.

The Voice Algebra Nobody Asked For (But Everyone Will Use)

Want to make a voice sound more robotic? Find the dimensions that correlate with synthetic speech and amplify them. Need to inject emotion? There’s a vector for that. Community experiments have already demonstrated that sparse autoencoders can identify the specific sub-dimensions controlling gender, pitch, and emotional valence. With just a small annotated dataset (n=10), you can isolate the k most correlated dimensions and transform them directly.

This isn’t theoretical. The vLLM-Omni fork with speaker embedding support already enables real-time voice manipulation during inference. You can embed a reference voice, modify the vector programmatically, and generate speech from the transformed embedding, all in a single pipeline. The performance improvements are substantial: manual KV-caching for the code predictor and regional torch.compile optimizations deliver real-time factors below 0.7 for medium-length utterances.

Semantic Search: Finding Voices Without Metadata

For content creators producing multi-voice dubbing, this is transformative. Rather than fine-tuning separate models for each character, a process requiring 10-30 minutes of clean audio per voice, you could potentially maintain a library of voice embeddings and interpolate between them. The Qwen3-TTS fine-tuning guide shows how single-speaker models achieve stability that zero-shot cloning can’t match, but embedding-based approaches could offer a middle ground: consistent character voices with less data.

The implications extend beyond entertainment. Speaker diarization, identifying who spoke when in a conversation, becomes trivial when you have robust embeddings. Current open-source diarization models struggle with overlapping speech and long recordings, but vector-based speaker identification could fundamentally change that.

When Your Voice Becomes a Commodity

The ethical framework hasn’t caught up. Reusing code without explicit permission is a legal minefield, but what about reusing voice embeddings? If someone records your voice at a public event, embeds it, and uses that vector to generate synthetic speech, have they violated your rights? The embedding is derived from your voice but isn’t the voice itself, it’s a mathematical transformation. Current copyright law has no framework for this.

Voice cloning apps like Voicebox already demonstrate how accessible this is becoming. Built on Qwen3-TTS, it offers instant cloning from seconds of audio, multi-voice composition tools, and local processing that keeps data private. The app is free, open-source, and runs on consumer hardware. The barrier to voice cloning isn’t technical anymore, it’s purely ethical.

The Latency Wars and Real-Time Manipulation

The answer appears to be yes. The Swift implementation running on MLX achieves real-time factors of 0.55 on M2 Max chips, and the vLLM-Omni optimizations bring server-side inference into the sub-100ms range. This means live voice transformation is feasible, think real-time dubbing, accessibility tools that modify speech characteristics on the fly, or gaming voice chat where your voice morphs based on character state.

The technical debate around Qwen3-TTS latency and real-time voice synthesis performance has been heated, with some calling it benchmarketing and others demonstrating genuine sub-100ms performance. The embedding manipulation adds another layer: even if generation is fast, can vector transformations keep pace? Early experiments suggest the embedding operations are negligible compared to the autoregressive generation, making real-time voice algebra practical.

Fine-Tuning vs. Vector Manipulation: Two Paths Forward

The other camp is exploring embedding-based manipulation as a lightweight alternative. Why retrain a 600M parameter model when you can just adjust a 1024-dimensional vector? The trade-off is control: fine-tuning captures nuanced pronunciation patterns and emotional range, while vector manipulation offers coarse-grained transformations.

For multi-voice dubbing scenarios, the embedding approach could be revolutionary. Instead of maintaining separate models for each character, you could have a single base model and a library of voice embeddings. Switching characters becomes a vector swap, not a model swap. The Qwen3-TTS Studio ecosystem is already building infrastructure for this, though multi-speaker fine-tuning is still on the roadmap.

The Open-Source Advantage and the Coming Flood

This democratization means voice cloning is no longer confined to well-funded labs. A developer with a modest GPU can rip the embedding encoder, build a voice database, and offer semantic search and manipulation as a service. The lightweight TTS models contrasting with Qwen3’s approach show there’s demand for smaller, faster alternatives, but Qwen3’s embedding architecture offers something those models can’t: true voice algebra.

Practical Implementation: How to Start Manipulating Voices

- Extract embeddings using the standalone encoder from marksverdhei’s collection

- Run inference with the vLLM-Omni fork that supports speaker embedding parameters

- Transform vectors using simple arithmetic or more sophisticated sparse autoencoders

- Generate speech from modified embeddings in real-time

The ONNX versions enable browser-based inference, while the full PyTorch models run on anything from Apple Silicon to DGX Spark. The community is already building web apps that make this accessible to non-technical users, abstracting away the vector mathematics behind sliders for “gender”, “age”, and “emotion.”

Conclusion: Your Voice Is Now a API Parameter

We’re moving from voice cloning as a novelty to voice manipulation as a primitive operation. In the same way we adjust temperature and top-p for text generation, we’ll soon adjust voice embeddings for speech synthesis. The technology is here, it’s open-source, and it’s getting easier to use every week.

The question isn’t whether we can manipulate voices mathematically, we can. The question is what happens when anyone with a laptop and a few seconds of your audio can do it. The embedding genie is out of the bottle, and it’s a 1024-dimensional vector that looks suspiciously like your vocal identity.

Ready to experiment? The tools are waiting. Your voice is already a vector, you just didn’t know it yet.