The Strangler Fig pattern has become architecture’s favorite security blanket. Wrap your legacy monolith in a shiny new facade, incrementally replace components, and avoid the big-bang rewrite that killed Netscape. Clean, elegant, theoretically perfect. But in high-stakes environments, think telecom OSS handling subscriber events at 3 AM with absolute zero tolerance for downtime, theory collides with reality like a Perl script trying to parse JSON.

When your core system is C++ structs held together by undocumented glue scripts and the original developers have vanished into retirement or rival companies, the Strangler Fig doesn’t just fail, it becomes a complexity multiplier that threatens the very stability it promises to protect.

The Black Box Problem

The Strangler Fig pattern assumes you can intercept traffic at clean boundaries, APIs, event streams, or well-defined interfaces. But what happens when your “interface” is a collection of internal state mutations buried in header files that haven’t been touched since 2003?

Consider the scenario facing teams maintaining core OSS platforms from the early 2000s: billing and mediation layers written in C++, stitched together with Perl glue scripts containing critical business logic that exists nowhere else. Nobody who wrote this code still works at the company. The system handles subscriber events at massive scale, 24/7, with genuine zero tolerance for downtime, not “five nines”, but actual zero.

Management wants AI/ML integration for predictive network fault detection and automated ticket routing. The problem is obvious: you can’t train models on data you can’t cleanly extract. And you can’t cleanly extract data from a system where half the logic lives in undocumented structs and Perl one-liners that parse log files through regex black magic.

This is where incremental rewrites fail without an anti-corruption layer. Without clean seams to strangle, you’re not performing surgery, you’re performing acupuncture on a corpse, hoping it stands up and walks.

The AI Modernization Trap

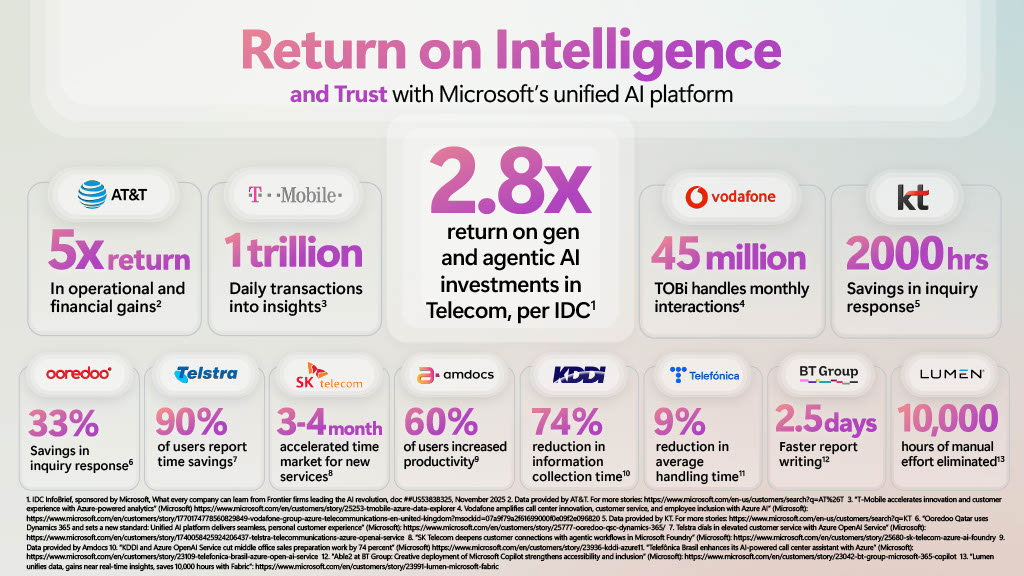

The pressure to modernize isn’t just technical, it’s existential. According to recent IDC research cited by Microsoft, telecom operators are achieving 2.8x return on generative and agentic AI investments, with leading companies hitting up to 5x returns. Far EasTone Telecom has pushed nearly 60% of its NOC operations to be AI-assisted, executing 10,500 operational tasks monthly with average response times of 16 seconds.

But here’s the trap: these AI initiatives require clean, observable data pipelines. When your legacy system lacks event hooks or clean APIs, you’re faced with a choice, attempt to strangle a system that has no neck, or admit that your “incremental” migration requires months of read-only observation just to understand what the system actually does.

The Google Cloud approach to “agentic telcos” assumes Level 4 and 5 autonomy, networks that identify, diagnose, and fix problems without human intervention. Their framework relies on digital twins built from Cloud Spanner Graph and unified data layers. None of this works when your data is trapped in a black box of legacy C++ where “documentation” means a comment saying // TODO: fix this hack later from 1998.

Why the Fig Can’t Strangle Here

The canonical Strangler Fig implementation looks beautiful in conference slides: feature flags, clean routing logic, gradual traffic shifting. The LobeHub implementation shows a clean four-line controller pattern in C#:

public async Task<HttpResponseMessage> StartWorkflowExecution([FromBody] EyeShareWorkflowDesignerModel workflowDesigner)

{

var tenant = Features.ResolveTenant(Request.Headers);

if (await FeatureResolverSingleton.GetIsFeatureEnabledAsync(Features.WorkflowStrangle, tenant))

return CreateResponseMessage(await strangledService.Value.StartWorkflowExecution(workflowDesigner));

return CreateResponseMessage(legacyService.Value.StartWorkflowExecution(workflowDesigner, EyeShareToken));

}

Elegant. But this assumes your legacy system has a StartWorkflowExecution method you can call. It assumes you can lazy-load services without triggering side effects that bill customers incorrectly or drop network packets. In telecom OSS, that legacyService might be a singleton that mutates global state, writes directly to hardware buffers, and sends billing records via a protocol that predates HTTP.

When you try to strangle a system with no clean boundaries, you don’t get a gradual migration. You get a distributed system where every request fans out to both the old and new code paths, creating data consistency nightmares that only surface at 3 AM when European traffic peaks. The “zero downtime” promise becomes “zero downtime except for the three outages you caused trying to keep both systems in sync.”

The Organizational Death Spiral

Even if you solve the technical challenges, you hit the human wall. Teams maintaining these legacy systems aren’t sitting around waiting for modernization projects. They’re already maxed out keeping the current system alive, patching the Perl scripts, restarting the C++ daemons that leak memory, and explaining to executives why the billing run took six hours instead of four.

Attempting a Strangler Fig migration with your existing team is like asking firefighters to rebuild the house while it’s still burning. The cognitive load of maintaining legacy operations while building the new system creates a reliability debt that compounds weekly. Engineers context-switch between debugging memory leaks in 20-year-old C++ and writing modern Java microservices, resulting in mistakes in both domains.

This touches on the organizational factors behind failed legacy rewrites. The pattern assumes you have bandwidth for parallel implementation. In reality, you need external teams who can focus entirely on the new architecture while your internal team keeps the lights on. But finding vendors who can navigate undocumented C++ and Perl without suggesting a full rewrite is its own challenge, most either dodge the question or immediately propose burning it all down and starting fresh.

What Actually Works (Hint: It’s Not Sexy)

Teams that have survived this specific hellscape report that hybrid strategies offer the only viable path. Instead of attempting to strangle the entire monolith, you identify specific emission points, mediation layers, data export hooks, or reporting interfaces, and perform targeted rewrites there. You use a limited Strangler Fig pattern only around these specific boundaries, not the core.

The counterintuitive first step isn’t writing new code, it’s read-only observability. Spend three to four months instrumenting at the network and database level, capturing data flows without touching the core. Call it a “data foundation sprint” if you need to sell it to leadership, what you’re really doing is mapping the minefield before marching through it.

This approach aligns with the shift toward modular OSS/BSS architectures that telecom vendors are now advocating. Rather than replacing the entire stack, you extract specific microservices, billing mediation, network inventory, fault management, while leaving the core black box untouched.

The Amdocs agentic operating system (aOS) approach demonstrates this reality: it doesn’t replace your existing BSS/OSS stacks but operates on top of them, coordinating cross-domain workflows without requiring you to untangle the legacy spaghetti first. It acknowledges that some systems are too critical and too complex to strangle incrementally.

When to Admit the Pattern Doesn’t Fit

There’s a heretical thought that architecture blogs rarely admit: sometimes the full rewrite is the correct answer, despite the industry memes about Netscape. When your system lacks observable state, clean boundaries, and the original developers are ghosts, the “safe” incremental approach might actually be riskier than a greenfield replacement with parallel run validation.

The general causes of migration failure timelines often stem from pretending that incremental approaches reduce risk when they actually extend the danger window. A six-month big-bang rewrite with comprehensive testing and parallel operation might be safer than three years of dual-path routing where every change touches both legacy and modern code.

If your Strangler Fig implementation requires you to maintain two full implementations of business logic indefinitely, because you can’t safely delete the old code, you haven’t modernized. You’ve just doubled your maintenance burden and created a distributed system with all the failure modes of the old architecture plus all the failure modes of the new one.

The Hard Truth

The Strangler Fig pattern works beautifully when you have clean APIs, event-driven architectures, and teams with bandwidth for parallel implementation. It fails catastrophically when applied to systems that evolved before API-first design, where business logic bleeds across process boundaries and data consistency depends on timing quirks that emerged over decades of patches.

In telecom OSS and similarly high-stakes environments, the question isn’t “How do we strangle this system?” It’s “Do we have the observability to even attempt it without causing an outage?” If the answer is no, your first architecture decision shouldn’t be about migration patterns. It should be about how to watch without touching, because you can’t modernize what you can’t see, and you can’t strangle what has no neck.