For years, the database orthodoxy had a simple rule: JSON is a transport format, not a storage format. Store it in your database and you deserve the performance nightmare coming your way. The relational purists weren’t entirely wrong, text JSON parsing is shockingly expensive. But they made one critical miscalculation: they assumed JSON would stay text.

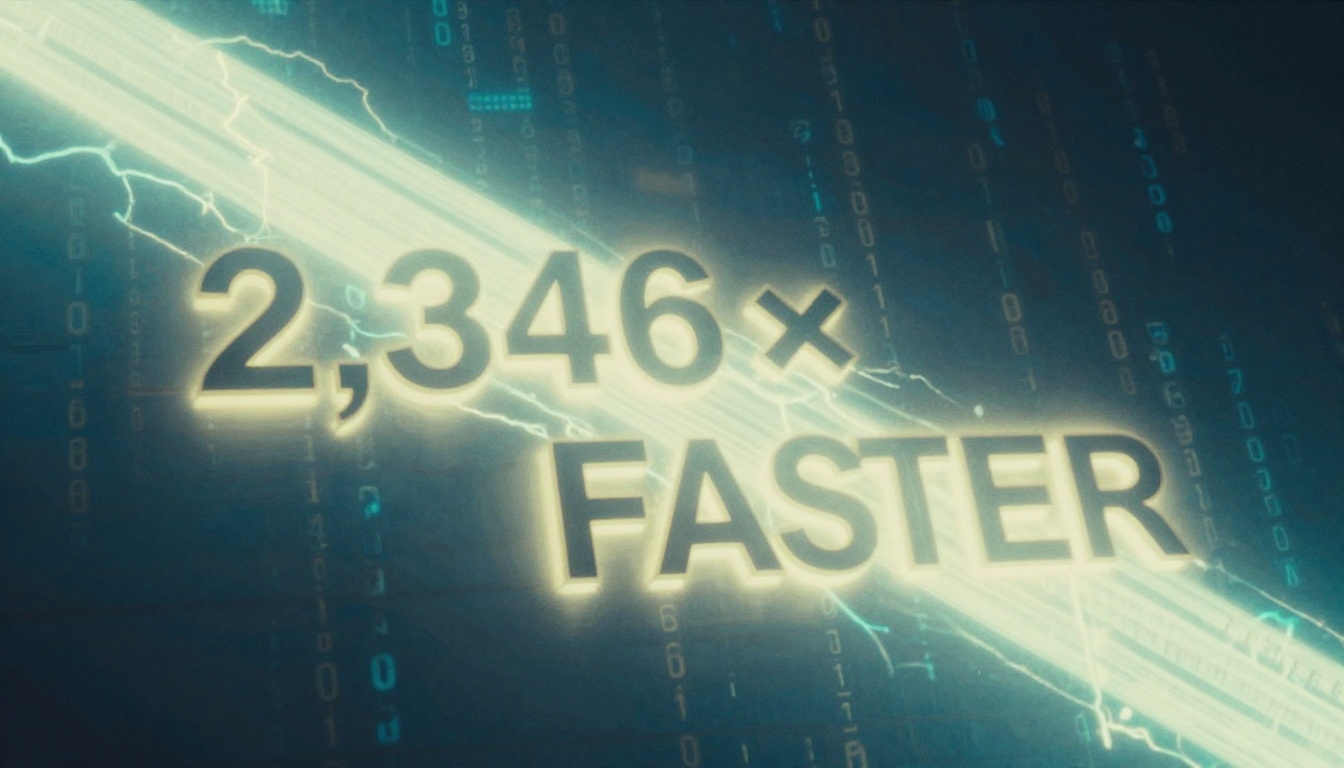

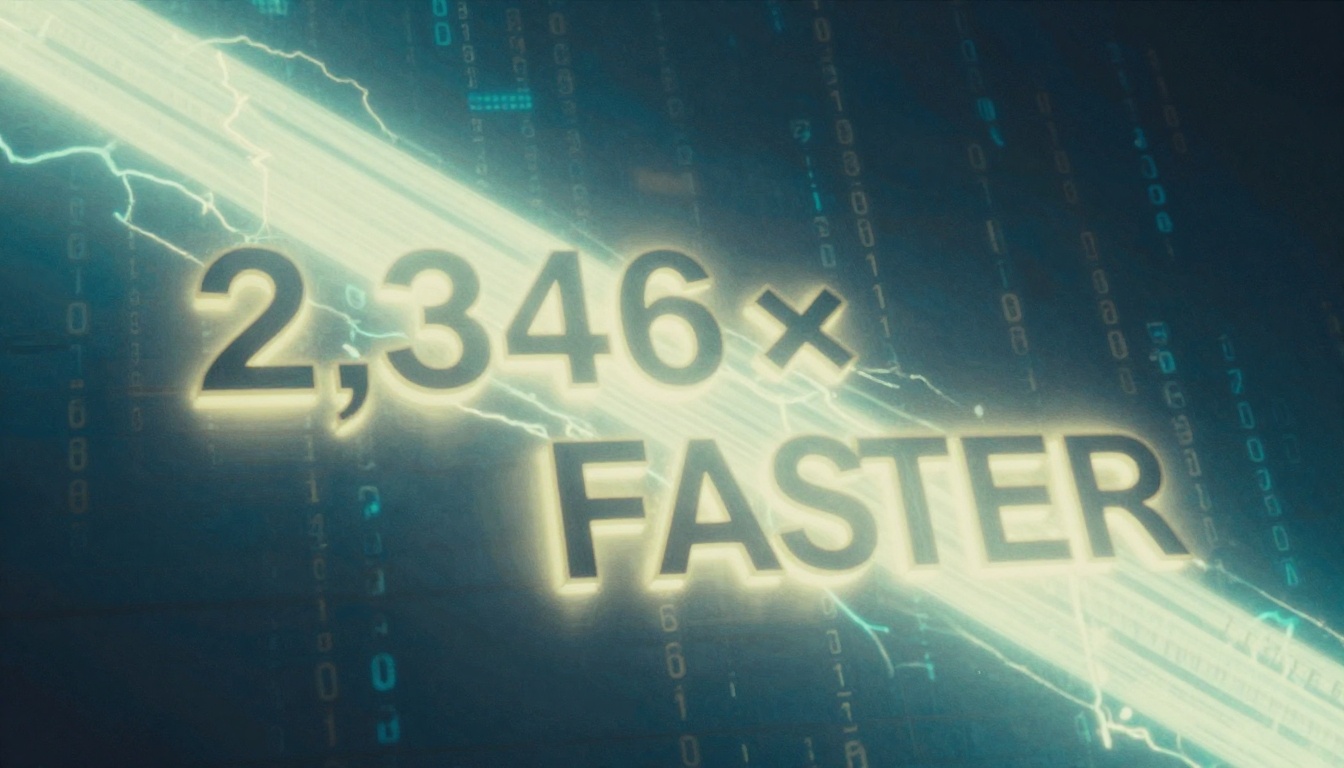

They were wrong. Binary encoding didn’t just fix JSON’s performance problems, it weaponized semi-structured data into something that can outperform rigid schemas in real-world workloads. The proof? A straightforward binary encoding delivers 2,346x faster lookups compared to parsing text JSON on every query. That’s not an incremental improvement, that’s a fundamental shift in what’s possible.

The Performance Problem Nobody Wanted to Admit

Let’s start with the uncomfortable truth: parsing JSON text is shockingly expensive, even with the fastest parsers available. Using simdjson, one of the fastest JSON parsers that can hit multi-GB/s throughput, a realistic query on the 617KB twitter.json takes 136,784 nanoseconds to parse and extract a single nested value (doc["statuses"].at(75)["user"]["name"]). For a million rows, that’s nearly two and a half minutes of pure parsing time. You could brew coffee while your “modern” database grinds through what should be a simple lookup.

The benchmarks reveal something even more disturbing:

BM_simdjson_object_ondemand/1000/0 2267 ns

BM_simdjson_object_ondemand/1000/250 3867 ns

BM_simdjson_object_ondemand/1000/500 5414 ns

BM_simdjson_object_ondemand/1000/750 7207 ns

Notice the pattern? Lookup time grows linearly with position. Accessing the 750th key in a 1,000-element object takes roughly 3x longer than accessing the first. That’s because plain text JSON has no random access. You can’t jump to the 750th element without walking past the previous 749. Every query pays the cost of parsing data it doesn’t even need.

This is why relational purists laughed at the idea of production JSON workloads. And honestly? They had a point.

Binary Encoding: The 2,346x Speedup That Changed Everything

The breakthrough wasn’t some revolutionary new data model, it was recognizing that JSON’s problem wasn’t its structure, but its representation. Convert that same JSON into a binary format once, store it, and suddenly you’re not parsing anymore. You’re doing pointer arithmetic.

Here’s what a minimal binary encoding looks like in practice. Take this JSON:

{"b": 12345, "c": false, "a": "hello"}The binary representation becomes:

[1 byte type=object][3 index pointers]

[1 byte value="a"][1 byte type=string][5 bytes value="hello"]

[1 byte value="b"][1 byte type=number][4 bytes value=12345]

[1 byte value="c"][1 byte type=boolean_false]Two critical optimizations make this fly:

- Type tags are single bytes: No more scanning for

{and:characters. The parser knows exactly what it’s looking at instantly. - Sorted keys enable binary search: Instead of linear scanning, you can jump directly to the key you need in O(log N) time.

The structure uses a simple node format:

typedef struct VarNode {

VarType type : 4, // 4 bits for type

uint32_t length : 28, // 28 bits for length/element count

// Data follows immediately

} VarNode;For arrays and objects, metadata entries store relative offsets to child nodes, enabling true random access. Want the 500th element of a 1,000-item array? Jump directly to it. No scanning required.

The performance difference is staggering. The same twitter.json query that took 136,784ns with text parsing now completes in 58.3 nanoseconds with binary encoding. That’s 2,346 times faster. For a million rows, you’re looking at 0.058 seconds instead of 2.5 minutes. The performance argument against JSON just evaporated.

PostgreSQL JSONB: When Theory Meets Production Reality

PostgreSQL’s JSONB implementation is the most mature example of binary JSON in the wild, but it’s also a masterclass in design tradeoffs. Unlike our minimal encoding, JSONB is engineered to play nice with PostgreSQL’s existing infrastructure, particularly TOAST (The Oversized-Attribute Storage Technique).

The key difference? JSONB uses an offset-or-length scheme with fixed stride for indexing. This means arrays and objects have predictable entry sizes, making it easier to compute offsets within PostgreSQL’s page layout. But there’s a catch: large JSONB documents (>2KB) get TOASTed out-of-line, which introduces overhead for random access.

This is why PostgreSQL JSONB shows a notable performance dip after documents exceed 2KB. The database is designed for transactional workloads where row-wise operations dominate. For analytics on large documents, you want columnar storage, not row-based.

The practical consequences are clear: JSONB has a slight write overhead due to parse-on-insert, but reads are significantly faster since there’s no reparsing. More importantly, JSONB supports GIN indexing and containment operators that make structured querying possible.

JSON vs JSONB: The Battle for Your Storage

The distinction matters more than a single letter suggests:

-- JSON stores raw text verbatim

SELECT '{"a":1, "a":2}'::json;

-- Returns: {"a":1, "a":2} (duplicate keys preserved)

-- JSONB stores binary representation

SELECT '{"a":1, "a":2}'::jsonb;

-- Returns: {"a": 2} (last value wins, whitespace stripped)JSONB strips whitespace, discards duplicate keys, and may reorder keys internally. The rule of thumb is simple: always use JSONB unless you need byte-identical round-tripping. In five years of production JSON workloads, I’ve needed the json type exactly once, for an audit log that required forensic exactness.

Indexing Strategies: The Difference Between Glory and Disaster

Here’s where JSONB goes from “interesting feature” to “production-ready.” The index type you choose must match your query patterns. Get this wrong, and your GIN index sits unused while PostgreSQL happily runs sequential scans on a million rows.

The Three Indexing Approaches

1. GIN Index (Default jsonb_ops)

Indexes every key and value in every document. Supports @>, ?, ?|, ?&, @?, and @@ operators. Flexible but large (124MB on a 1M row table) and write-heavy (+38% insert overhead).

CREATE INDEX idx_products_attr_gin ON products USING gin (attributes);2. GIN Index (jsonb_path_ops)

Hashes path-to-value combinations. Significantly smaller (78MB) and faster for containment queries, but only supports @> (and JSONPath since PostgreSQL 12). Write overhead is lower (+29%).

CREATE INDEX idx_products_attr_path ON products USING gin (attributes jsonb_path_ops);3. B-tree Expression Index (Targeted)

When you always query the same 1-3 keys, this is your secret weapon. Dramatically smaller (21MB) and faster (0.08ms vs 285ms for key equality), but only works for the specific expression defined.

CREATE INDEX idx_products_color ON products ((attributes->>'color'));The catch? The expression in your WHERE clause must match the index definition exactly, including type casts. This subtlety trips up more teams than any other JSONB performance issue.

Benchmark Reality Check

I ran a reproducible benchmark on PostgreSQL 16.3 with 1 million rows of realistic JSONB documents (~500 bytes each). The results are sobering:

| Query Type | No Index | GIN (jsonb_ops) | GIN (jsonb_path_ops) | B-tree Expression |

|---|---|---|---|---|

Containment (@>) |

285 ms | 1.2 ms | 0.9 ms | N/A |

Key equality (->>) |

268 ms | N/A* | N/A* | 0.08 ms |

| Range scan (numeric) | 312 ms | N/A | N/A | 4.5 ms |

| Index size | 0 MB | 124 MB | 78 MB | 21 MB |

| Insert overhead | baseline | +38% | +29% | +8% |

*GIN indexes do not accelerate ->> extraction queries. This is the single most common JSONB indexing misconception.

The takeaway? Expression B-tree indexes deliver 3,500x improvements for targeted queries, but only if you know your access patterns upfront. GIN indexes are your flexible friend, but they come with size and write costs.

The Design Space: Why BSON Isn’t Enough

You might think BSON, the format MongoDB popularized, would be the obvious standard. It’s not. BSON makes several tradeoffs that hurt analytical workloads:

- Arrays are encoded as objects with numeric keys (storage overhead)

- Keys are null-terminated C-strings (requires scanning to determine length)

- No random access to fields within objects or indices within arrays

- Keys aren’t sorted (no deterministic representation for comparison)

- Legacy deprecated types clutter the spec

CBOR and MessagePack suffer similar limitations: they’re optimized for compact transport, not fast repeated lookups. They don’t provide the random access that’s become the baseline for analytical workloads.

The design space for binary JSON is actually quite nuanced:

Supported Workloads: Are you extracting scalars ($.a.b.c) or entire subtrees? Scalar extraction benefits from flattened inverted indexes, while subtree extraction needs contiguous storage with clear boundaries.

Storage Cost: Deduplicating strings in a global table saves space but breaks self-containment. Each nested structure requires consulting the global table, complicating extraction.

Random Access: True O(1) array access requires indexing metadata. The offset strategy (1-byte vs 4-byte) is a direct tradeoff between document size and maximum supported document size.

Data Types: Modern encodings detect integer vs double vs decimal at parse time. Some preserve the original number string for precision, others parse immediately into native types.

Parquet VARIANT: Beyond JSON in the Lakehouse

As we move into lakehouse architectures, Parquet’s VARIANT type represents the next evolution. It’s not just JSON, it’s a generic container for semi-structured data with richer types (timestamps, UUIDs) and sophisticated optimizations.

The encoding is clever:

7 2 1 0

+------------------------------------+------------+

| value_header | basic_type |

+------------------------------------+------------+

| |

: value_data :

| |

+-------------------------------------------------+Four basic types (primitive, short string, object, array) with 20 primitive types total. Short strings (<64 bytes) pack length into the header, saving a separate length field. Objects use two offset lists: one for key references into a deduplicated string table, another for value nodes.

The key insight? Keys are deduplicated, but values remain self-contained. This balances storage efficiency with extraction performance. You can slice out a subtree without consulting global tables, but you don’t waste space repeating common keys like user_id or timestamp.

Parquet VARIANT also supports shredding: partially or fully extracting JSON into columns for better compression and predicate pushdown. The tradeoff is consistency management between the binary documents and shredded columns, a problem lakehouse query engines like Floe are actively solving.

When JSONB Is Your Best Friend (And When It’s Your Worst Enemy)

The Right Choice: Hybrid Models

The most effective pattern is the hybrid relational-document model. Store core entity fields as normalized, typed columns with a JSONB column for flexible extensions:

CREATE TABLE products (

id bigserial PRIMARY KEY,

name text NOT NULL,

price numeric(12,2) NOT NULL,

category text NOT NULL,

attributes jsonb NOT NULL DEFAULT '{}'::jsonb

);

INSERT INTO products (name, price, category, attributes) VALUES

('Classic Tee', 19.99, 'apparel', '{"size":"M","color":"black"}'),

('ThinkPad X1', 1299.00, 'electronics', '{"cpu":"i7-1365U","ram_gb":16}');This gives you relational integrity where it matters and schema flexibility where you need it. For multi-tenant applications or domains with stable “core” entities but unpredictable “extensions”, this pattern is unbeatable.

The Wrong Choice: Anti-Patterns That Kill Performance

Mistake #1: GIN Index + Extraction Operator Mismatch

-- You create this index:

CREATE INDEX idx_data_gin ON events USING gin (data);

-- You write this query:

SELECT * FROM events WHERE data->>'type' = 'click';

-- Result: Sequential scan. The GIN index sits unused.The fix? Either rewrite to use containment (data @> '{"type":"click"}') or create an expression B-tree index on the specific key.

Mistake #2: Large Document Updates

Every jsonb_set() call rewrites the entire document. A 10KB JSONB update writes 10KB to WAL, not just the 8 bytes you changed. Promote frequently updated fields to typed columns. I once saw a notification system hit severe WAL amplification at 500K rows because each “mark as read” update rewrote 4-8KB of JSONB. Promoting three fields to columns dropped WAL volume by 80%.

Mistake #3: Missing Type Casts

-- Index on numeric expression:

CREATE INDEX idx_price ON products (((attributes->>'price')::numeric));

-- Query that won't use it:

SELECT * FROM products WHERE attributes->>'price' > '100';

-- This is a text comparison, and it's also a correctness bug!The expression must match exactly. Text comparison '9' > '100' is true lexicographically. Don’t find out the hard way.

The Broader Ecosystem: Why This Matters Beyond Postgres

The JSON resurgence isn’t just a PostgreSQL story. It’s playing out across the entire data stack:

- MongoDB built its empire on BSON, proving document models could scale

- Snowflake treats semi-structured data as a first-class citizen via

VARIANT - Apache Iceberg supports

VARIANTstarting in v3, bringing binary JSON to lakehouses - FloeDB is building a lakehouse SQL engine that intelligently encodes and shreds binary JSON when it makes sense

Even LLMs are getting into the act. When models return structured responses through APIs, they “speak JSON.” The absurdity of asking a powerful natural-language model to squeeze itself into JSON has led to formats like TOON, a JSON encoding designed to be token-efficient for LLMs while remaining human-readable.

The ecosystem has converged: semi-structured data is here to stay, and binary encoding is the price of admission.

Actionable Takeaways: Your JSONB Survival Guide

-

Start with normalized columns. Reach for JSONB when schema flexibility genuinely demands it, not as a migration shortcut.

-

Match your index to your query pattern:

- Always query the same 1-3 keys? Expression B-tree index

- Need flexible exploration across varied keys? GIN

jsonb_ops -

Only need containment checks? GIN

jsonb_path_ops -

Verify with

EXPLAIN (ANALYZE, BUFFERS). The difference between a well-indexed JSONB query and a mismatched one isn’t 2x. It’s often 1,000x or more. -

Audit your UPDATE statements. If the same JSONB keys get written repeatedly, promote them to columns. Generated columns (PostgreSQL 12+) can bridge this transition without immediate application changes.

-

Benchmark with realistic data volumes. JSONB performance is non-linear. What works at 10,000 rows can collapse at 500,000.

-

Consider the full ecosystem. For analytics workloads, Parquet VARIANT in a lakehouse may outperform PostgreSQL JSONB. For transactional workloads with flexible schemas, PostgreSQL JSONB is battle-tested. For document-level access patterns, a dedicated document store might still win.

The relational purists weren’t wrong about JSON’s problems. They just couldn’t imagine the solutions. Binary encoding didn’t just fix JSON, it transformed it into something that can outperform the rigid schemas it was supposed to replace. The question isn’t whether to use JSON in your database. It’s which binary encoding and indexing strategy matches your workload.

And if you’re still parsing text JSON on every query? You’re leaving 2,346x performance on the table. The resurgence is here. The only question is whether you’ll join it or get left behind.

Further Reading: For deeper dives into related topics, check out our analysis of the performance pitfalls of using JSON in large-scale data processing, explore PostgreSQL’s evolving support for JSON and binary formats like JSONB, or understand the challenges in efficient data ingestion for AI systems, including format considerations.